
Executive Summary

AI agents represent a paradigm shift in automation – digital problem-solvers that independently plan, execute, and learn from multi-step workflows at machine speed. While autonomous AI offers unprecedented productivity and scalability, its independent nature introduces unfamiliar AI agent risks that traditional risk management cannot adequately address. This article summarises our presentation to 180 members of the Institute of Risk Management. Key points include why controls calibrated to autonomy levels should be non-negotiable, why agentic workflows break a key assumption of traditional risk management, and how the Enterprise-Wide Agentic AI Risk Control Framework can help firms make the transition to agentic AI risk management safely.

Introduction

What are the fundamentals of agentic AI risk management that organisations will need for this new age?

Last week, I had the privilege of presenting on this vital topic to 180 members of the Institute of Risk Management’s global community.

Here is a summary of my presentation (including attendees’ Q&A at the end), the full video, and a download link to the slides, which include all the concepts, a demo of an agent in action, and links to deep-dive content.

What are AI agents (and what benefits do they deliver)?

AI is a family of tools ranging from passive analytic AI to active autonomous AI agents.

Agents (the newest capability) are digital problem-solvers, decision-makers, and action-takers that plan and execute multi-step end-to-end workflows with minimal supervision.

They are an intelligent form of automation, distinguished by their independent action in pursuit of an outcome and their ability to learn from experience.

The most valuable advantages of AI agents include productivity, through fast data analysis and decision support, and flexibility and scalability, because agents are relatively easy to build, adapt, and replicate.

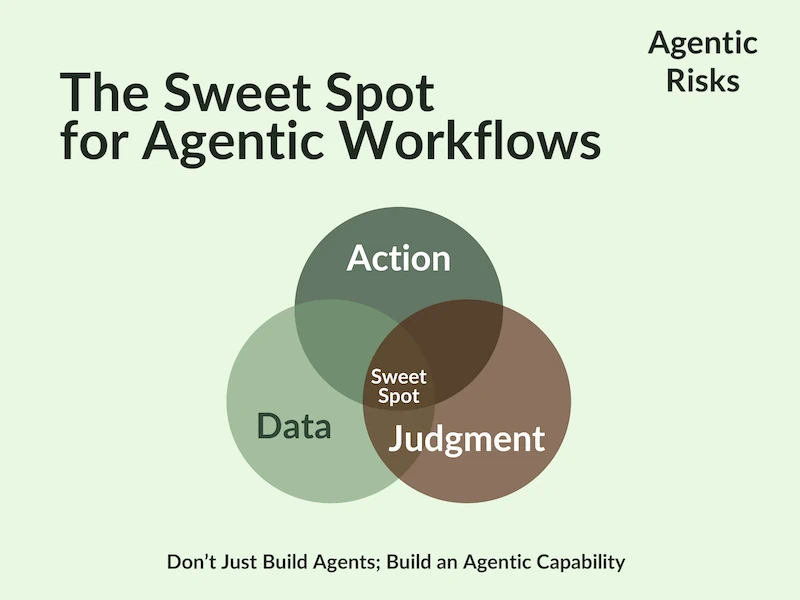

In the webinar, we demonstrated these benefits with a live demo of ‘Fetch’ (a data collection agent) and proposed that the ‘sweet spot’ for agentic workflows lies where action, data, and judgment overlap.

Why Risk Controls are Vital for Agentic AI

As a society, we already delegate all five levels of autonomy to non-humans – working dogs. Through centuries of experience, we have learned to grant autonomy slowly and conditionally, to always control it, and to never grant full autonomy around the public. We trust training and controls over ethics, making training non-negotiable (for both handler and agent), placing accountability on the handler, and requiring greater training and control for higher-risk tasks.

Autonomy is not a binary switch but a spectrum you calibrate to your needs, so delegating autonomy should be a formal risk appetite decision. This is because not every use case can tolerate full autonomy, the risk/reward trade-off is not straightforward, and only humans can be sanctioned.

In other words, risk appetite for AI agents is a decision about how much autonomy you can tolerate and which controls must be in place before you delegate it.
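One way to make this decision explicit is to write the risk appetite down as data. The Python sketch below is illustrative only: the numeric levels 1 to 5 and the control names are hypothetical placeholders, not definitions from the Framework. It grants autonomy one level at a time and stops at the first level whose cumulative controls have not been verified, mirroring the idea of granting autonomy slowly and conditionally.

```python
# Hypothetical risk appetite statement: the controls that each autonomy level
# adds on top of those required by the levels below it (level 1 = least autonomy).
ADDED_CONTROLS: dict[int, set[str]] = {
    1: {"activity_logging"},
    2: {"action_limits"},
    3: {"continuous_monitoring"},
    4: {"kill_switch", "anomaly_suspension"},
    5: {"adversarial_testing", "incident_response_plan"},
}
MAX_LEVEL = max(ADDED_CONTROLS)


def max_delegable_autonomy(verified_controls: set[str], appetite_cap: int) -> int:
    """Return the highest autonomy level (0 = none) that may be delegated.

    Levels are granted in order, and only if every control required up to
    that level has been verified; the result never exceeds the cap set by
    the firm's risk appetite for this use case.
    """
    if not 0 <= appetite_cap <= MAX_LEVEL:
        raise ValueError(f"appetite_cap must be between 0 and {MAX_LEVEL}")
    granted = 0
    required: set[str] = set()
    for level in range(1, appetite_cap + 1):
        required |= ADDED_CONTROLS[level]
        if not required <= verified_controls:
            break  # a missing control blocks this level and every level above it
        granted = level
    return granted


# Logging and action limits are verified, so autonomy stops at level 2,
# even though risk appetite would tolerate up to level 4.
print(max_delegable_autonomy({"activity_logging", "action_limits"}, appetite_cap=4))  # → 2
```

Separating the appetite cap from the verified controls reflects the point that a low-risk task is not the same as a low-risk agent: the cap comes from the use case, but the controls decide how much of it is actually granted.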

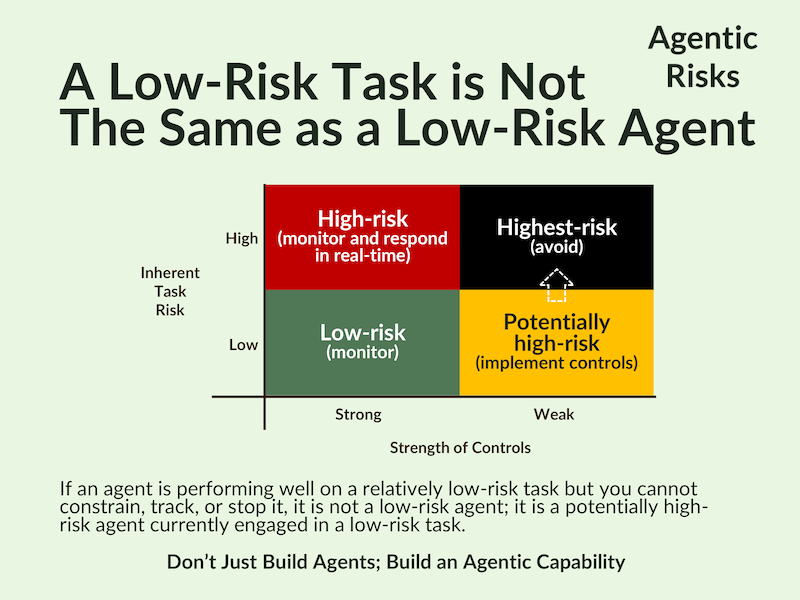

We will cover risk appetite in full in Webinar 2. It matters here because a low-risk task is not the same as a low-risk agent: in practice, whether an agent is high-risk depends as much on the strength of its controls as on the task it has been given. Only verifiable controls will let you safely capture the benefits agents offer.

Some agentic risks are familiar from human work, e.g. small errors compounding across steps, too many tools increasing the chance of a wrong action, and unproductive activity burning through a budget. Humans, too, fall foul of these risks.
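The compounding of small errors can be made concrete with a simple worked example. Assume, purely for illustration, that each step of a workflow succeeds independently with the same probability $p$. The chance that an $n$-step workflow completes without a single error is then:

```latex
P(\text{no error in } n \text{ steps}) = p^{n},
\qquad
0.99^{20} \approx 0.818,
\qquad
0.95^{20} \approx 0.358
```

So even a step that is right 99% of the time leaves roughly an 18% chance of at least one error across 20 steps. Real workflows, where errors are rarely independent, may fare better or worse than this simple model suggests.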

However, in aggregate, agents introduce a broad new class of unfamiliar risks across five categories: individual AI agent risks, multiple AI agent risks, AI agent security threats, AI agent governance failures, and human factors for AI agents.

The Evolution to Agentic AI Risk Management

Once we have delegated the execution phase of a workflow to an AI agent, humans no longer own that workflow end to end.

This development challenges the traditional risk management process by introducing unpredictability into the workflow and enabling agentic behavioural risks to emerge – potentially faster than a human can respond.

For this reason, agentic workflow risk management must start in the design phase, not after deployment: many agent failures can only be prevented through upfront constraints and testable controls.

As a result, risk managers should take an active role in the design phase, monitor and respond at machine speed, and be ready to address new risks arising from emergent behaviours.

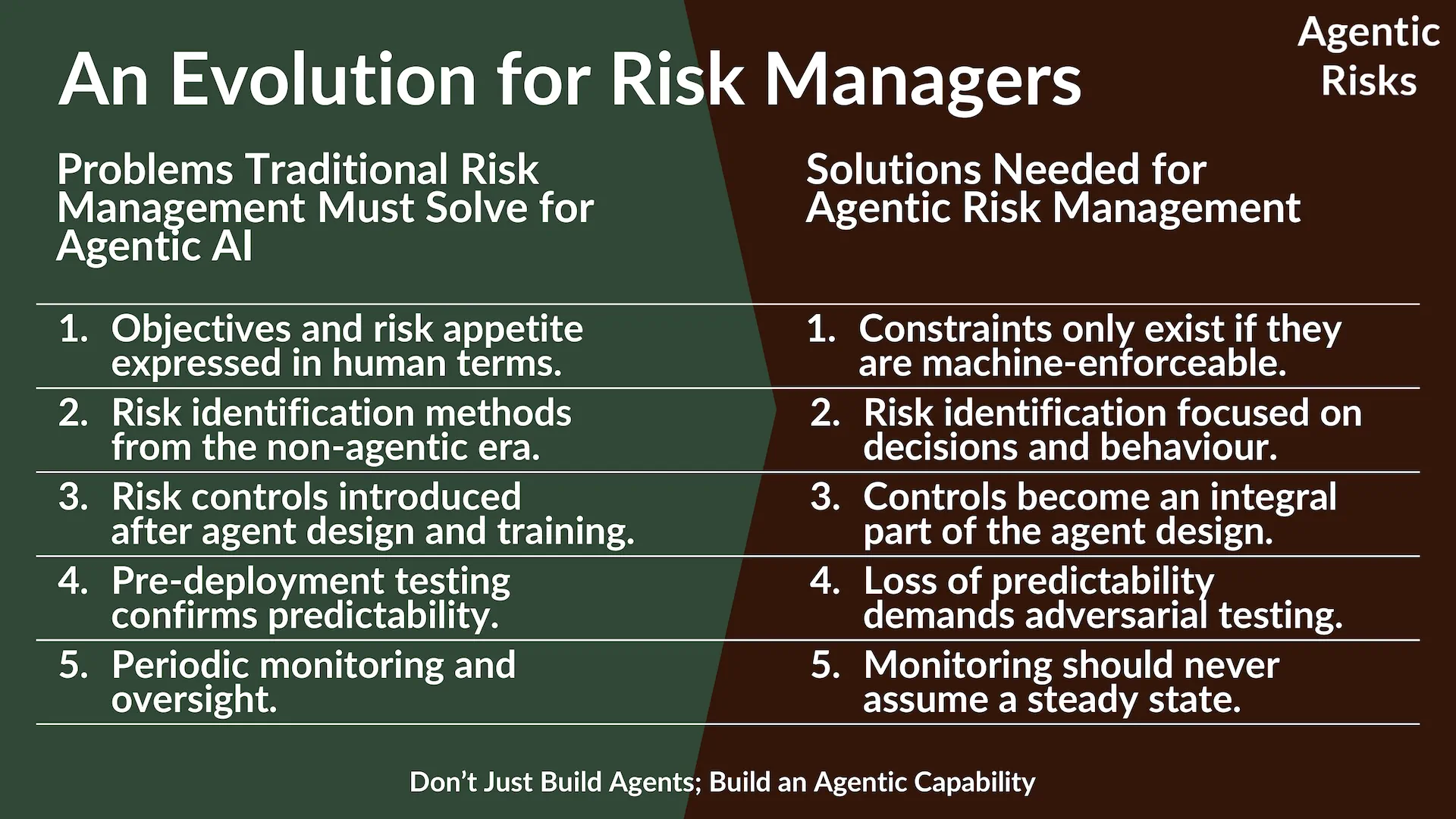

The discipline of risk management must therefore evolve for agentic AI:

- Traditional risk management often relies on human-expressed objectives, non-agentic risk identification methods, post-design controls, confirmatory testing, and periodic monitoring.

- In contrast, agentic risk management replaces these with machine-enforceable constraints, behaviour-focused risk identification, design-integrated controls, adversarial testing, and continuous monitoring for AI agents operating at machine speed.

To help you identify agentic risks, construct proportionate risk treatment plans, and integrate agentic controls into existing risk management frameworks, the Enterprise-Wide Agentic AI Risk Control Framework v3.1 provides 32 objectively defined agentic risks, along with control strategies and itemised controls for each risk.

The Framework also includes a set of agentic risk flags for assessing agentic workflows. Each flag maps to the Framework’s categories, risks, controls, and evidence types, helping teams move from “Could this go wrong?” to “Show evidence this is controlled” and enabling faster, more defensible risk assessments.
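To illustrate how a flag-to-evidence mapping might support the question “Show evidence this is controlled,” here is a minimal Python sketch. The flag name, risk identifier, controls, and evidence types are hypothetical placeholders, not entries from the Framework; only the idea of mapping each flag to a category, risk, controls, and evidence types comes from the text.

```python
from dataclasses import dataclass


@dataclass
class RiskFlag:
    """A hypothetical agentic risk flag and the Framework elements it maps to."""
    name: str
    category: str              # one of the five agentic risk categories
    risk: str                  # placeholder risk identifier
    controls: list[str]        # controls expected when the flag is raised
    evidence_types: list[str]  # evidence showing those controls are in place


def evidence_gaps(raised: list[RiskFlag], supplied: dict[str, set[str]]) -> dict[str, list[str]]:
    """Return, for each raised flag, the evidence types not yet supplied.

    `supplied` maps a flag name to the evidence types the team has produced.
    An empty result means every raised flag is backed by evidence.
    """
    gaps: dict[str, list[str]] = {}
    for flag in raised:
        have = supplied.get(flag.name, set())
        missing = [e for e in flag.evidence_types if e not in have]
        if missing:
            gaps[flag.name] = missing
    return gaps


writes_to_production = RiskFlag(
    name="writes_to_production_systems",  # hypothetical flag
    category="Individual AI agent risks",
    risk="RISK-EXAMPLE",                  # placeholder, not a Framework risk number
    controls=["human approval above a value threshold"],
    evidence_types=["approval log sample", "threshold test results"],
)
print(evidence_gaps([writes_to_production], {"writes_to_production_systems": {"approval log sample"}}))
# → {'writes_to_production_systems': ['threshold test results']}
```

The assessment output is a list of missing evidence rather than a yes/no verdict, which keeps the conversation on what still has to be demonstrated.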

To conclude, at Agentic Risks, we define agentic risk management as the discipline of anticipating, averting, and monitoring the risks introduced when humans delegate execution to autonomous AI agents that actively decide how to pursue objectives, learn new ways to achieve their goals, and adapt their behaviour at machine speed.

And we stand ready to help firms adopt agentic workflows safely and with confidence.

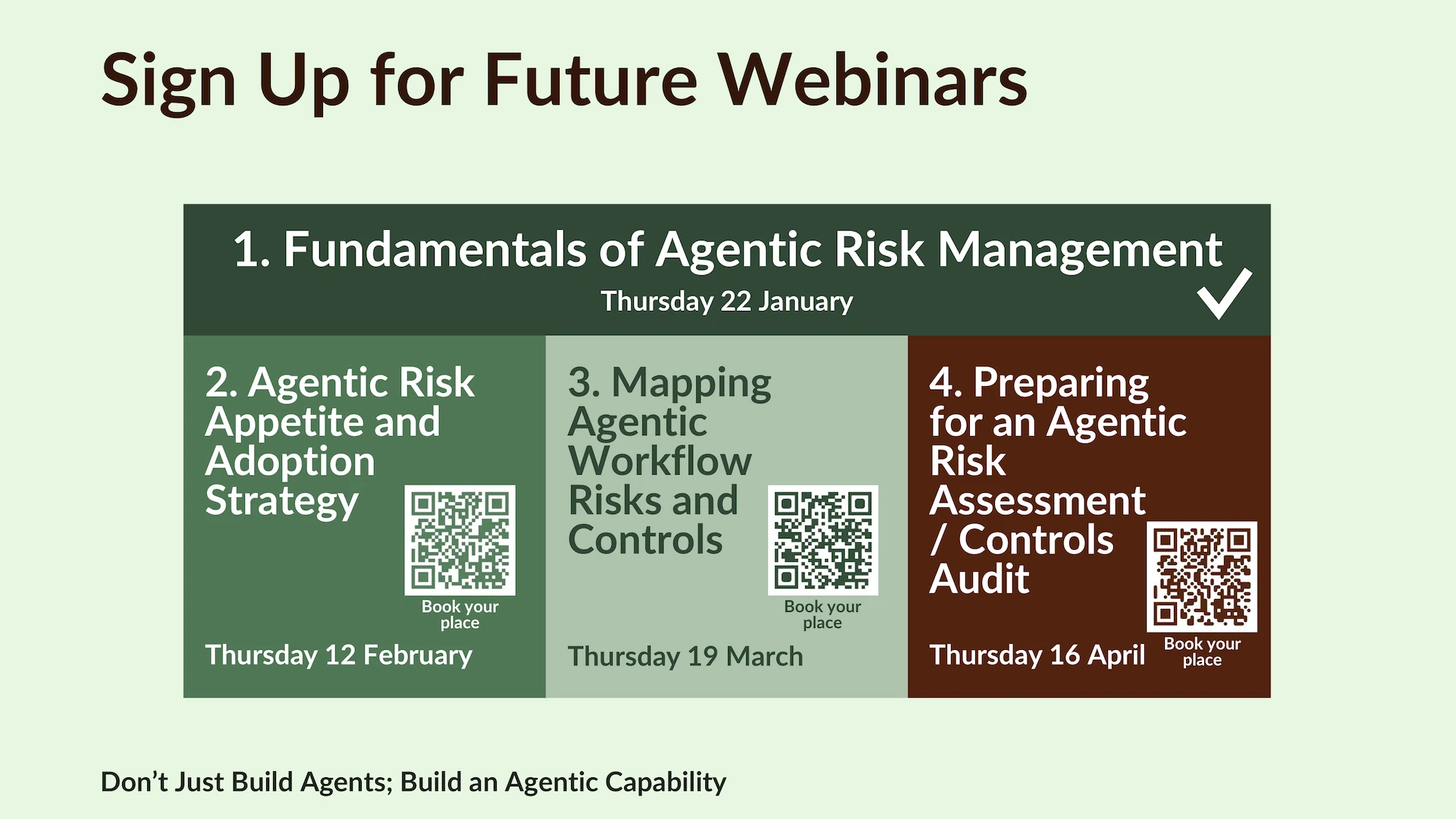


Questions and Answers

To adapt a traditional enterprise risk management framework for agentic AI, expand your taxonomy to include agentic risk as a new risk class comprising the five categories in the Enterprise-Wide Agentic AI Risk Control Framework. Then use the Framework to support risk identification and the construction of agentic risk treatment plans. The key is to integrate agentic risks and controls into existing frameworks such as COSO or ISO 31000, rather than treating agentic risk management as a parallel discipline.

Firms often underestimate the risk of emergent behaviour because they are not ready for a system that learns. Managing it begins with prevention in the design phase, when risk managers should walk through the agent’s behavioural options with the engineer to identify risks and encode appropriate controls and monitoring tools. Additional practical assurances include adversarial testing, behavioural anomaly detection with automatic suspension triggers, and mandatory human approval for any agent action that crosses predefined risk thresholds, e.g. financial limits, data access boundaries, or irreversible operations. Goal misalignment and unintended self-propagation should be treated as risks to be controlled, not dismissed as user errors.
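A threshold-based approval gate and an automatic suspension trigger can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the limit values, data-scope labels, and the anomaly score (assumed to come from a separate detector and to lie between 0 and 1) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    agent_id: str
    description: str
    amount: float = 0.0           # financial value of the action
    data_scope: str = "internal"  # data classification the action touches
    irreversible: bool = False


# Hypothetical thresholds; real values would come from the firm's risk appetite.
FINANCIAL_LIMIT = 10_000.0
ALLOWED_DATA_SCOPES = {"public", "internal"}
ANOMALY_SUSPEND_SCORE = 0.9


def approval_reasons(action: ProposedAction) -> list[str]:
    """Return the predefined thresholds this action crosses.

    An empty list means the agent may proceed; anything else routes the
    action to a human for mandatory approval.
    """
    reasons = []
    if action.amount > FINANCIAL_LIMIT:
        reasons.append("financial limit exceeded")
    if action.data_scope not in ALLOWED_DATA_SCOPES:
        reasons.append(f"data access boundary crossed: {action.data_scope}")
    if action.irreversible:
        reasons.append("irreversible operation")
    return reasons


def should_suspend(anomaly_score: float) -> bool:
    """Automatic suspension trigger for behavioural anomalies."""
    return anomaly_score >= ANOMALY_SUSPEND_SCORE


payment = ProposedAction("agent-7", "pay supplier invoice", amount=25_000.0, irreversible=True)
print(approval_reasons(payment))  # → ['financial limit exceeded', 'irreversible operation']
```

Returning the reasons, rather than a bare boolean, gives the human approver context and leaves an auditable record of why the action was escalated.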

To mitigate the risk that preventive agentic controls fail, we recommend that firms integrate a dedicated incident response process for AI agents into their existing incident management procedures (see Risk 21 in the Enterprise-Wide Agentic AI Risk Control Framework). It should include proven fallback logic and kill switches, with defined criteria and authority for their use; a backup procedure and recovery plan; and forensic logging, immutable evidence retention, and version control.
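Two of these elements, a kill switch with defined authority and forensic logging, can be sketched as follows. The roles in `KILL_SWITCH_AUTHORITY` are hypothetical, and the hash-chained log only makes later edits detectable; genuinely immutable retention would also need write-once storage or similar. Treat this as an illustration, not a reference implementation.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64
# Hypothetical roles holding authority to pull the kill switch.
KILL_SWITCH_AUTHORITY = {"head_of_operational_risk", "ciso"}


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class ForensicLog:
    """Append-only log where each entry records the previous entry's hash,
    so editing any past entry breaks the chain (tamper-evident, not tamper-proof)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"ts": datetime.now(timezone.utc).isoformat(), "event": event, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


class KillSwitch:
    """Halts one agent; every attempt is logged, whether authorised or not."""

    def __init__(self, agent_id: str, log: ForensicLog) -> None:
        self.agent_id = agent_id
        self.log = log
        self.halted = False

    def trigger(self, requested_by: str, reason: str) -> bool:
        authorised = requested_by in KILL_SWITCH_AUTHORITY
        self.log.append({"agent": self.agent_id, "action": "kill_switch",
                         "by": requested_by, "reason": reason, "authorised": authorised})
        if authorised:
            self.halted = True
        return authorised


log = ForensicLog()
switch = KillSwitch("agent-7", log)
switch.trigger("analyst", "unexpected payments")  # refused: no authority
switch.trigger("ciso", "unexpected payments")     # accepted: agent halted
print(switch.halted, log.verify())  # → True True
```

Logging refused attempts as well as successful ones supports the forensic trail: investigators can see who tried to stop the agent, and when.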

To assign accountability for an AI agent, implement lifecycle management as part of your AI governance. Ensure every agent is registered in an inventory, tested, approved, and tracked throughout its lifecycle, and block unregistered agents from deployment. The inventory should map each agent to its business purpose, risk classification, authorised tools, and escalation procedures, making accountability auditable. To learn more, see Risks 1 and 22 of the Enterprise-Wide Agentic AI Risk Control Framework.
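A minimal Python sketch of such an inventory follows, assuming a simple four-stage lifecycle (registered, tested, approved, retired) and the record fields listed above. The stage names, field names, and `AgentInventory` class are hypothetical illustrations, not part of the Framework.

```python
from dataclasses import dataclass, replace

# Hypothetical lifecycle; stages must be passed through in this order.
STAGES = ("registered", "tested", "approved", "retired")


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    business_purpose: str
    risk_classification: str        # e.g. "low" / "medium" / "high" (hypothetical scale)
    authorised_tools: frozenset[str]
    escalation_owner: str           # accountable human who receives escalations
    lifecycle_stage: str = "registered"


class AgentInventory:
    def __init__(self) -> None:
        self._records: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._records:
            raise ValueError(f"{record.agent_id} is already registered")
        if record.lifecycle_stage != "registered":
            raise ValueError("new agents must enter the inventory at the 'registered' stage")
        self._records[record.agent_id] = record

    def advance(self, agent_id: str) -> str:
        """Move an agent one stage along its lifecycle; stages cannot be skipped."""
        record = self._records[agent_id]
        idx = STAGES.index(record.lifecycle_stage)
        if idx == len(STAGES) - 1:
            raise ValueError(f"{agent_id} is already retired")
        self._records[agent_id] = replace(record, lifecycle_stage=STAGES[idx + 1])
        return STAGES[idx + 1]

    def can_deploy(self, agent_id: str) -> bool:
        """Unregistered agents, and registered ones not yet approved, are blocked."""
        record = self._records.get(agent_id)
        return record is not None and record.lifecycle_stage == "approved"

    def can_use_tool(self, agent_id: str, tool: str) -> bool:
        record = self._records.get(agent_id)
        return record is not None and tool in record.authorised_tools


inventory = AgentInventory()
inventory.register(AgentRecord("agent-7", "collect supplier data", "medium",
                               frozenset({"web_search"}), "procurement.lead"))
print(inventory.can_deploy("agent-7"))  # → False (registered but not yet tested or approved)
inventory.advance("agent-7")            # returns "tested"
inventory.advance("agent-7")            # returns "approved"
print(inventory.can_deploy("agent-7"), inventory.can_use_tool("agent-7", "payments"))  # → True False
```

Making records immutable and forcing stages to advance one step at a time means an agent cannot reach "approved" without passing through "tested", which keeps the audit trail simple.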

AI agents are non-human workers with an almost endless range of use cases. They particularly excel, however, at multi-step, rule-based, or repetitive workflows where fragmented systems previously forced teams to perform manual tasks. This makes them well suited to activities at the intersection of data, action, and human judgment. Common uses include customer support agents resolving tickets, procurement agents researching vendors, IT service desk agents diagnosing issues, financial reconciliation agents, and HR onboarding agents.