Executive Summary

A risk-based agentic AI adoption strategy classifies AI agents into risk tiers and applies stronger controls where the risk is highest.

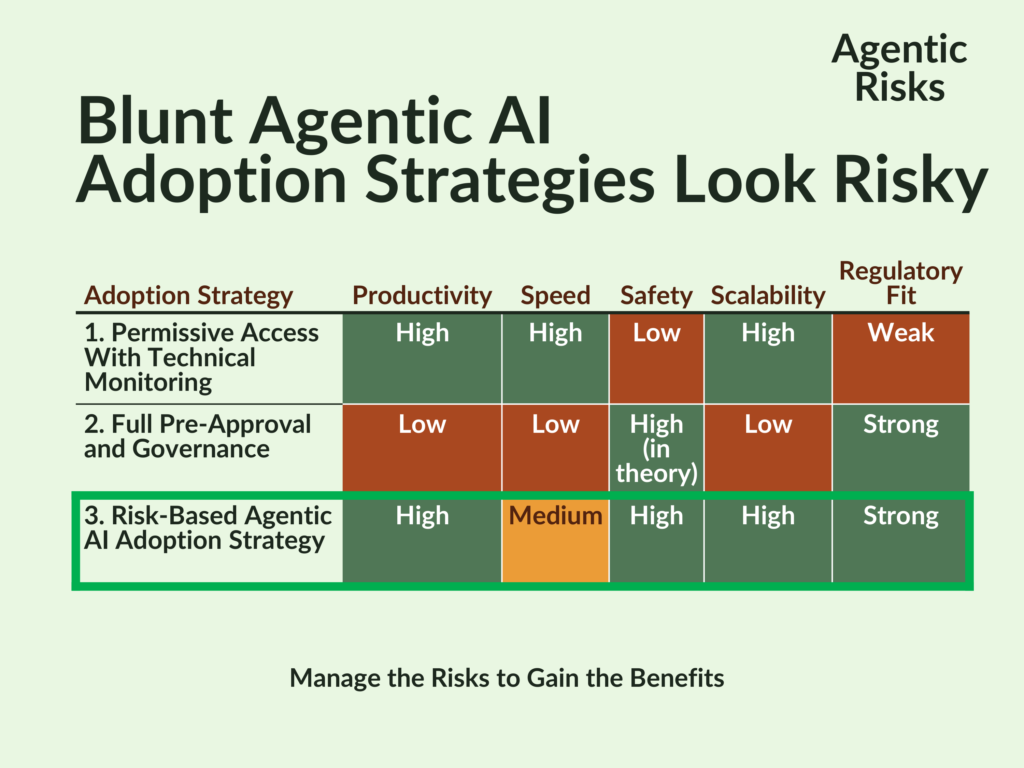

By comparison, blunter approaches have inherent flaws: permissive access with post-event monitoring sacrifices control, and full pre-approval constrains scalability.

Instead, the risk-based model assigns low-risk agents a register-and-attest process, medium-risk agents a proportionate review, and high-risk agents full governance, making it productive, enforceable, and defensible at scale.

To implement this, add four deliverables to your agentic roadmap: tier criteria, technical enforcement, shadow agent detection, and risk manager dashboards.

If you are at the start of an agentic transformation, the Agentic AI Readiness Assessment evaluates your firm’s readiness across all the prerequisites for agentic AI – including those needed to implement this model – in 90 minutes.

Pressure To Adopt Agentic AI Before You’re Ready

Technology platforms like Copilot Studio, Claude Code, and Agentforce are making AI agents easily available, enabling citizen developers to build agents for personal use and share them with colleagues and external parties. This raises a practical question for risk managers: how to adopt agentic AI safely without slowing innovation.

The easy availability of agents also creates pressure on executives from staff and investors, who are attracted by agents’ promises of greater productivity.

Yet, agentic AI extends existing risk categories and requires a different governance approach because traditional AI governance frameworks do not fully operationalise controls for autonomous, multi-step agent behaviour.

Non-agentic AI governance was designed for static models that produce outputs for your review, not for dynamic, autonomous systems that execute actions, and therefore produce outcomes, in your name.

Therefore, firms that adopt AI agents before they can control them face overlooked risks, security incidents, and a costly scramble to remediate. The question, then, is not whether to adopt agentic AI, but how to do so safely and at scale.

On Closer Inspection, Blunt Adoption Strategies Look Risky

Building an agentic AI governance framework requires significant effort, and some companies have only recently implemented governance for non-agentic AI.

What, then, are their options?

Option 1: Permissive Access with Technical Monitoring

The first adoption strategy is to grant all staff access to agent-building tools with minimal pre-approval, relying instead on technical controls such as AI gateway logs, API call monitoring, data-movement tracking, and retrospective detection and review of agent behaviour.

Although governance tools are available, this is how many firms initially configure platforms like Copilot Studio and Google’s Gemini Enterprise: they prioritise speed to deployment, with post-event monitoring tools such as Microsoft Purview and Defender for Cloud Apps available as a backstop.

On the premise that pre-approval governance at scale is unrealistic, this strategy:

- Eliminates pre-deployment governance.

- Maximises speed to value.

- Allows staff to self-serve immediately.

The problem is that detective monitoring is insufficient as a primary control on its own, because it detects harm (e.g. data exfiltration, client data misuse, or a regulatory breach) after the event but does not prevent it.

As a result, risk managers will be permanently reacting to issues rather than preventing foreseeable harm – a posture that would struggle to survive regulatory scrutiny.

Our verdict: despite its powerful proponents, this approach may help explain why only a minority of firms have moved agentic AI into production (11% in Deloitte’s 2025 Emerging Technology Trends study). The gap between pilot and production can be wide, partly because firms may underestimate the governance and operational readiness required to deploy safely.

Option 2: Full Pre-Approval and Governance

Here, you would evolve your traditional AI governance for agentic AI and, mirroring current practices, block any agent that has not passed a Pre-Deployment Agentic Risk Assessment.

In theory, agents are known, understood, and auditable.

However, reviewing every agent is not realistic at the scale needed to meet productivity goals, and attempting it would drive employees to circumvent the process, producing a fleet of shadow agents.

Auditability would then turn from a strength into a weakness, because the audit trail would expose how few agents you control in practice.

Our verdict: this agentic AI adoption strategy is appropriate for externally deployed, high-risk, or system-integrated agents, but it is unsuitable as an organisation-wide model for all staff-built agents.

Conclusion: We Need a Hybrid Option

Both options have worthy goals but fail in different ways: one sacrifices control, the other scalability. What’s needed is a consistent, enforceable hybrid.

Option 3: A Risk-Based Agentic AI Adoption Strategy (A Practical Governance Model)

Option 3 formalises the hybrid approach into a consistent, enforceable model. In simple terms, this is a risk-based AI adoption framework that applies stronger controls only where the risk is highest.

Under this model, agents and users are classified into risk tiers and assigned building tools and oversight proportionate to the tier.

Agent tiering should be based on objective factors and customised for your organisation as part of an AI agent risk classification approach. General factors will include data sensitivity, level of autonomy, system access, multi-agent orchestration, and external exposure. Predefined thresholds and escalation rules ensure consistent classification and enforcement:

- Low-risk – internal-only, personal-productivity agents using public data with limited autonomy can operate under a ‘register and attest’ model.

- Medium-risk – agents that have access to business systems and data, or are shared internally, should undergo a lightweight risk assessment.

- High-risk – externally-facing agents, or those that use confidential data, orchestrate across multiple agents, operate with a high degree of autonomy, or are classified as ‘high risk’ by regulation should be subject to a full Pre-Deployment Agentic Risk Assessment.
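The tier criteria above can be sketched as a simple rule-based classifier. This is a minimal illustration only: the `AgentProfile` fields and the `classify_agent` function are hypothetical names, the boolean factors are a simplification of the thresholds a firm would define for itself, and the sketch assumes that any single high-risk factor is enough to escalate an agent to the high tier.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical attributes captured when an agent is registered."""
    externally_facing: bool = False
    uses_confidential_data: bool = False
    orchestrates_other_agents: bool = False
    high_autonomy: bool = False
    regulatory_high_risk: bool = False
    accesses_business_systems: bool = False
    shared_internally: bool = False


def classify_agent(agent: AgentProfile) -> Tier:
    """Return the highest tier triggered by any single factor."""
    # Assumption: one high-risk factor is sufficient to escalate.
    if (agent.externally_facing
            or agent.uses_confidential_data
            or agent.orchestrates_other_agents
            or agent.high_autonomy
            or agent.regulatory_high_risk):
        return Tier.HIGH
    # Medium-risk: business system access or internal sharing.
    if agent.accesses_business_systems or agent.shared_internally:
        return Tier.MEDIUM
    # Assumption: anything that triggers no factor is treated as a
    # low-risk, personal-productivity agent.
    return Tier.LOW
```

For example, `classify_agent(AgentProfile(shared_internally=True))` returns `Tier.MEDIUM`, while adding `high_autonomy=True` to the same profile escalates it to `Tier.HIGH`. Evaluating the high-risk factors first means an agent can never be under-classified because it also matches a lower tier.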

This approach is superior to Options 1 and 2 because it reduces risk by matching control intensity with risk exposure, and it avoids governance bottlenecks, balancing productivity, safety, and scalability more effectively than either extreme:

- Prioritises Productivity because most agents will be low-risk personal productivity tools and, therefore, quickly deployed. Medium and high-risk agents can then follow once you have developed the necessary additional governance, the timing of which will depend on your firm’s prior readiness.

- Enforceable – technical controls can be automated by tier, e.g. data-boundary enforcement, API gateways.

- Feasible and Defensible – with risk managers applying the full extent of their agentic governance where the potential for harm is greatest, this strategy aligns with approaches in frameworks such as the EU AI Act, NIST’s AI RMF, and DORA’s operational resilience principles. It is feasible at scale because full governance is applied only to a small subset of high-risk agents.

- Credible – supports a more complete AI inventory, reducing (but not eliminating) the risk of shadow agents.

- Adaptable for the Future – as agentic tools evolve, you can recalibrate your tier thresholds through regular reviews without rebuilding the model.
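To illustrate how controls might be automated by tier, the sketch below encodes each tier's required governance steps and permitted data classes as a policy table and checks a deployment request against it. The control names, the `public`/`internal`/`confidential` data classes, and the `deployment_blockers` function are all assumptions made for illustration; they do not correspond to any particular platform's API.

```python
# Hypothetical policy table: governance steps required before deployment.
REQUIRED_CONTROLS = {
    "low": {"registered", "owner_attestation"},
    "medium": {"registered", "owner_attestation",
               "lightweight_risk_assessment"},
    "high": {"registered", "owner_attestation",
             "pre_deployment_risk_assessment"},
}

# Hypothetical data boundaries: data classes each tier may touch.
ALLOWED_DATA = {
    "low": {"public"},
    "medium": {"public", "internal"},
    "high": {"public", "internal", "confidential"},
}


def deployment_blockers(tier: str,
                        completed: set[str],
                        data_classes: set[str]) -> list[str]:
    """List the reasons an agent cannot yet be deployed in its tier.

    An empty list means the request satisfies the tier's policy.
    """
    missing = REQUIRED_CONTROLS[tier] - completed
    out_of_bounds = data_classes - ALLOWED_DATA[tier]
    blockers = [f"missing control: {c}" for c in sorted(missing)]
    blockers += [f"data class not permitted for tier: {d}"
                 for d in sorted(out_of_bounds)]
    return blockers
```

A check like this could sit in front of an API gateway or a publishing workflow, so that an agent registered as low-risk is blocked if it later requests confidential data rather than being detected after the event.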

In practice, the key question is: which agents require full governance, and which can be deployed safely with minimal oversight?

How To Incorporate Agent Tiering Into Your Agentic Roadmap

If you adopt Option 3, implementing a system of agent risk tiers should be part of your agentic transformation programme.

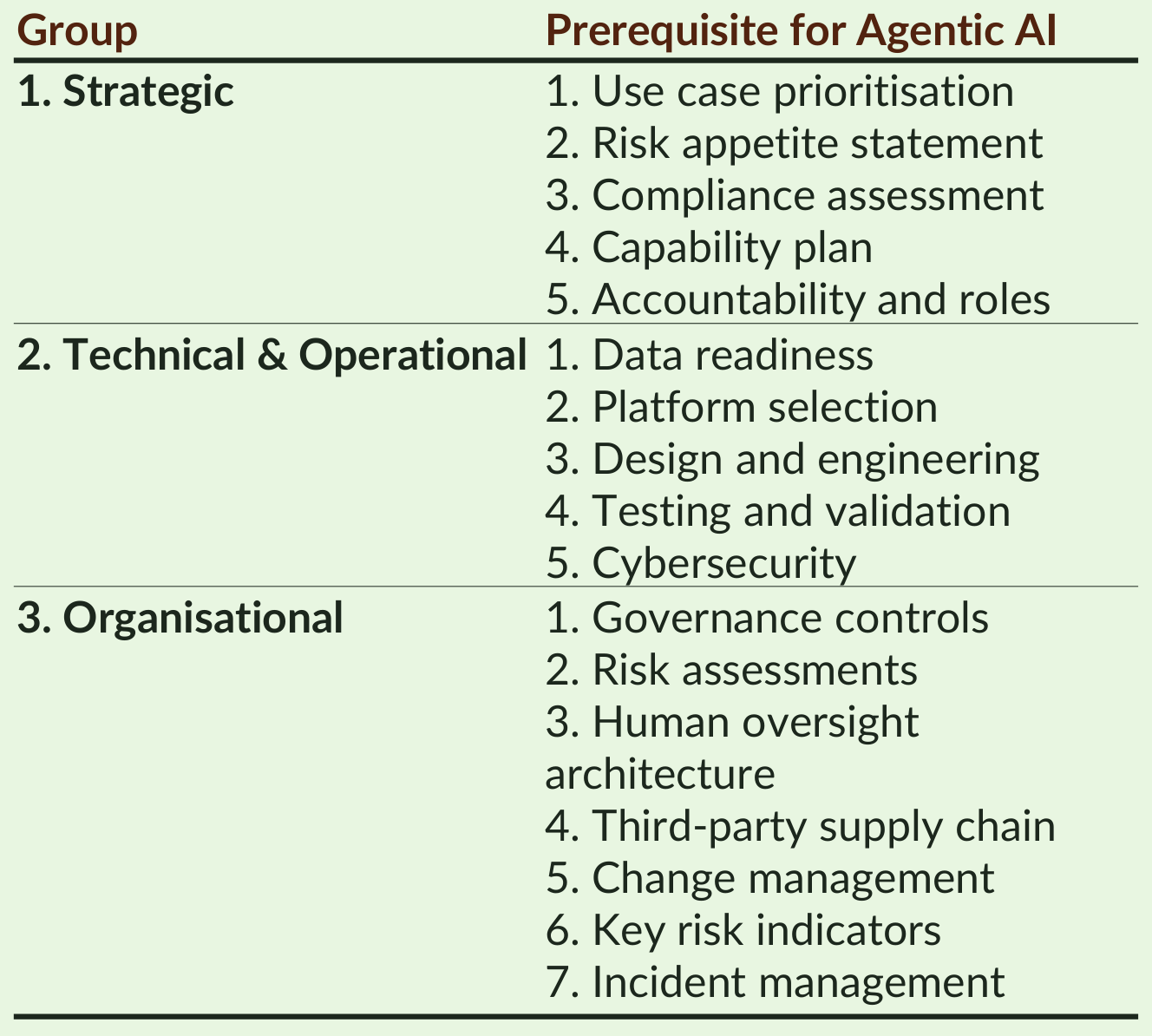

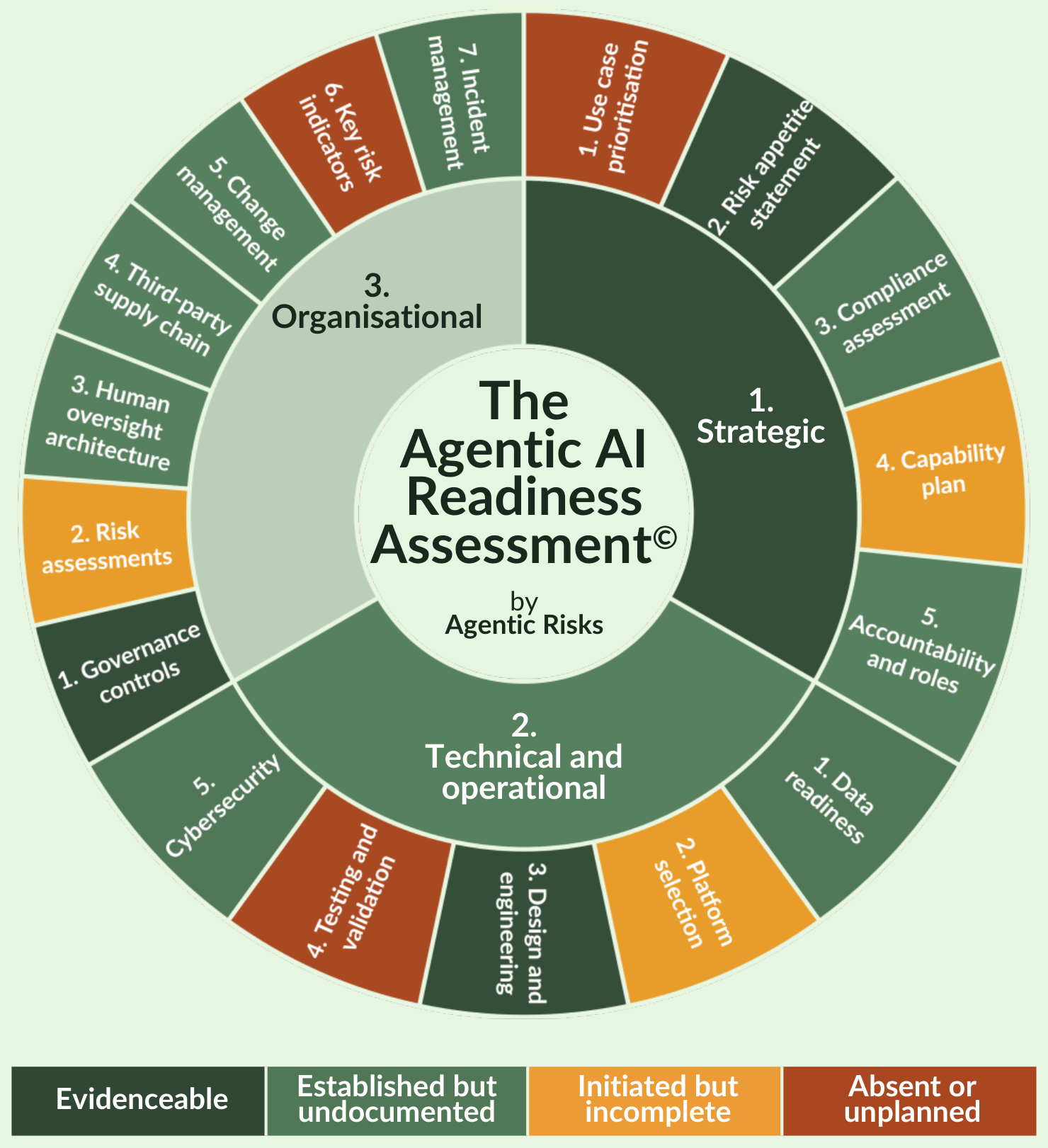

When we plan implementations for clients, we begin with all the prerequisites for agentic AI and then categorise them based on whether you will need them for low, medium, or high-risk agents.

This ensures you have a complete roadmap that you can prioritise for your target risk level.

In summary, here’s how to incorporate agent tiering into the plan:

- At the strategic layer, you will need to Prioritise your Use Cases (1.1) to provide the taxonomy of agent use cases that you will assign to risk tiers. You can then operationalise the tiering criteria through your Risk Appetite Statement (1.2).

- At the organisational level, enforcing the tiers and detecting shadow agents will sit in your Governance Controls (3.1), where you will translate your criteria into machine-enforceable policy and data boundaries.

- Meanwhile, the risk manager’s dashboard will be a product of multiple prerequisites: Accountability and Roles (1.5), Governance Controls (3.1), and Key Risk Indicators (3.6).
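Shadow agent detection and the dashboard indicators can be sketched as a comparison between the agent registry and observed gateway traffic. This is a simplified illustration under stated assumptions: the registry is a mapping of agent ID to tier, each gateway event is a dictionary with an `agent_id` key, and the function names and indicator names are hypothetical rather than taken from any monitoring product.

```python
from collections import Counter


def find_shadow_agents(registered_ids: set[str],
                       gateway_events: list[dict]) -> dict[str, int]:
    """Count calls per agent ID seen in gateway logs but not registered."""
    calls = Counter(event["agent_id"] for event in gateway_events)
    return {agent_id: count for agent_id, count in calls.items()
            if agent_id not in registered_ids}


def key_risk_indicators(registry: dict[str, str],
                        gateway_events: list[dict]) -> dict:
    """Summarise illustrative indicators for a risk manager's dashboard.

    `registry` maps agent ID to tier name (an assumed structure).
    """
    shadow = find_shadow_agents(set(registry), gateway_events)
    return {
        "agents_by_tier": dict(Counter(registry.values())),
        "shadow_agent_count": len(shadow),
        "shadow_call_count": sum(shadow.values()),
    }
```

This only surfaces agents that route traffic through a monitored gateway, so it should be read as a lower bound on unmanaged activity rather than a complete inventory.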

Your Agentic Success Will Depend on Readiness

In practice, the firms whose adoption strategies succeed are those whose roadmaps are achievable from the organisation’s current state of readiness.

To achieve this, our Agentic AI Readiness Assessment establishes whether you have each prerequisite in place, how mature it is and, therefore, how much work is needed to support your target risk tier. Firms typically need to do more to support high-risk agents than low-risk ones.

Assess Your Readiness in 90 minutes

If you would like to understand your firm’s readiness for the era of agentic AI, the Agentic AI Readiness Assessment is a triage-style 90-minute session that will give you a complete and systematic view of your strengths and weaknesses (strategic, technical and operational, and organisational) and prioritised next steps.

Learn more about the Agentic AI Readiness Assessment at the link and book a ‘no regrets’ session now to ensure your agentic transformation is evidence-based, achievable, and customised to your situation.

Frequently Asked Questions

What is a risk-based agentic AI adoption strategy?

A risk-based agentic AI adoption strategy is a governance approach that classifies AI agents by risk level and applies stronger controls only where the risk is highest. This allows firms to balance productivity, control, and scalability while safely deploying agentic AI.

How can firms adopt agentic AI safely?

To adopt agentic AI safely, firms should use a risk-based approach that defines clear risk tiers, applies proportionate governance controls, enforces technical boundaries, and monitors for shadow AI agents. This ensures innovation can scale without exposing the organisation to unmanaged risk.

Why does agentic AI require a different governance approach?

Agentic AI systems are autonomous, multi-step, and capable of taking actions rather than just producing outputs. This creates new operational risks that traditional AI governance frameworks do not fully operationalise, requiring more dynamic and risk-based controls.

What are AI agent risk tiers?

AI agent risk tiers classify agents based on factors such as data sensitivity, autonomy, system access, and external exposure. Typically, low-risk agents require minimal oversight, medium-risk agents require lightweight review, and high-risk agents require full governance and approval.

What is the difference between permissive access and full pre-approval?

Permissive access prioritises speed by allowing broad use of AI tools with monitoring after deployment, while full pre-approval prioritises control by reviewing every agent before use. A risk-based agentic AI adoption strategy combines both by applying controls proportionate to risk.

How can organisations detect shadow AI agents?

Organisations can detect shadow AI agents by monitoring API usage, data movement, and system activity using tools such as Microsoft Purview and Defender for Cloud Apps. However, detection is not complete, so governance models should assume some level of unmanaged activity.

How does a risk-based AI adoption framework align with regulation?

A risk-based AI adoption framework aligns with regulatory principles such as proportionality found in the EU AI Act, DORA, and the NIST AI Risk Management Framework. These frameworks expect controls to scale with risk, which is the core principle of a risk-based agentic AI adoption strategy.