Executive Summary

An April 2026 academic paper has confirmed what we advised IRM delegates in January: a low-risk use case does not mean a low-risk agent. The paper also establishes that agentic AI compliance under EU law is not limited to the AI Act – it spans GDPR, DORA, NIS2, and sector-specific regulation simultaneously. Critically, this means behavioural drift is now a live legal obligation, not just a governance preference: firms must trace it, record it, and treat threshold changes as regulatory events. The paper identifies three gaps in the main standards; Agentic Risks’ frameworks already address all three.

An Important New Academic Paper on Agentic AI Compliance

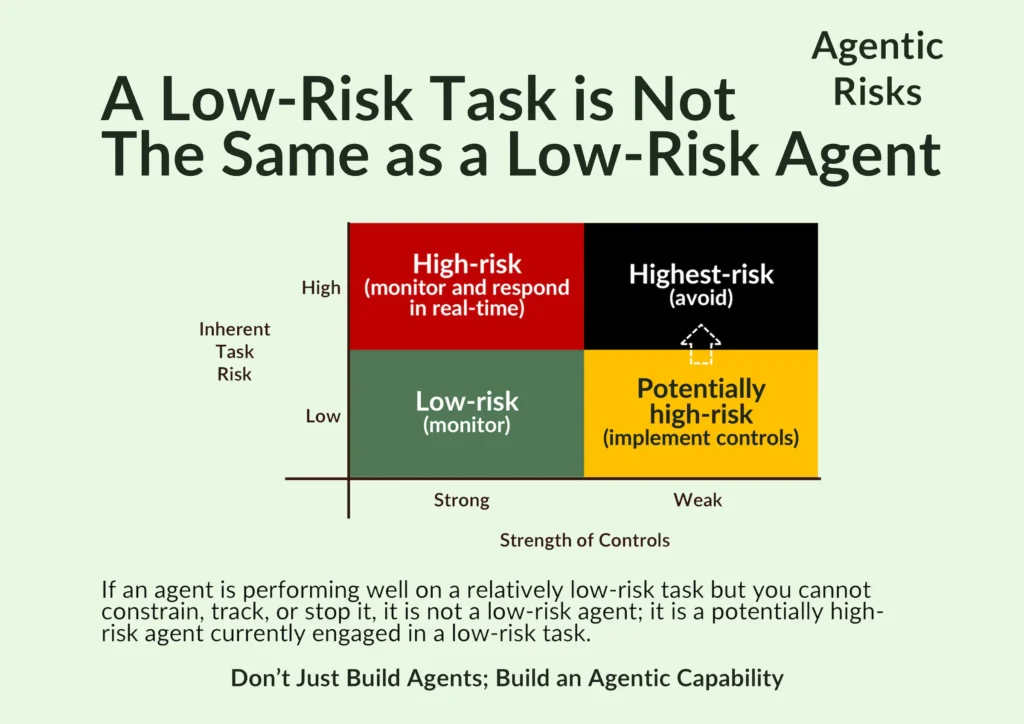

In January 2026, at our Institute of Risk Management (IRM) webinar on the fundamentals of agentic AI risk management, we advised delegates that a low-risk task is not the same as a low-risk agent, and that risk managers should therefore engage in the agent design process.

The distinction matters because agents can drift: they adapt, accumulate memory, discover new tools, and change behaviour over time in ways a static AI model cannot.

In April 2026, a nine-author, multi-institutional academic working paper titled ‘AI Agents Under EU Law: A Compliance Architecture for AI Providers’ arrived at the same conclusion, articulating how Article 3(23) of the EU AI Act applies to behavioural drift in agentic systems. Peer review is pending.

This post summarises what the paper says and what it means for firms deploying agentic AI in regulated environments.

What the Paper Says: Key Findings

The paper provides the first systematic compliance architecture for AI agent providers under EU law.

Its scope is deliberately broad, covering not just the EU AI Act but every additional legislative instrument that an AI agent’s external actions can trigger.

The Regulatory Perimeter Is Wide

Depending on what an agent does, firms may also face obligations under GDPR, the Cyber Resilience Act, the Digital Services Act, the Data Act, DORA (for financial services), NIS2, sector-specific law (MDR, MiFID II), and the revised Product Liability Directive.

Just as humans must comply with multiple laws at once, so too must the agents acting on a firm’s behalf.

Practically, this means that compliance teams need to widen their horizons when assessing an agent; the paper provides a useful mapping of the instruments involved.
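One way to picture this wider perimeter is a simple lookup from what an agent does to the instruments that may come into scope. The sketch below is illustrative only: the trait names, the `PERIMETER` table, and the `AgentProfile` type are our own assumptions for the example, not the paper’s mapping and not legal advice.

```python
from dataclasses import dataclass, field

# Hypothetical trait names. The pairings are illustrative assumptions,
# not the paper's mapping and not legal advice.
PERIMETER = {
    "processes_personal_data": "GDPR",
    "operated_by_financial_entity": "DORA",
    "operated_by_essential_or_important_entity": "NIS2",
    "provides_investment_services": "MiFID II",
    "part_of_medical_device": "MDR",
}


@dataclass
class AgentProfile:
    name: str
    traits: set[str] = field(default_factory=set)


def instruments_in_scope(agent: AgentProfile) -> list[str]:
    """Start from the EU AI Act, then add every instrument whose trait the agent has."""
    extra = sorted(PERIMETER[t] for t in agent.traits if t in PERIMETER)
    return ["EU AI Act"] + extra


agent = AgentProfile(
    "claims-triage",
    {"processes_personal_data", "operated_by_financial_entity"},
)
print(instruments_in_scope(agent))
```

A real assessment would depend on legal analysis of each instrument’s scope; the point of the sketch is only that the list is driven by the agent’s behaviour and context, not by the label “agentic”.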

The Trigger Is Behavioural

Regulatory obligations will depend on what the agent does, not just on the fact that it is agentic.

For example, an agent screening CVs is high-risk under Annex III of the EU AI Act and carries the full weight of Chapter III obligations.

An agent summarising meeting notes, by contrast, carries only transparency obligations.

Behavioural Drift Is a Live Compliance Obligation, Not Just A Governance Problem

Throughout our webinar series, we have been arguing that unplanned behavioural drift in an agent will impact its risk profile.

The paper formalises this through a compliance lens: drift can also affect an agent’s compliance status if it amounts to what Article 3(23) of the EU AI Act defines as a ‘substantial modification’, a concept now central to agentic AI compliance under EU law.

The paper concludes that it is not enough to acknowledge that drift might occur.

Instead, a high-risk agentic system can only be compliant with the EU AI Act if its provider can trace its behavioural drift.

To us, this feels well-reasoned. If it became accepted practice, it would create an obligation to detect drift so that you can demonstrate that the system remains within the boundaries assessed during the agent’s conformity assessment.
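To make the idea of tracing drift against an assessed boundary more tangible, here is a minimal sketch. It assumes the baseline can be expressed as a set of tools and a memory limit; the `Baseline` fields, the `drift_record` function, and the record layout are all hypothetical. The code only flags deviations for review; whether a deviation is a ‘substantial modification’ remains a legal judgement.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """Behaviour assessed at conformity assessment (hypothetical fields)."""
    tools: frozenset[str]
    max_memory_items: int


def drift_record(baseline: Baseline, tools_used: set[str], memory_items: int) -> dict:
    """Compare one observation against the baseline and return an auditable record."""
    new_tools = sorted(tools_used - baseline.tools)
    findings = [f"tool outside baseline: {t}" for t in new_tools]
    if memory_items > baseline.max_memory_items:
        findings.append(
            f"memory items {memory_items} exceed {baseline.max_memory_items}"
        )
    observation = {"tools_used": sorted(tools_used), "memory_items": memory_items}
    # A content hash lets a later reviewer check the observation was not altered.
    payload = json.dumps(observation, sort_keys=True).encode()
    return {
        "observation": observation,
        "observation_sha256": hashlib.sha256(payload).hexdigest(),
        "findings": findings,
        # A flag for human review only, not a legal determination.
        "needs_review": bool(findings),
    }


baseline = Baseline(tools=frozenset({"search", "summarise"}), max_memory_items=100)
record = drift_record(baseline, {"search", "send_email"}, memory_items=140)
print(record["findings"])
```

Storing each record in version control, or in an append-only store, would give the kind of trace the paper describes; the thresholds themselves would need to come from the conformity assessment.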

The Standards Only Partially Address Agentic AI

The EU’s harmonised standards programme (known as M/613, covering risk management, cybersecurity, trustworthiness, and data governance), which exists to give these obligations their testable content, only partially addresses agentic AI.

The paper also identifies three agentic-specific risk areas the standards do not yet cover:

- Multi-agent orchestration compliance gaps, where one agent delegates to others, creating recursive accountability chains.

- Cross-session state accumulation, where persistent memory shifts an agent’s behaviour over time.

- Dynamic tool discovery, where an agent connects to tools not in its original catalogue.

These gaps require firms to go further than the standards alone.

A ‘Fourth Tier’ Of Governance Tooling Is Absent

The paper notes that current governance platforms operate at the system level – policies, inventories, documentation.

They answer the question of permission (what is the agent allowed to do?) but not authority (who holds decision-making power over a specific action, right now, and how is that recorded?).

None of this infrastructure yet exists as an off-the-shelf capability.
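The difference between permission and authority can be sketched as a record kept per action rather than per system. The example below is a minimal illustration under our own assumptions: the `AuthorityRecord` schema and `AuthorityLog` class are hypothetical, and an in-memory list stands in for the durable, tamper-evident storage a real deployment would need.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuthorityRecord:
    """Who held decision-making power over one specific action (hypothetical schema)."""
    agent_id: str
    action: str
    permitted: bool        # permission: policy allows this type of action
    authority_holder: str  # authority: the accountable person or role for this action
    decision: str          # "approved" or "rejected"
    decided_at: str        # ISO 8601 timestamp in UTC


class AuthorityLog:
    """Append-only, in-memory log used purely for illustration."""

    def __init__(self) -> None:
        self._records: list[AuthorityRecord] = []

    def record(self, agent_id: str, action: str, permitted: bool,
               authority_holder: str, approved: bool) -> AuthorityRecord:
        # Refuse to log an approval for an action that policy does not permit.
        if approved and not permitted:
            raise ValueError("cannot approve an action outside the agent's permissions")
        rec = AuthorityRecord(
            agent_id, action, permitted, authority_holder,
            "approved" if approved else "rejected",
            datetime.now(timezone.utc).isoformat(),
        )
        self._records.append(rec)
        return rec

    def export(self) -> list[dict]:
        """Return plain dictionaries suitable for an audit extract."""
        return [asdict(r) for r in self._records]


log = AuthorityLog()
rec = log.record("agent-7", "release_payment", True, "duty-payments-officer", True)
print(rec.decision, rec.authority_holder)
```

The design choice worth noting is that the record names an accountable holder and a timestamp for each action, which a system-level policy or inventory does not capture.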

What This Means for Our Work – and for Yours

Industry-wide, work continues to solve the different parts of this problem. At Agentic Risks, having identified it early (in July 2025), we have built the frameworks firms need:

- Our Enterprise-Wide Agentic AI Risk Control Framework contains 32 agentic risk flags across five categories – individual agent risks, multi-agent risks, security threats, governance failures, and human factors – mapped to over 250 implementable controls that you can learn and select from to construct proportionate risk treatment plans.

- Our Agentic AI Governance Framework provides the overarching governance structure: what to retain from traditional AI governance, plus nine new components you need for agentic AI that ISO 42001, NIST AI RMF, and the EU AI Act were not designed to provide.

Both frameworks map directly to the harmonised EU standards the paper identifies as the compliance layer – prEN 18282 (cybersecurity), prEN 18229-1 (trustworthiness and oversight), prEN 18228 (risk management), and prEN 18286 (quality management system). Our Regulatory Mapping Tables, updated to reflect the M/613 standards, demonstrate coverage against each of them.

On the three gaps the paper identifies in the main standards, our practitioner-built Enterprise-Wide Agentic AI Risk Control Framework already provides controls:

- Risk 08 (Inter-Agent Orchestration) addresses multi-agent accountability.

- Risk 05 (Behavioural Drift) addresses cross-session state accumulation.

- Risks 01 and 18 (Lifecycle Management and Vendor Instability) address dynamic tool discovery.

We had already identified these as first-order agentic risks, which is why our original framework covers them.

On the “fourth tier” – runtime action-level authority – our Agentic AI Governance Framework calls explicitly for a shift from descriptive or aspirational governance (e.g. “We have a policy that prohibits X”) to operational governance (e.g. “The system cannot do X because of control Y”).

And our Post-Deployment Agentic Risk Assessment with its 32 Agentic AI Risk Flags provides the evidence layer that firms need for operational safety, audit committees require for governance, and regulators will expect for compliance.

Our direction here is also consistent with Anthropic’s March 2026 endorsement of post-deployment analysis as “one of the highest-leverage priorities in agentic security today.”

The Practical Implications for Regulated Firms

All of this raises three questions for risk managers, compliance officers, and senior leaders in regulated financial services:

- Does your agentic AI risk framework treat behavioural drift as a live compliance obligation or only as a theoretical risk? Treating it only as a theoretical risk can lead to operational and regulatory trouble. Treating it as a live compliance obligation requires early engagement in the design process to minimise the scope for drift, a detection mechanism, version-controlled records, and a procedure for determining when a change constitutes a regulatory event. We recommend you embed this into your agentic key risk indicators.

- Have you mapped your agentic deployments against all applicable regulations, or just the EU AI Act? For financial services firms, DORA has applied since January 2025, and a single agentic AI incident could trigger parallel reporting obligations under NIS2, GDPR, and DORA, each with different timelines, formats, and receiving authorities.

- Are your governance controls descriptive or ‘operational’? The paper calls for a system designed to demonstrate that actual oversight was exercised, at the action level, during the period of use.

How Our Services Could Help You

Check out our Post-Deployment Agentic AI Risk Assessment service if you have already deployed and want some peace of mind.

And if you want your firm to get the most out of an upcoming agentic transformation (and avoid the pitfalls), take a look at our Agentic AI Executive Workshop.

Frequently Asked Questions

Does the EU AI Act apply to agentic AI?

Yes. The EU AI Act applies to agentic AI by implication through its definitions of AI system, high-risk classification, and substantial modification. A nine-author academic paper published in April 2026 provides the first systematic compliance architecture, mapping EU law – including GDPR, DORA, NIS2, and sector-specific regulation – to AI agent deployments.

Is behavioural drift a compliance issue under the EU AI Act?

Yes. The EU AI Act’s Article 3(23) defines ‘substantial modification’ as a threshold that can re-trigger a firm’s conformity assessment obligations. If an agent’s behavioural drift crosses that threshold, it is not just a governance concern but also a regulatory event. Firms must be able to trace and demonstrate that drift.

Do the EU’s harmonised standards fully cover agentic AI?

The EU’s M/613 harmonised standards programme only partially addresses agentic AI. The April 2026 academic paper identifies three uncovered areas: multi-agent orchestration compliance gaps, cross-session state accumulation, and dynamic tool discovery. Firms deploying agentic systems must go beyond the standards alone to address these risks.

What is a post-deployment agentic AI risk assessment?

A post-deployment agentic AI risk assessment is an evidence-led evaluation of whether a live or recently deployed agentic workflow can be owned, constrained, monitored, and safely stopped. Using a complete and verifiable set of risk flags, it provides the evidence layer that audit committees require for governance and that regulators will expect for compliance.

Does a low-risk use case mean a low-risk agent?

No. An agent’s risk classification depends on its behaviour, not its stated purpose. An agent that begins as a low-risk summarisation tool can accumulate memory, discover new tools, and drift into higher-risk territory over time – without any change to its original design or deployment classification.