Table of Contents

Executive Summary

An agentic workflow is a system where AI agents autonomously plan, decide, and act across interconnected tasks, with explicit controls and human oversight embedded at each stage.

The success of these initiatives rests not just on technology, but on organisations building the capability to govern autonomy before they introduce it.

This article sets out a practical, audit-defensible agentic workflow design process that helps firms decide whether an AI agent should exist, what authority it may safely hold, and how that authority is constrained, tested, and monitored over time.

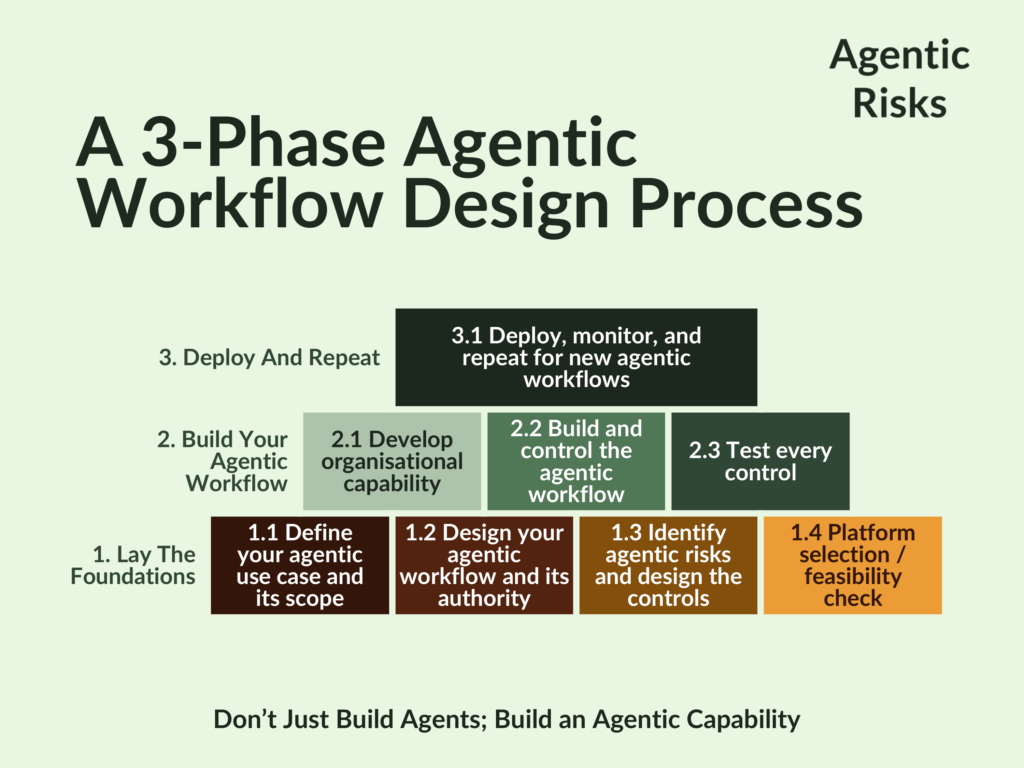

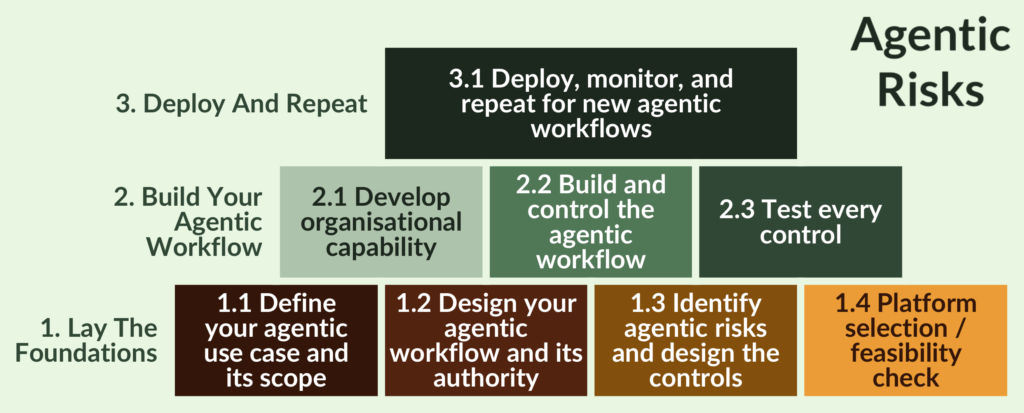

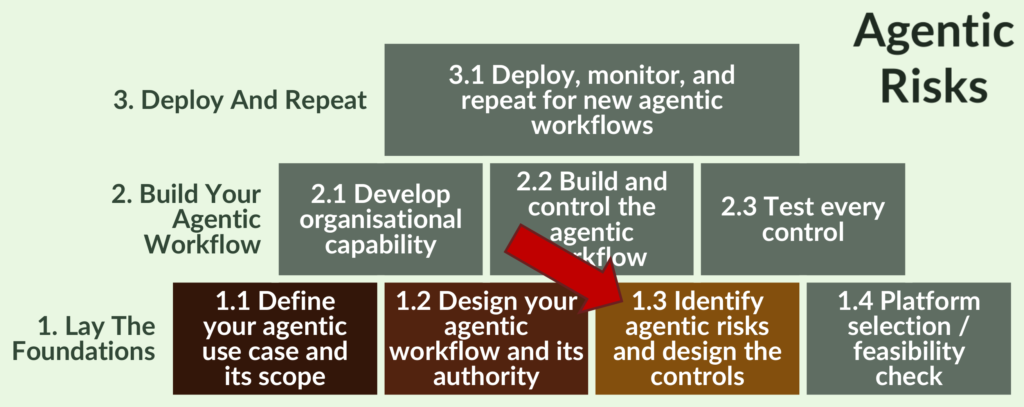

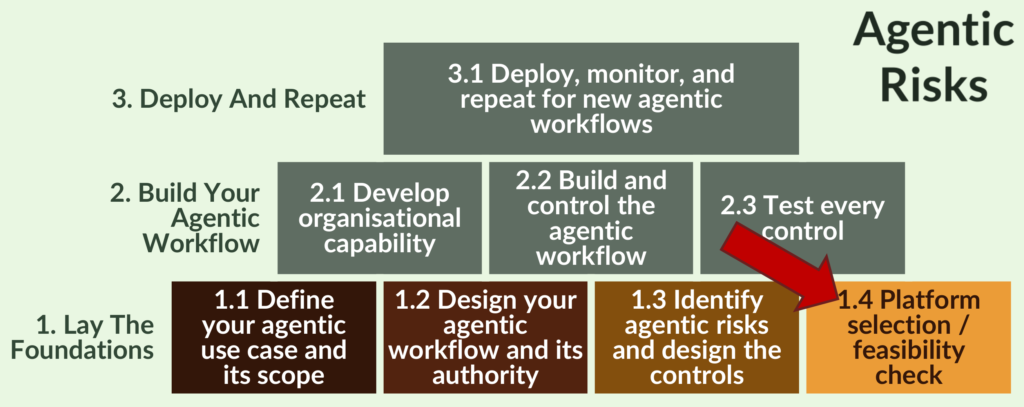

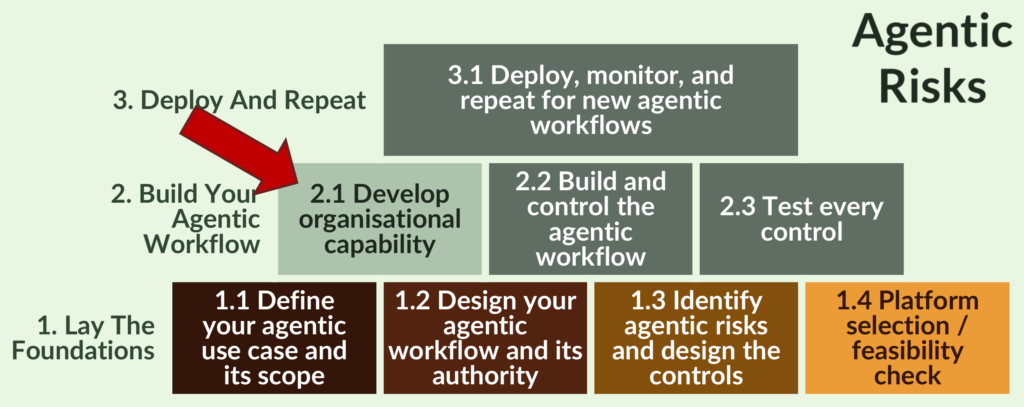

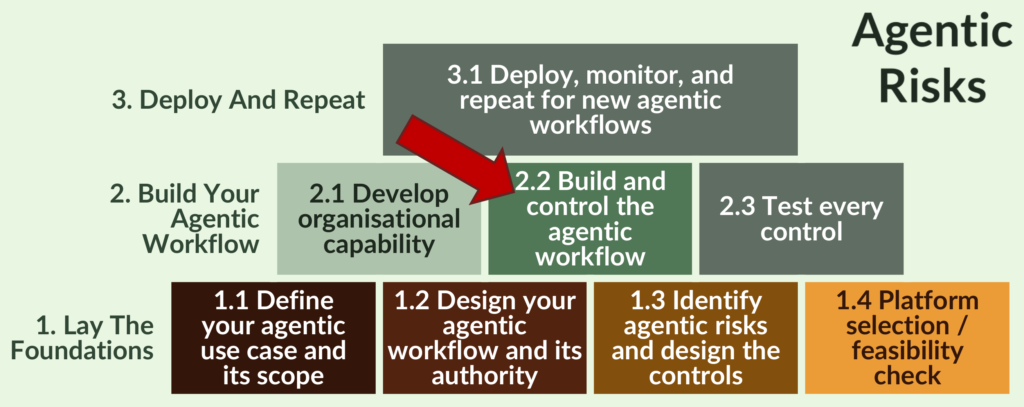

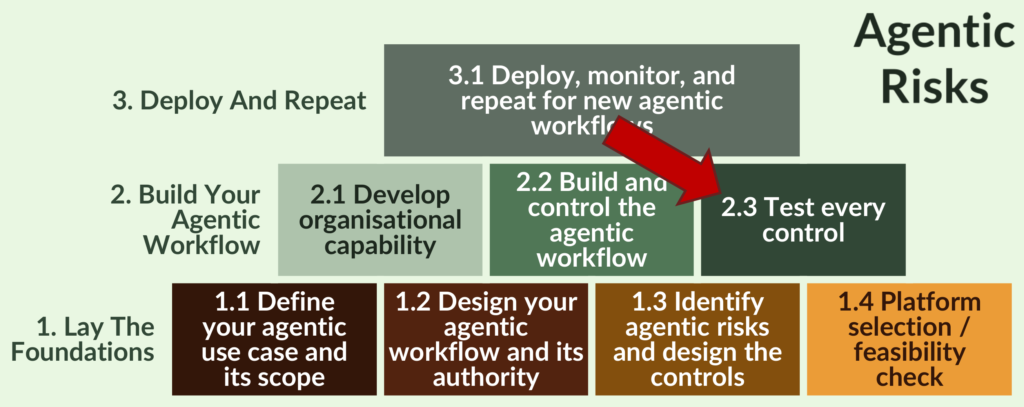

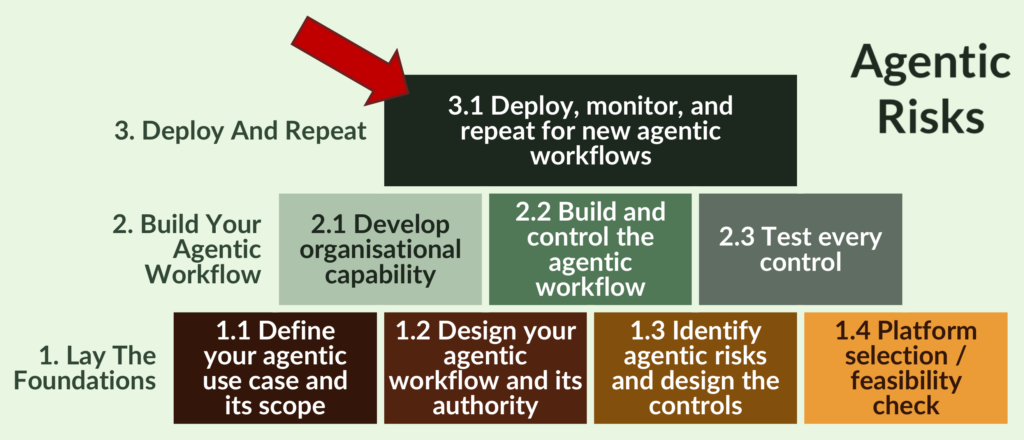

The process is structured into three phases – foundation, build, and deployment – to move firms beyond demos to a repeatable, governable, and scalable agentic workflow.

The outcome is a step-by-step guide on how to design an agentic workflow using a risk-led, auditable agentic workflow design process.

Unstructured Agent-Building Is Costly and Risky by Choice

AI agents can increase productivity, scalability, and the value of human judgment. This makes them attractive for agentic workflows, in which companies authorise autonomous or semi-autonomous agents to make decisions and take actions within defined boundaries.

Yet, Deloitte recently found that only 11% of firms have successfully operationalised agentic AI. So why are most firms failing while a minority proves that it is possible?

Your new agentic workforce will introduce a broad, new class of known risks, so it makes sense to experiment first. However, instead of conducting structured experimentation, almost every firm in Deloitte’s study lacked a strategy for agentic AI.

Instead, many firms pick an agent platform, connect it to a few tools, and expect quick results, only to hit “exception hell” when overlooked risks materialise:

- Without knowing the controls they should be checking, ‘testing’ is weak and more like ‘exploratory probing.’

- Insufficiently controlled and over-permissioned agents that would have failed stronger testing cause damage.

- A narrow, technology-led approach treats agentic AI like software rather than the onboarding of an entirely new type of non-human worker, leaving key support functions unprepared.

Unsurprisingly, agentic workflows built this way break under real conditions, resulting in security incidents, benefits that don’t scale, and a scramble to remediate when external stakeholders ask questions.

Does this matter? Yes: teams investigating failed agentic AI initiatives repeatedly arrive at the same conclusion: the technology worked, but the organisation was not ready to govern autonomous AI agents, test them, and constrain their authority. Therefore, it is risky to believe that software can do all the thinking for you, because risk management is a culture, not just a piece of code.

The Winners Don’t Just Build Agents; They Build an Agentic Capability First

So, what are the 11% doing differently?

In short, they are not just building AI agents, they are building what’s needed to achieve an agentic transformation: a broad-based and repeatable agentic AI capability, covering governance, risk management, and delivery:

- Strategic direction and success criteria – their executives are investing time upfront to set direction and determine their risk appetite, which translates into durable gains: clear success metrics, a coordinated adoption strategy, and the sponsorship of repeatable skills.

- Clear limits and risk reduction – because they know what they want, they can de-risk their agentic transformation by granting bounded autonomy and minimal privileges to their agents.

- Organisational capability and governance – because they have executive sponsorship, they are taking a multi-disciplinary approach that lets them integrate agentic risk management into their existing capabilities, such as information security, cost monitoring, and incident management, reducing the impact if something goes wrong.

- Risk and security integration – this broader involvement also enables agentic risk identification in the design phase, diversified threat modelling, and more effective control design, leading to cost discipline, fewer incidents, faster expansion to new use cases.

- Evaluation and stress testing – lastly, they are experimenting in sandboxes, testing agents the way attackers and edge cases behave, and fixing issues before repeating the process on their next workflow.

Each of these behaviours shows up at a specific point in a successful agentic workflow lifecycle:

- Strategic direction and autonomy limits are set before build.

- Risk and security controls become an integral part of agent design.

- Evaluation and stress testing happen before scale.

A 3-Phase Agentic Workflow Design Process

The 3-phase process below formalises what the 11% are doing differently into a repeatable approval, build, and monitoring cycle.

It is designed to create the organisational capability required to govern agentic workflows safely at scale.

It does so through a risk-led agentic AI design approach that embeds governance and controls before autonomy is granted.

Phase 1. Lay the Foundations Before Granting Autonomy

Executive summary:

This phase decides whether an agent should exist at all and establishes the governance boundaries within which autonomous AI agents may operate.

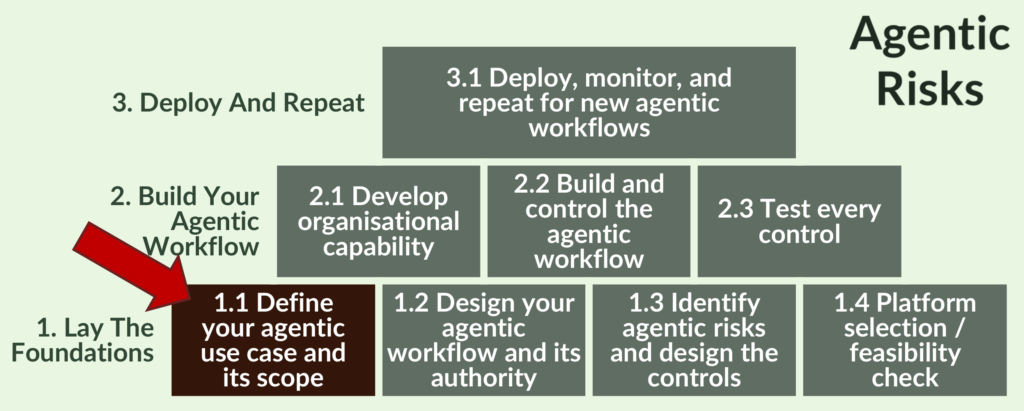

1.1 Define your agentic use case and its scope

What to do

- Map the baseline (non-agentic) process – objective, criticality, suppliers, inputs, process (tasks and decisions), outputs, customers (SIPOC), information security, and scope boundaries.

- Define the problem statement – identify where delays occur, and where errors or unsafe assumptions are costly, their cause(s) and impact (time, cost, and risk).

- Specify the objective and scope – translate business intent into machine-readable and ranked objectives (defined in units of time, cost, quality, risk) as well as instructions on what is in-scope and out of scope. These might include objective prioritisation, trade-off rules, explicit prohibitions, and thresholds.

Why it matters – without understanding your baseline process, you cannot evaluate if the AI agent is performing better or worse than it.

Common mistake – starting with “where can we add an agent?” instead of “where would be the best place for us to develop and prove our agentic capability?”

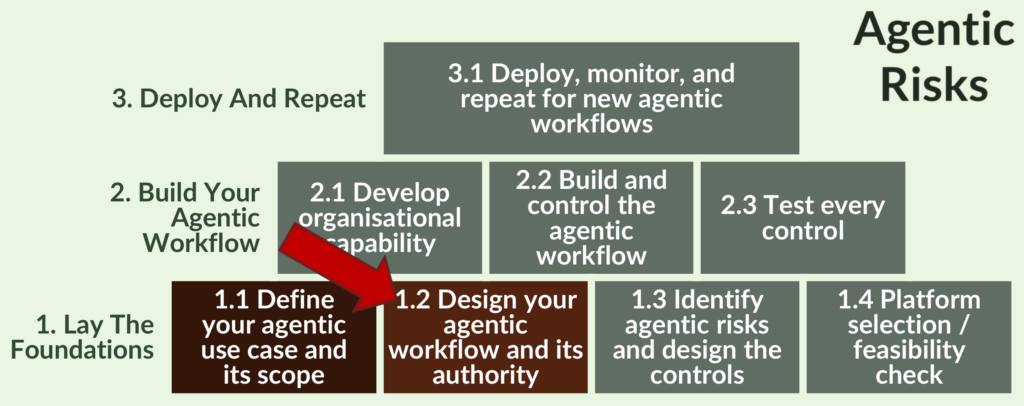

1.2 Design your agentic workflow and its authority

This step uses the objective from Step 1.1 to determine how autonomy will add value without destroying it: it is a vital step to governing autonomous AI agents.

What to do

- Design the agentic workflow

- To show how you expect the agent to achieve the workflow’s objective, use the same mapping technique as in Step 1.1 but replace tasks with behaviours and decisions and categorise them by whether they will be agent-autonomous (e.g. bounded, reversible actions), agent-assisted (e.g. analysis, options, drafting), or human-only (e.g. ethics, accountability, final sign-off).

- Specify decision-making boundaries, actions permitted without human approval, authority limits, escalation paths and triggers.

- Define the measures of success – define 3-5 KPIs that will prove value, e.g. cycle time, error rate, cost per case, rework, or customer sentiment.

- Translate into a structured context an AI agent can follow, for example, a flowchart of goals and decision rules that clarifies the agent-to-agent and agent-to-human hand-offs.

- Fix any baseline problems – embed solutions to the baseline problems identified in Step 1.1, e.g. if the non-agentic procedure includes unsafe assumptions, confirm the assumptions and add them as a knowledge source for the agent.

Why it matters

- Agentic AI is autonomy (not automation), so you will need to explain to executives, auditors, and regulators, “who was allowed to decide what?”

- Agentic systems often fail at handoffs and feedback loops

- If you do not fix your baseline problems, AI will amplify them.

1.3 Identify agentic risks and design the controls

This step applies your organisation’s Agentic Risk Appetite Statement to the workflow and results in an approved agentic workflow risk assessment – the core of effective agentic workflow risk management.

What to do

- Map the risks and controls – Step 1.3 is the analytical core of the entire process. Before a single line of agent code is written, this step requires your risk manager, business, and technology representatives to walk the agent’s full decision pathway together – identifying risks, scoring them, designing controls, and obtaining formal sign-off.

- The step covers five areas

- Risk identification across the agent itself, your organisational capability, the agent’s operating context, and its external attack surface.

- Structured risk definitions.

- Inherent and residual risk scoring.

- Control design with assigned ownership.

- Definition of key risk indicators with tolerance thresholds.

- The output is a formally approved Agentic Workflow Risk Assessment, signed off by the CRO and reviewed by the AI Governance Committee.

- Integrate into enterprise risk frameworks – for each identified agentic risk, also map to standard enterprise risk categories (e.g., operational, strategic, compliance), to enable integration with existing risk controls and audits.

Why it matters

- For each risk you identify in Step 1.3, you should embed a code-level control in Step 2.2.

- Anything missed here will surface either as a test failure in Step 2.3 – after the agent has already been built – or as an incident in production. This makes Step 1.3 a pivotal point in the design process.

- In practice, a well-run 1.3 typically takes two to three structured workshops; organisations that treat it as a form-filling exercise consistently find the gaps in Step 2.3 testing.

See how to conduct a pre-deployment agentic risk assessment.

1.4 Platform selection / feasibility check

This step ensures your chosen platform can enforce the controls identified in Step 1.3. If your organisation is already running agentic workflows on a platform, adapt the checklist below to ensure the platform’s feasibility for your new workflow.

What to do

- Map workflow, risk, auditability, and control-enforcement capabilities against a ‘long list’ of agentic AI platforms.

- Appraise their ability to support your needs.

- Evaluate different vendors’ interoperability with your systems, including identity, data, workflow, and monitoring layers.

- Assess how each platform integrates without bypassing or weakening existing controls (e.g. Identity and access management, approval gates, audit logging).

- Assess their roadmap and independence.

- Create a score a shortlist of potential providers.

- Recommend and decide.

Why it matters

- Technology capabilities vary widely, with some platforms being less able to support deep monitoring, least-privilege enforcement, or adversarial test automation. However, they may still score well on generic criteria, so make sure you develop explicit criteria.

- As a result, selecting a platform before defining control requirements is an unnecessary risk to take and creates the potential for costly rework.

Phase 2. Build Your Agentic Workflow

Executive summary: this phase ensures the agent can only do what it was explicitly designed and authorised to do – and nothing more.

2.1 Develop an organisational capability

What to do

- Start your change management earlier than you would for a traditional organisation redesign project.

- Specifically, train users on what agents can and cannot do, how to challenge their outputs, and how to intervene safely.

- Include your new agentic co-workers in updated policies, RACI matrices, information security procedures, vendor management checks, cost management, incident management, management information, and internal audit.

- Assign explicit ownership for the agentic workflow, including a named business owner accountable for outcomes, a technical owner accountable for controls, and a risk owner accountable for residual risk acceptance.

- Align incentives so humans don’t blindly defer to agents.

Why it matters – agentic AI introduces accountability gaps that traditional RACI models were not designed to handle; without explicit ownership and trained users, controls that look sound on paper will fail in practice.

2.2 Build and control the agentic workflow

What to do

- Design the agent(s) – execution context, and delegation, explicitly specify what identity the agent operates under (e.g. service account, delegated user, system role), how authority is granted and revoked, and how permissions are scoped and audited.

- Integrate and provision the agent, giving it the bare minimum access to APIs, databases, and internal tools, e.g. an agent reading emails shouldn’t have the power to delete the database.

- Define state, memory, and context boundaries, specify what the agent is allowed to remember, where memory is stored, how long it persists, and how memory is reset, versioned, or purged to prevent drift or data leakage.

- Embed code-level controls as an integral part of the agent’s design for each risk identified in Step 1.3. Examples could include hard limits on actions, tool-use, and spend; confidence scores, escalation paths, and human approvals; roll-back functionality and kill switches; logging, versioning, and replay.

- Design testing – confirmatory and adversarial testing scenarios and, if necessary, strengthen your controls to prepare for them.

- Select training data – ensuring it enables the agent to learn all the necessary behaviors, decisions, and constraints.

- Train a narrow MVP with real data and real edge cases, but in a sandboxed environment.

- Include an explainability trail that articulates how the agent reached decisions relevant to risk boundaries and escalation paths.

Why it matters

- Step 1.2 defines the limits of agentic authority and Step 1.3 identifies the risks: Step 2.2 enforces them.

- Least-privilege access and machine-enforced controls are essential to prevent over-permissioned AI agents and insecure tool use.

2.3 Test every control

This step exists to prove that the risks identified in Step 1.3 are controllable in practice through systematic testing and control of AI agents. Your focus should be on testing all the expected functionalities and risk controls under realistic conditions, including adversarial tests like ‘red teaming’.

What to do

- Confirmatory testing – to ensure the agent works.

- Adversarial testing – to see how the agent behaves when it is deliberately stressed. Disciplines such as red-teaming, adversarial prompting, conflicting goals, tool failures, unexpected inputs, autonomy boundary testing, incentive manipulation, and multi-agent testing become foundational.

- Deploy the agent to a control group of test users.

- Monitor agent behaviour and key risk indicators and feed results back into design and retest.

- Validate outcomes, control effectiveness, and approve residual risk exposure.

Why it matters

- Some risks only show up under pressure, ambiguity, volume, and deliberate attack, e.g. a positively tested autonomous system could still be co-opted by a malicious actor or start succeeding in the wrong way (e.g. gaming your controls).

- From a regulatory and audit perspective, this also evidences due care: the ability to show that failure modes were actively explored will increasingly matter if you need to explain an incident after the fact.

Phase 3. Deploy And Repeat

Executive summary: this phase turns a one-off agent into a governed, repeatable, and actively monitored organisational capability, where monitoring is treated as a control, not an afterthought.

3.1 Deploy, monitor, and repeat for new agentic workflows

What to do

- Standardise the agent’s components.

- Grant autonomy gradually – expand the MVP to the workflow’s full scope. For example, you could begin with agentic data gathering and insight generation, graduating to recommendations for human approval. Grant additional autonomy incrementally and only once earned.

- Continue to learn and manage agentic behaviour by conducting continued cross-workflow failure-mode tests.

- Institute governance and controls for long-term ownership and maintenance.

- Institute continuous monitoring of performance, behavioural drift (e.g. boundary testing, or succeeding in new ways), key risk indicators, and cost and tool usage trends.

- Validate the agent against both internal KPIs (such as efficiency, latency, cost, accuracy, completion rate) and risk thresholds (like data leakage, autonomy bounds, and regulatory exposure), so you can control and not just observe.

- Run randomly timed evaluations, e.g. “if I give the agent a fake invoice with a future date, does it still catch the error?”

Why it matters – once an agent is operating autonomously, its risk profile can change faster than any human review cycle. Therefore, risk monitoring for AI agents should be continuous where autonomy, speed, or impact exceed human reaction times, and at least near-real-time in all other cases.

Benefits of Structuring Your Agentic Workflow Design

- Fewer nasty surprises thanks to early risk identification in Phase 1.

- Stronger security posture resulting from Phase 2’s multi-disciplinary engagement and machine-enforced controls.

- Scalable returns on your investment in agentic AI owing to Phase 3’s repeatability.

- Auditability and accountability you can defend across all phases – crucial in a regulated environment.

Together, these benefits explain why firms that invest in safe, auditable agentic workflows outperform those relying on ad-hoc automation without enterprise-grade agentic AI governance.

Don’t Just Build Agents; Build an Agentic Capability

So far, so logical in terms of what needs to be done and in what order.

But building a capability is not always easy, especially if you are at the start of a journey and technology platforms are just screaming “BUILD AGENTS!”

However, to join the 11% of winners, you need to be more strategic than that, because a multi-disciplinary agentic capability of real humans who know how to govern agents is the only way to deliver success.

This is where Agentic Risks can help with:

- Training solutions for executives, project teams, and support functions, e.g. risk, infosec, business continuity, finance, HR, and internal audit.

- Direct assistance in the form of pre- and post-deployment agentic risk assessments (using Gerido, our proprietary agentic risk assessment software), as well as workflow design and implementation.

In March and June 2026, the Institute of Risk Management and the Investment Association will sponsor webinars by Agentic Risks on ‘agentic workflow risks and controls.’

If you are a member of either organisation and would like to learn more about the agentic workflow design process, subscribe to our newsletter for registration links to these upcoming events.

Or if you would like to discuss how we could help you, book a meeting here.

FAQs

Most agentic AI initiatives fail because organisations introduce autonomy before building the capability required to govern it. The technology often works, but risk appetite, controls, testing, and accountability are not designed upfront, leading to over-permissioned agents, weak testing, and failures that only surface in live environments.