Executive Summary

Agentic AI marks a shift from tools that produce outputs to systems that achieve outcomes.

As a result, agentic workflows differ from traditional ones. In brief, they can plan, decide, and execute multi-step actions autonomously to achieve your goal, remembering and learning from their experiences. Because of this, their behaviour can evolve, creating both opportunities and risks.

Understanding how agentic workflows differ is therefore vital to effective risk identification. This article examines 10 dimensions that vary between agentic and traditional workflows – from decision authority and system access to failure modes and accountability – and how risk managers should respond.

Agentic AI Suits Not Just Tasks but End-To-End Workflows

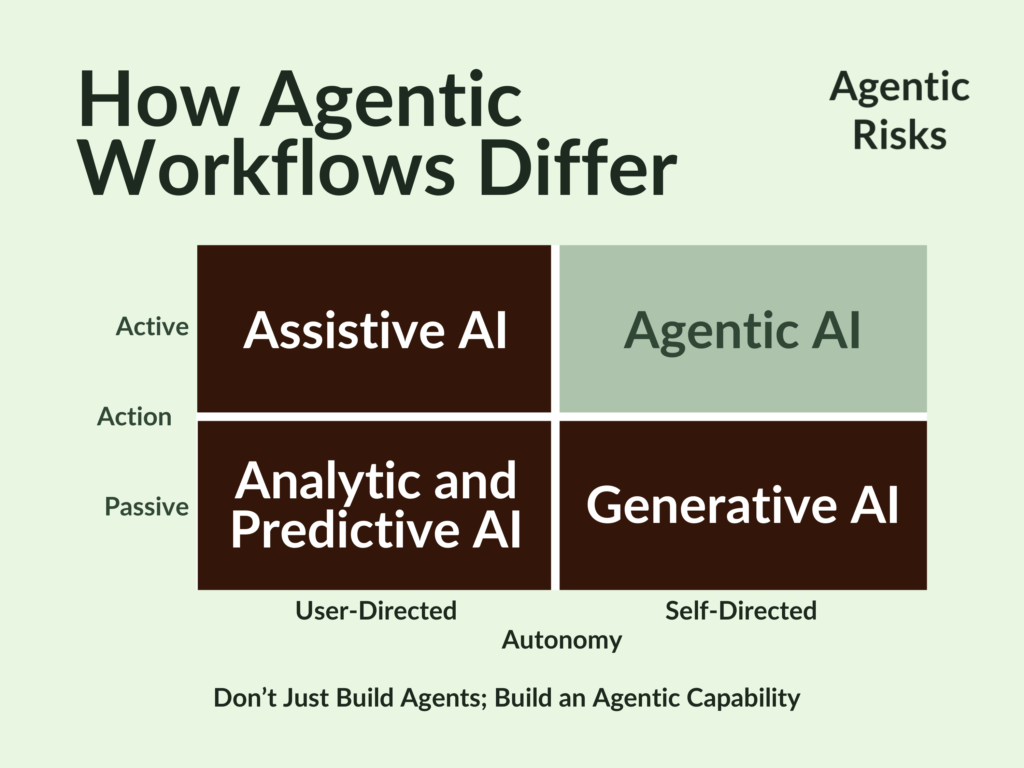

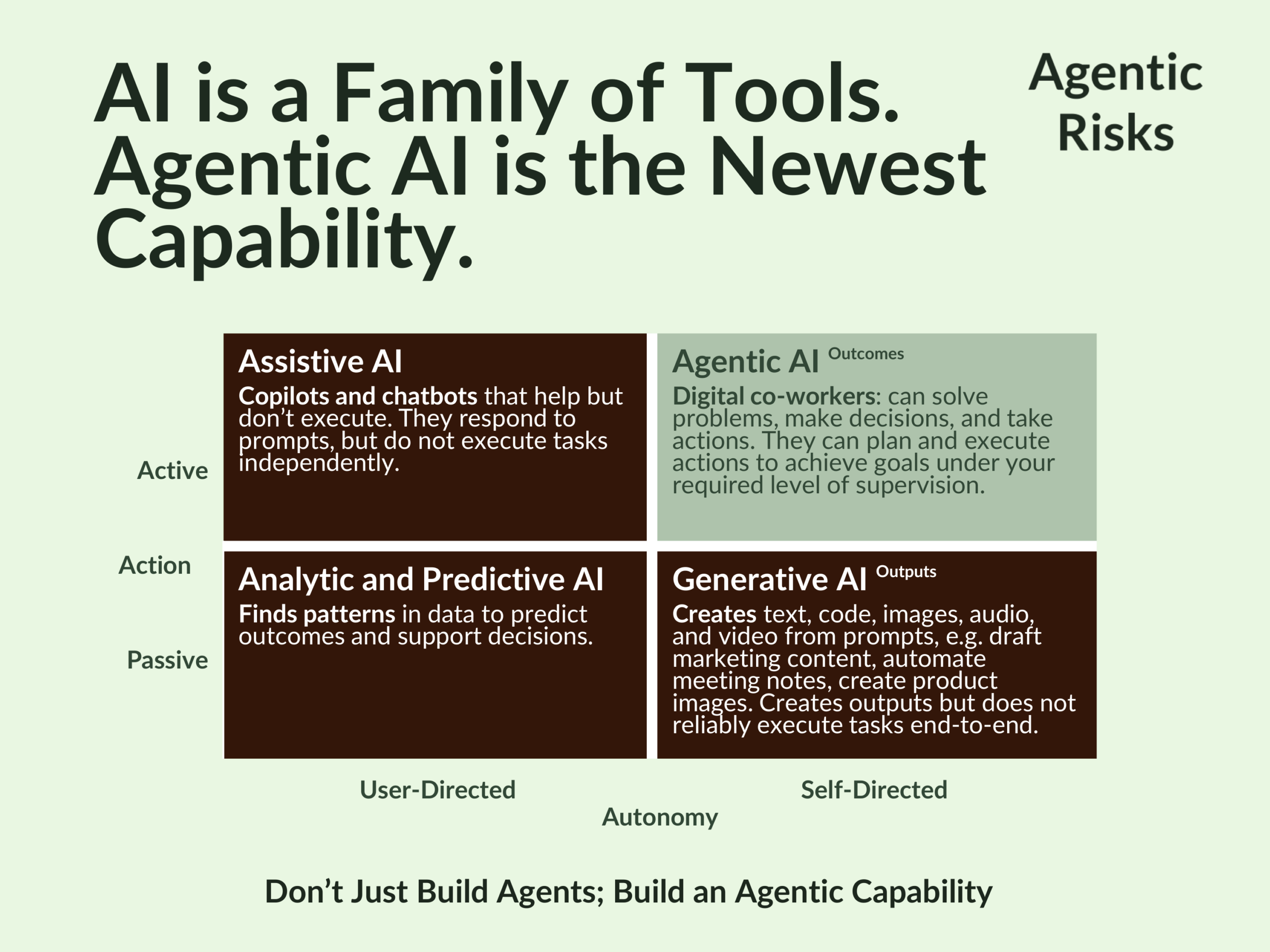

Artificial intelligence (AI) is a family of tools that can be classified by who directs them and how they act within a workflow.

Across the bottom of our matrix are passive systems – analytic and generative tools – that produce insights or content but rely on their human users to interpret results and execute decisions.

At the top left are assistive systems such as prompt-driven copilots and chatbots: they help with tasks like drafting emails or summarising documents, but they are still user-directed and remain largely unable to execute actions independently.

At the top right sits agentic AI – self-directed digital co-workers that can plan, decide, and execute multi-step actions in pursuit of goals you set them and within constraints you specify.

A key differentiator is that, whereas generative AI produces outputs, agentic AI creates outcomes – positioning it well to execute not just tasks but end-to-end workflows.

How Agentic Workflows Differ

As we stand at the dawn of the agentic era, it is important to understand that we can secure the benefits of agentic AI only if we also manage the novel risks it introduces.

Knowing how agentic workflows differ from traditional workflows is vital to identifying a key source of those risks.

Explaining those differences is the purpose of this article.

Example: Non-Agentic Workflow

At a high level, traditional workflows perform their tasks with no discretion over what to do, how to do it, or whether to do it differently next time. Their logic amounts to “If X happens, do Y.”

Because of this, their risks are largely static, predictable, and mostly introduced in design, with any failures usually localised and replicable. As a result, we typically test controls once and then monitor them periodically.

For example, a non-agentic machine-learning model flags potentially erroneous trade data. It receives a data file, applies its classification algorithm, returns flagged entries, and stops.

It cannot investigate further, access source systems to verify, or recommend corrective actions without separate human-initiated requests.

It is passive and user-directed, placing it on the bottom-left of our matrix.
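
The receive, classify, return, and stop pattern described above can be sketched as follows. This is a minimal illustration, not a real trade-validation model: the `Trade` fields, the `flag_suspect_trades` function, and the `max_notional` threshold are all hypothetical, and a fixed rule stands in for the machine-learning classifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    # Hypothetical fields chosen for illustration only.
    trade_id: str
    counterparty_id: str
    notional: float


def flag_suspect_trades(trades: list[Trade], max_notional: float = 1_000_000.0) -> list[str]:
    """Apply one fixed rule to each record and return the flagged trade IDs."""
    flagged = []
    for trade in trades:
        # "If X happens, do Y": the rule is identical on every run.
        missing_counterparty = not trade.counterparty_id
        implausible_amount = trade.notional <= 0 or trade.notional > max_notional
        if missing_counterparty or implausible_amount:
            flagged.append(trade.trade_id)
    # The workflow stops here: no investigation, no system access, no corrections.
    return flagged


if __name__ == "__main__":
    batch = [
        Trade("T1", "CP-1", 500_000.0),
        Trade("T2", "", 250_000.0),
        Trade("T3", "CP-2", 5_000_000.0),
    ]
    print(flag_suspect_trades(batch))
```

Because the function holds no state and calls no other system, the same input always yields the same flags, which is why controls over this kind of workflow can be tested once and then monitored periodically.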

Example: Agentic Workflow

In contrast, you train an agentic AI workflow to achieve its assigned outcome autonomously: within your constraints, it chooses how to reach that goal and adapts its future behaviour based on context, feedback, or prior outcomes.

For example, an agentic AI system given the objective “clean and validate this week’s trade data” might:

- Access the database and identify data quality issues.

- Query reference data systems to verify counterparty identifiers.

- Cross-reference transaction amounts against market data feeds.

- Detect anomalies and investigate potential causes by accessing additional systems.

- Propose specific corrections with supporting rationale.

- Iterate on unclear cases by seeking additional data sources, potentially informed by previous experience it gained rather than the training it received.

- Summarise findings and recommend human review for edge cases.

It actively pursues the objective you have given it, but does so under its own direction, placing it in the top-right quadrant of our matrix. In contrast to the static predictability of a non-agentic workflow, the risk profile of an agentic workflow will change over time as new (learned) behaviours emerge.

Your major influence over this lies in your design decisions before you even train the agent.
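
One way to picture those design decisions is as guardrails fixed before the agent runs. The sketch below is illustrative only: `AgentGuardrails`, `run_agent`, and the tool names are hypothetical, and a fixed list of planned steps stands in for the plan a real agent would generate at run time.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentGuardrails:
    # Design-time decisions: which tools the agent may call and how many steps it may take.
    allowed_tools: set[str]
    max_steps: int
    audit_log: list[dict] = field(default_factory=list)


def run_agent(
    plan: list[tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
    guardrails: AgentGuardrails,
) -> str:
    """Execute (tool, argument) steps, stopping when a design-time limit is hit."""
    for step, (tool_name, argument) in enumerate(plan, start=1):
        if step > guardrails.max_steps:
            guardrails.audit_log.append(
                {"step": step, "tool": tool_name, "status": "halted: step budget exceeded"}
            )
            return "escalated_to_human"
        if tool_name not in guardrails.allowed_tools or tool_name not in tools:
            guardrails.audit_log.append(
                {"step": step, "tool": tool_name, "status": "blocked: tool not permitted"}
            )
            return "escalated_to_human"
        result = tools[tool_name](argument)
        # Every permitted action is logged with its input and output.
        guardrails.audit_log.append(
            {"step": step, "tool": tool_name, "argument": argument,
             "result": result, "status": "ok"}
        )
    return "completed"


if __name__ == "__main__":
    tools = {
        "query_reference_data": lambda cp: f"verified {cp}",
        "update_trade": lambda trade_id: f"updated {trade_id}",
    }
    rails = AgentGuardrails(allowed_tools={"query_reference_data"}, max_steps=5)
    plan = [("query_reference_data", "CP-1"), ("update_trade", "T-9")]
    print(run_agent(plan, tools, rails))
    print(rails.audit_log)
```

In this sketch the agent may read reference data but not write to trades, so the second step is blocked, logged, and escalated rather than executed.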

Key Differences Between Agentic and Non-Agentic Workflows

As we are starting to see, agentic workflows differ from traditional workflows in several important ways:

- Decision authority – agents can choose actions within defined limits.

- Scope of action – workflows can span multiple systems and steps.

- Adaptability – agents can adjust behaviour based on feedback or memory.

- Failure profile – failures may emerge gradually rather than at a single point.

- Risk management – oversight must extend beyond design into continuous monitoring.
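
Continuous monitoring of gradually emerging failures could, for instance, track a behavioural key risk indicator (KRI) over a rolling window of recent decisions. The sketch below is an assumption-laden example: the `BehaviouralKRI` class, the choice of "share of agent decisions overridden by a human" as the indicator, and the window and threshold values are all hypothetical.

```python
from collections import deque


class BehaviouralKRI:
    """Rolling share of agent decisions that a human reviewer overrode."""

    def __init__(self, window: int, threshold: float) -> None:
        self.outcomes: deque[bool] = deque(maxlen=window)  # oldest entries drop off
        self.threshold = threshold

    def record(self, human_overrode: bool) -> bool:
        """Record one decision; return True when the KRI is breached."""
        self.outcomes.append(human_overrode)
        window_full = len(self.outcomes) == self.outcomes.maxlen
        override_rate = sum(self.outcomes) / len(self.outcomes)
        # Only alert once the window is full, to avoid noise from the first few decisions.
        return window_full and override_rate > self.threshold


if __name__ == "__main__":
    kri = BehaviouralKRI(window=4, threshold=0.25)
    for overrode in [False, False, True, True]:
        print(kri.record(overrode))
```

A rolling window is used here because a drifting agent may never fail outright; the signal is a slow rise in how often its decisions need correcting.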

A Systematic Comparison: Agentic vs Non-Agentic Workflows

| Dimension | Non-Agentic Workflow | Agentic Workflow | Implications for Agentic Risk Identification |
|---|---|---|---|
| 1. Scope of Action | Single, predefined task. | Multi-step workflow. | Map the full workflow, not just individual task outputs. |
| 2. Point of Risk Introduction | Mostly introduced in design phase. | 1. Design phase – the privileges and freedoms you grant to an agent. 2. Execution – how the agent reasons and what it learns. | 1. Assess risk in design stage – number of tools, steps, transactions; nature and maturity of knowledge sources; depth of training data needed. 2. Monitor continuously during execution. |
| 3. Decision Authority | No decisions; only predictions / classifications. | Decides what actions to take and when, within the limits you allow. | Define and code the agent’s permitted actions and decision boundaries upfront. Specify how it will log decisions, tool calls, and data sources. |
| 4. System Access | No independent access. | Can autonomously exercise the system privileges you give it. | Apply least-privilege access and segregate critical system permissions. |
| 5. Security | Focus on protecting the model itself and its training data. | Could be manipulated to misuse its access privileges. | Protect with access controls, input sanitization to prevent prompt injection, and output filtering to prevent data exfiltration. |
| 6. Predictability | Fixed behaviour. | Scope for emergent behaviour from reasoning process and level of autonomy you give it. | Minimise ambiguity in the format, fields, and types of information the agent will use as its working memory, and in the assumptions made in design and training; stress-test for drift; monitor behavioural KRIs; consider periodic revalidation. |
| 7. Failure Profile | Failures usually localised and replicable. | Failures can emerge gradually, starting small. | Invest in real-time or near-real-time monitoring. |
| 8. Error Propagation | Errors typically contained to single output. | Errors can compound fast. | Implement downstream reconciliations and circuit breakers to halt cascading automated actions. |
| 9. Failure Modes | Single-point failure (incorrect prediction). | Scope for multi-dimensional failures (wrong actions, wrong sequence, wrong systems accessed, logic errors). | Design multi-layered controls, fallback procedures, and human intervention requirements and triggers. |
| 10. Accountability | Clear ownership: humans initiate actions, AI assists, humans approve, humans are accountable. | Agents take dozens of intermediate actions autonomously, complicating logging and human oversight. | Assign human responsibility for agent actions and require end-to-end audit logs. |
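
To make the error-propagation row concrete, a circuit breaker paired with a downstream reconciliation might look like the sketch below. It is a simplified illustration under stated assumptions: `CircuitBreaker`, `CircuitOpenError`, the numeric reconciliation check, and the tolerance are hypothetical, and a production control would also need alerting and a governed reset procedure.

```python
from typing import Callable


class CircuitOpenError(RuntimeError):
    """Raised when automated actions are halted pending human review."""


class CircuitBreaker:
    """Stops further automated actions after repeated failed reconciliations."""

    def __init__(self, max_consecutive_failures: int) -> None:
        self.max_consecutive_failures = max_consecutive_failures
        self.consecutive_failures = 0
        self.is_open = False

    def run(self, action: Callable[[], float], expected: float, tolerance: float = 0.01) -> float:
        """Run one action, reconcile its result, and trip after repeated mismatches."""
        if self.is_open:
            raise CircuitOpenError("halted: human review required")
        actual = action()
        if abs(actual - expected) <= tolerance:
            self.consecutive_failures = 0  # a clean reconciliation resets the count
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.max_consecutive_failures:
                self.is_open = True  # block every subsequent automated action
        return actual


if __name__ == "__main__":
    breaker = CircuitBreaker(max_consecutive_failures=2)
    breaker.run(lambda: 100.0, expected=100.0)  # reconciles
    breaker.run(lambda: 90.0, expected=100.0)   # first mismatch
    breaker.run(lambda: 80.0, expected=100.0)   # second mismatch trips the breaker
    print(breaker.is_open)
```

Once the breaker is open, any further call raises `CircuitOpenError`, so a compounding error cannot keep triggering automated actions while it waits for human review.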

The Emerging Role of The Agentic Risk Manager

Ultimately, risk managers should ensure an agent’s level of autonomy is controlled from the outset, making them vital participants in the design phase of agentic AI workflows.

By advising on appropriate risk controls, boundaries, and KRIs before the agent receives its training, risk professionals can ensure organisations secure the benefits of agentic workflows through safe and effective adoption.

Understanding how agentic workflows differ from traditional workflows is therefore a foundational step in developing effective agentic AI risk management.

These differences are just one of several sources of agentic AI risk. Because the controls for these risks are still new, we will continue to blog about them here to support the evolution in risk management that this technology requires.

If you would like our help with your agentic transformation, check our services here, or book a free consultation to discuss your situation.

FAQs

What is an agentic workflow?

An agentic workflow is a system in which an AI agent can plan, decide, and execute multi-step actions autonomously in pursuit of a goal. Unlike traditional AI workflows, which produce outputs such as predictions or classifications, agentic workflows pursue outcomes by coordinating tasks across systems and adapting their behaviour based on feedback or experience.

How do agentic workflows differ from non-agentic workflows?

Agentic workflows pursue outcomes by allowing AI agents to plan and execute multi-step actions autonomously, while non-agentic workflows perform predefined tasks such as predictions or classifications without independent decision-making.

Why do agentic workflows introduce new risks?

Agentic workflows can access systems, make decisions, and adapt their behaviour over time. This autonomy introduces risks such as emergent behaviour, cascading errors, and misuse of system privileges.

How should risk managers respond?

Risk managers should assess risks both during design and during execution, define strict boundaries for agent autonomy, monitor behaviour continuously, and implement controls such as audit logs, circuit breakers, and human escalation triggers.

Will agentic workflows replace traditional workflows?

No. Traditional workflows remain effective for well-defined tasks such as prediction or classification. Agentic workflows are better suited to complex multi-step processes where autonomous coordination and adaptation are valuable.