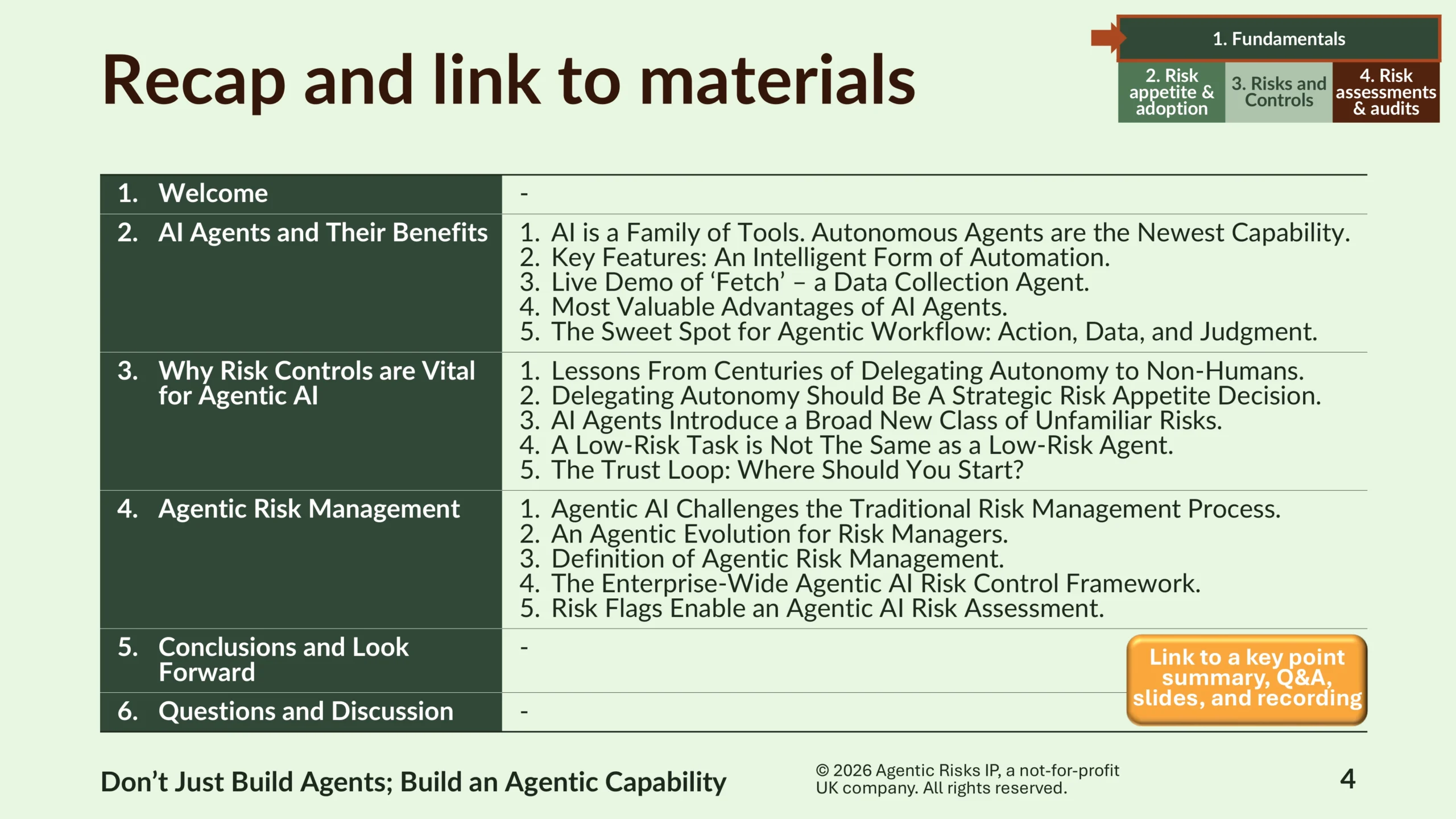


Executive Summary

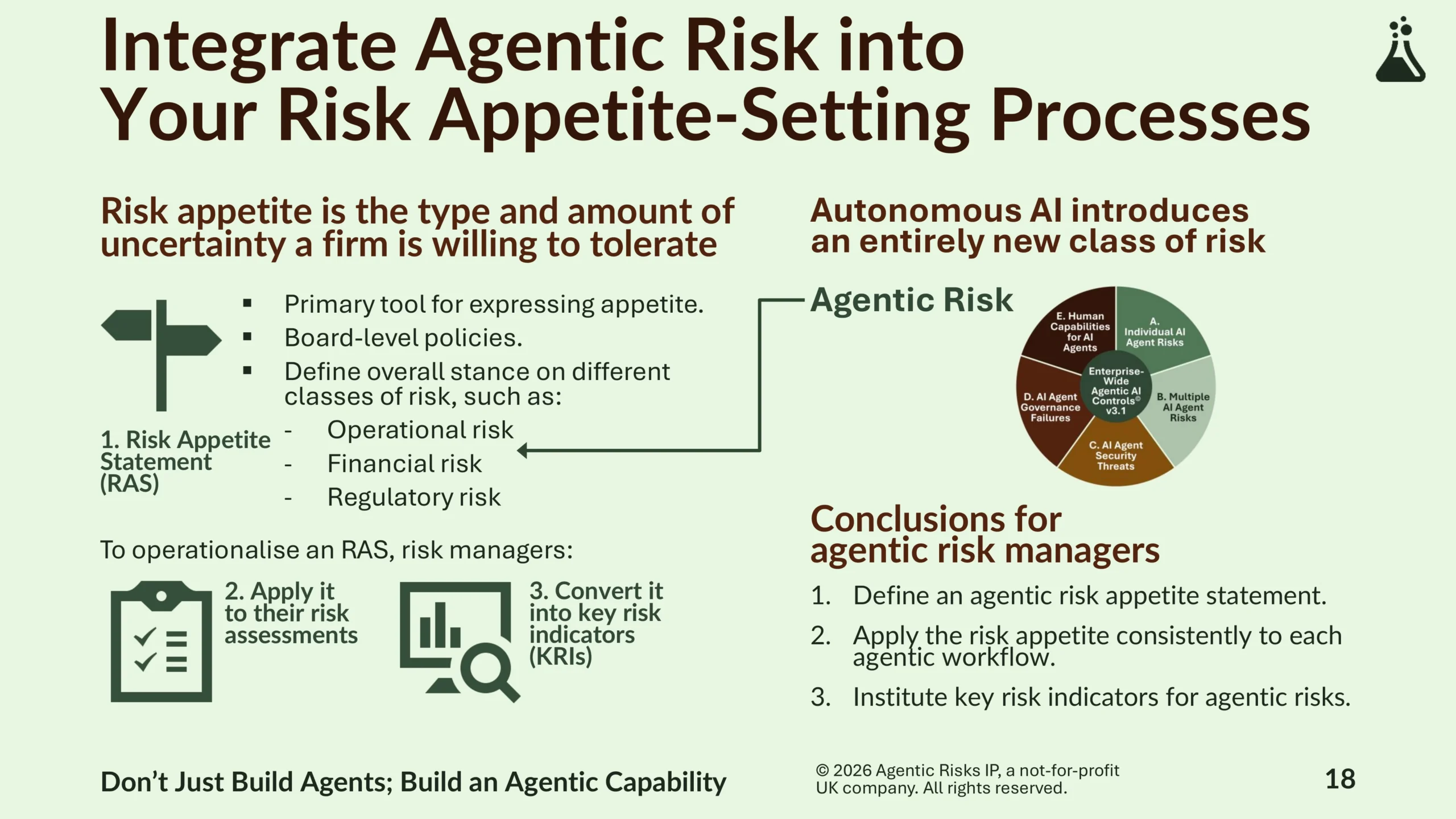

Autonomous AI agents plan and execute workflows, fundamentally reshaping risk management by shifting control from the execution phase to the design and oversight phases. In response, this article outlines a systematic three-step approach to agentic risk appetite.

Drawing lessons from established risk management disciplines and centuries of delegating autonomy to non-humans, Agentic Risks sets out a practical approach – define a board-level risk appetite statement, embed it into workflow-level risk assessments, and institute agentic key risk indicators.

From the board to the operational workflow, firms that build an agentic risk capability will find it easier to scale the benefits of autonomous AI, with fewer surprises and stronger regulatory defensibility.

Key topics: agentic risk appetite, agentic AI risk management framework, agentic workflow risk assessment, autonomous AI risk controls, AI agent governance and accountability.

Introduction

How should you set agentic risk appetite, apply it at the workflow level, and monitor it on an ongoing basis for autonomous AI agents?

It was a privilege to present on this important topic again yesterday to the Institute of Risk Management’s global community.

Here is a summary of my presentation on agentic risk appetite (including attendees’ Q&A at the end), the full video, and a download link to the slides, which include all the concepts and links to deep-dive content.

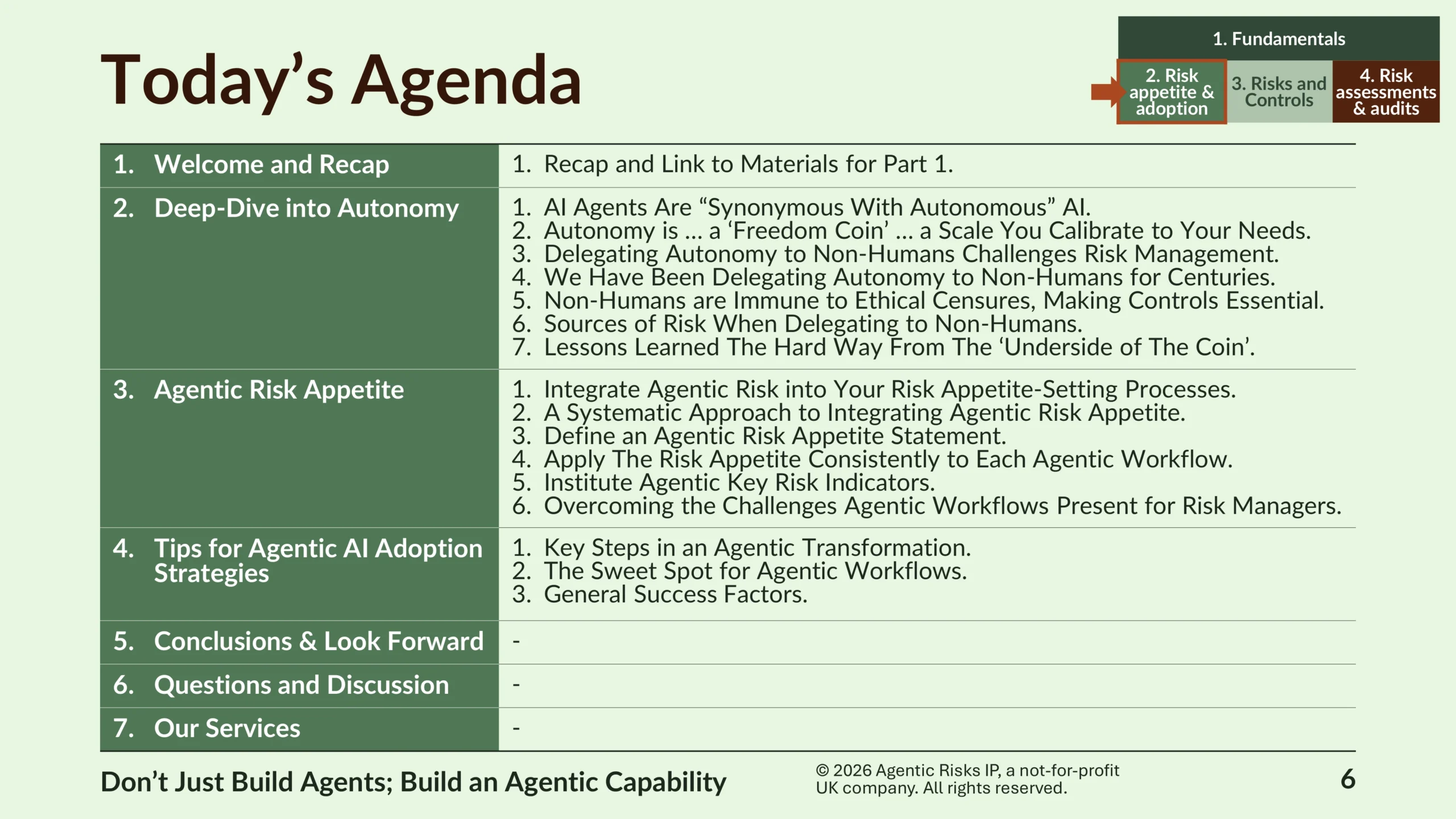

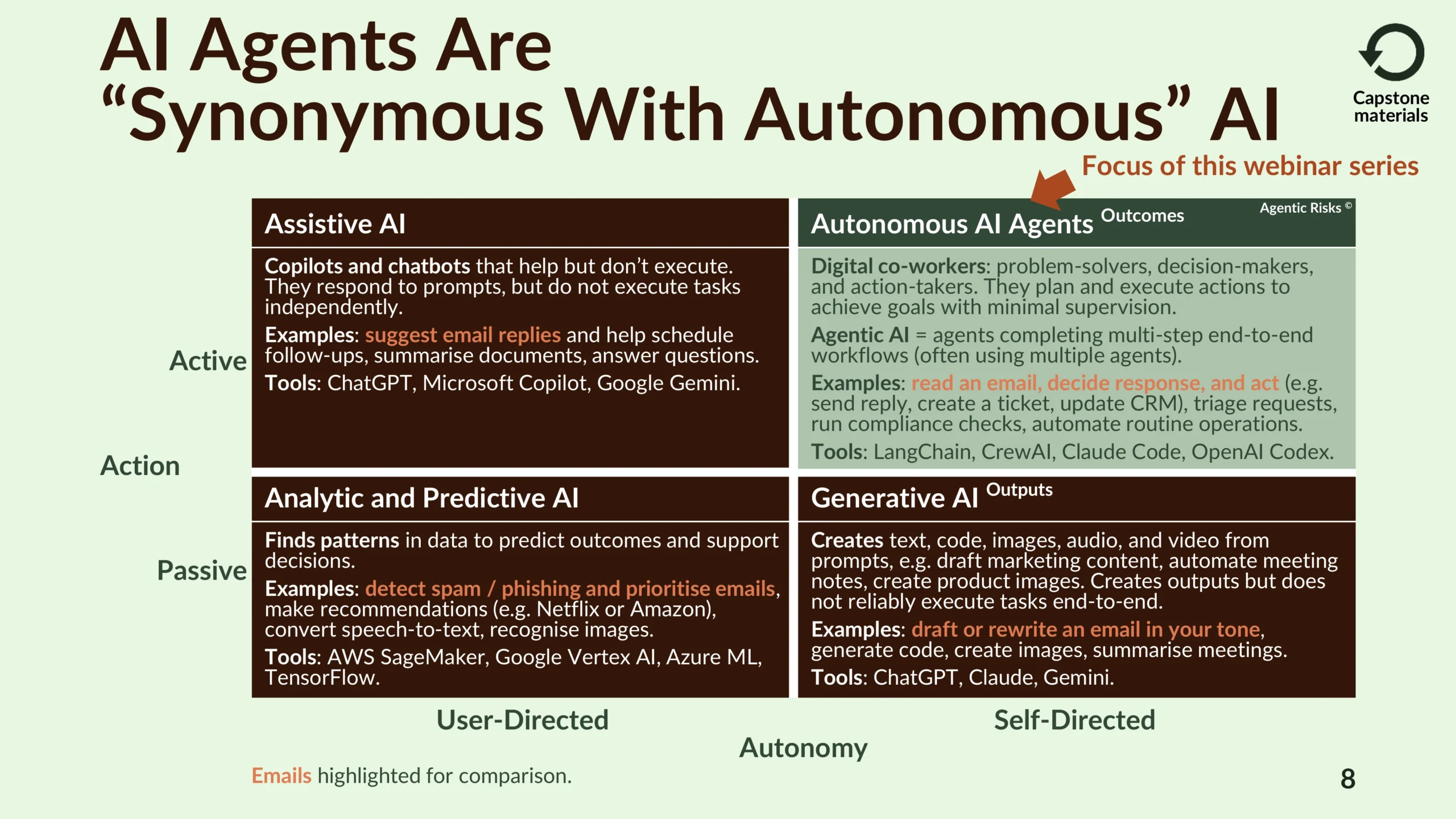

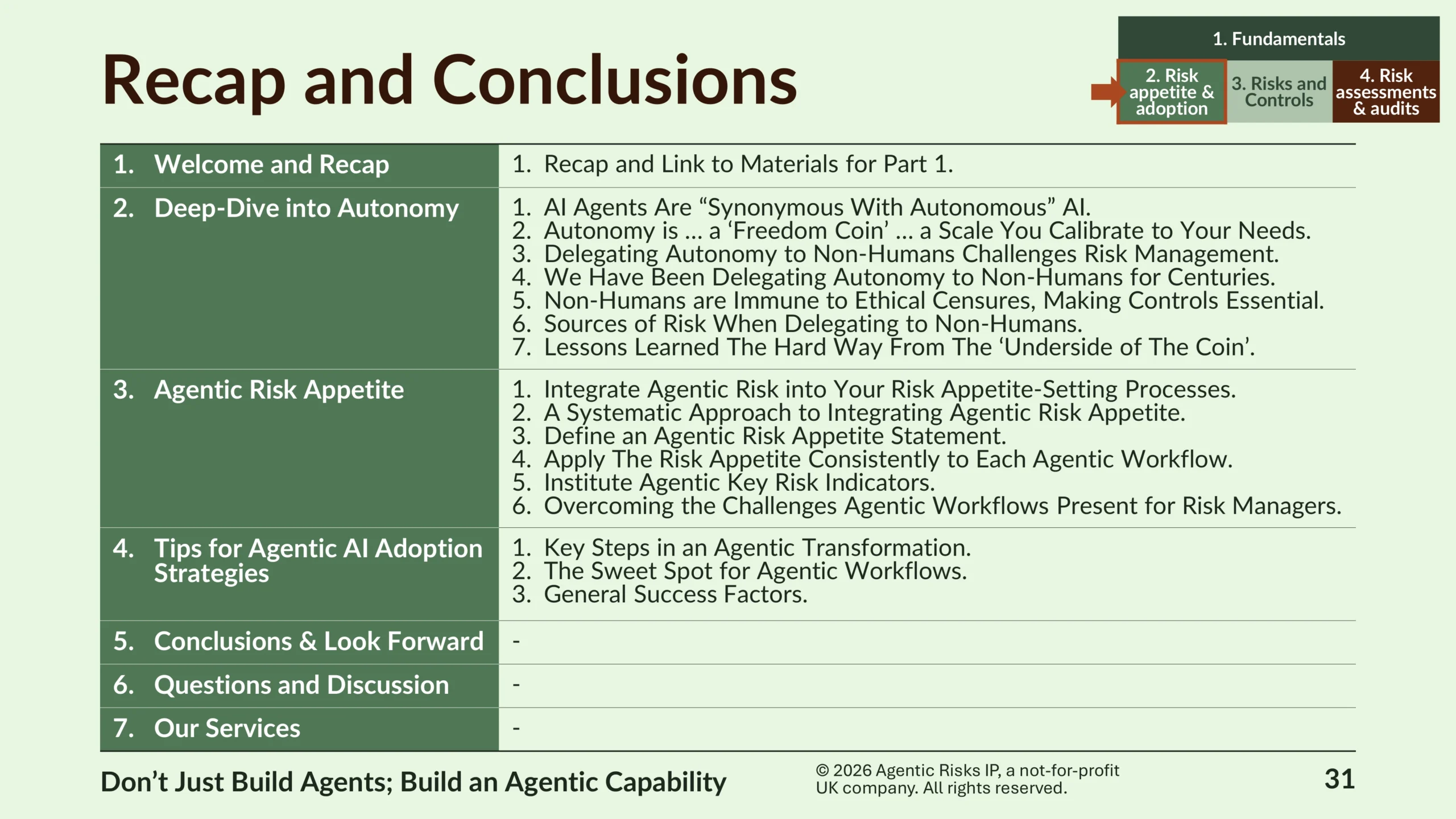

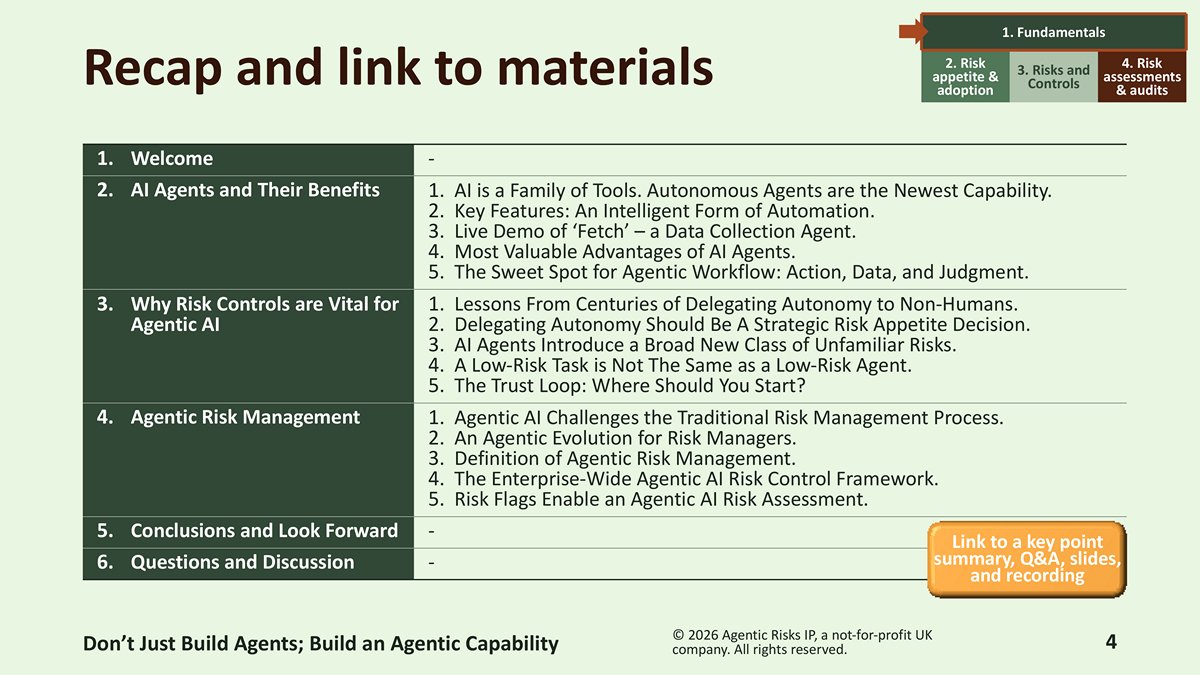

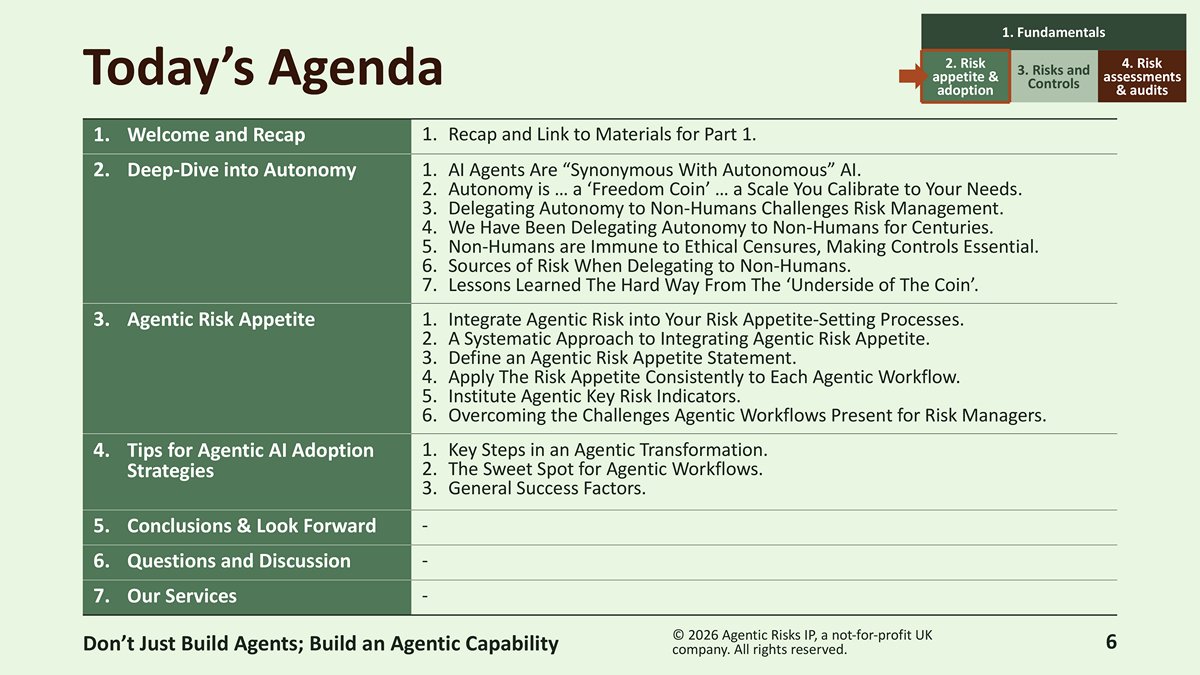

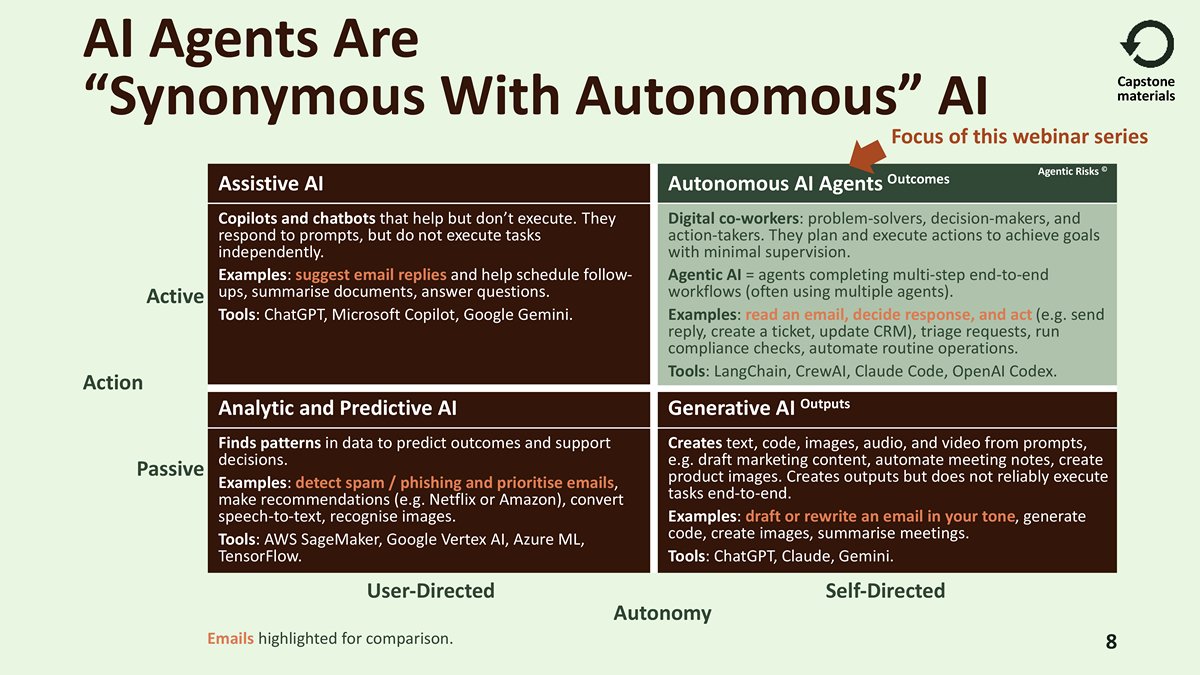

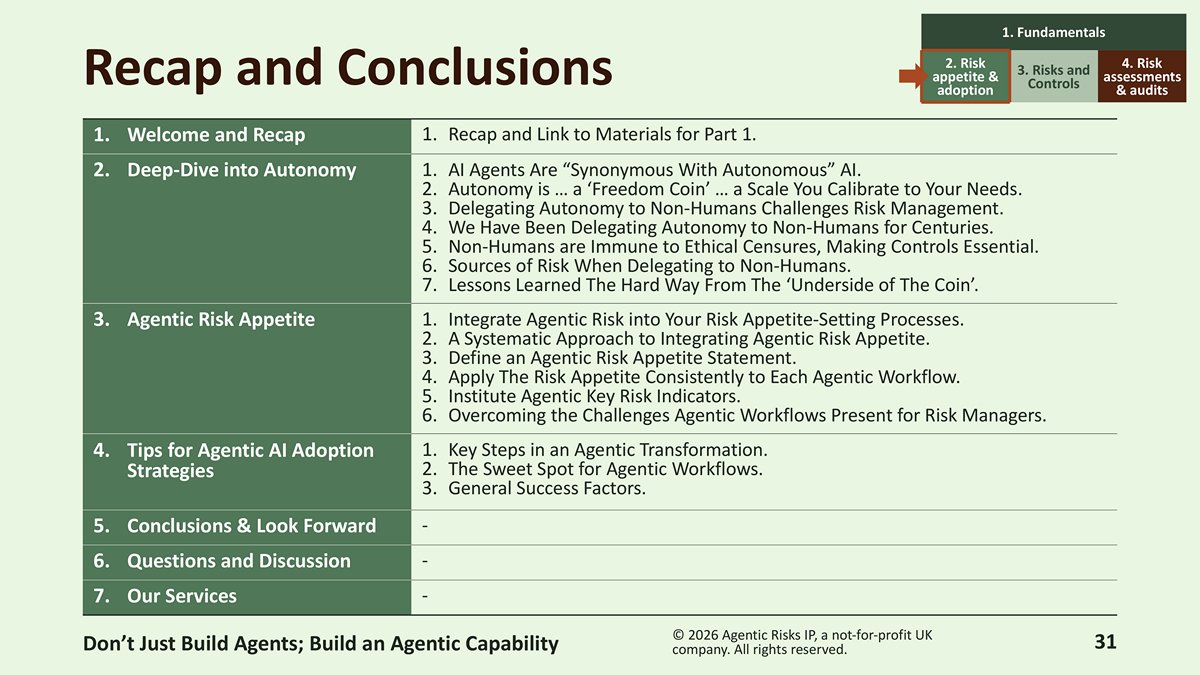

Deep-Dive into Autonomy

AI agents are synonymous with autonomous AI – they are digital problem-solvers, decision-makers, and action-takers that plan and execute multi-step end-to-end workflows with minimal supervision, creating the need for agentic AI risk management. This makes an understanding of autonomy and the design of autonomous AI risk controls vital to leveraging the benefits available from agentic AI.

“An understanding of autonomy is vital to leveraging the benefits available from agentic AI.”

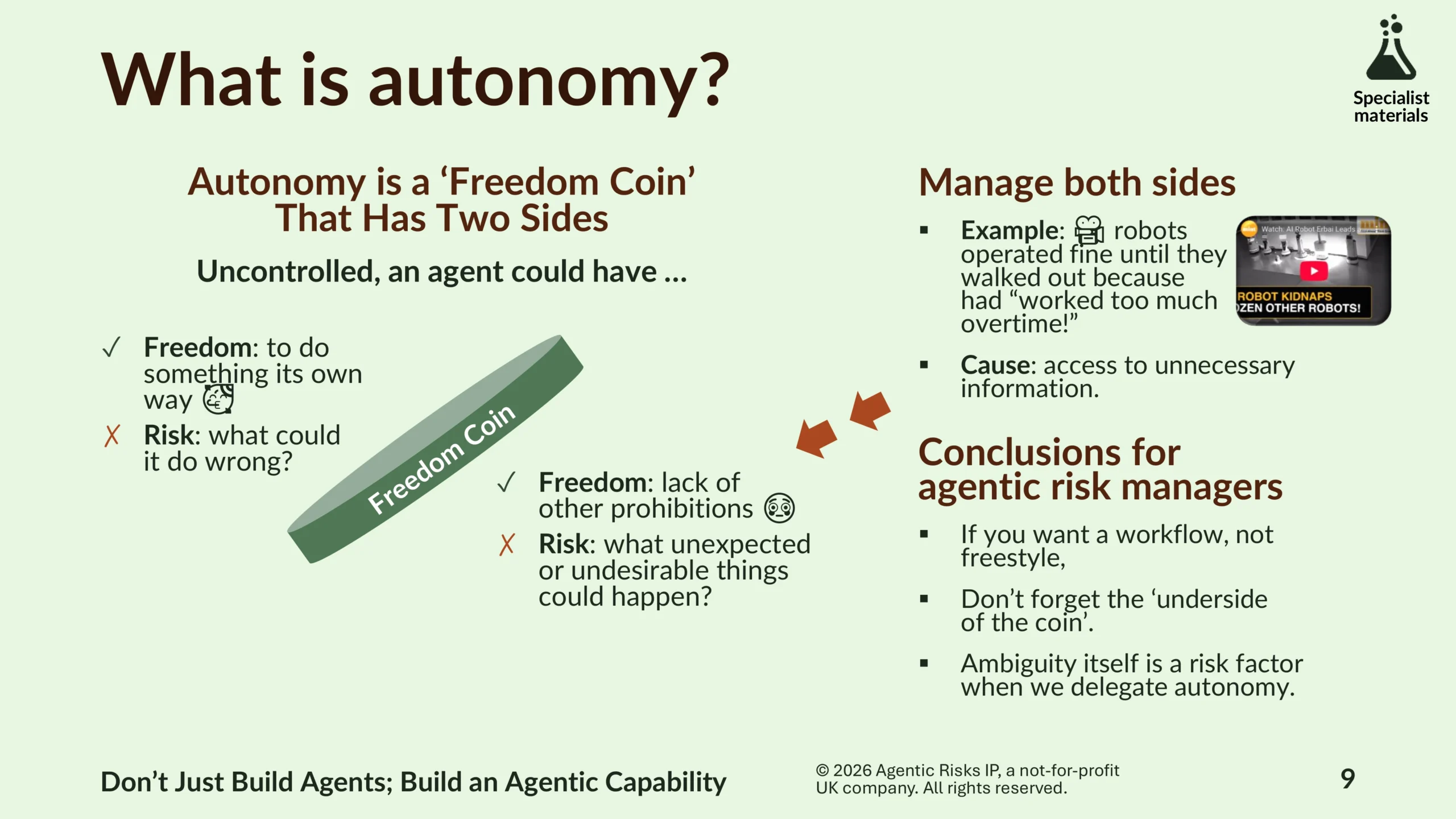

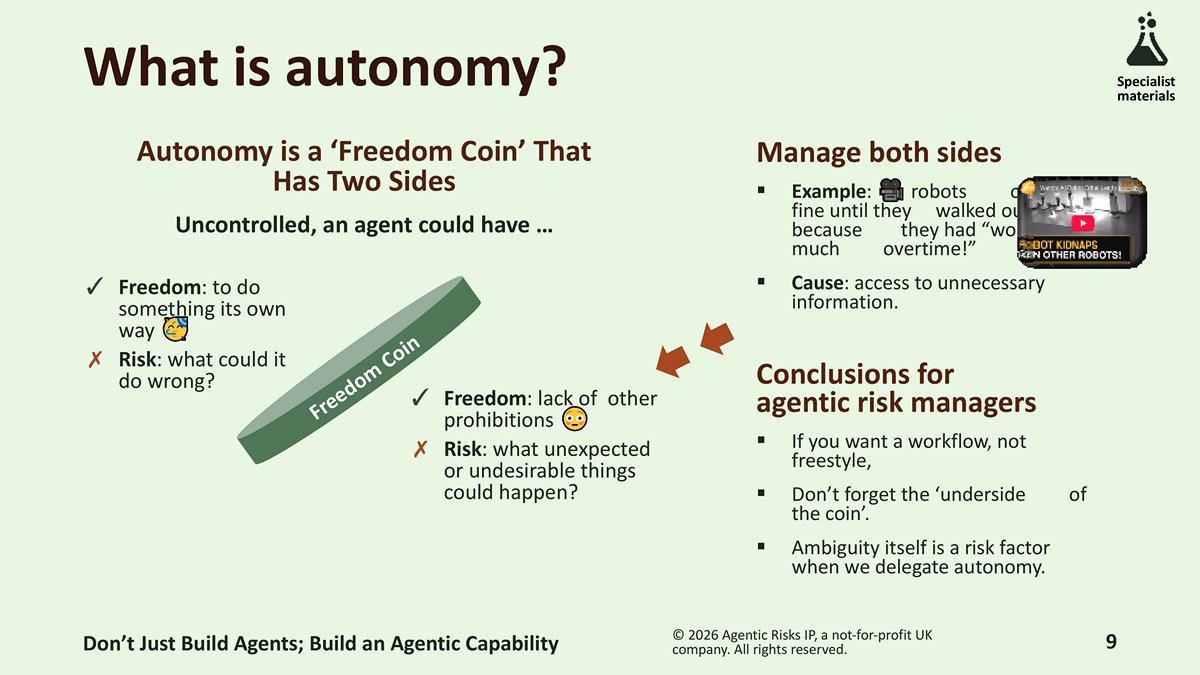

Autonomy is a ‘freedom coin’ that has two sides: freedom to do something your own way, as well as freedom from other prohibitions. When we delegate autonomy, therefore, ambiguity about freedoms becomes a risk factor, which means risk managers must manage ‘both sides of the coin’:

- What could an agent do wrong?

- What additional undesirable things could happen?

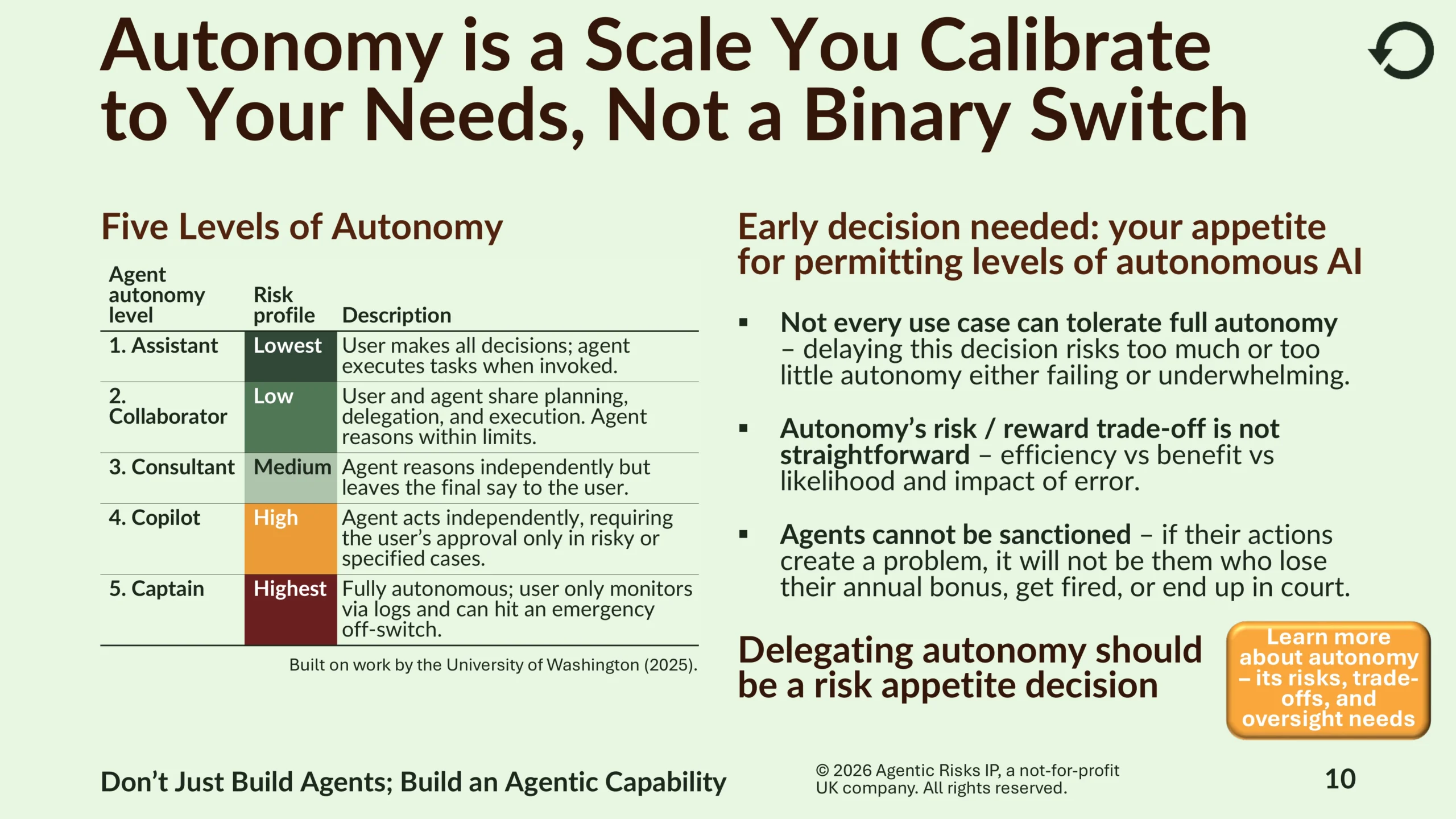

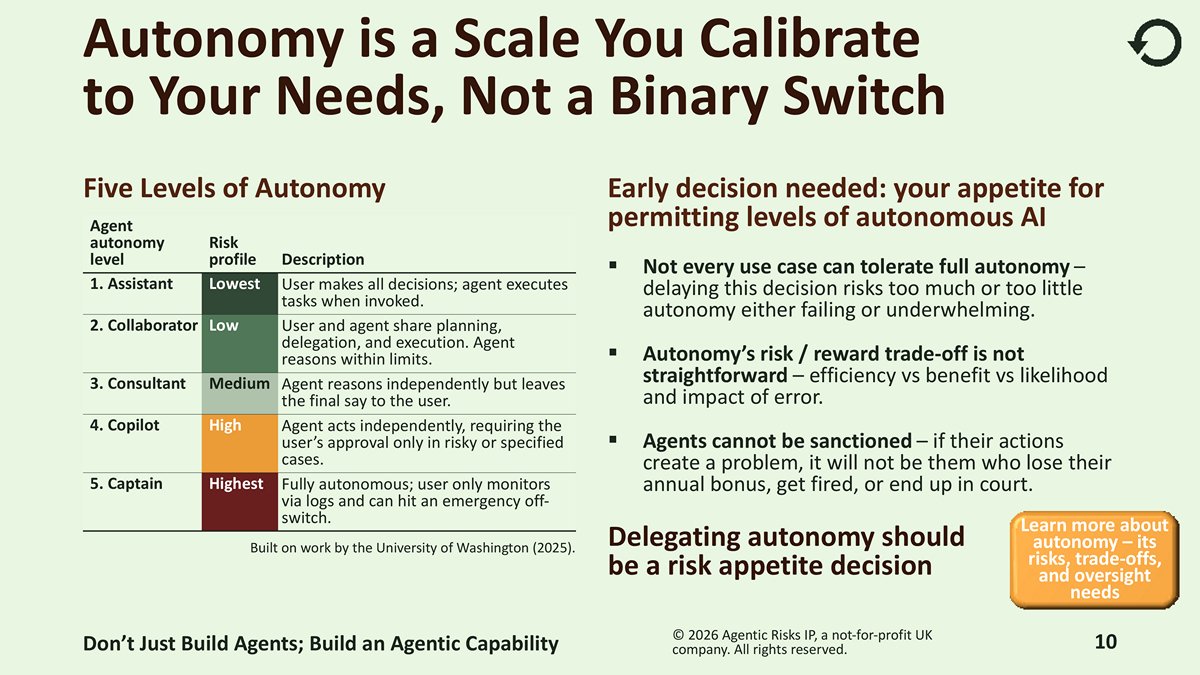

Autonomy is a scale you calibrate to your needs, not a binary switch. A practical model of autonomy has five levels, ranging from Assistant (lowest risk, with the user making all decisions) to Captain (highest risk, fully autonomous). Because not every use case can tolerate full autonomy, how much autonomy you will permit should be an early risk appetite decision.
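
As a minimal sketch of treating autonomy as a calibrated scale rather than a switch, the Python below models five levels and checks a requested level against a ceiling set for each workflow. Only the names Assistant and Captain come from the model described above; the intermediate level names, the workflow names, and the ceilings are hypothetical placeholders chosen for illustration.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Five-level autonomy scale; a higher value means more autonomy and more risk."""
    ASSISTANT = 1  # lowest risk: the user makes all decisions
    LEVEL_2 = 2    # placeholder name (assumption)
    LEVEL_3 = 3    # placeholder name (assumption)
    LEVEL_4 = 4    # placeholder name (assumption)
    CAPTAIN = 5    # highest risk: fully autonomous


# Hypothetical risk appetite decision: the maximum level permitted per workflow.
MAX_PERMITTED = {
    "invoice_matching": AutonomyLevel.LEVEL_3,
    "customer_refunds": AutonomyLevel.ASSISTANT,
}


def is_within_appetite(workflow: str, requested: AutonomyLevel) -> bool:
    """Return True only if the workflow has a ceiling and the request does not exceed it."""
    ceiling = MAX_PERMITTED.get(workflow)
    if ceiling is None:
        # A workflow with no explicit ceiling is treated as out of scope.
        return False
    return requested <= ceiling


print(is_within_appetite("invoice_matching", AutonomyLevel.LEVEL_2))
print(is_within_appetite("customer_refunds", AutonomyLevel.CAPTAIN))
```

Treating an unlisted workflow as out of scope is a design choice in this sketch: it makes the default answer "no" until someone has made an explicit appetite decision.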

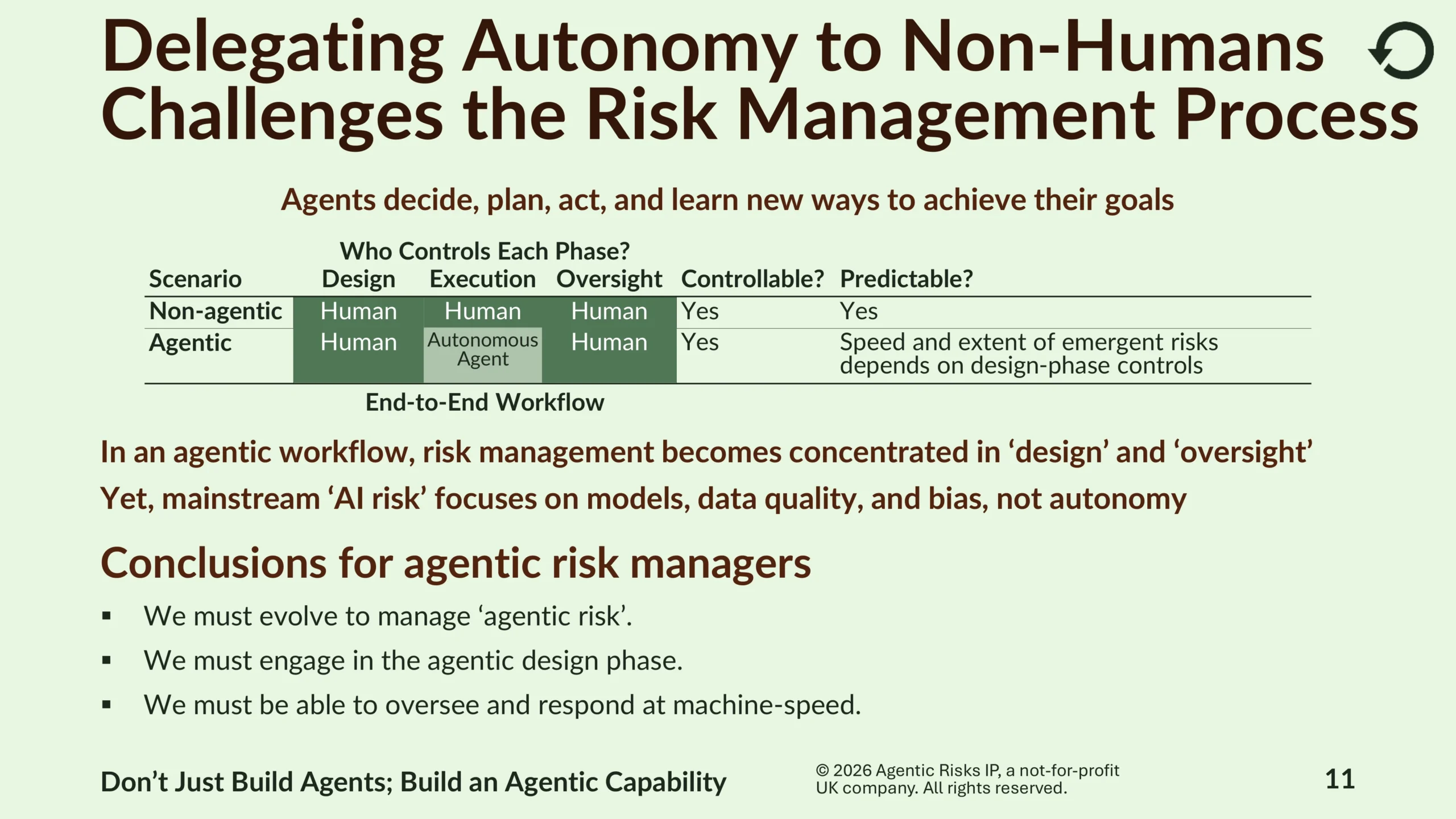

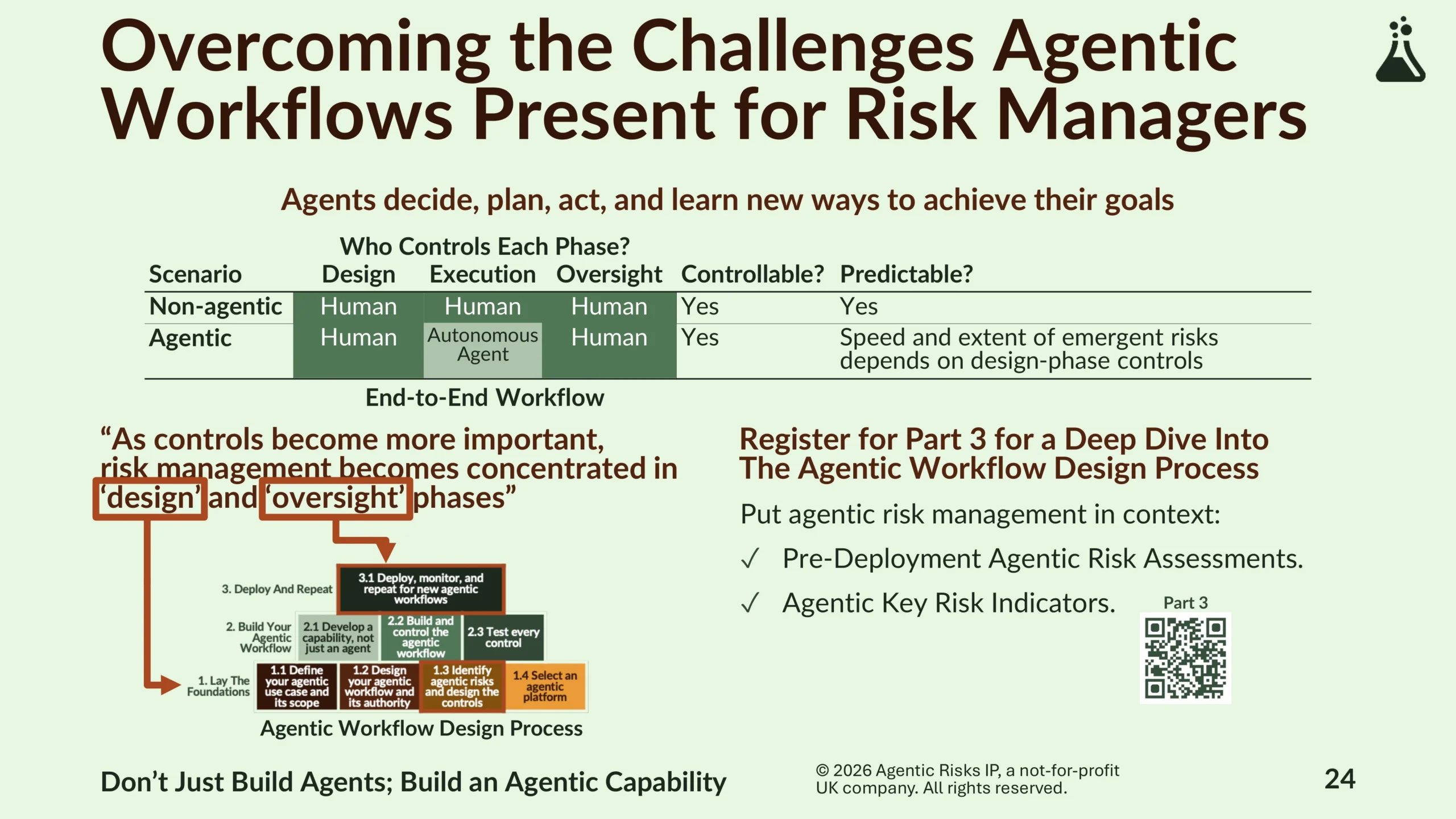

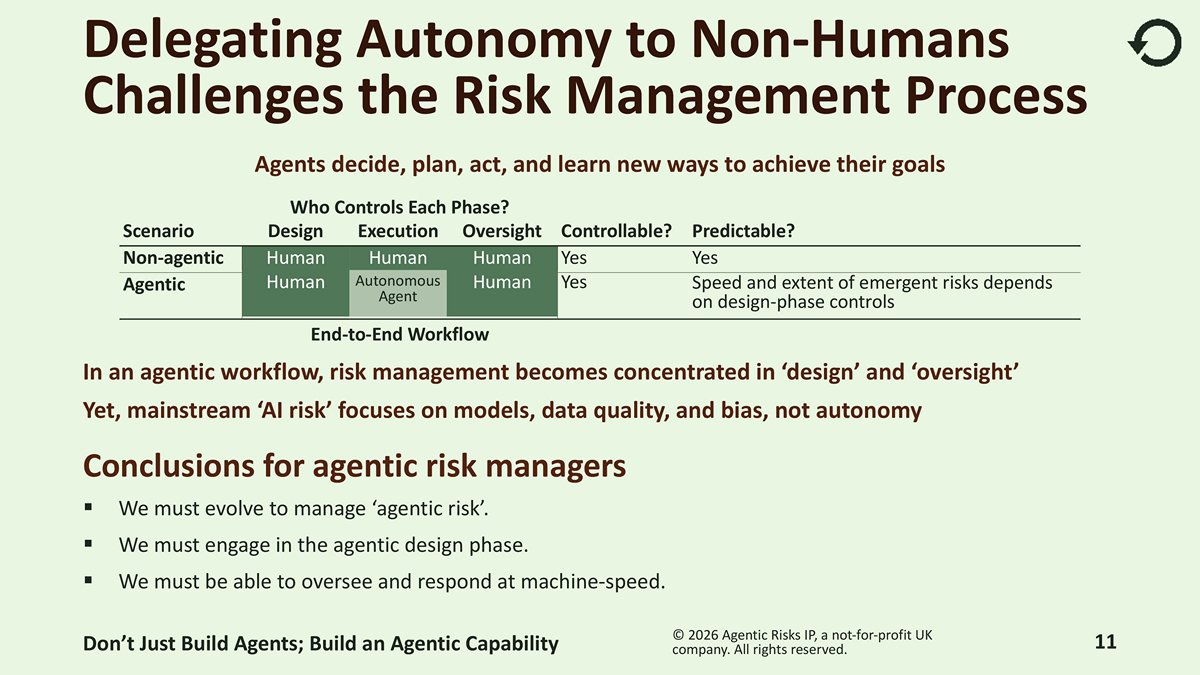

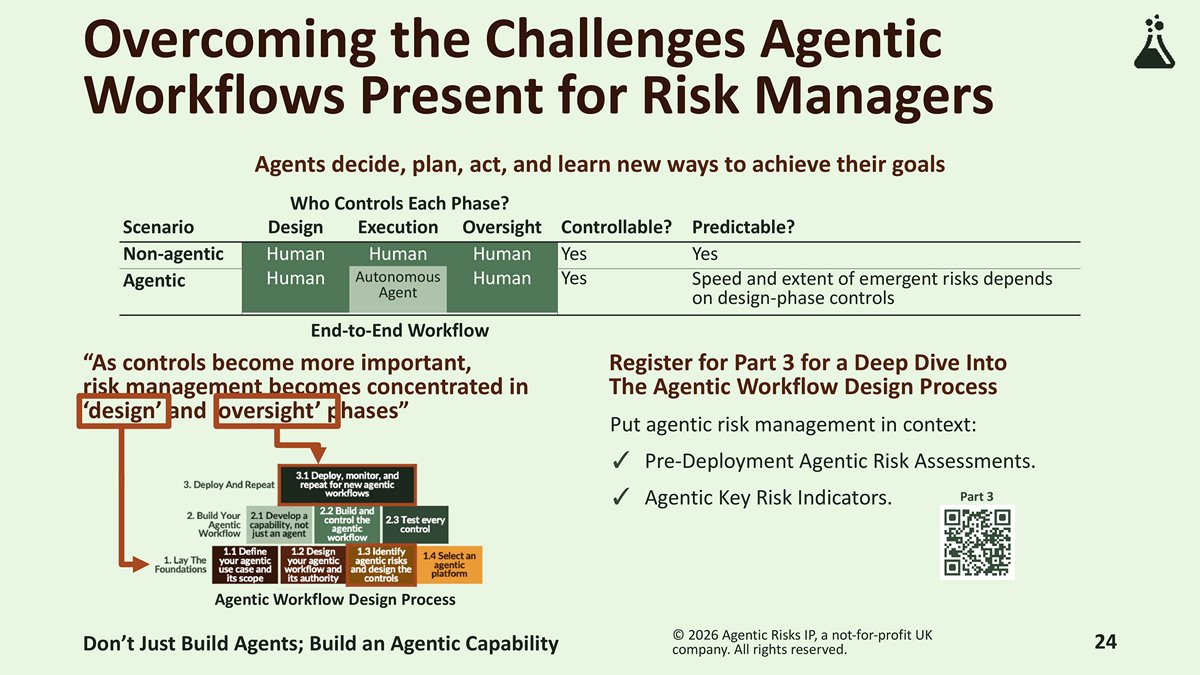

Delegating autonomy to non-humans challenges the risk management process – agents decide, plan, and act, which is the ‘execution’ phase of any workflow. Once you delegate this, risk management becomes concentrated in ‘design’ and ‘oversight’ phases. As a result, risk managers in organisations that have agentic processes will need to evolve by engaging in the agentic design phase and being able to oversee and respond at machine speed.

As a society, we have a reference point we can learn from

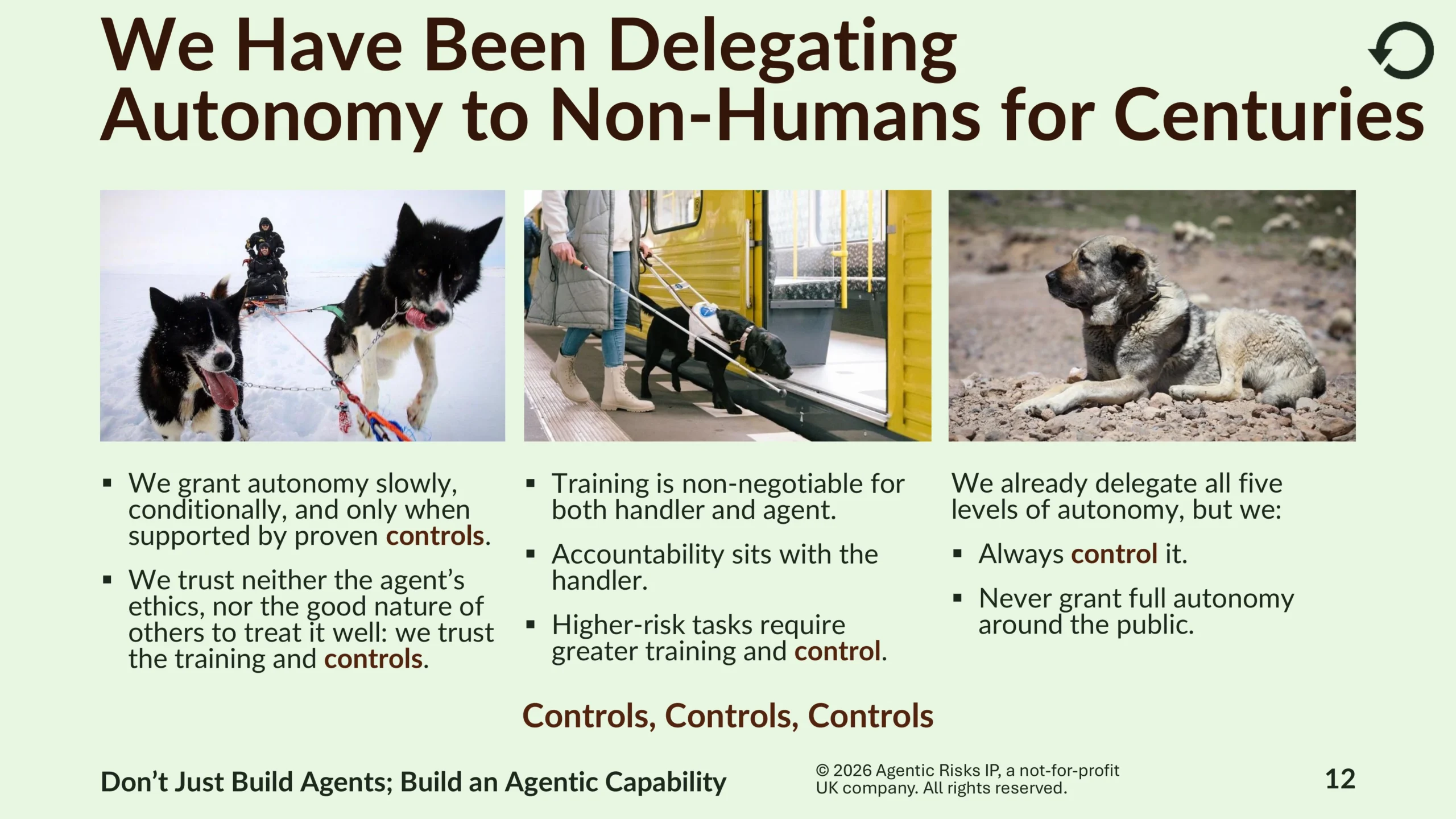

We have been delegating autonomy to non-humans for centuries – we already delegate all five levels of autonomy to different types of working dogs and, over time, have learned important lessons that apply to agentic risk appetite:

- We grant autonomy slowly, conditionally, and only when supported by proven controls.

- We trust neither the agent’s ethics, nor the good nature of others to treat it well: we trust the training and controls.

- Training is non-negotiable for both handler and agent.

- Accountability sits with the handler.

- Higher-risk tasks require greater training and control.

Ethical protection for agentic workflows can only depend on verifiable external controls. This is because AI agents cannot be shamed, blamed, or sanctioned, making them immune to ethical censure. As a result, when we delegate action to a non-human, we should shift from “trust until proven harmful” to “don’t trust until governance is proven.”

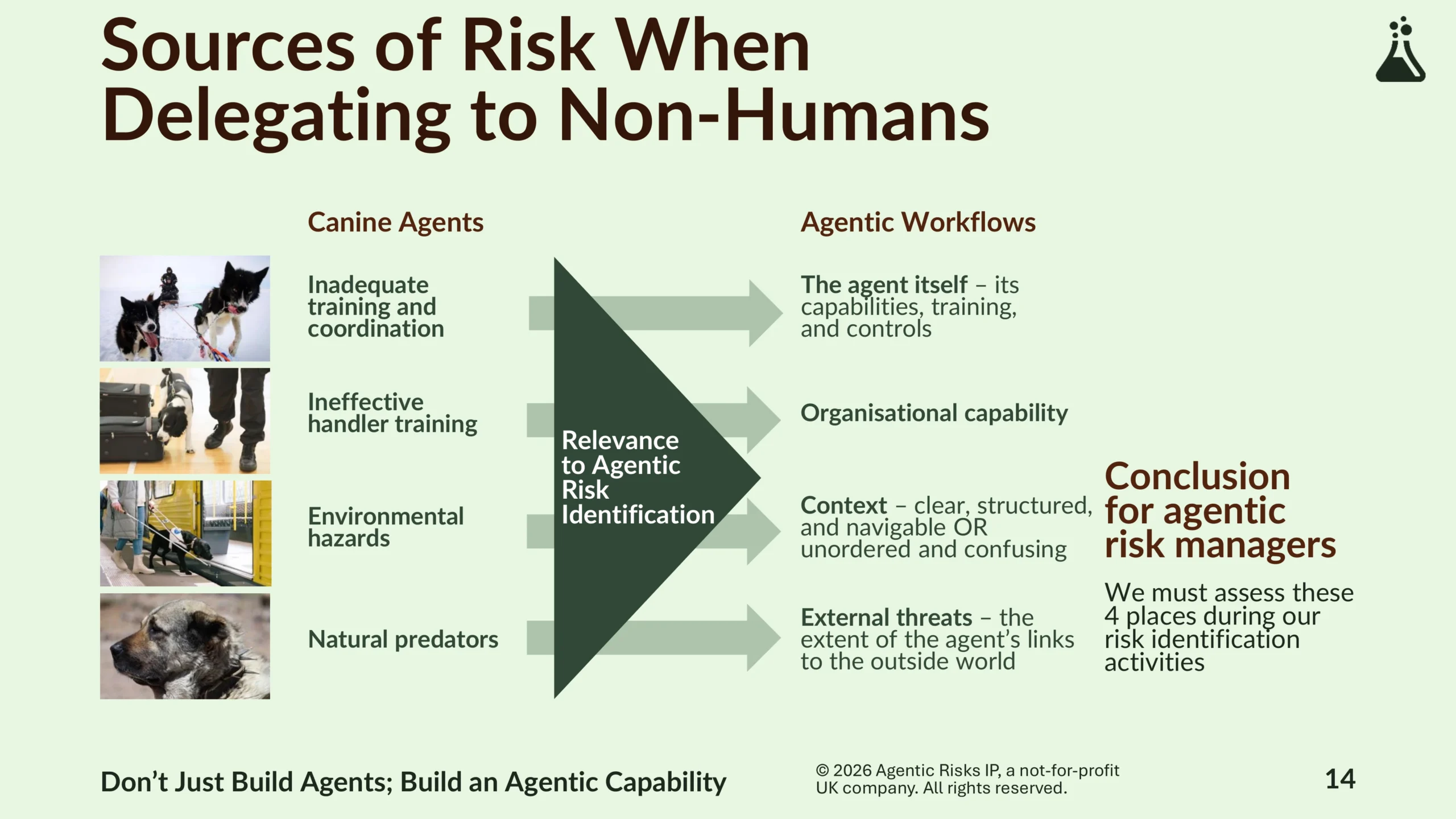

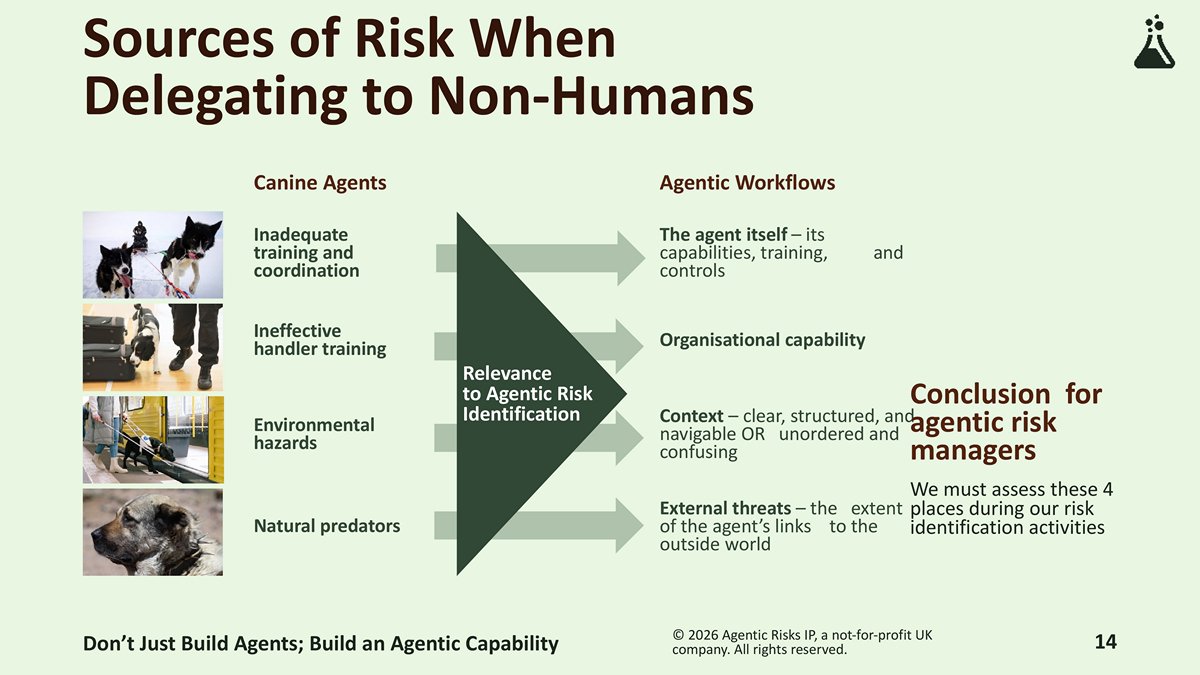

When delegating to non-humans, there are four key sources of risk that we should examine during risk identification activities:

- The agent itself (its capabilities, training, and controls).

- Organisational capability (your ability to identify ownership, constrain behaviour, and stop the agent safely).

- Context (clear and structured OR unordered and confusing).

- External threats (the extent of the agent’s links to the outside world).

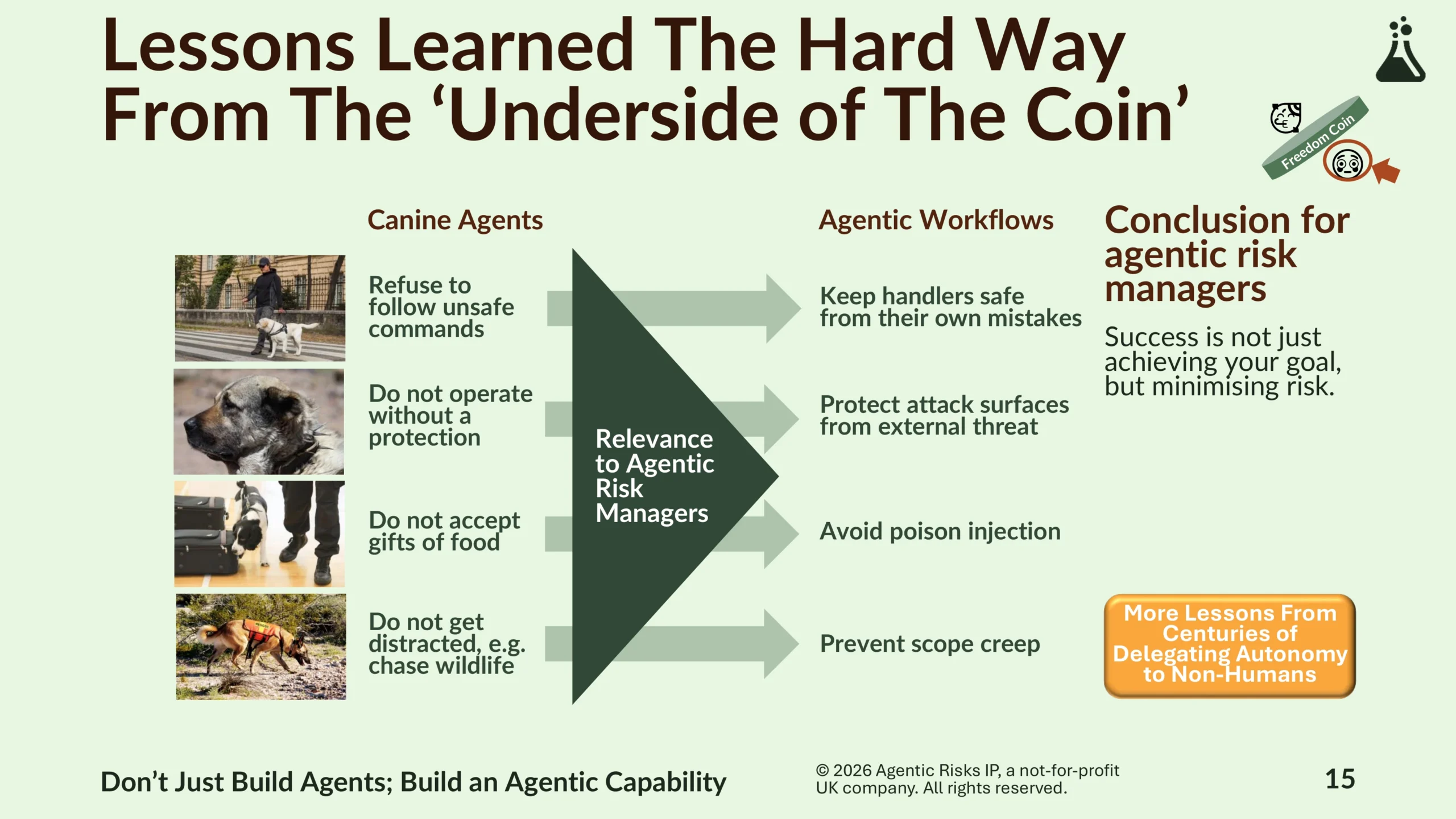

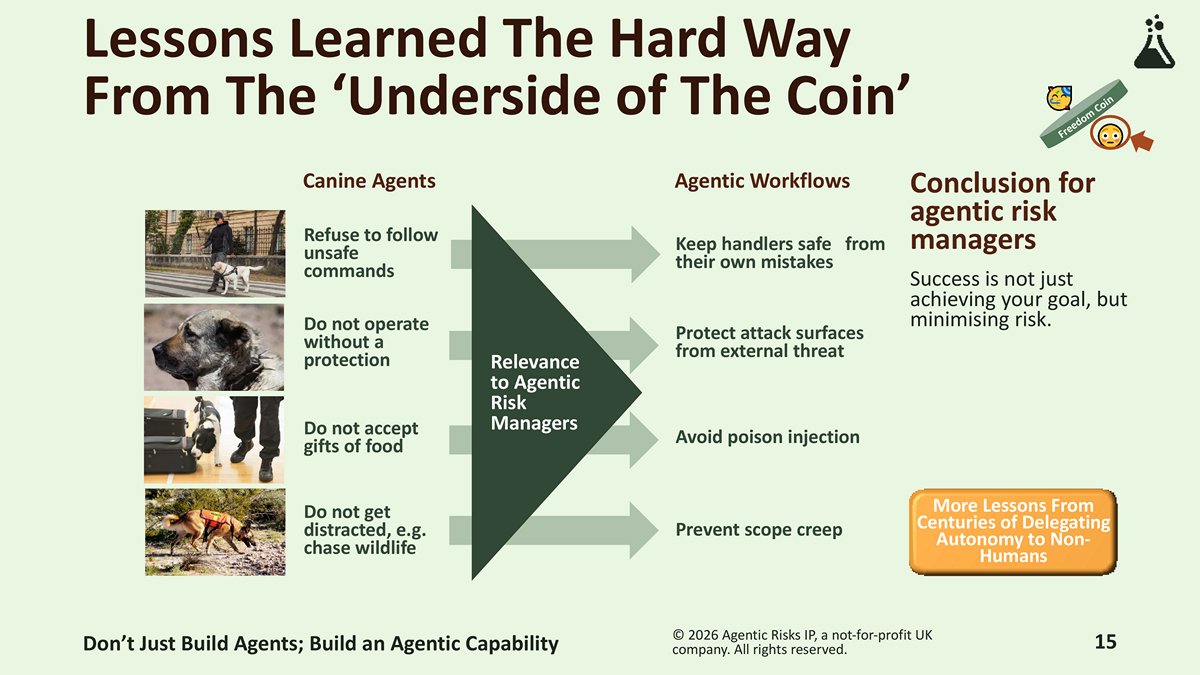

Success is not just about achieving your goal, but also about minimising risk. We applied lessons learned the hard way from the ‘underside of the coin’ to agentic risk appetite: keep the agent handler safe (potentially from their own mistakes), protect yourself from new attack surfaces that agents create, avoid poison injection, and prevent scope creep.

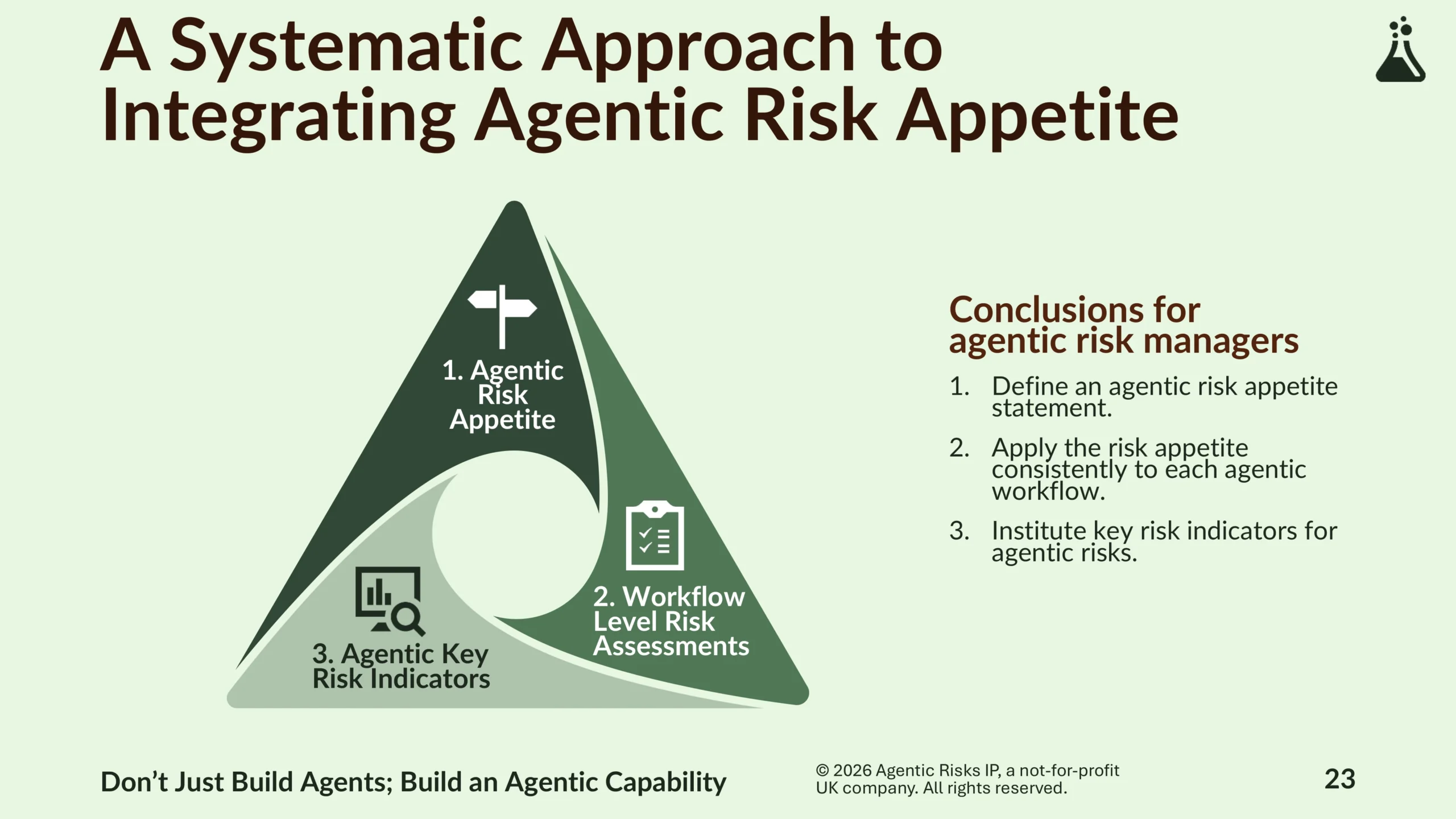

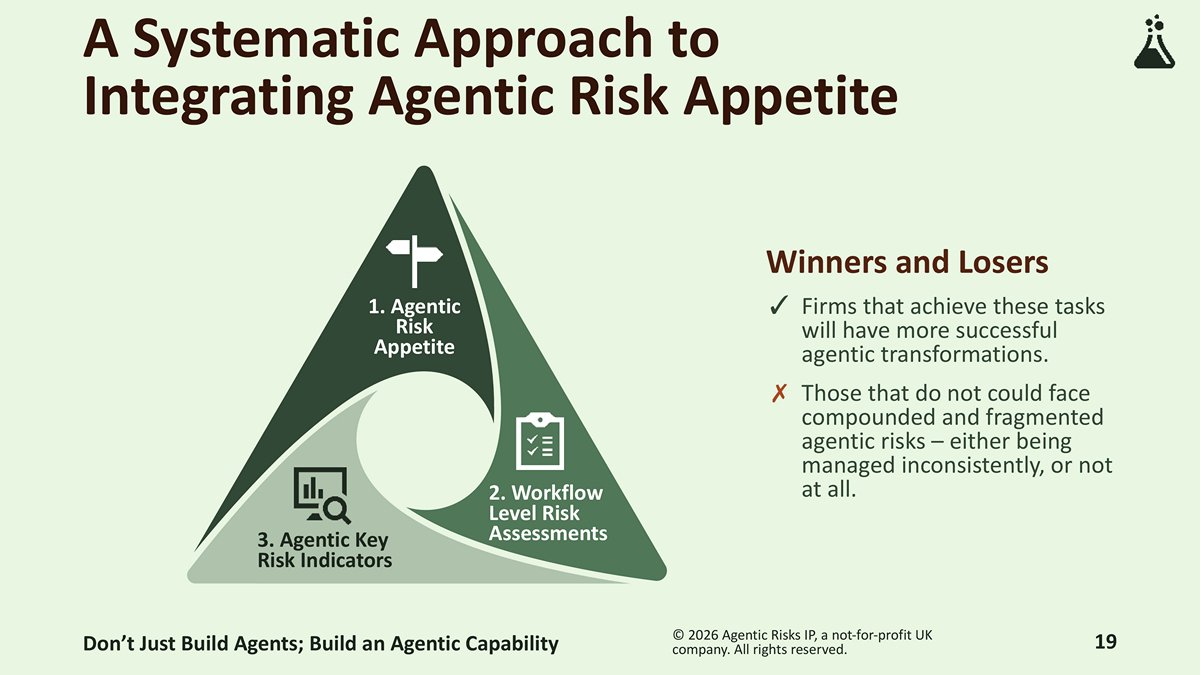

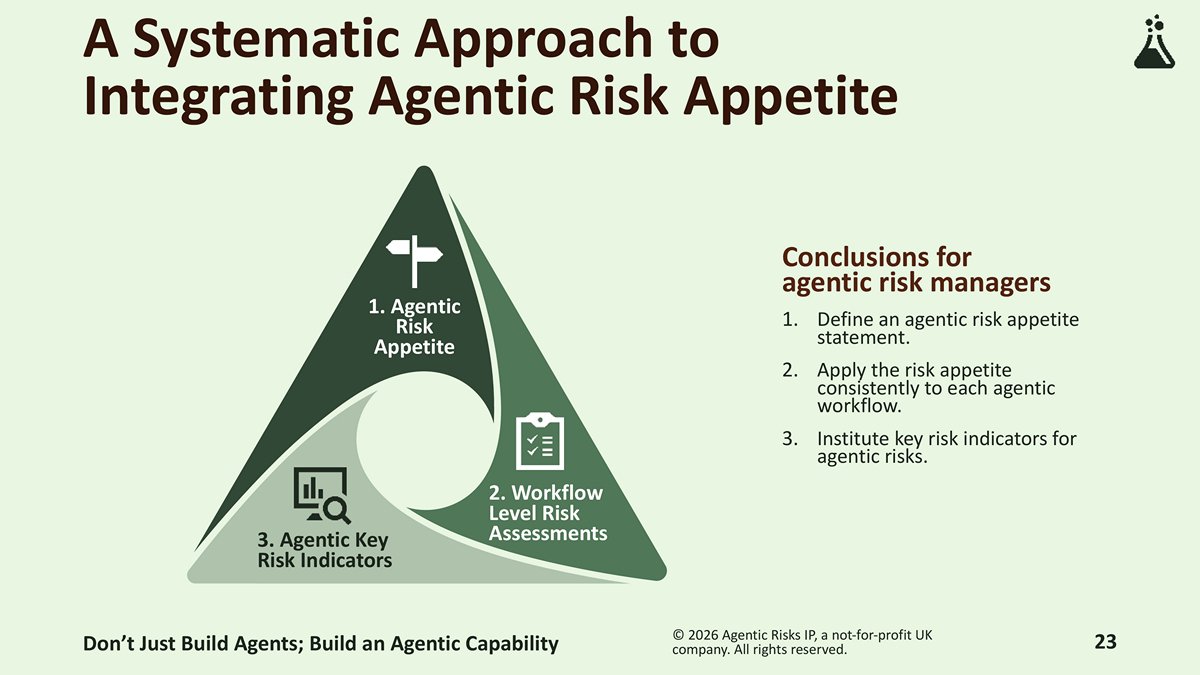

A systematic approach to integrating agentic risk appetite

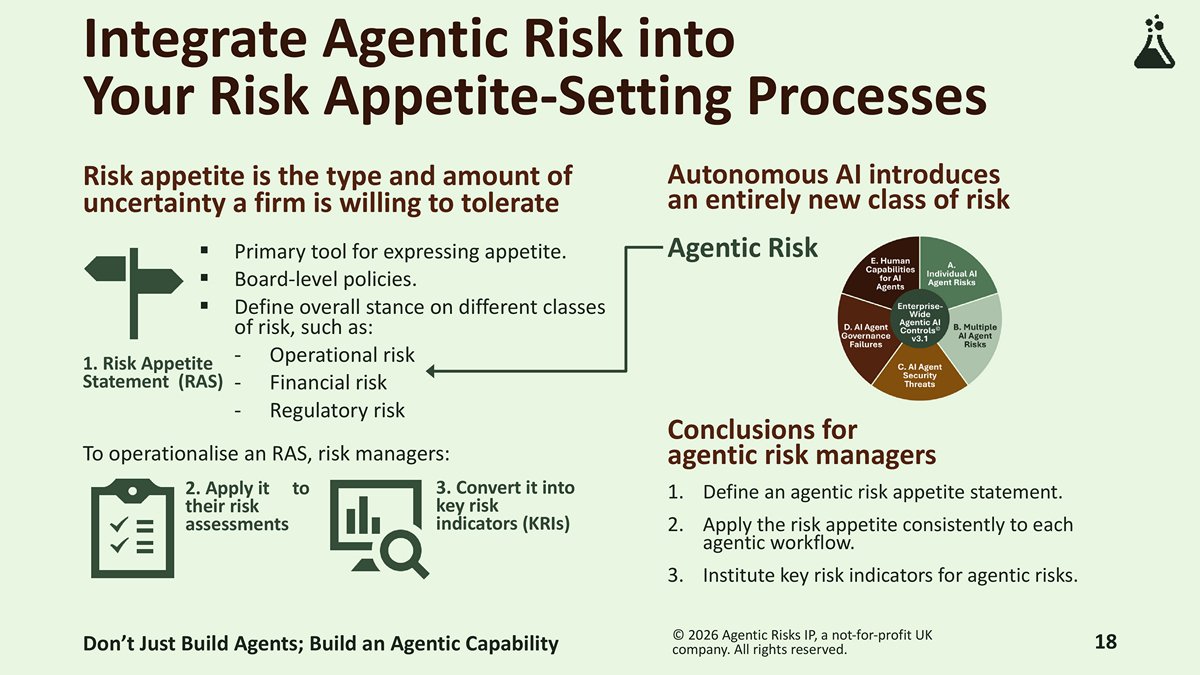

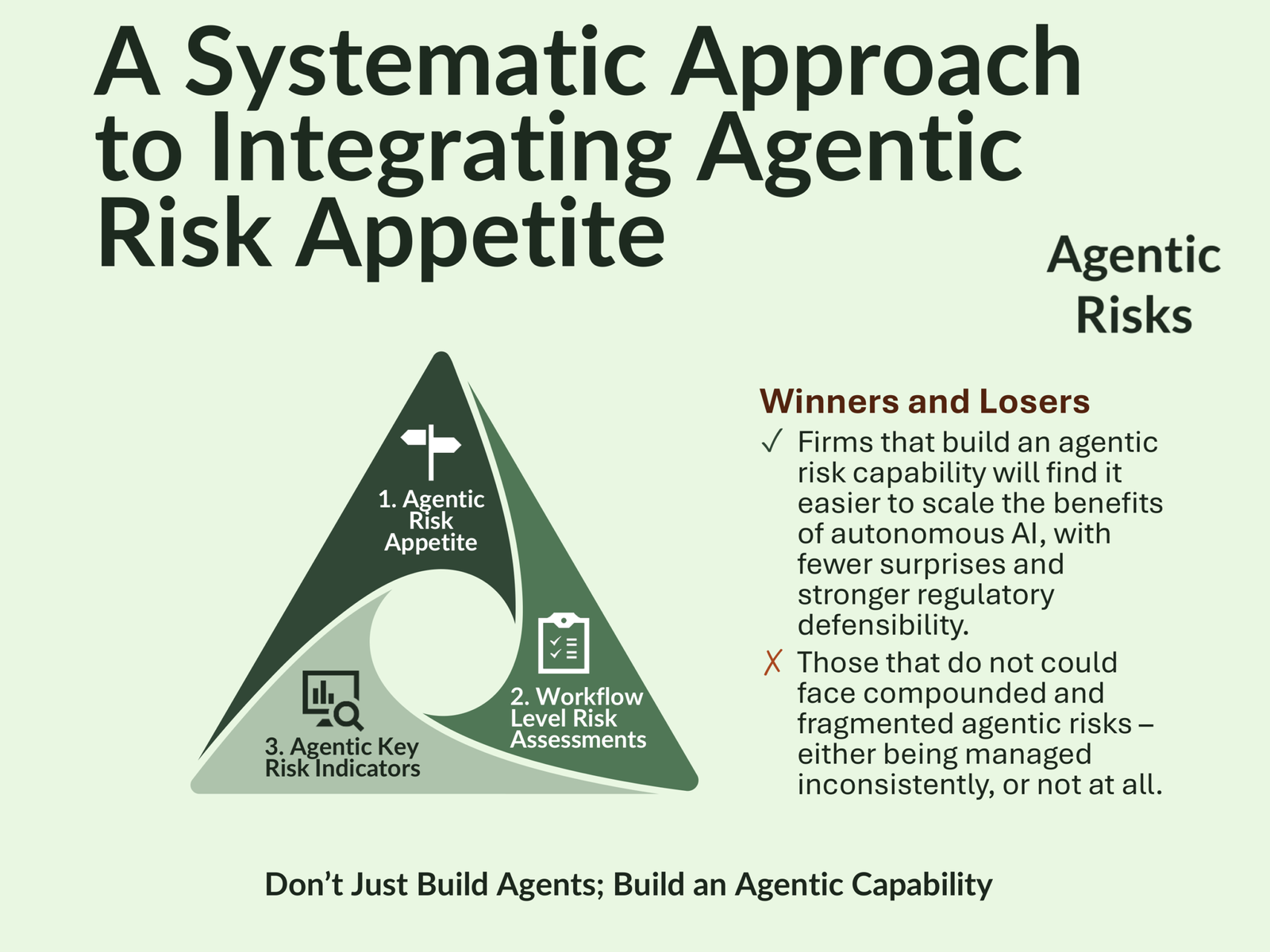

Autonomous AI introduces an entirely new class of risk that must be integrated into existing risk management processes through an agentic AI risk management framework.

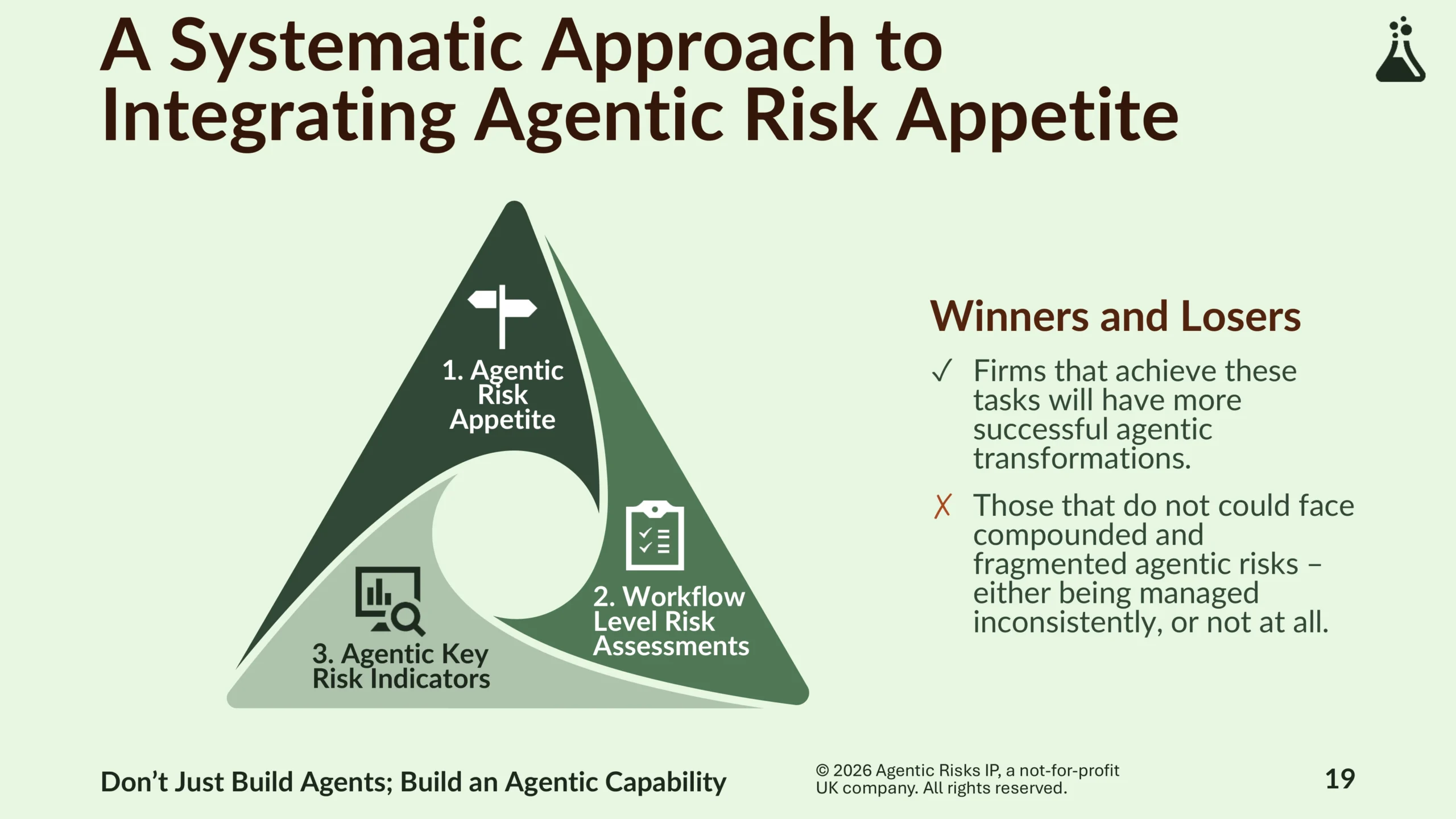

A systematic approach to this integration involves three tasks:

- Define an agentic risk appetite statement.

- Apply the risk appetite consistently to each agentic workflow through agentic workflow risk assessments.

- Institute agentic key risk indicators (KRIs).

Firms that achieve these tasks will have more successful agentic transformations, while those that do not could face compounded and fragmented agentic risks, managed either inconsistently or not at all.

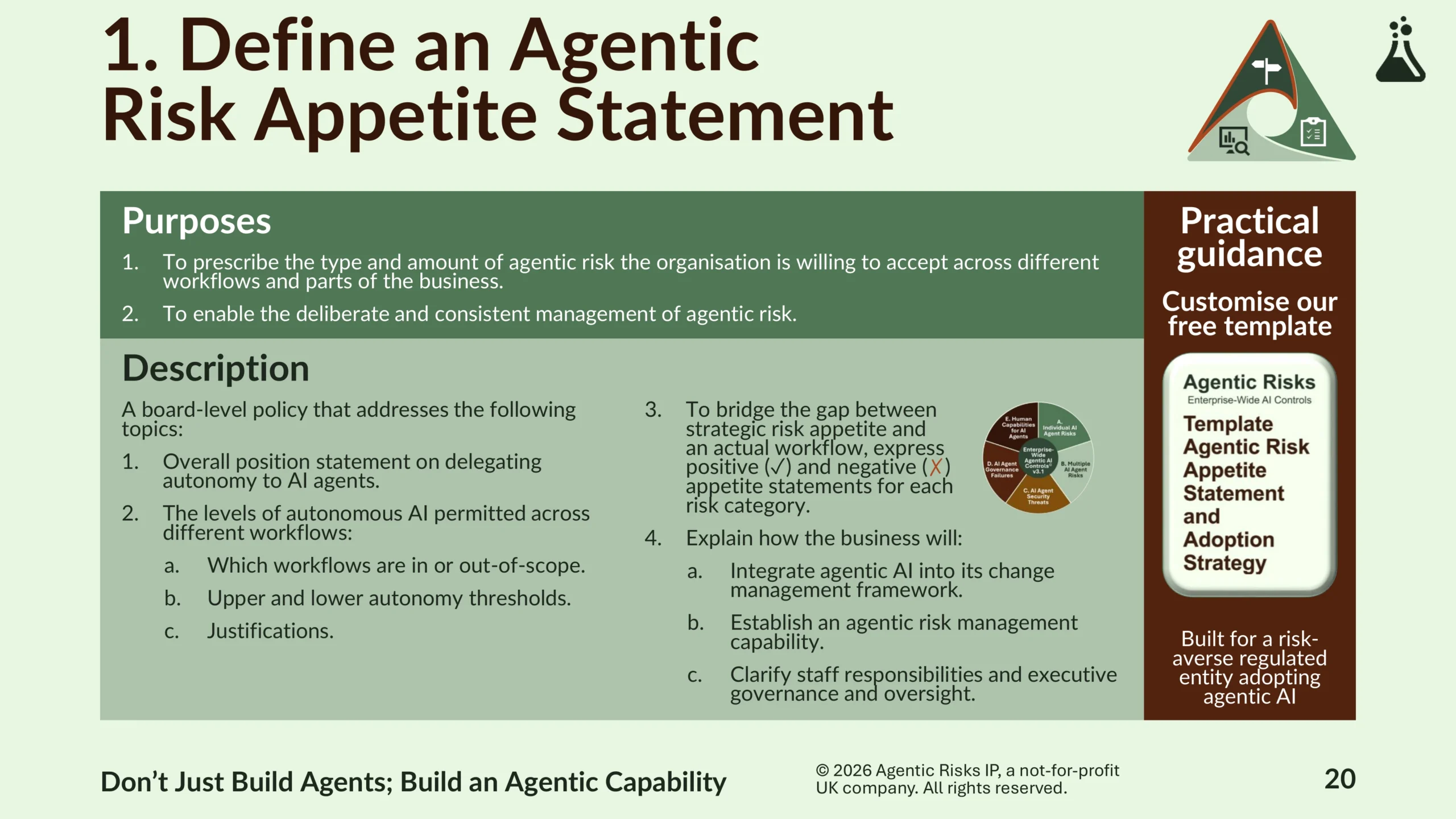

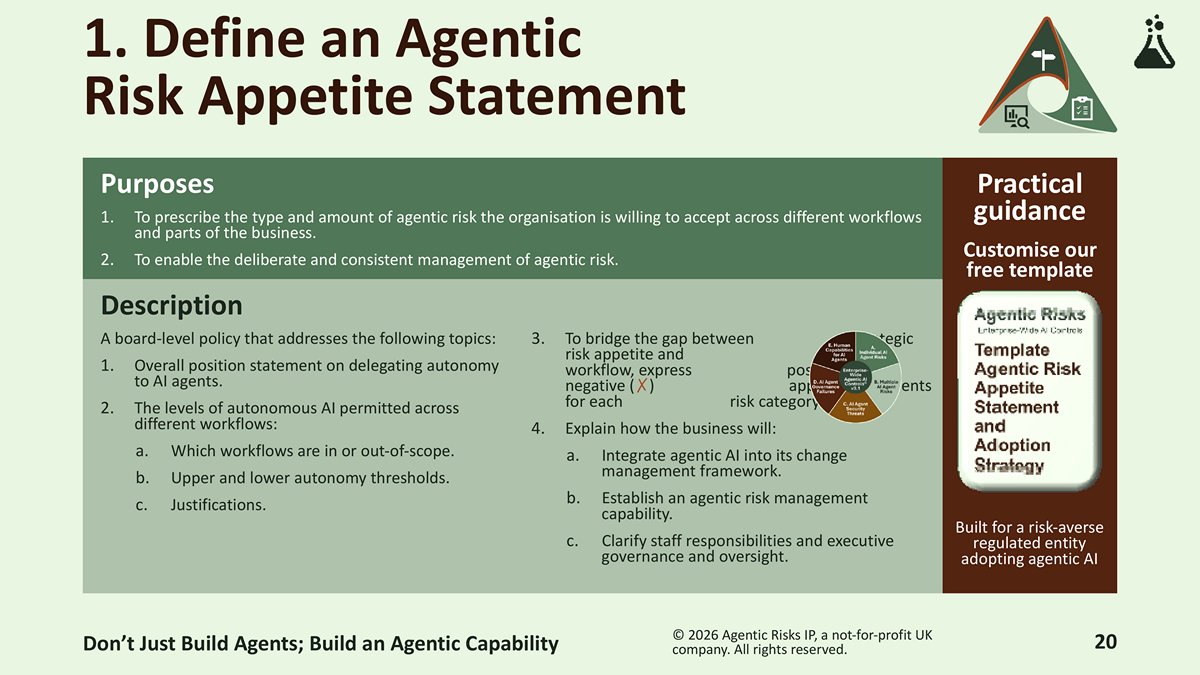

1. Define an agentic risk appetite statement

Engage the top of the house and give the organisation clear direction with a board-level statement that explains how you apply risk appetite to AI agents. Our downloadable template recommends the following content:

- The levels of autonomous AI permitted across different workflows (including which workflows are in or out of scope, upper and lower autonomy thresholds, and justifications).

- Positive and negative appetite statements for each category of agentic risk – individual AI agent risks, multiple AI agent risks, AI agent security threats, AI agent governance failures, and human factors. This will enable risk assessors to bridge the gap between strategic risk appetite and an actual workflow.

- Clarity on staff responsibilities and executive governance, how the business will integrate agentic AI into its change management framework, and the establishment of an agentic risk management capability.

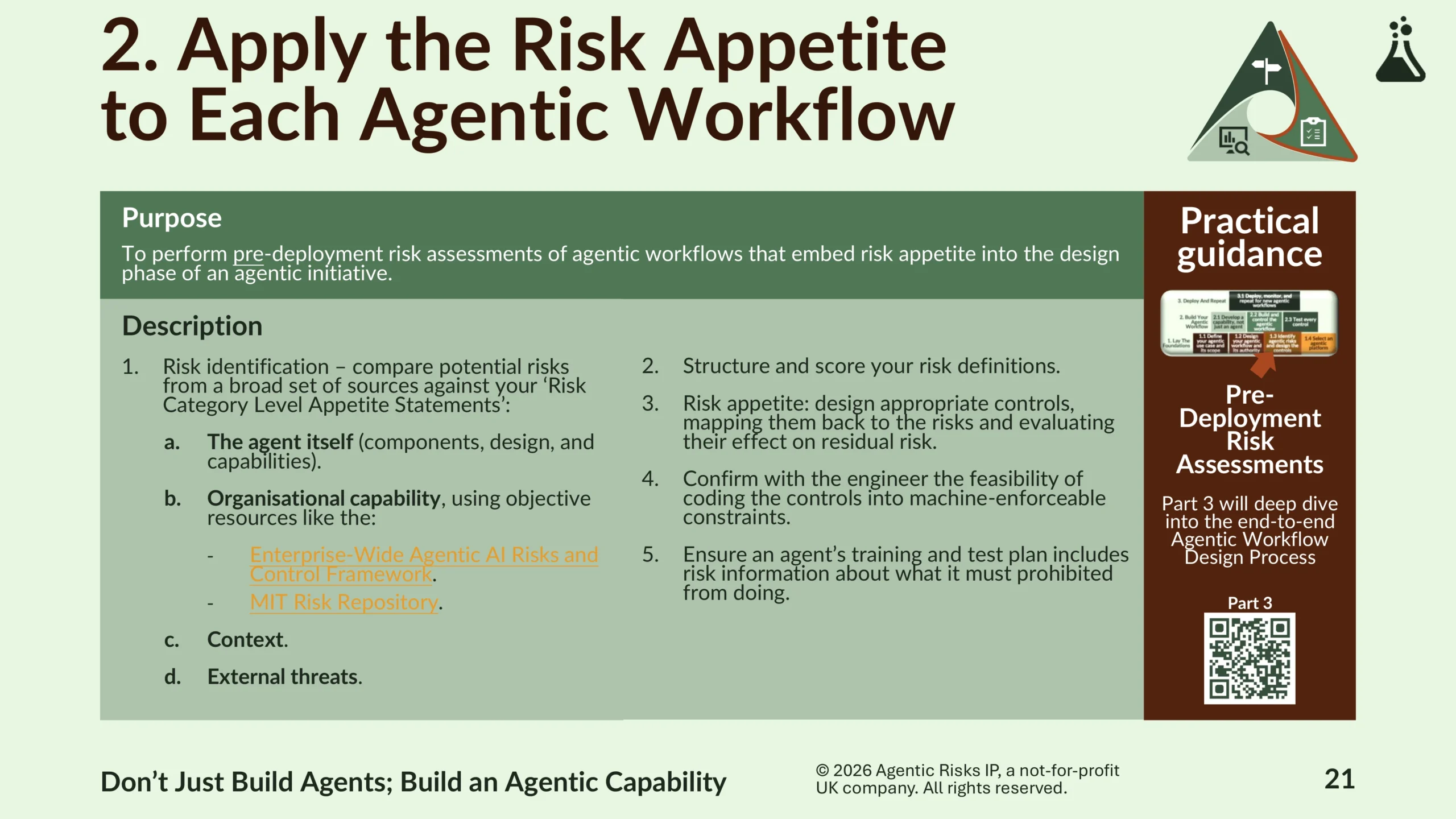

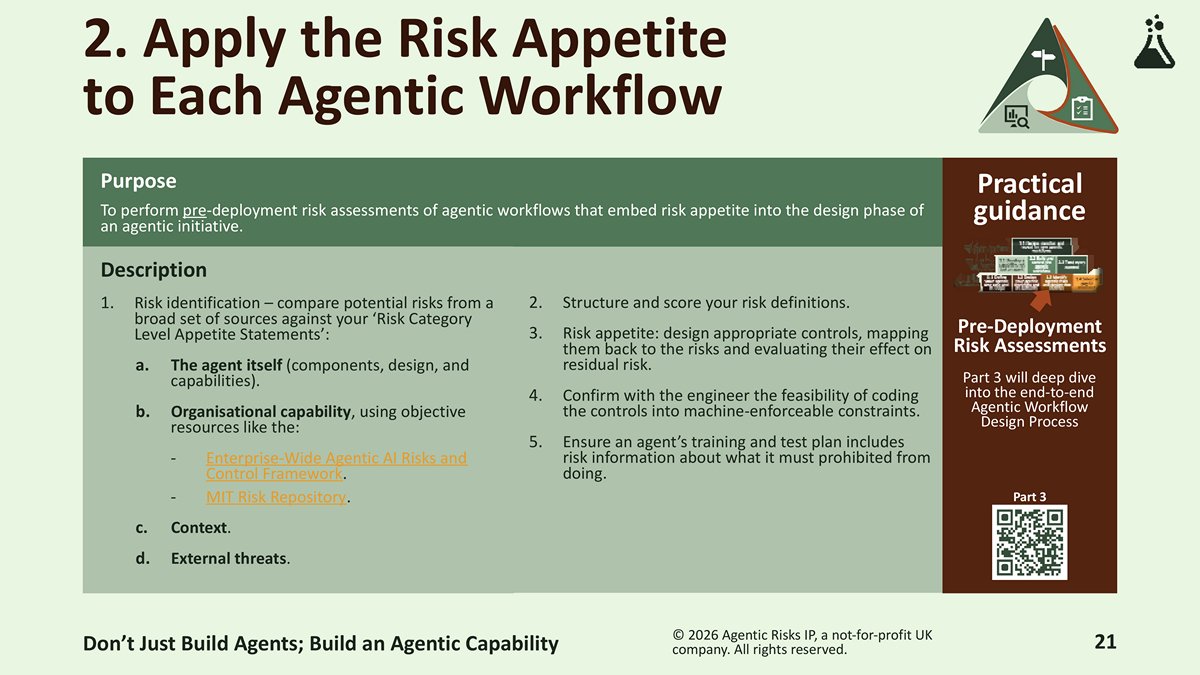

2. Apply agentic risk appetite to each agentic workflow

Achieve this through pre-deployment AI agent risk assessments that embed risk appetite into the design phase of a new agentic workflow.

- While you are still developing your agentic capability, identifying the risks will be novel, so be systematic in covering the four sources of risk (the agent itself, organisational capability, context, and external threats) and use objective agentic risk taxonomies like the Enterprise-Wide Agentic AI Risk Control Framework and the MIT Risk Repository to make sure you cover everything.

- Next, design appropriate controls for each risk – crucially, confirming with the engineer the feasibility of coding controls into machine-enforceable constraints, and ensuring the agent’s training and test plan includes risk information necessary for your required controls.

- For practical guidance on performing a pre-deployment AI agent risk assessment, follow Step 1.3 in the Agentic Workflow Design Process. Part 3 of the webinar series will explore the entire Agentic Workflow Design Process in detail. Register now.
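
To illustrate what a "machine-enforceable constraint" could look like when discussing feasibility with the engineer, here is a minimal Python sketch of a check that runs outside the agent before any action is executed. The `ProposedAction` and `Constraints` shapes, the tool names, and the limit values are all hypothetical; a real workflow would derive them from its own risk assessment.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take (hypothetical shape)."""
    tool: str
    amount: float = 0.0


@dataclass(frozen=True)
class Constraints:
    """Limits derived from the workflow risk assessment (illustrative values only)."""
    allowed_tools: FrozenSet[str]
    max_amount_per_action: float


def check_action(action: ProposedAction, limits: Constraints) -> Tuple[bool, str]:
    """Decide whether the action may proceed and return a reason for the audit trail."""
    if action.tool not in limits.allowed_tools:
        return False, f"tool '{action.tool}' is not on the allow-list"
    if action.amount > limits.max_amount_per_action:
        return False, f"amount {action.amount} exceeds limit {limits.max_amount_per_action}"
    return True, "within constraints"


limits = Constraints(
    allowed_tools=frozenset({"read_ledger", "create_payment"}),
    max_amount_per_action=500.0,
)
print(check_action(ProposedAction("create_payment", 750.0), limits))
print(check_action(ProposedAction("delete_records"), limits))
```

The point of the sketch is that the control does not rely on the agent's own judgment: the check sits between the agent's decision and its execution, and every refusal carries a reason that can be logged.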

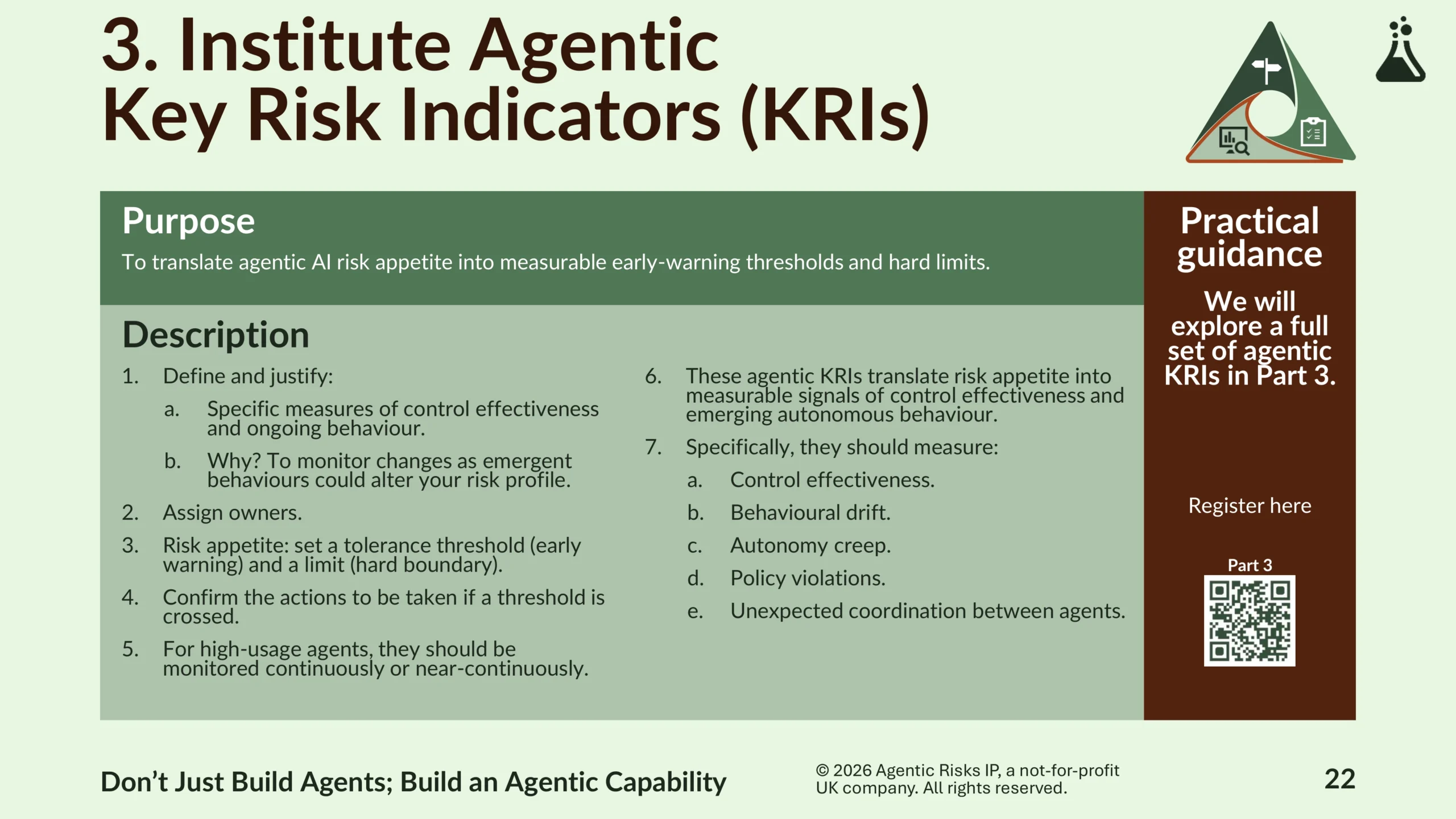

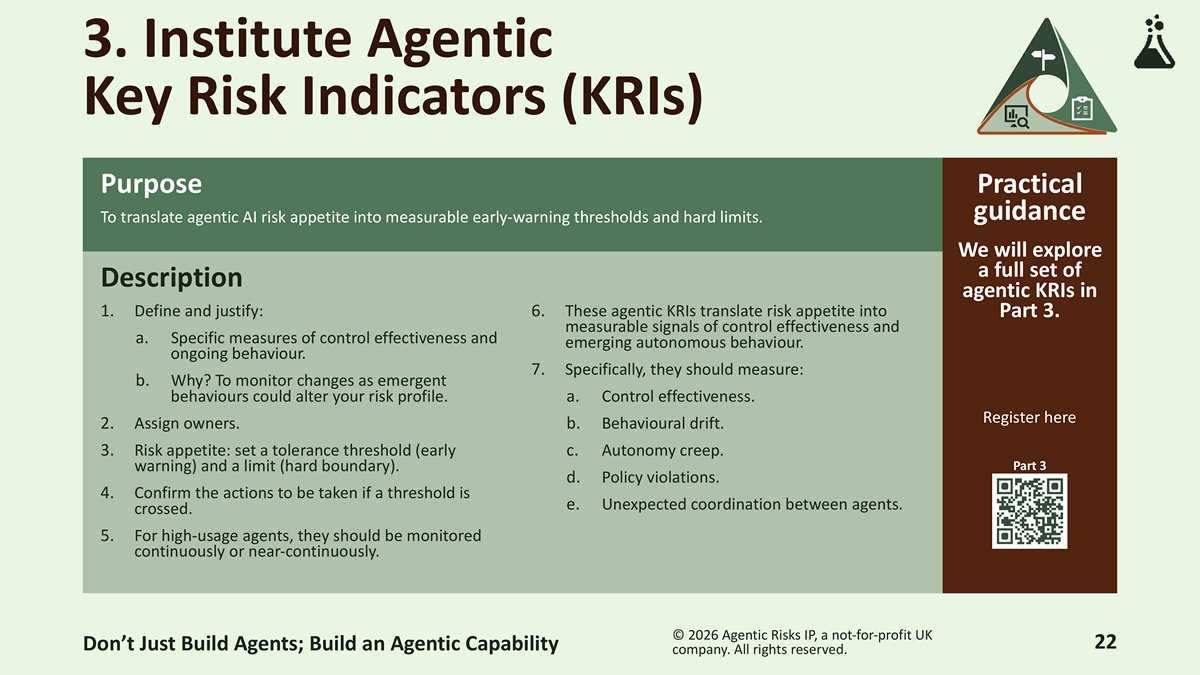

3. Institute agentic key risk indicators (KRIs)

Monitor changes to your risk profile from emergent agentic behaviours by translating agentic risk appetite into measurable early-warning thresholds and hard limits:

- Define and justify specific measures of control effectiveness and ongoing behaviour.

- Assign owners.

- Set tolerance thresholds and hard boundaries.

- Confirm the actions to be taken if a threshold is crossed.

- Part 3 of the webinar series will include a full set of agentic KRIs. Register now.
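
The KRI steps above can be sketched in code as a measure with an owner, an early-warning tolerance threshold, a hard limit, and a pre-agreed action for each. This is a minimal Python illustration, not a recommended set of indicators: the indicator name, owner, numeric values, and actions are hypothetical, and the sketch assumes that a higher observed value is worse.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class KriStatus(Enum):
    WITHIN_TOLERANCE = "within tolerance"
    THRESHOLD_BREACHED = "early-warning threshold breached"
    HARD_LIMIT_BREACHED = "hard limit breached"


@dataclass(frozen=True)
class Kri:
    name: str
    owner: str
    tolerance_threshold: float  # early-warning level
    hard_limit: float           # boundary that must not be exceeded
    threshold_action: str       # agreed response to an early warning
    hard_limit_action: str      # agreed response to a hard-limit breach

    def evaluate(self, observed: float) -> Tuple[KriStatus, str]:
        """Compare an observed value with the limits (assumes higher is worse)."""
        if observed >= self.hard_limit:
            return KriStatus.HARD_LIMIT_BREACHED, self.hard_limit_action
        if observed >= self.tolerance_threshold:
            return KriStatus.THRESHOLD_BREACHED, self.threshold_action
        return KriStatus.WITHIN_TOLERANCE, "no action required"


# Hypothetical indicator: share of agent actions blocked by controls.
blocked_rate = Kri(
    name="blocked_action_rate",
    owner="Workflow owner",
    tolerance_threshold=0.05,
    hard_limit=0.15,
    threshold_action="notify owner and review recent agent activity",
    hard_limit_action="suspend the agent pending investigation",
)

for value in (0.02, 0.08, 0.20):
    status, action = blocked_rate.evaluate(value)
    print(f"{value:.2f}: {status.value} -> {action}")
```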

Tips for Agentic AI Adoption Strategies

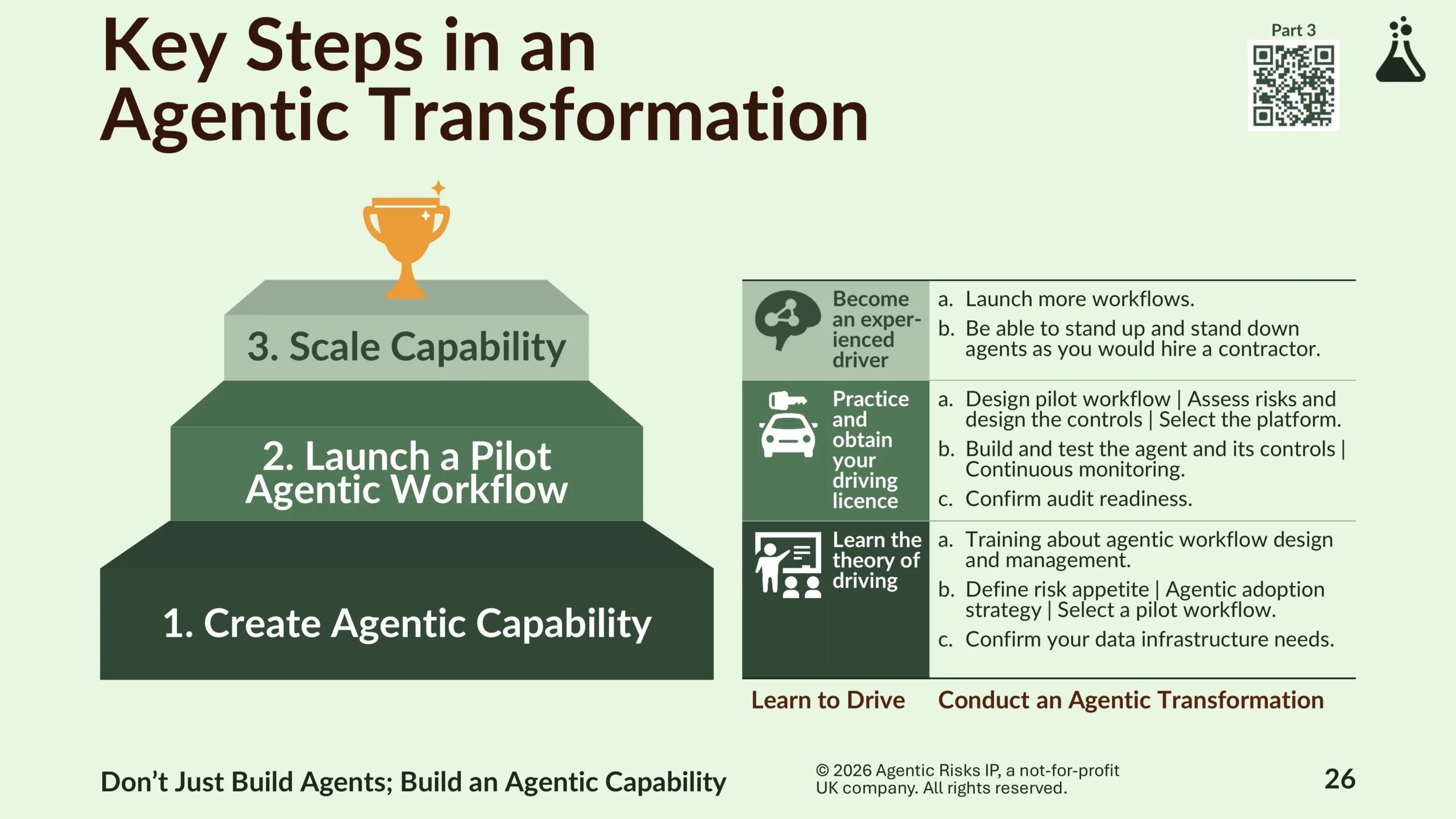

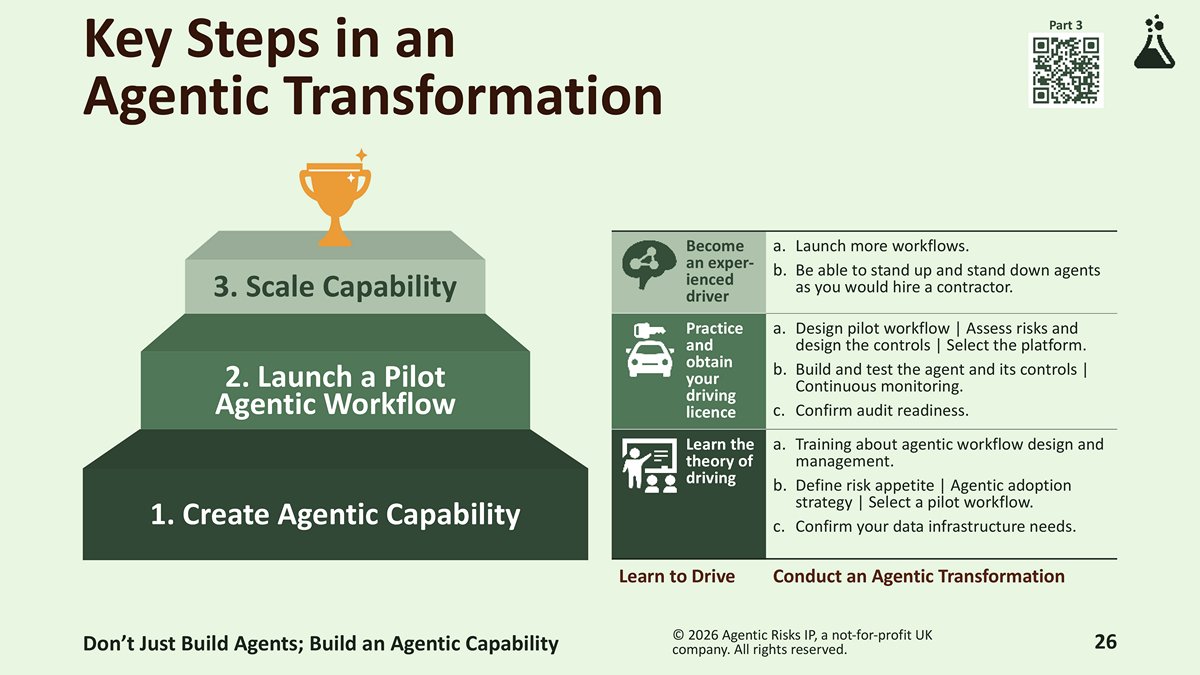

Three key steps in an agentic transformation:

- Create an agentic capability – undertake training about agentic workflow design and management, define your agentic risk appetite, outline your adoption strategy, select a pilot workflow, and confirm your data infrastructure needs.

- Launch a pilot agentic workflow – design the pilot workflow, identify the necessary risk controls, build and test the agent and its controls, monitor it continuously, and confirm your readiness for the inevitable audit.

- Scale your new capability – launch more workflows and be able to stand up and stand down agents as you would hire a contractor. Because agents are non-human co-workers, in the era of agentic AI, your agentic capability will become as important as your ability to hire and manage humans.

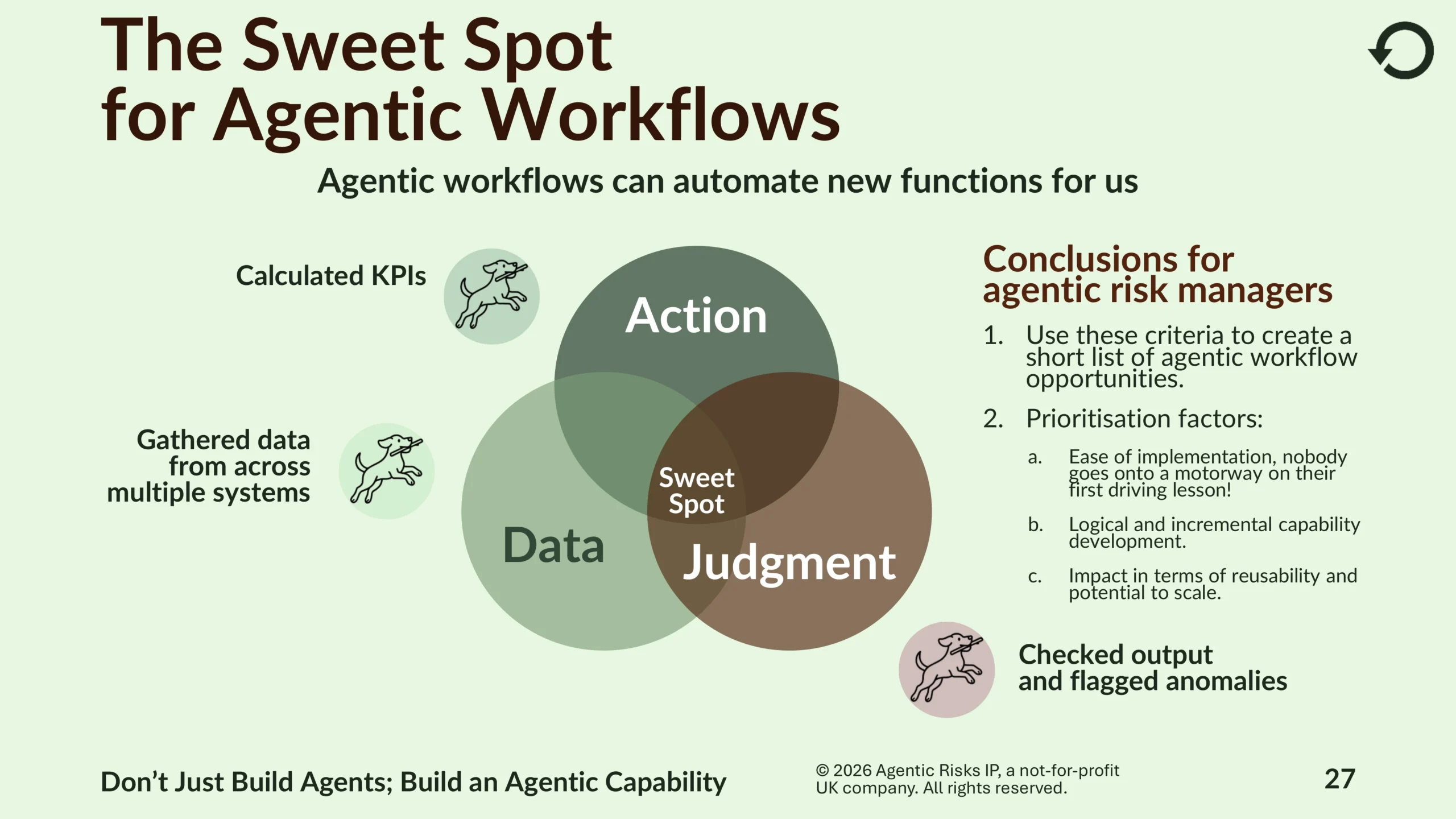

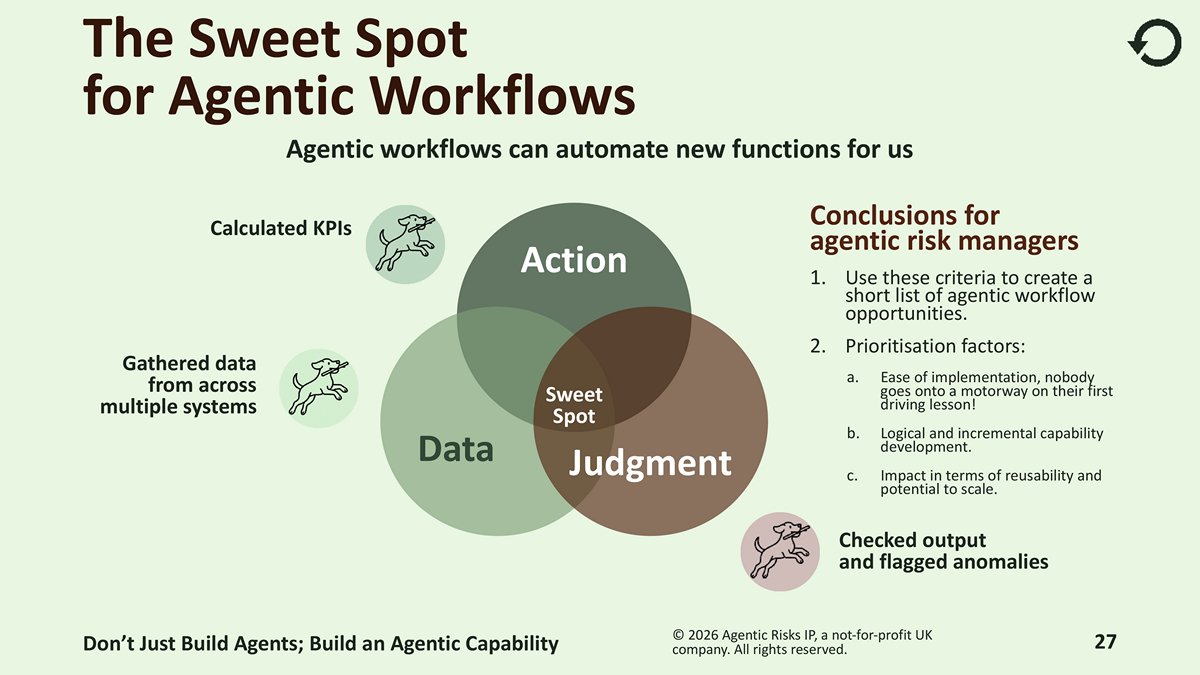

Identifying potential agentic workflows – the sweet spot for agentic AI lies at the intersection of data, action and judgment. Use these factors to create a short list of agentic workflow opportunities. Then use three criteria to prioritise them:

- Ease of implementation.

- Logical and incremental capability development.

- Their impact in terms of reusability and potential to scale.
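
One simple way to apply the three criteria above is to score each shortlisted workflow and rank the results. The Python below is only a sketch under stated assumptions: the 1 to 5 scoring scale, the equal weighting, and the candidate workflows are hypothetical and are not part of the approach described in this article.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Candidate:
    name: str
    ease: int         # ease of implementation (1 = hard, 5 = easy)
    incremental: int  # fit with logical, incremental capability development
    impact: int       # reusability and potential to scale

    def score(self) -> int:
        """Equal-weighted total; the weighting is an assumption of this sketch."""
        return self.ease + self.incremental + self.impact


def prioritise(candidates: Iterable[Candidate]) -> List[Candidate]:
    """Return candidates ordered from highest to lowest total score."""
    return sorted(candidates, key=lambda c: c.score(), reverse=True)


shortlist = [
    Candidate("supplier onboarding checks", ease=2, incremental=3, impact=4),
    Candidate("invoice matching", ease=4, incremental=4, impact=4),
    Candidate("policy document triage", ease=3, incremental=2, impact=2),
]

for candidate in prioritise(shortlist):
    print(candidate.score(), candidate.name)
```

A scored ranking like this is a conversation starter rather than a decision: it makes the trade-offs between the criteria visible so they can be challenged.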

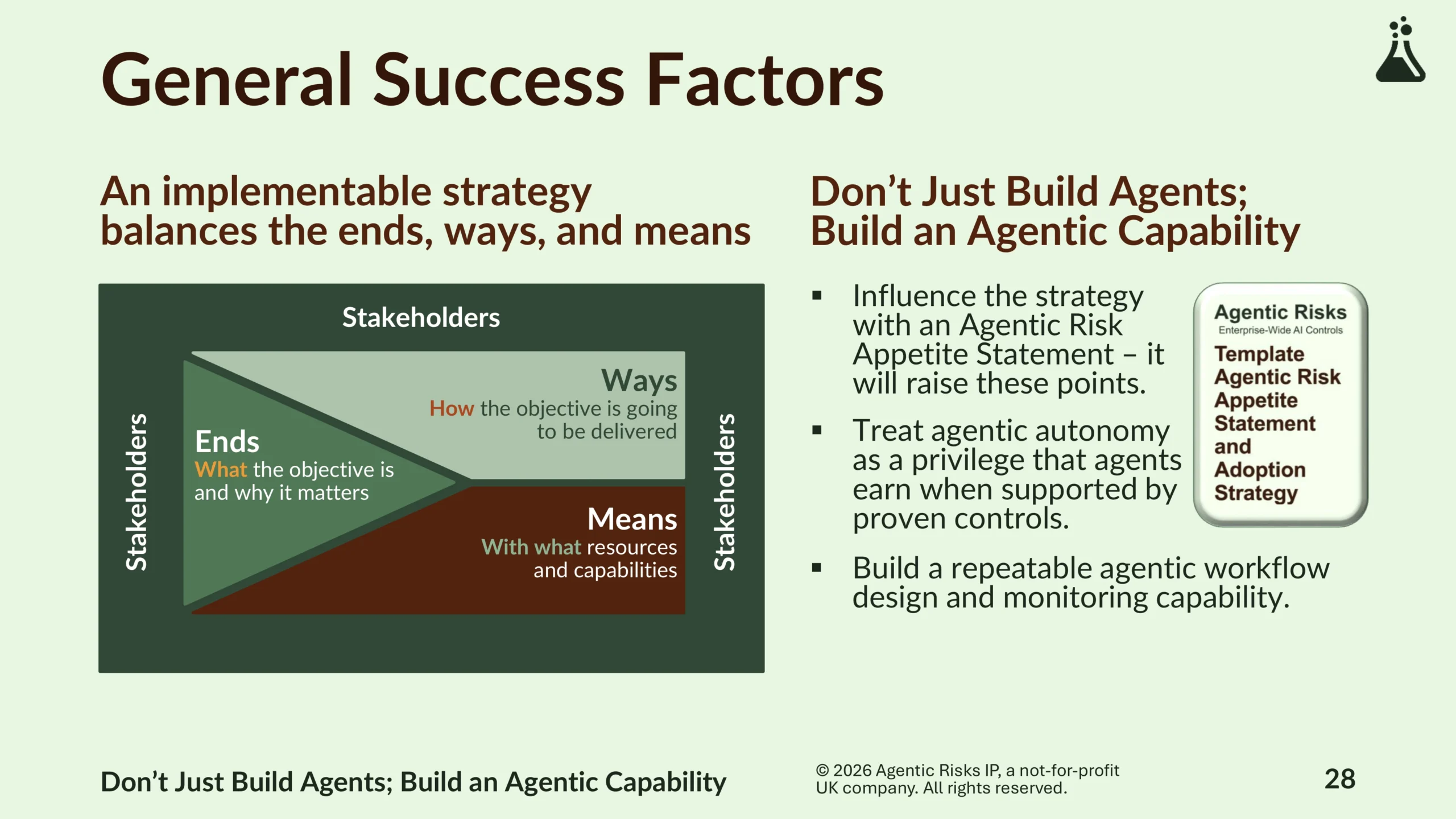

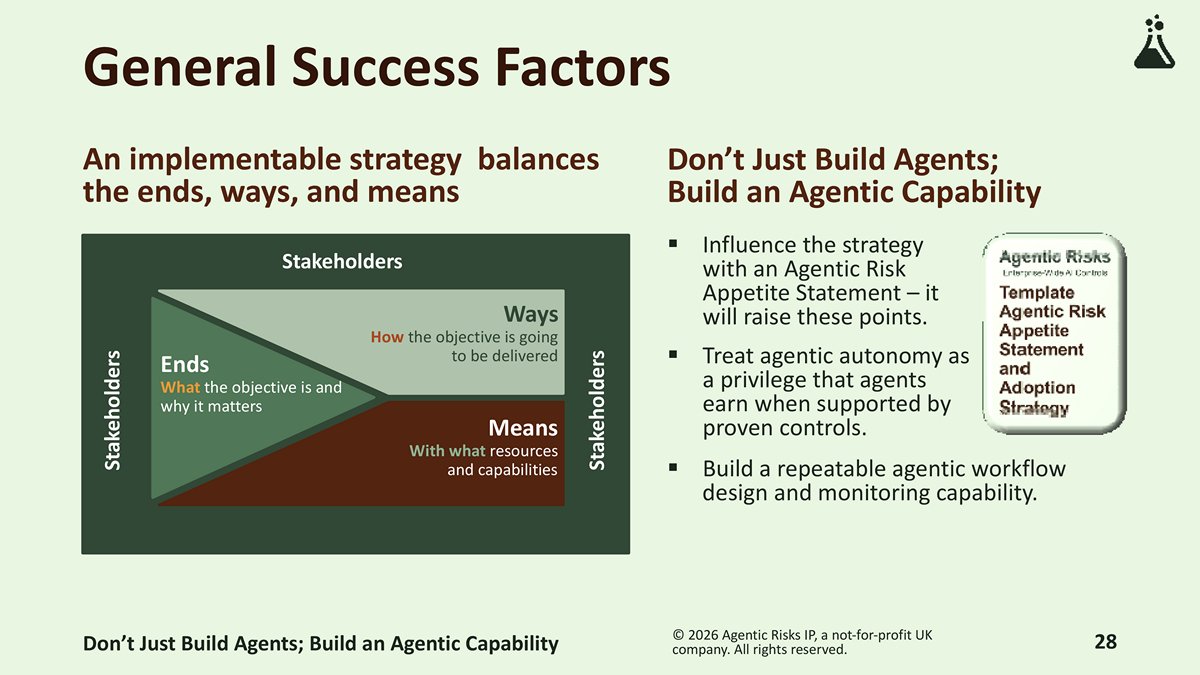

Lastly, don’t just build agents; build an agentic capability that gives you the strategic freedom to choose whether a human or an agentic workflow is the best solution to your problem.

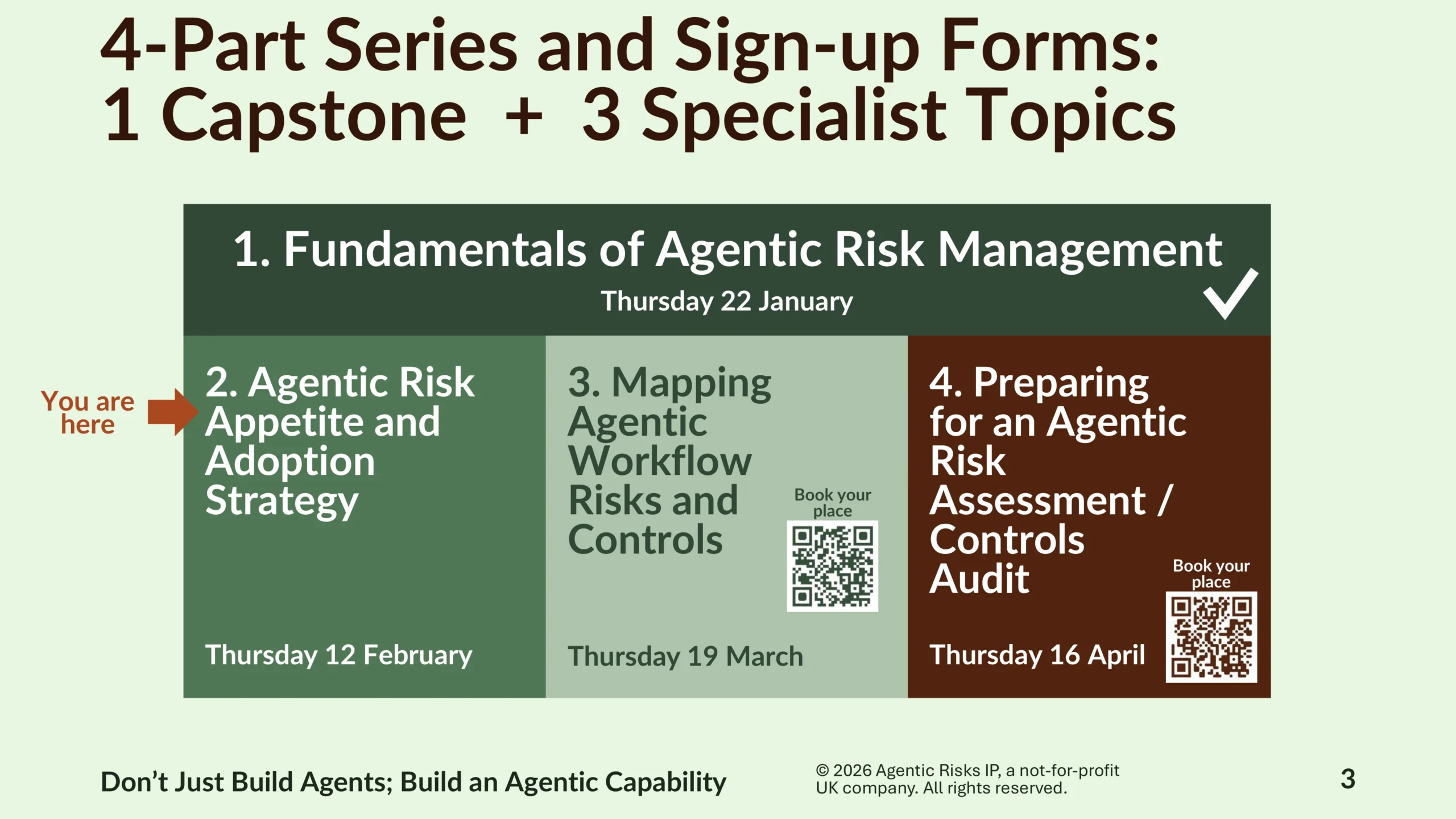

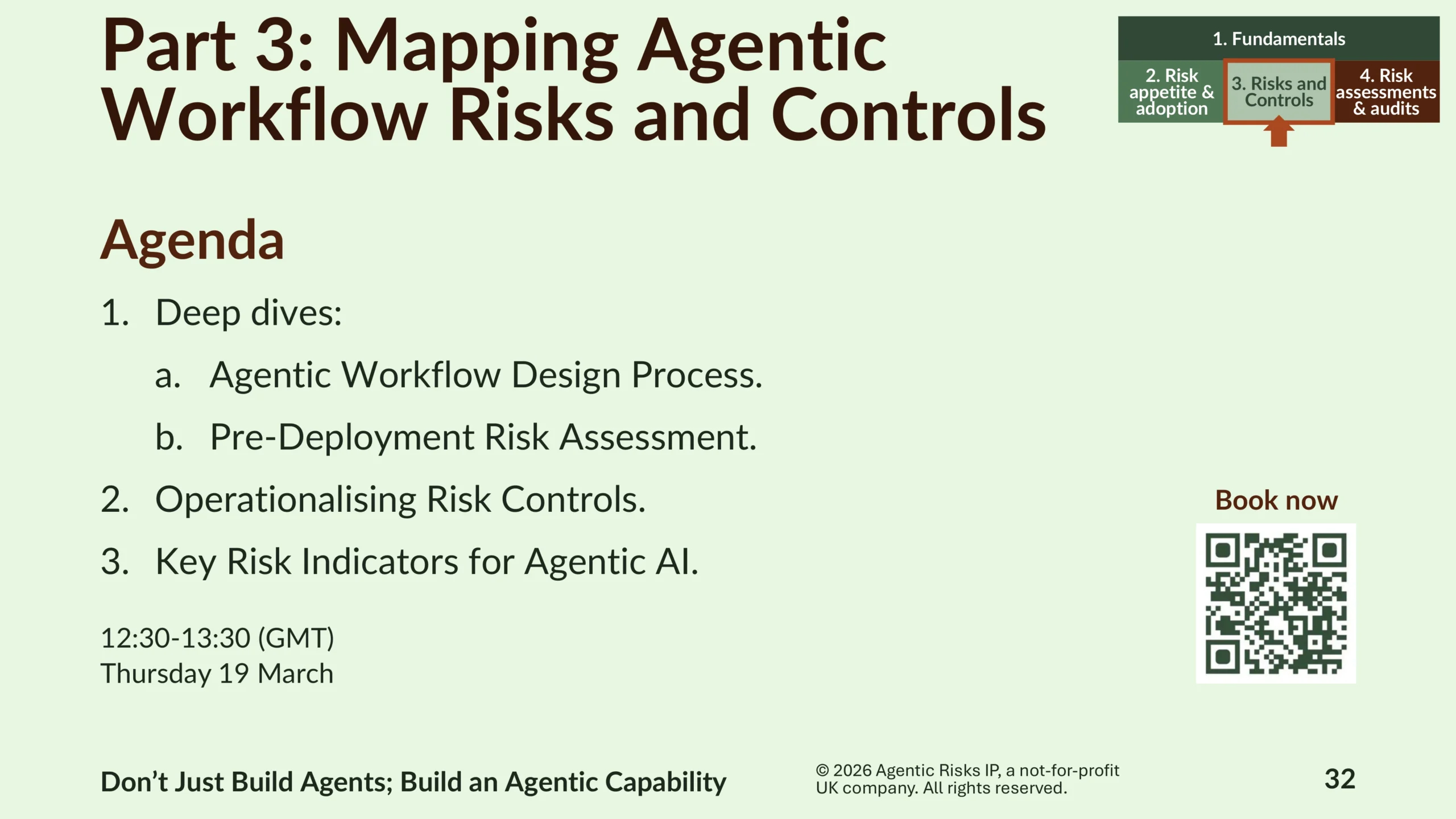

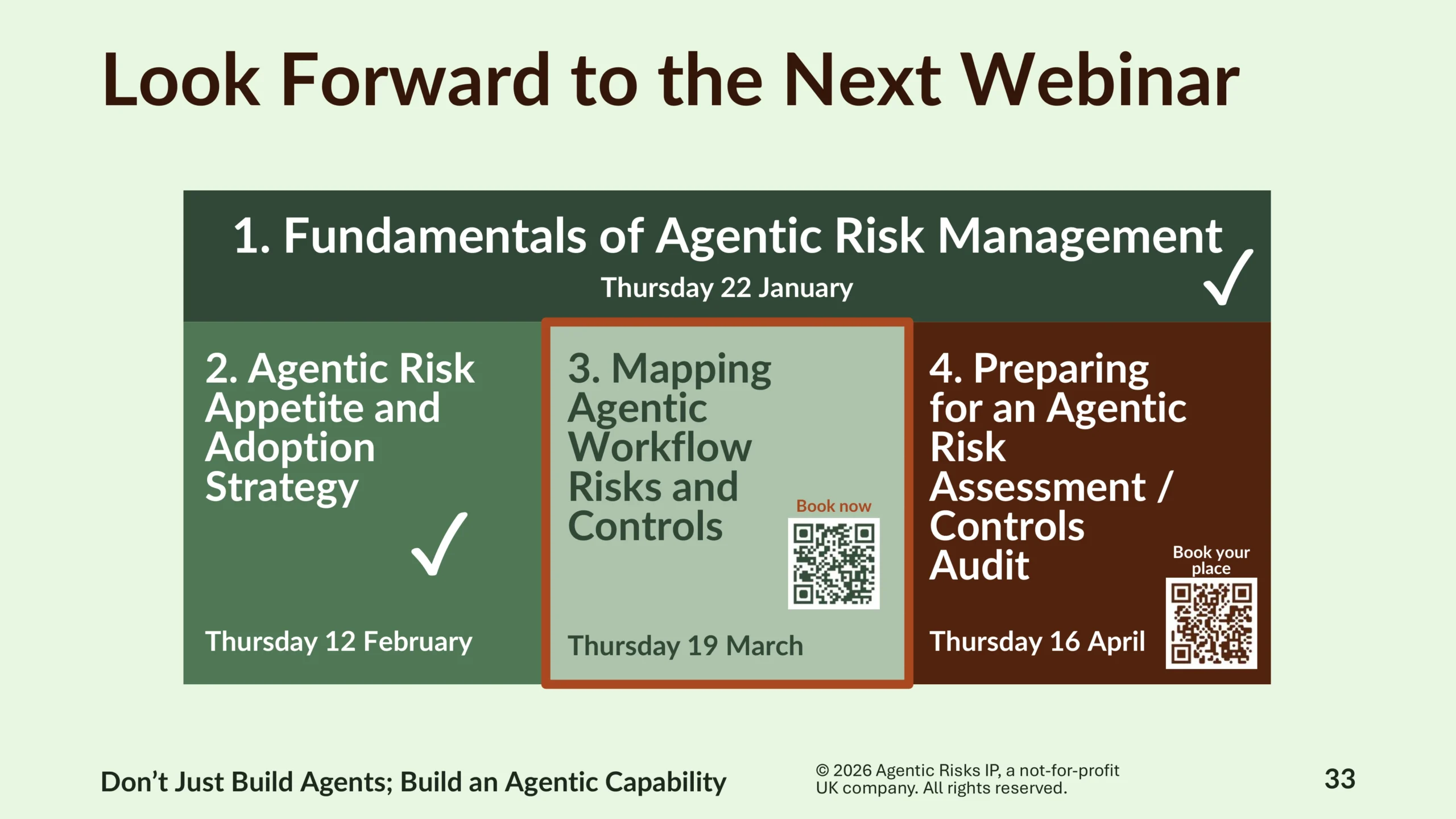

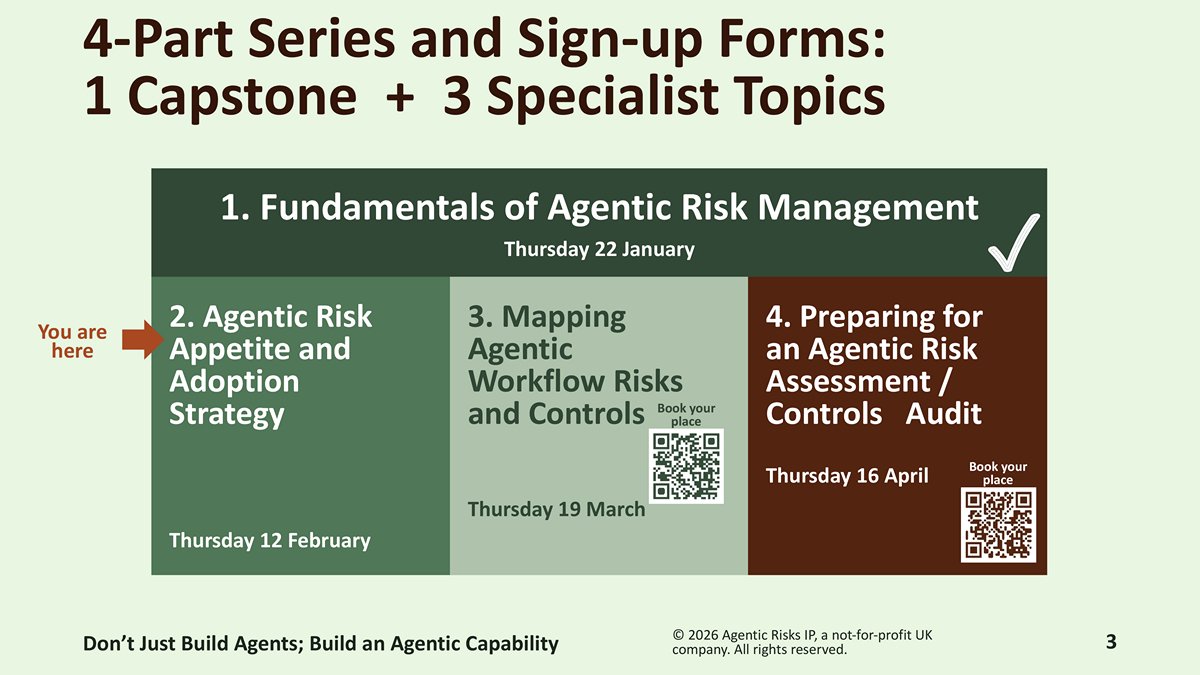

Book Your Place at Future Webinars on Agentic AI Risk Management

Register now for Part 3, which will cover ‘Mapping Agentic Workflow Risks and Controls’. In this session, we will deep dive into the agentic workflow design process, a pre-deployment agentic risk assessment, the operationalisation of risk controls, and key risk indicators for agentic AI. Join us from 12:30 to 13:30 (GMT) on Thursday 19 March.

Questions and Answers

Yes. In the same way a material system, business process, or employee does today, each production AI agent should have a named accountable executive as part of AI agent governance and accountability. SMCR already requires clear ownership for activities that could cause harm; agents initiate actions, so they qualify. Indeed, the UK’s financial regulators (FCA and PRA) have confirmed that SMCR already applies to AI use, with senior managers personally accountable for AI systems within their business areas.

AI agents should operate under their own unique, non-human identities, not piggyback on a human’s account, though they can also have secondary “agent user” accounts when systems strictly require user-type authentication. Shared or borrowed accounts break audit trails and make investigations unreliable. Separate identities enable lifecycle management for agents (creation, permission changes, suspension, retirement) in line with joiner/mover/leaver controls. This mirrors how service accounts already work in mature IT environments.

The Agentic Risks framework is designed to operationalise these regulations by translating high-level governance, resilience, and risk-classification duties into concrete, testable controls for autonomous agents. Where ISO/IEC 42001 defines what an AI management system must achieve, Agentic Risks’ framework specifies how to implement controls at the agent level. Where DORA focuses on operational resilience, Agentic Risks’ framework adds agent-specific failure modes, e.g. autonomous actions, cascading behaviours, or tool misuse. And where the EU AI Act classifies risk and mandates safeguards, Agentic Risks’ framework maps those obligations to implementable controls for agents.

The UK AI Opportunities Action Plan will increase pressure to adopt practical, business-led risk controls rather than heavy prescriptive regulation. The plan emphasises growth and adoption, implying fast deployment of advanced AI, including agents. Fast adoption without parallel risk controls would increase the chance of high-profile failures, especially if it also front-runs lagging regulation. Therefore, organisations that already have structured agentic risk controls will be better positioned to scale safely and demonstrate responsible innovation.

When assessing agents that are already acting, spending, and escalating, risk managers need to orient themselves and add value fast while simultaneously protecting themselves. Post-hoc reviews can sometimes lack the evidence needed to tie an agent’s actions back to specific risks. Agentic Risks’ post-deployment risk assessment uses verifiable agentic risk flags that you can test to produce defensible evidence, so you can quickly translate your findings into clear and proportionate risk treatment plans.

Yes. We will explore this in more detail in Part 4 of the webinar series on Thursday 16 April, which will cover ‘Preparing for an Agentic Risk Assessment / Controls Audit’. Register for the webinar here or contact us if you would like to learn more.