Executive Summary

Why focus on agentic AI risk appetite? Autonomous AI introduces a new class of risk – agentic risk – because it delegates execution to non-humans that interpret objectives, constraints, and context differently from people.

In agentic workflows, ambiguity itself becomes a risk: unless risk appetite is expressed in behavioural and quantitative terms, agents cannot reliably understand or enforce organisational expectations.

Risk managers must therefore integrate agentic risk into existing risk appetite processes by defining an agentic AI risk appetite statement, applying it consistently at the workflow level, and instituting key risk indicators for agentic risks.

Organisations that do this will enable safe, scalable agentic adoption; those that do not will face fragmented, compounding, and unmanaged autonomous behaviour risks.

Agentic AI Risk Appetite: Integrating Autonomous AI Agents into Risk Appetite Processes

Risk appetite is the type and amount of uncertainty an organisation is willing to tolerate.

The primary tool for expressing it is the risk appetite statement (RAS), a strategic policy that outlines the organisation's overall stance on different classes of risk, such as operational, financial, and regulatory risk.

To operationalise these statements, risk managers apply them to their risk assessments and convert them into key risk indicators (KRIs) that sit on quantitative scales, enabling us to prescribe and monitor actionable thresholds and limits.

However, autonomous AI introduces an entirely new class of risk – agentic risk – that, unmanaged, can materialise across five categories, requiring organisations to address agentic AI risk management.

As a result, risk managers in organisations that adopt autonomous agents need to integrate agentic risk into their existing appetite-setting processes.

For Agentic AI, Ambiguity Itself is a Risk

Integrating agentic risk requires a specific approach because, uniquely, agentic workflows delegate execution to non-humans that lack lived experiences and process information differently than we do.

As a result, we cannot simply replace a human in a workflow with an AI agent; instead, we must re-design the workflow.

Let’s illustrate this via a thought experiment:

- Consider the risk policies and procedures human workers must understand to run an end-to-end workflow competently. Would you be tempted to make assumptions about what a new hire already knows if they had years of experience at a competitor?

- Now imagine placing a school-leaver in charge: what additional risk information would you want them to understand? And which of your earlier assumptions might now seem ambiguous or unsafe?

- Finally, delegate the workflow to an AI agent that lacks the lived experience that gives meaning to statements like “we have a low tolerance for reputational risk.”

- Without behavioural guidelines or a quantitative definition of ‘low’, the agent will struggle to understand your expectations, in practice leaving the risk unmanaged.

- What extra knowledge and context would you provide this agent beyond what you’d give the school-leaver or experienced hire? And in what format?

The lesson from this thought experiment is that, for agentic AI, ambiguity itself is a risk. Even mainstream AI risk management techniques cannot help us here because their focus on model accuracy and data quality does not address autonomous behaviour.
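As an illustration only, the sketch below shows one way a statement such as "we have a low tolerance for reputational risk" might be restated as explicit rules that an agent's proposed actions can be checked against. The class names, the action kinds, the `is_permitted` helper, and the limit of 50 recipients are all hypothetical; a real organisation would derive its own behaviours and numbers from its appetite statement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReputationalRiskRule:
    """Hypothetical quantitative reading of 'low tolerance for reputational risk'."""
    max_external_recipients: int = 50          # assumed limit per message
    allow_unreviewed_public_posts: bool = False


@dataclass(frozen=True)
class ProposedAction:
    kind: str                    # e.g. "email" or "public_post" (assumed kinds)
    external_recipients: int = 0
    human_reviewed: bool = False


def is_permitted(action: ProposedAction, rule: ReputationalRiskRule) -> bool:
    """Return True only if the action fits the explicit rule."""
    if action.kind == "public_post":
        return action.human_reviewed or rule.allow_unreviewed_public_posts
    if action.kind == "email":
        return action.external_recipients <= rule.max_external_recipients
    return False  # action kinds the rule does not cover are denied by default


rule = ReputationalRiskRule()
print(is_permitted(ProposedAction("email", external_recipients=10), rule))  # True
print(is_permitted(ProposedAction("public_post"), rule))                    # False
```

Denying anything the rule does not cover is a deliberate choice in this sketch: where the appetite is silent, the ambiguity is resolved by refusing rather than by letting the agent guess.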

A Systematic Approach to Integrating Agentic AI Risk Appetite

Therefore, risk managers face three tasks when operationalising AI risk appetite for agentic AI:

- Define an agentic AI risk appetite statement.

- Apply the risk appetite consistently to each agentic workflow.

- Institute key risk indicators for agentic risks.

Firms that complete these tasks will have more successful agentic transformations, while those that do not could face compounded and fragmented agentic risks that are managed inconsistently or not at all.

In the following sections, we explain how to achieve these tasks and provide free templates you can leverage.

1. Define an Agentic AI Risk Appetite Statement

Purposes

- To prescribe the type and amount of agentic risk the organisation is willing to accept across different workflows and parts of the business.

- To enable deliberate and consistent agentic risk management.

Description – a board-level policy that addresses the following topics:

- Overall position statement on delegating autonomy to AI agents and the levels of autonomous AI permitted across different workflows: which workflows are in or out of scope, upper and lower autonomy thresholds, and justifications.

- To help business managers apply it in workflows, good practice is to also express positive and negative appetite statements for each risk category:

  - Individual AI agent risks.

  - Multiple AI agent risks.

  - AI agent security threats.

  - AI agent governance failures.

  - Human capabilities for AI agents.

- This document should explain how the business will integrate agentic AI into its change management framework, clarify staff responsibilities and executive governance and oversight, and establish an agentic risk management capability.
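The scope and autonomy thresholds described above can also be captured in a machine-readable form alongside the written policy. The following is a minimal sketch under stated assumptions: the four autonomy levels, the workflow names, and the `within_appetite` helper are hypothetical and are not taken from the template.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Assumed autonomy scale; a real policy would define its own levels."""
    HUMAN_ONLY = 0
    AGENT_SUGGESTS = 1
    AGENT_ACTS_WITH_APPROVAL = 2
    AGENT_ACTS_INDEPENDENTLY = 3


# Workflow -> (lower, upper) permitted autonomy. Workflows that are not
# listed are treated as out of scope for agentic AI.
APPETITE = {
    "invoice_matching": (Autonomy.AGENT_SUGGESTS, Autonomy.AGENT_ACTS_INDEPENDENTLY),
    "customer_complaints": (Autonomy.AGENT_SUGGESTS, Autonomy.AGENT_ACTS_WITH_APPROVAL),
}


def within_appetite(workflow: str, proposed: Autonomy) -> bool:
    """Check a proposed autonomy level against the stated thresholds."""
    bounds = APPETITE.get(workflow)
    if bounds is None:
        return False  # out-of-scope workflow
    lower, upper = bounds
    return lower <= proposed <= upper


print(within_appetite("customer_complaints", Autonomy.AGENT_ACTS_INDEPENDENTLY))  # False
```

A structure like this does not replace the board-level document; it gives workflow designers and agents an unambiguous reference for the thresholds the document sets.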

Customisable Template – download our customisable risk appetite statement and adoption strategy.

2. Apply the Risk Appetite Consistently to each Agentic Workflow

Purpose – to perform pre- and post-deployment risk assessments of agentic workflows that systematically identify and score risks arising from autonomous AI agents.

Description

- Ensure you identify risks from a broad set of sources: organisational capability (using objective resources like the enterprise-wide agentic AI risks and control framework and the MIT Risk Repository), the agent itself (components, design, and capabilities), context, and external threats.

- Structure and score your risk definitions and design appropriate controls, mapping them back to the risks and evaluating their effect on residual risk.

- Lastly, to apply the lesson of the thought experiment, ensure an agent's training and test plan includes not just data about its objective but also risk information about what it is prohibited from doing.
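To show how scoring risks and mapping controls back to them might look in practice, here is a minimal sketch. It assumes a common likelihood-times-impact convention on 1 to 5 scales and treats each control's effectiveness as the fraction of risk it removes; both conventions, and all names and figures, are illustrative assumptions rather than the scoring method of any particular framework.

```python
from dataclasses import dataclass, field
from math import prod


@dataclass
class Control:
    name: str
    effectiveness: float  # assumed fraction of risk removed, 0.0 to 1.0


@dataclass
class Risk:
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)
    controls: list[Control] = field(default_factory=list)

    @property
    def inherent_score(self) -> int:
        """Score before any controls are applied."""
        return self.likelihood * self.impact

    @property
    def residual_score(self) -> float:
        """Score after the mapped controls, treated as independent layers."""
        remaining = prod(1.0 - c.effectiveness for c in self.controls)
        return self.inherent_score * remaining


risk = Risk(
    description="Agent sends unreviewed message to customers",
    likelihood=4,
    impact=5,
    controls=[Control("human approval step", 0.5), Control("output filter", 0.5)],
)
print(risk.inherent_score, risk.residual_score)  # 20 5.0
```

Comparing the residual score with the appetite for that risk category indicates whether the designed controls are sufficient or whether the workflow needs further controls or a lower autonomy level.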

Practical guidance

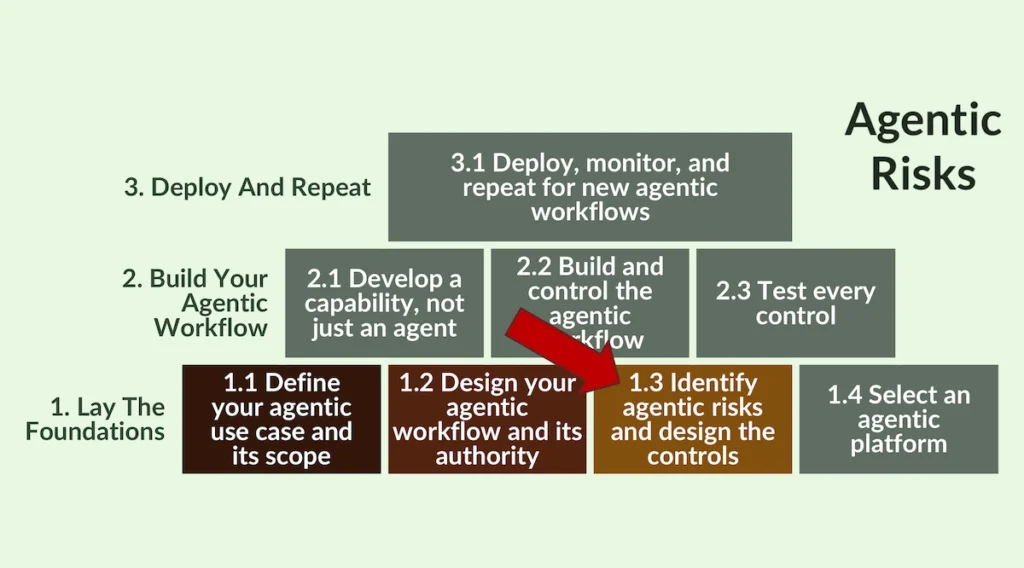

1. Pre-Deployment Risk Assessments – if, as the risk manager, you are involved in the workflow design phase, follow the step-by-step guide in section 1.3 of the Agentic Workflow Design Process.

2. Post-Deployment Risk Assessments – if the agent is already live, use these risk flags to test for evidence of appropriate controls, so you can formulate your findings fast.

3. Institute Key Risk Indicators for Agentic Risks

Purpose – to translate agentic AI risk appetite into measurable early-warning thresholds and hard limits.

Description

- Define and justify the specific measures of control effectiveness and ongoing behaviour you will need to monitor, because emergent behaviours could alter your risk profile.

- These key risk indicators for AI agents translate risk appetite into measurable signals of control effectiveness and emerging autonomous behaviour.

- Specifically, they should measure control effectiveness, behavioural drift, autonomy creep, policy violations, and unexpected coordination between agents.

- Assign owners, set a tolerance threshold (early warning) and an appetite limit (hard boundary), and confirm the actions to be taken if a threshold is crossed.

- KRIs for high-usage agents should be monitored continuously or near-continuously.
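The relationship between a tolerance threshold (early warning) and an appetite limit (hard boundary) can be sketched as follows. This is an illustrative example only: the `KRI` class, the status labels, the owner, and the numeric values are hypothetical, and the sketch assumes an indicator where a higher value means more risk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KRI:
    name: str
    owner: str
    tolerance_threshold: float  # early warning
    appetite_limit: float       # hard boundary

    def __post_init__(self) -> None:
        # The early warning must trigger before the hard boundary is reached.
        if self.tolerance_threshold >= self.appetite_limit:
            raise ValueError("tolerance threshold must be below the appetite limit")

    def status(self, value: float) -> str:
        """Classify an observed value; higher values mean more risk."""
        if value >= self.appetite_limit:
            return "breach"
        if value >= self.tolerance_threshold:
            return "warning"
        return "within_appetite"


# Hypothetical indicator: share of agent actions that violated a policy.
policy_violations = KRI(
    name="policy violation rate",
    owner="Head of Operations",
    tolerance_threshold=0.02,
    appetite_limit=0.05,
)
print(policy_violations.status(0.03))  # warning
```

Each status would map to a pre-agreed action, for example a review by the owner on "warning" and suspension of the agent on "breach"; those actions are policy decisions and are not modelled here.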

Practical guidance

In addition to Steps 1.3 and 3.1 of the Agentic Workflow Design Process, in March 2026, we will publish a set of agentic KRIs. Check back here or subscribe to our newsletter to receive notifications of our new content.

De-Risk Your Agentic Transformation

Integrating agentic risk into your existing risk appetite-setting processes provides a practical, auditable method for governing autonomous AI systems. It also avoids creating parallel processes, which tend to leave gaps where risks go unmanaged.

You are welcome, therefore, to leverage our free content as much as possible to ensure a safe agentic transformation.

If you want some support with achieving this, we could either upskill your team with a practical training workshop, or work alongside you to develop your agentic AI risk appetite or assess the risks of a particular agentic workflow.

- Agentic AI Executive Workshop – to kick-start the development of your agentic capability and set your organisation on the right path.

- Agentic AI Risk Appetite And Adoption Strategy – download our free template or hire us to develop your policy and strategy.

- Workflow Level Agentic Risk Assessments – if your risk function is stretched, or you need an assessment delivered before training can be scheduled, we could perform a pre- or post-deployment risk assessment with your team using our proprietary agentic risk assessment software (Gerido).

Frequently Asked Questions

What is an agentic workflow risk assessment?

An agentic workflow risk assessment evaluates how risks emerge when autonomous AI agents execute tasks across a workflow, including risks from agent behaviour, system context, organisational capability, and malicious interference. It is performed pre-deployment and post-deployment to test whether appropriate controls exist and whether agent behaviour remains within appetite. This assessment operationalises agentic AI risk appetite at the workflow level.