Executive Summary

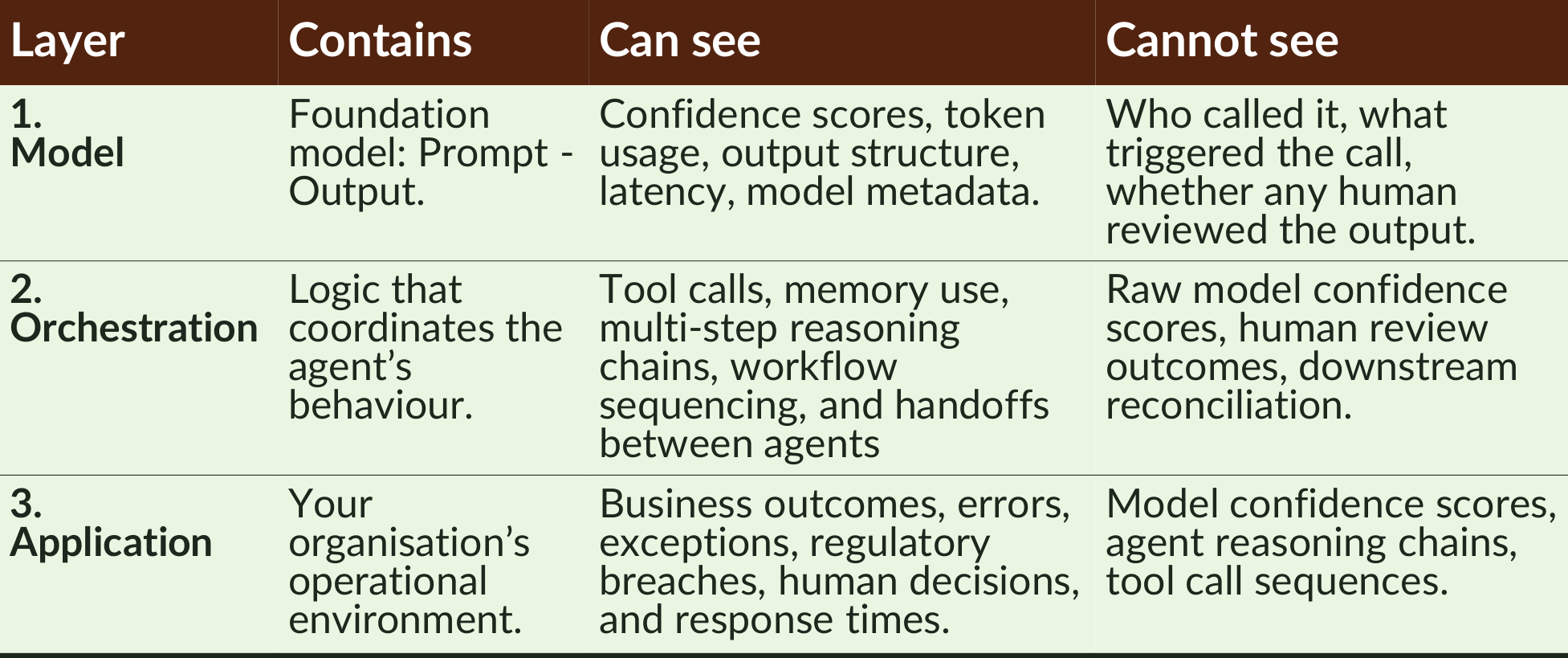

Across most enterprise platforms, an agentic workflow comprises three layers – the model, orchestration, and application – and each layer can see agentic risk signals that the others cannot.

This matters when you are operationalising your agentic KRIs: for agentic workflow monitoring to be viable, each KRI should reside where its risk signal lives. KRIs placed elsewhere risk creating noise, blind spots, or a false sense of security.

Some indicators require data from a single layer, while others cannot function without data from multiple layers. As a result, effective agentic AI risk management requires a deliberate, multi-layer capability that also accounts for handoffs between layers.

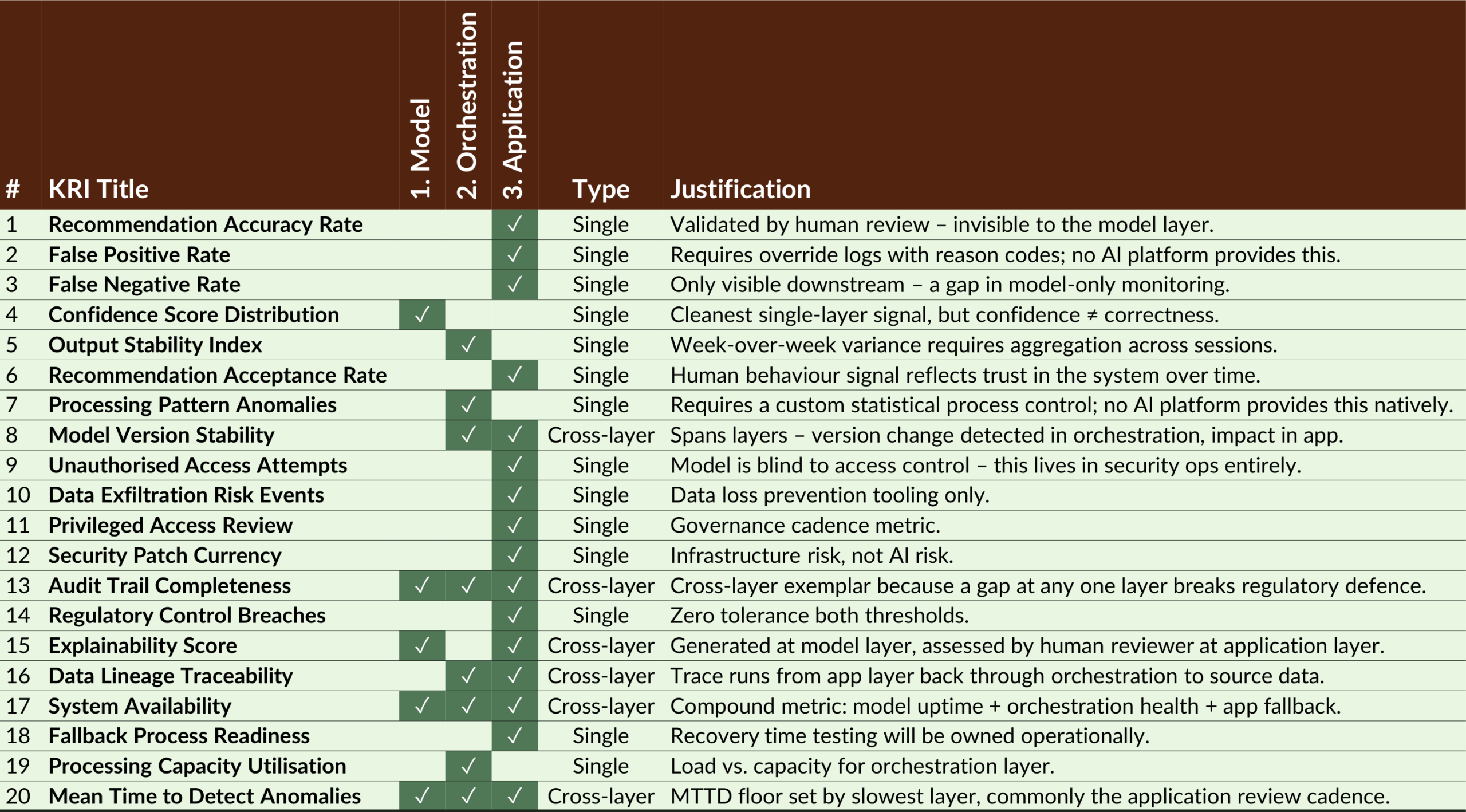

We mapped a sample of KRIs across the three layers and found that half reside primarily at the application layer, while the model layer was the primary source for only one.

Monitoring built on functional layers is therefore less likely to have coverage gaps than a framework tied to a single platform.

This analysis also provides a methodology for ensuring that agentic KRIs beyond the sample are instrumented at their appropriate layer.

We conclude by advising firms to understand their risk requirements before selecting a platform – and embed their risk controls into their workflow designs.

To that end, this blog explains how to operationalise your agentic KRIs so that they monitor workflows across the model, orchestration, and application layers.

If you are implementing an agentic workflow and would like to ensure you can monitor it, book a free consultation with our expert design, risk management, and delivery consultants. We look forward to meeting you.

Definition

Operationalising agentic KRIs means implementing agentic key risk indicators so they continuously monitor an agentic workflow’s behaviour across the model, orchestration, and application layers. This ensures each KRI captures the risk signal where it naturally occurs, allowing organisations to detect agentic AI risk, maintain audit trails, and respond to incidents before they propagate across the workflow.

Where You Place Agentic KRIs Will Determine Their Viability

Across most enterprise platforms, an agentic workflow comprises three layers: the model, the orchestration, and the application. Each layer can see risk signals that the others cannot.

- The Model – this is the foundation model where inference happens: it receives a prompt and returns an output.

- The Orchestration – this is the logic that sits between the model and the outside world, coordinating the agent’s behaviour. It is the primary site where behavioural drift tends to surface first.

- The Application – this is your organisation’s operational environment: the workflows, human reviewers, downstream systems, audit logs, compliance controls, and breach procedures that consume the agent’s outputs.

Because of this, you will need to place each agentic key risk indicator (KRI) in the layer that can see its required risk signal.

Operationalise Agentic KRIs With Single and Cross-Layer Metrics

In our previous blog, we completed a structured mapping exercise to develop 20 agentic KRIs for 5 common risks. The purpose of that blog was to show how to design KRIs for agentic workflows; it does not claim exhaustive coverage of all agentic risks.

To explain how organisations can then operationalise those agentic KRIs, in this blog we have mapped each one to a layer based on the source of its data, which determines where it should reside.

Most of the KRIs (14 out of 20) proved to be ‘single-layer KRIs’, where one layer provides all the data the metric needs to be accurate.

The remaining six were ‘cross-layer KRIs’, highlighting the interdependence between the three layers. In these cases, a risk event originates in one layer and propagates through others, meaning it can only be measured accurately with signals from multiple layers.

A hallucination is an example of an agentic AI risk that needs a cross-layer KRI:

- Model layer – the hallucinated output is generated with a satisfactory confidence score, so model-layer monitoring is unlikely to fire an alert: confidence scores measure output coherence (not factual correctness), and the model does not know it is wrong.

- Orchestration layer – if the output deviates from the agent’s established statistical pattern of behaviour, the orchestration layer will log an anomaly. An alert may or may not fire, because the deviation could be interpreted as either drift or a legitimate change in the input distribution.

- Application layer – a cross-layer audit trail will capture the full sequence, a human reviewer (or downstream validation step) can identify the incorrect output, and only here can the event be confirmed as a hallucination.
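The hallucination walkthrough above can be sketched as a small classification step. This is a minimal illustration, not a reference implementation: the `TraceSignals` fields, the shared `trace_id`, the verdict strings, and the `confidence_floor` value of 0.7 are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TraceSignals:
    """Signals for one agent run, joined on a hypothetical shared trace ID."""
    trace_id: str
    model_confidence: float          # model layer: self-reported score
    orchestration_anomaly: bool      # orchestration layer: deviation was logged
    reviewer_verdict: Optional[str]  # application layer: "correct", "incorrect", or None


def classify(signals: TraceSignals, confidence_floor: float = 0.7) -> str:
    """Combine the three layers' signals into one status for the run."""
    if signals.reviewer_verdict == "incorrect":
        # Only the application layer can confirm that the output was wrong.
        if signals.model_confidence >= confidence_floor:
            return "confirmed_hallucination"
        return "confirmed_error_low_confidence"
    if signals.reviewer_verdict == "correct":
        return "cleared"
    # No review yet: the lower layers can only raise suspicion.
    if signals.orchestration_anomaly:
        return "suspected_pending_review"
    return "unreviewed"


status = classify(TraceSignals("t-001", 0.93, True, "incorrect"))
```

Note that a high confidence score and a logged anomaly are not enough on their own: without a reviewer verdict the run stays in a suspected state, which mirrors the point that confirmation happens only at the application layer.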

Effective Agentic Workflow Monitoring Requires a Multi-Layer AI Monitoring Capability

Other key findings were that:

- Half of the KRIs (10 / 20) lived primarily or entirely at the application layer.

- The model was the primary layer for only one of our KRIs and contributed partially to three others.

This is a problem because the dashboards of standalone model providers draw their data from the model layer, which would fully serve only 1 of the 20 KRIs in our sample.

Reasoning from these findings alone, our initial conclusion is that monitoring an agentic workflow requires a deliberate, multi-layer capability that is designed at each layer and accounts for handoffs between them. In practice, this means defining KRI ownership by layer at design time, not after deployment; see our pre-deployment agentic risk assessment.
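One way to record layer placement and ownership at design time is a simple KRI registry. The sketch below is illustrative only: the `Kri` structure, the `design_time_gaps` check, and the owner team names are hypothetical, and the three entries are the example KRIs discussed in the next section.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, List


class Layer(Enum):
    MODEL = "model"
    ORCHESTRATION = "orchestration"
    APPLICATION = "application"


@dataclass(frozen=True)
class Kri:
    kri_id: int
    name: str
    layers: FrozenSet[Layer]  # layers whose data the metric needs
    owner: str                # hypothetical owning team

    @property
    def is_cross_layer(self) -> bool:
        return len(self.layers) > 1


REGISTRY: List[Kri] = [
    Kri(4, "Confidence Score Distribution", frozenset({Layer.MODEL}), "ml-platform"),
    Kri(9, "Unauthorised Access Attempts", frozenset({Layer.APPLICATION}), "security-operations"),
    Kri(13, "Audit Trail Completeness", frozenset(Layer), "risk-and-compliance"),
]


def design_time_gaps(registry: List[Kri]) -> List[int]:
    """Return IDs of KRIs that lack a layer placement or a named owner."""
    return [k.kri_id for k in registry if not k.layers or not k.owner]


cross_layer_ids = [k.kri_id for k in REGISTRY if k.is_cross_layer]
```

Running `design_time_gaps` before deployment gives a quick list of KRIs that nobody has yet placed or claimed, which is the kind of gap that otherwise tends to surface only after go-live.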

Viable KRIs Reside Where Their Risk Signal Lives

The next conclusion is that organisations should instrument their KRIs at the layer where the risk signal naturally lives, not necessarily where a vendor solution is readily available.

Let’s examine three example KRIs:

- KRI 4 (Confidence Score Distribution) is a good example of a model-layer signal: the model returns a confidence score with each output, and you monitor the distribution. The metric needs no orchestration logic or human review. However, it is the exception, not the rule – and it illustrates the model layer’s limitation because confidence only tells you the model’s self-assessment, not whether that assessment was correct.

- KRI 9 (Unauthorised Access Attempts) is a clean example of a single-layer signal that must reside in your organisation’s application layer; specifically, in your security information and event management (SIEM), your identity and access management tooling (IAM), and your security operations. This is because the model has no visibility into who is calling it or under what permissions, serving as a reminder that agentic AI risk monitoring extends beyond traditional AI model risk – it is also the risk of an AI system operating within a broader attack surface.

- KRI 13 (Audit Trail Completeness) is the clearest example of a cross-layer metric because it is needed for regulatory defence and AI agent audit trail traceability, yet it can only be implemented across layers:

  - The model layer logs the input.

  - The orchestration layer logs the decision chain.

  - The application layer logs the human review and downstream outcome.

A gap at any one layer would break the chain and, therefore, your regulatory defence under frameworks such as the EU AI Act (Article 12) or GDPR’s accountability principle (Article 5(2)). We believe this points to the need for an aggregation mechanism that pulls records from all three layers and validates that none are missing.
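A minimal sketch of such an aggregation check follows. It assumes each layer emits log records as dictionaries carrying a shared `trace_id` and a `layer` field; both field names and the function names are hypothetical, and a real pipeline would read from your logging stores rather than an in-memory list.

```python
from typing import Dict, Iterable, List, Set

REQUIRED_LAYERS = frozenset({"model", "orchestration", "application"})


def find_gaps(records: Iterable[dict]) -> Dict[str, Set[str]]:
    """Return, per trace ID, the layers that logged nothing for that trace."""
    seen: Dict[str, Set[str]] = {}
    for record in records:
        seen.setdefault(record["trace_id"], set()).add(record["layer"])
    gaps = {}
    for trace_id, layers in seen.items():
        missing = set(REQUIRED_LAYERS - layers)
        if missing:
            gaps[trace_id] = missing
    return gaps


def completeness(records: List[dict]) -> float:
    """Share of observed traces with a record from every required layer."""
    traces = {r["trace_id"] for r in records}
    if not traces:
        return 1.0
    return 1 - len(find_gaps(records)) / len(traces)


records = [
    {"trace_id": "t1", "layer": "model"},
    {"trace_id": "t1", "layer": "orchestration"},
    {"trace_id": "t1", "layer": "application"},
    {"trace_id": "t2", "layer": "model"},
    {"trace_id": "t2", "layer": "orchestration"},
]
gaps = find_gaps(records)        # t2 has no application-layer record
score = completeness(records)    # one of two traces is complete
```

One limitation of this approach is that a run which logged nothing at any layer never appears in `records`, so it cannot be counted as a gap; catching that case would need an independent source of expected trace IDs.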

While some platforms (e.g. AWS Bedrock Agents or Microsoft Azure AI Foundry) are extending into cross-layer AI observability, not all offer this, which makes it a differentiator for your platform selection process. Even with those that do, their boundaries may not align with your organisation’s operational environment.

We advise caution here because accepting a vendor default that misaligns with your signal source would introduce the risk of blind spots, noise, or metrics that could lull you into a false sense of security.

Embed Your Risk Controls Into Your Workflow Designs

Finally, we believe this analysis implies several additional conclusions:

- Monitoring built on functional layers is less likely to have coverage gaps than a monitoring framework tied to one platform. The former requires greater integration effort across tools, but the latter will have gaps the moment an agent calls a tool outside that platform’s boundary.

- Absence of cross-layer monitoring will impair regulatory defence, particularly under frameworks requiring end-to-end audit trails, such as the EU AI Act or sector-specific regulations (e.g. SR 11-7 for US banking).

- Organisations should understand their risk requirements before selecting a platform for a particular agentic workflow, as part of a coherent agentic AI risk management approach. Their engineers should then embed agentic AI risk controls into their workflow designs, rather than assuming the platform will meet their governance requirements. Those that take the opposite approach may discover monitoring gaps only in post-incident reviews.

- Final confirmation moves at the pace of the slowest layer. The last implication we see in these results is that the slowest layer sets the Mean Time to Detect (MTTD) for any risk that needs that layer for confirmation. So, if your application-layer review runs weekly, confirming an event can take up to a week, regardless of how quickly your model and orchestration monitoring fires.
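The slowest-layer effect can be illustrated with simple arithmetic. In the sketch below, the model and orchestration check intervals are hypothetical values chosen for illustration; only the weekly application-layer review comes from the example above. The function name `slowest_layer` is likewise an assumption.

```python
from datetime import timedelta
from typing import Dict, Tuple


def slowest_layer(check_intervals: Dict[str, timedelta]) -> Tuple[str, timedelta]:
    """Return the layer with the longest check interval, and that interval."""
    layer = max(check_intervals, key=lambda name: check_intervals[name])
    return layer, check_intervals[layer]


intervals = {
    "model": timedelta(minutes=1),          # hypothetical
    "orchestration": timedelta(minutes=5),  # hypothetical
    "application": timedelta(weeks=1),      # weekly review, as in the example
}

# For a risk that needs all three layers to confirm, the worst-case wait
# for confirmation is bounded by the longest interval, not the shortest.
layer, worst_case_wait = slowest_layer(intervals)
```

Here the application layer dominates: shortening the model or orchestration intervals further would not change how long a confirmed detection can take.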

Frequently Asked Questions

What are agentic KRIs?

Agentic KRIs (key risk indicators) are measurable signals used to monitor whether an AI agent or agentic workflow is behaving within acceptable risk boundaries. They help organisations detect abnormal behaviour, operational failures, or governance breaches before they escalate.

Why does agentic workflow monitoring need to cover multiple layers?

Agentic workflows operate across multiple layers – the model, orchestration logic, and the application environment – and each layer observes different risk signals. Effective monitoring therefore requires cross-layer visibility rather than relying on model-level metrics alone.

Where should agentic KRIs be monitored?

Agentic KRIs should be monitored in the layer where their risk signal naturally appears – whether the model, orchestration layer, or application environment. Placing a metric in the wrong layer can create blind spots, noise, or a false sense of security.

What is a cross-layer KRI?

Some risks propagate across layers of an agentic workflow. A cross-layer KRI combines signals from multiple layers so organisations can detect and diagnose incidents that would otherwise remain invisible within a single-layer monitoring system.

Are platform dashboards enough to monitor an agentic workflow?

Not always. Many platform dashboards focus primarily on model-layer metrics, while many operational risks appear in orchestration or application layers. Effective monitoring therefore often requires integrating signals across multiple tools and environments.