In brief

An AI agent autonomy policy is essential because autonomy is not binary and must be calibrated for each use case.

Organisations adopting agentic workflows should decide their risk appetite for different autonomy levels early. If the decision is delayed, agents end up with either too much or too little autonomy, and either outcome undermines your adoption of agentic AI.

The article highlights key risks, trade-offs, and oversight needs, especially in regulated sectors.

It outlines what a strong policy must include, from objectives and agentic risk appetite to governance, approval, and autonomy monitoring.

It also provides a free agentic risk appetite statement and adoption strategy template built for a risk-averse regulated entity.

Autonomy isn’t either ‘on’ or ‘off’

Autonomous AI agents are on the rise and, if they haven’t already arrived at your company, they’re probably on their way.

However, before rushing in, remember that autonomy isn’t a binary on/off switch. It’s a spectrum you calibrate to your needs. Indeed, many failed AI projects stem from unclear decisions on how much autonomy to delegate.

To help you decide how much control to retain and where the risks change, the model below builds on the five levels of autonomy classified in a recent paper by Feng, McDonald, and Zhang (2025) of the University of Washington.

To link this to the real world, check out this separate article that shows how society has delegated all five levels of autonomy to non-humans for hundreds of years.

| Agent autonomy level | Risk profile | Description |
|---|---|---|
| 1. Assistant | Lowest | User makes all decisions; agent executes tasks when invoked. |
| 2. Collaborator | Low | User and agent share planning, delegation, and execution. Agent reasons within limits. |
| 3. Consultant | Medium | Agent reasons independently but leaves the final say to the user. |
| 4. Copilot | High | Agent acts independently, requiring the user’s approval only in risky or specified cases. |
| 5. Captain | Highest | Fully autonomous; user only monitors via logs and can hit an emergency off-switch. |
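As a minimal sketch, the five levels in the table above could be encoded as an ordered enumeration so that systems can compare them. The names `AutonomyLevel`, `RISK_PROFILE`, and `needs_user_approval` are illustrative assumptions, not part of the cited paper, and the approval rule is a simplified reading of the table's descriptions.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The five autonomy levels, ordered from least to most autonomous."""
    ASSISTANT = 1
    COLLABORATOR = 2
    CONSULTANT = 3
    COPILOT = 4
    CAPTAIN = 5


# Risk profile per level, copied from the table above.
RISK_PROFILE = {
    AutonomyLevel.ASSISTANT: "Lowest",
    AutonomyLevel.COLLABORATOR: "Low",
    AutonomyLevel.CONSULTANT: "Medium",
    AutonomyLevel.COPILOT: "High",
    AutonomyLevel.CAPTAIN: "Highest",
}


def needs_user_approval(level: AutonomyLevel, action_is_risky: bool) -> bool:
    """Simplified assumption: does the user sign off before the agent acts?"""
    if level <= AutonomyLevel.CONSULTANT:
        # Levels 1 to 3: the user decides, shares the decision, or has the final say.
        return True
    if level == AutonomyLevel.COPILOT:
        # Level 4: approval only in risky or specified cases.
        return action_is_risky
    # Level 5: the user only monitors logs and keeps an emergency off-switch.
    return False
```

Using an ordered type means a check such as `level <= AutonomyLevel.CONSULTANT` reads the same way as the table: higher numbers mean more delegated control.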

3 reasons autonomy must be an early design decision

Because autonomy comes in varying degrees, you need to calibrate how much you delegate to each use case. The winners will therefore treat delegating autonomy to an AI agent as a formal risk appetite decision, permitting different levels depending on each agent’s business case. There are three important reasons for this:

- Not every use case can tolerate full autonomy – for example, creative, low-risk tasks may suit high autonomy, while regulated activities require lower autonomy and clear risk management.

- Autonomy’s risk / reward trade-off is not straightforward – greater autonomy increases reward (e.g. efficiency) but also raises the risk of error. If you give away too much control and something goes wrong, the remediation exercise will be inefficient, eroding and potentially neutralising the initial benefits.

- Software agents cannot be sanctioned – if you give them too much autonomy and their actions create a problem, they are not the ones who lose their annual bonus, get fired, or end up in court. Because we cannot sanction an agent, the burden of proof flips from “trust until proven harmful” to “distrust until proven governable.”

Overlooking this early design decision increases the chance that your agentic AI transformation either fails in a costly way, by giving away too much autonomy, or underwhelms, by delegating too little.

Define your AI agent autonomy policy and adoption strategy

To maximise the value of your agentic transformation, we recommend you define a Board-level policy on how much autonomy you will delegate.

This is vital because you will want to avoid a ‘free-for-all’ approach. Without guidance, the easy availability of AI agents may tempt staff to experiment in ways that end up harming the organisation.

In terms of its content, we believe a best practice policy should cover the following areas:

1. Agentic Risk Appetite Statement

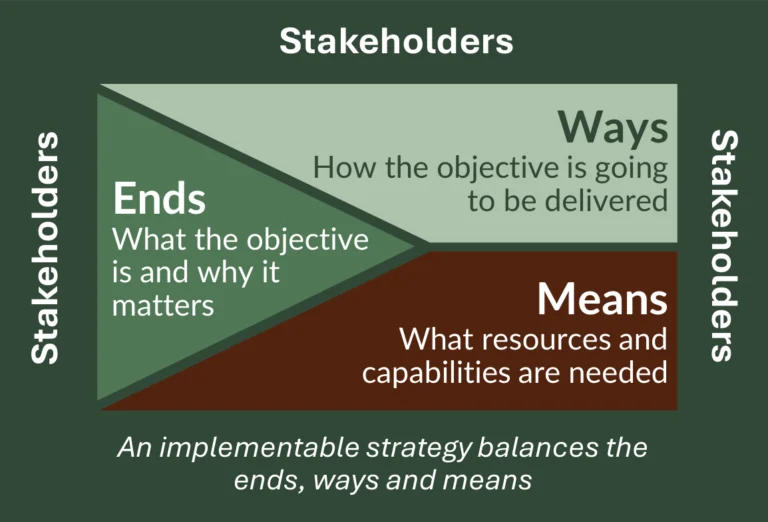

- Overall position on agentic AI – define the company’s objective in deploying agentic AI, why it is important, and its overall position on delegating autonomy to AI agents.

- Permitted range of autonomy across different workflows – outline permitted uses and parts of the company that are in- and out-of-scope for agentic AI. Using the 5 levels of autonomy, express upper and lower limits for the level and nature of autonomy your company will tolerate in each area. To ensure a holistic perspective, break it down by processes, departments, geographies, software, information assets, as well as supplier / partner relationships.

- Risk Category Level Appetite Statements – to help managers apply this strategic risk appetite to specific workflows, you should also express positive and negative appetite statements for each category of agentic risk.

- Agentic transformation – to coordinate and scale your agentic change projects, explain how you will integrate agentic AI into your organisational change management framework.

- Agentic risk management – to mitigate risk in specific agentic workflows, establish an agentic risk management capability and specify its role.

- Responsibilities – clarify staff responsibilities for policy adherence as well as accountabilities if agent outcomes diverge from intent.

- Governance – confirm the governance arrangements for overseeing compliance with the policy.
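To illustrate how a permitted range of autonomy might be expressed per workflow, here is a minimal sketch. The workflow names, the limits, and the helper `is_within_appetite` are hypothetical examples, not recommended values; a real statement would also break the range down by department, geography, and the other dimensions listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutonomyRange:
    """Lower and upper autonomy limits (1 = Assistant ... 5 = Captain)."""
    lower: int
    upper: int

    def permits(self, level: int) -> bool:
        return self.lower <= level <= self.upper


# Hypothetical risk appetite: creative, low-risk work tolerates more autonomy
# than a regulated activity. These entries are assumptions for illustration.
RISK_APPETITE = {
    "marketing_copy_drafting": AutonomyRange(lower=1, upper=4),
    "client_regulatory_reporting": AutonomyRange(lower=1, upper=2),
}


def is_within_appetite(workflow: str, requested_level: int) -> bool:
    """Return True only if the workflow is in scope and the level is permitted."""
    permitted = RISK_APPETITE.get(workflow)
    if permitted is None:
        # Workflows not listed are treated as out of scope for agentic AI.
        return False
    return permitted.permits(requested_level)
```

Denying unlisted workflows by default reflects the “distrust until proven governable” stance: a team has to get a workflow added to the statement before delegating any autonomy to an agent in it.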

2. Agentic AI adoption strategy

Crucially, the policy should conclude with a coherent adoption strategy: a step-by-step guide to how the policy will be implemented, maintained, and continually improved.

To help here, our template includes both general success factors for any strategy as well as specific ones for an agentic transformation.

| Phase | Key steps | Analogy: don’t crash the car! |
|---|---|---|
| 1. Create agentic capability | | Learn the theory of driving. |
| 2. Launch your pilot agentic workflow | | Practise and obtain your driving licence. |
| 3. Scale capability | | Become an experienced driver. |

Agentic Risk Appetite Statement Template

You now have the key elements to draft your AI agent autonomy policy with confidence.

If you would like to leverage our experience further, you are welcome to download our Agentic Risk Appetite Statement and Adoption Strategy template for free. It is built for a risk-averse regulated entity and you can customise it to meet your needs.

If you would like support with this, we can:

- Upskill your team with a practical training workshop.

- Work alongside you to develop your agentic AI risk appetite and adoption strategy.

- Assess the risks of a particular agentic workflow.

FAQs

An AI agent autonomy policy sets clear rules on how much decision-making power AI agents can have, and under what conditions. It prevents a “free-for-all” approach by defining where autonomy is allowed, limited, or prohibited. Without a policy, organisations may discover – too late – that teams set autonomy levels inconsistently, creating safety, compliance, and reputational risks. A policy ensures AI agents are used responsibly, safely, and in line with your organisation’s goals and values, maximising your chances of successful pilots that you will then be able to scale.

If you delegate too much autonomy too early, the organisation may lose visibility, control, and accountability. Remediation becomes costly, and the initial efficiency gains are often eliminated. In the worst cases, you may breach policies, contracts, or industry regulations. A structured approach – supported by human-in-the-loop controls, approval checkpoints, and monitored guardrails – keeps autonomy governable and aligned with your risk appetite.

Regulated industries such as asset management, law, and accounting must take a more cautious, evidence-driven approach. These sectors face stricter expectations around conduct, documentation, and auditability. A risk-based approach to AI agent autonomy is essential, supported by clear human oversight, audit logs, and policies that prove the organisation maintained control. This ensures compliance obligations are met while still benefiting from agent capabilities.

A strong policy should cover:

a. Objectives and risk appetite for using AI agents.

b. Approved use cases, in-scope and out-of-scope workflows.

c. Responsibilities for staff, owners, and teams.

d. The AI agent approval and certification process.

e. Minimum human input and oversight requirements.

f. How the organisation will monitor autonomy boundaries and handle exceptions.

g. AI agent adoption strategy, outlining phases and key steps.

These elements ensure consistency and safe decision-making across the organisation.

Monitoring involves checking whether an AI agent continues to operate within its permitted autonomy level over time. This includes audit trails, feedback loops, escalation paths, and performance testing under real conditions. Regular reviews ensure autonomy hasn’t quietly expanded beyond what was approved. If boundaries are breached, corrective action should be fast and transparent.
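One way to picture such a review is a periodic scan of the audit trail for actions that exceeded the approved level. This is a minimal sketch under stated assumptions: the `AuditEntry` fields, the agent identifiers, and the `find_breaches` helper are hypothetical, and a real audit trail would carry far more context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditEntry:
    """One logged agent action (hypothetical schema)."""
    agent_id: str
    action: str
    level_exercised: int  # autonomy level the agent actually used, 1 to 5


def find_breaches(entries, approved_levels):
    """Return entries where an agent acted above its approved autonomy level.

    Agents with no recorded approval are treated as approved for level 0,
    so every action they take is flagged for escalation.
    """
    breaches = []
    for entry in entries:
        approved = approved_levels.get(entry.agent_id, 0)
        if entry.level_exercised > approved:
            breaches.append(entry)
    return breaches


# Example review over a small, made-up audit trail.
approved = {"invoice-agent": 3}
log = [
    AuditEntry("invoice-agent", "draft payment run", 3),
    AuditEntry("invoice-agent", "release payment without sign-off", 4),
    AuditEntry("unregistered-agent", "send client email", 1),
]
flagged = find_breaches(log, approved)
```

Here `flagged` holds the second and third entries: one agent exceeded its approved level, and the other was never approved at all. Both would go down the escalation path.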

Move gradually. First, use low-autonomy assistant tools that help with tasks but don’t control workflows. Once the organisation is comfortable, trained, and has embedded controls, pilot a narrow agentic workflow with clear success measures. Only consider higher autonomy when you can demonstrate stable performance, oversight maturity, and alignment with your enterprise AI agent adoption strategy. When safety, auditability, and governance are proven, autonomy can increase.

Yes. If you’re looking for a practical, ready-to-use AI agent autonomy policy template, you can download ours for free. It includes the key components you need to set autonomy levels for AI agents, define oversight and approval requirements, and monitor autonomy boundaries over time. You can customise the template to suit your organisation, whether you are just starting your enterprise AI agent adoption strategy or looking to strengthen governance in a regulated environment. Email us if you’d like tailored support adapting the template.