Executive Summary

Evidence is growing that a pre-deployment agentic risk assessment reduces incidents, rework, and build cycles by embedding risk controls into workflow design – creating a stronger organisation that is ready for future deployments.

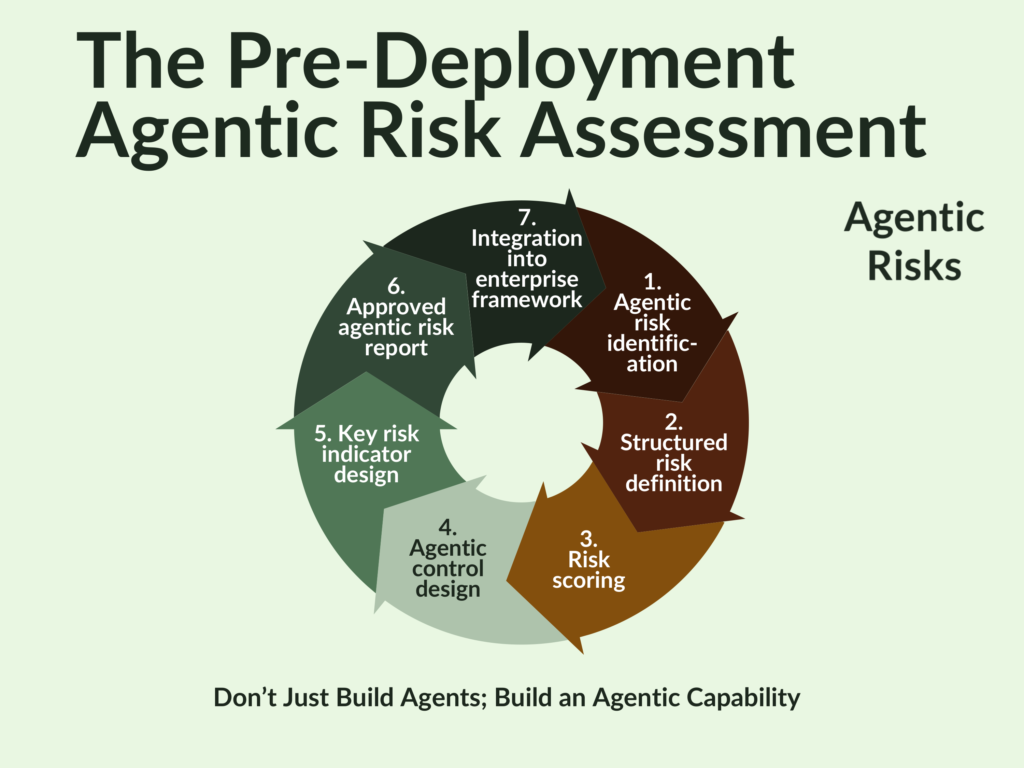

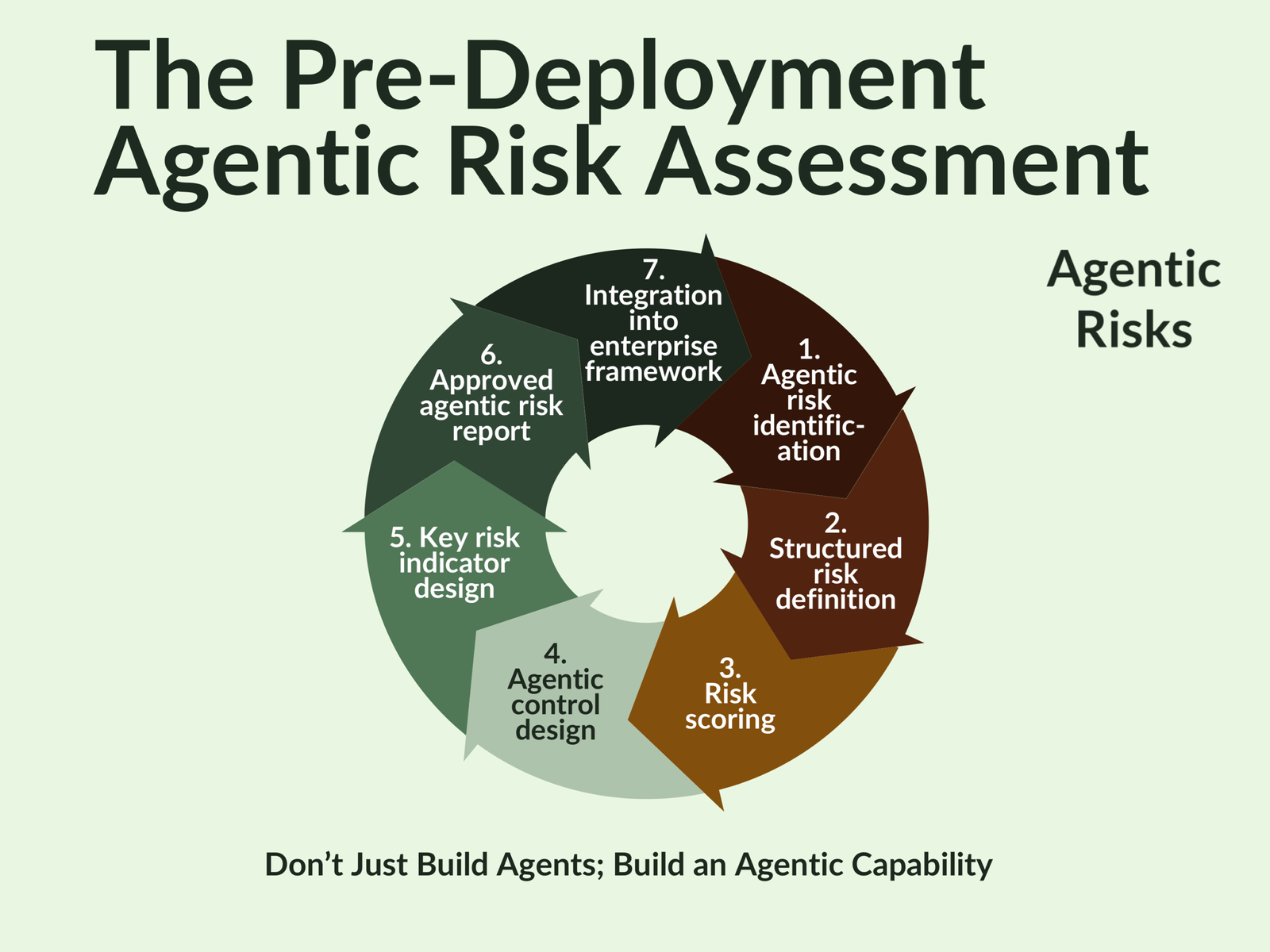

This article outlines the 7-step process from agentic risk identification to an approved risk report that integrates into your enterprise framework.

To finish, we note that, at the time of writing, we are incorporating this functionality into Gerido© – Agentic Risks’ in-house risk management tool. Subscribe to our newsletter if you would like to receive further updates on this topic.

If you are already running agentic workflows, skip straight to our post-deployment agentic risk assessment.

Agentic Risk Assessments Create Structural Advantages

Evidence is growing that embedding risk controls in the design phase of an agentic workflow yields a smoother and cheaper transformation by avoiding post-deployment incidents and re-engineering – ultimately shortening build cycles.

Pre-deployment agentic risk assessments for autonomous AI agents support this by combining corporate knowledge (e.g., agentic authority models, control sets, and structured risk profiles) with practical experience (e.g., escalation paths, human approval gates, and kill switches).

And, should an issue occur, evidence of preventive risk management will give you a defendable answer to the regulator’s question, “How did you decide what controls were needed?”

The Costs of Skipping This Step

The EU AI Act requires a risk management system for high-risk AI systems, which can include autonomous and agentic AI systems. But what happens when insufficient risk controls unintentionally create a high-risk agent in a workflow that had been classified as ‘low risk’?

This can happen all too easily if isolated pockets of improvisation limit the development of a broad agentic capability. When this occurs, you should expect repeated firefighting of preventable incidents, costly remediations, or a growing reliance on workarounds.

All too often, this results from a combination of internal factors that erode confidence and make future approvals harder to obtain:

- Weak cross-functional coordination can discourage a business owner and technology lead from requesting a risk assessment.

- The novelty of delegating autonomy to non-human systems may leave an organisation unaware of the new risks it has introduced through agentic AI deployments, e.g. excessive agency, prompt injection, and over-reliance. Yet the pitfalls are known and already available in a format you can import into your risk taxonomy.

- A culture that prioritises fast, visible progress exposes itself to threats by cutting corners. Skipping the risk assessment will not make the risks disappear; they will just materialise later as unmitigated issues – in testing, if you’re lucky; in production, if you’re not.

Key Success Criteria

As we stand near the start of the agentic era, it is worth defining the characteristics of an effective pre-deployment agentic risk assessment that will avoid these pitfalls.

Specifically, we believe it should:

- Provide input requirements for platform selection, giving you confidence that your chosen platform will be able to implement the controls you need in a machine-enforceable way.

- Detail the risk controls the engineer should build.

- Inform the selection of training data, so risk controls can be embedded into an agent’s memory.

- Give structure to testing – enabling the systematic evaluation of controls, as well as the key risk indicator thresholds you will need for effective monitoring.

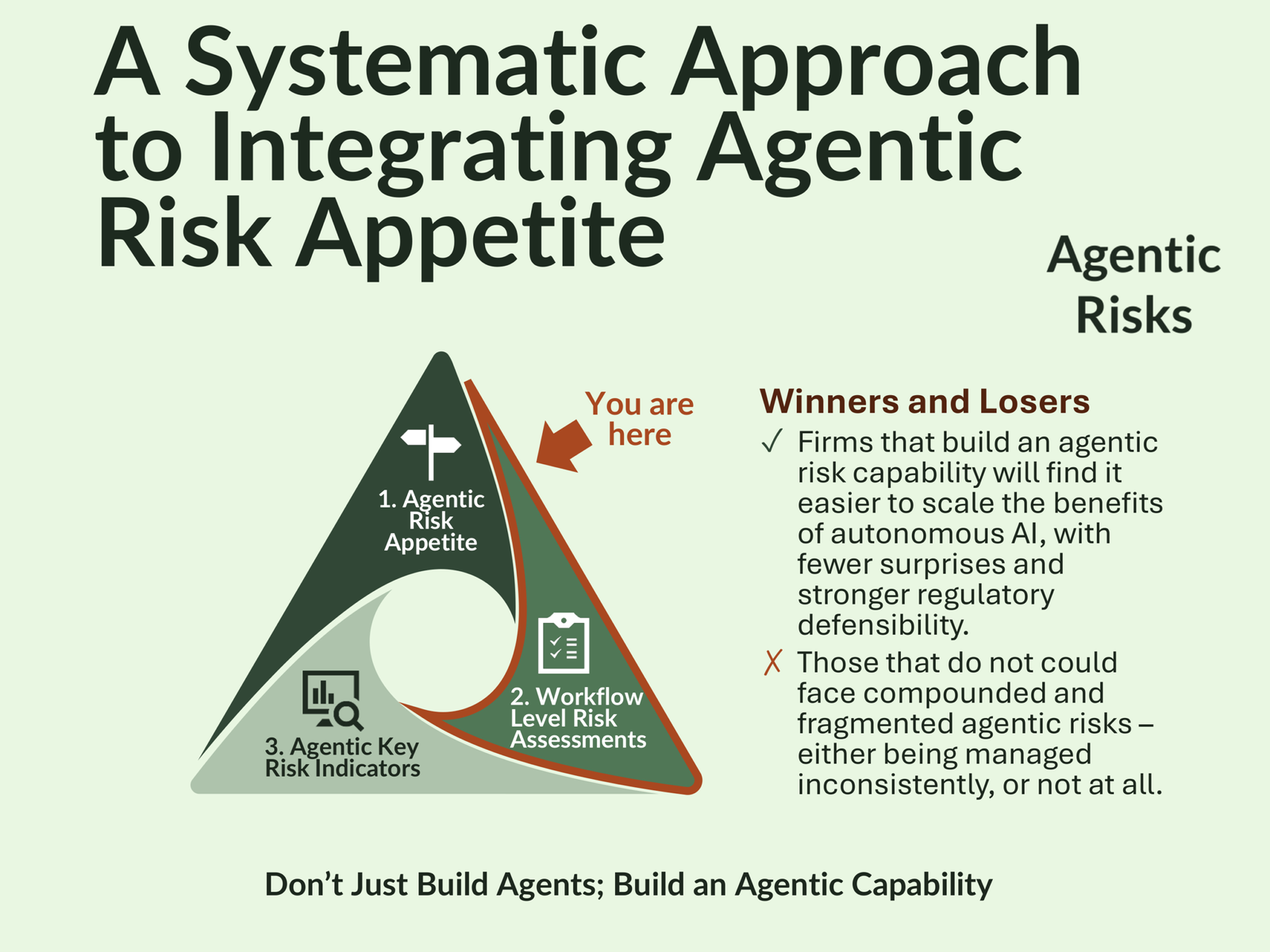

- Include a formal decision that the planned agentic workflow conforms with the organisation’s agentic risk appetite statement.

The Pre-Deployment Agentic Risk Assessment

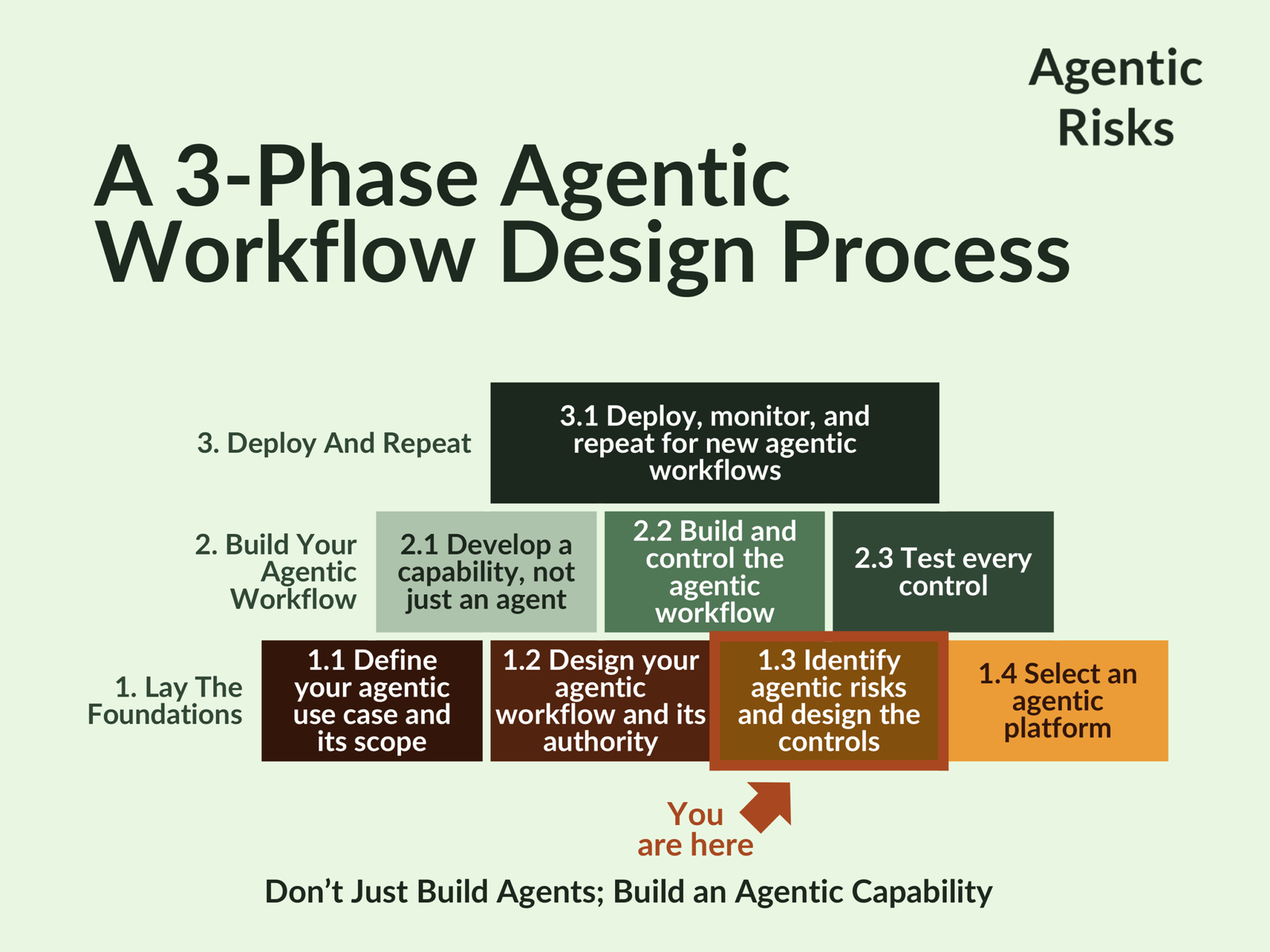

Putting these criteria into practice, the pre-deployment agentic risk assessment constitutes Step 1.3 of Agentic Risks’ agentic workflow design process. Its purpose is to apply your organisation’s agentic risk appetite statement at the workflow level, resulting in the risk-managed delegation of autonomy.

We follow a 7-step process that typically takes two to three structured workshops, including preparation and follow-up actions:

- Agentic risk identification – to prevent ambiguity in the goal definition, identify the risks, and ensure consistency with the strategic risk appetite, the risk manager should walk the agent’s decision pathway with the business and technology representatives. Consider risks from a broad set of sources:

  - The agent itself, which comprises three elements:

    - Components (LLM, tools, instructions, memory, orchestration)

    - Design (architecture, access controls, monitoring, fail-safe behaviour)

    - Capabilities (cognitive, interaction, operational abilities)

    Focus on: the desirable and undesirable actions the agent could take; the data dependencies you should manage; and how an agent could ‘game’ the system.

  - Organisational capability – use the enterprise-wide agentic AI risks and control framework of the MIT Risk Repository to ensure an end-to-end review.

  - Context – deep-dive into the format, fields, and types of information the agent will use as its working memory (state schema) to avoid drift. How will it log decisions, tool calls, and data sources? Other risk factors to consider: model complexity (number of tools and steps), transaction volume, depth of training data required, nature and maturity of the knowledge source, and human oversight requirements.

  - External constraints and threats

    - Evaluate compliance requirements – new ones like the EU AI Act, as well as existing ones like regulatory rulebooks and international standards such as those for information security.

    - Map the attack surface to identify every way the agent could come under attack, e.g. inputs, internal reasoning, tool use and execution, memory and learning, and human override and escalation paths.

- Structured risk definition

  - Agent element – component, design, or capability.

  - Failure mode, e.g. agent failure, external manipulation, or tool malfunction.

  - Resulting hazard, e.g. data breach, misinformation, unsafe actions.

- Risk scoring – determine the inherent risk rating using your standard risk matrix. Identify existing controls that apply and calculate residual risk.

- Agentic control design

  - Design additional controls to bring residual risk within appetite, leveraging the risk and control framework again to ensure best practice control strategies.

  - Assign control ownership and define the pre-deployment confirmatory and adversarial tests needed (Step 2.3 in the broader design process).

  - Crucial feasibility checks:

    - Confirm sufficient training data exists for each risk control.

    - Confirm the engineer can code controls into machine-enforceable AI controls in Step 2.2 (“build”). If not, consider changing objectives or abandoning the initiative.

- Key risk indicator design

  - Define and justify measures of control effectiveness and ongoing agentic behaviours to monitor.

  - Define monitoring frequency.

  - Assign owners, set a tolerance threshold (early warning) and an appetite limit (hard boundary), and confirm response actions if crossed.

- Approved agentic risk report

  - Document results in an Agentic Workflow Risk Assessment.

  - Submit for review by business and technology representatives and to the CRO for sign-off.

  - Present to the AI Governance Committee for review.

- Integration into enterprise framework – map each agentic risk to standard enterprise risk categories (e.g., operational, strategic, compliance) to integrate with existing controls and audits.
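To make the structured risk definition, risk scoring, and key risk indicator steps above more concrete, here is a minimal Python sketch of how one risk record and one indicator might be represented. It is an illustration under stated assumptions, not how Gerido© or any particular framework works: the class and field names, the 1 to 5 likelihood and impact scales, the multiplicative residual risk calculation, the rating cut-offs, and the example indicator are all hypothetical and should be replaced by your own standard risk matrix and appetite statement.

```python
from dataclasses import dataclass
from enum import Enum


class AgentElement(Enum):
    """The three agent elements named in the structured risk definition."""
    COMPONENT = "component"
    DESIGN = "design"
    CAPABILITY = "capability"


@dataclass
class AgenticRisk:
    """One structured risk: agent element, failure mode, resulting hazard."""
    element: AgentElement
    failure_mode: str
    hazard: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int  # assumed scale: 1 (negligible) to 5 (severe)
    control_effectiveness: float = 0.0  # assumed: 0.0 (none) to 1.0 (full)

    def inherent_score(self) -> int:
        """Score before controls, taken here as likelihood x impact."""
        return self.likelihood * self.impact

    def residual_score(self) -> float:
        """Score after existing controls (assumed multiplicative reduction)."""
        return self.inherent_score() * (1.0 - self.control_effectiveness)


def rating(score: float) -> str:
    """Map a score to a band; these cut-offs are illustrative only."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


@dataclass
class KeyRiskIndicator:
    """An indicator with an early-warning threshold and a hard limit.

    Assumes that a higher observed value is worse.
    """
    name: str
    owner: str
    tolerance_threshold: float  # early warning
    appetite_limit: float  # hard boundary

    def status(self, observed: float) -> str:
        if observed >= self.appetite_limit:
            return "appetite limit breached"
        if observed >= self.tolerance_threshold:
            return "tolerance threshold crossed"
        return "within tolerance"


# Hypothetical example values, for illustration only.
risk = AgenticRisk(
    element=AgentElement.CAPABILITY,
    failure_mode="external manipulation",
    hazard="data breach",
    likelihood=4,
    impact=5,
    control_effectiveness=0.5,
)
kri = KeyRiskIndicator(
    name="blocked tool calls per 1,000 runs",
    owner="workflow business owner",
    tolerance_threshold=2.0,
    appetite_limit=5.0,
)

print(rating(risk.inherent_score()), rating(risk.residual_score()))
print(kri.status(3.0))
```

With these example values the inherent rating is "high" and the residual rating is "medium", so additional controls would still need to be designed if the appetite for this risk were "low". Keeping the tolerance threshold and the appetite limit as separate fields mirrors the distinction between an early warning and a hard boundary, each of which would map to its own agreed response action.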

Engage Us to Support Your Agentic Risk Assessments

To achieve this, the discipline of risk management needs to evolve, and the high attendance at our agentic risk management webinars for the Institute of Risk Management demonstrates encouraging engagement.

As well as knowledge, though, risk managers need tools. So, we have also built Gerido© – a purpose-built application that guides us through structured, consistent, and audit-ready workflow-level risk assessments.

At the time of writing, Gerido© is operational for POST-deployment assessments – helping our clients identify and respond to risk flags in their live agentic workflows.

In the coming weeks and months, we will upgrade Gerido© to help our expert risk managers navigate:

- PRE-deployment agentic risk identification, definition, and scoring.

- Control strategy and key risk indicator design.

- Independent workflow-level risk reporting.

Subscribe to our newsletter if you would like to receive further updates on this topic, or book a meeting with us if you would like to discuss your needs with our experts in data and AI strategy, agentic engineering, and agentic risk management.

FAQs

What is a pre-deployment agentic risk assessment?

A pre-deployment agentic risk assessment is a structured evaluation performed before an autonomous AI agent is built or released. It identifies agentic risks, scores inherent and residual risk, and designs machine-enforceable AI controls aligned to the organisation’s agentic risk appetite. The assessment also confirms feasibility: that controls can be implemented, tested, and monitored in practice. The output is a documented agentic workflow risk assessment that supports internal approval and governance.

Why is a pre-deployment agentic risk assessment important?

A pre-deployment agentic risk assessment prevents low-risk use cases from unintentionally becoming high-risk agents. It embeds risk controls into design, reducing post-deployment incidents, rework, and regulatory exposure. It also provides a defendable answer to how controls were selected if challenged by auditors or regulators. For organisations adopting agentic AI at scale, it is a foundational element of agentic AI risk management.

When should a pre-deployment agentic risk assessment be performed?

A pre-deployment agentic risk assessment should be performed once a candidate agentic workflow is defined but before a platform is confirmed and build begins. It should be refreshed if objectives, tools, data sources, or autonomy levels materially change. High-impact or customer-facing agents may warrant multiple iterations during design. This timing ensures risks are addressed while changes are still cheap.

Who should be involved, and how is the assessment delivered?

The assessment should involve business owners, technology leads, and risk management, with input from compliance and security where relevant. In practice, it is typically delivered through two to three structured workshops supported by preparation and follow-up actions. Risks are identified, scored, and mapped to controls using an enterprise agentic risk and control framework. The resulting autonomous AI agent risk assessment is submitted for business, technology, and CRO approval.

How does it differ from a conventional AI or software risk assessment?

A pre-deployment agentic risk assessment focuses on autonomy, goal-directed behaviour, and tool-initiated actions rather than static models or systems. It examines agent components, design, and capabilities, not just data and algorithms. It also requires machine-enforceable AI controls and continuous behavioural monitoring. This makes it purpose-built for agentic workflows rather than conventional software or analytics.