Table of Contents

Executive Summary

This article is for experienced risk professionals and explains why agentic risk management is now essential for retaining control of agentic AI workflows.

Traditional risk management assumes humans design, execute, and oversee systems end-to-end, but agentic AI breaks that assumption by delegating execution to autonomous systems. This development fundamentally changes how risk emerges, how controls fail, and where accountability must sit.

To mitigate risk in an agentic workflow, therefore, risk management must extend its role beyond post-hoc monitoring into the design phase, monitor continuously rather than periodically, and be ready to address new risks arising from emergent behaviours.

Agentic AI’s Challenges to The Risk Management Process

Risk management is a ‘look-before-you-leap’ discipline aimed at mitigating uncertainty. Most frameworks converge on the same basic cycle: define objectives, identify risks, prioritise them, mitigate them with controls, and monitor those controls over time.

This model works well when humans control a workflow end-to-end: they design it, execute it using predictable technology, and monitor its performance.

Agentic workflows break this assumption by delegating execution to autonomous systems that decide, plan, act, and learn at machine speed without continuous human direction. This development creates two compounding challenges:

- Agentic execution becomes inherently less predictable, creating potential for ‘emergent behaviour’ as an agent learns new ways to achieve its goal. For example, it might start bypassing controls that we had assumed but never enforced.

- A risk manager’s control becomes concentrated into the design and monitoring phases, creating the need for risk controls to be an integral part of agent design and for continuous monitoring to identify emergent risks.

Agentic risk management, therefore, is the discipline of identifying, constraining, testing, and continuously monitoring the risks introduced when humans delegate execution to autonomous AI agents rather than control it directly.

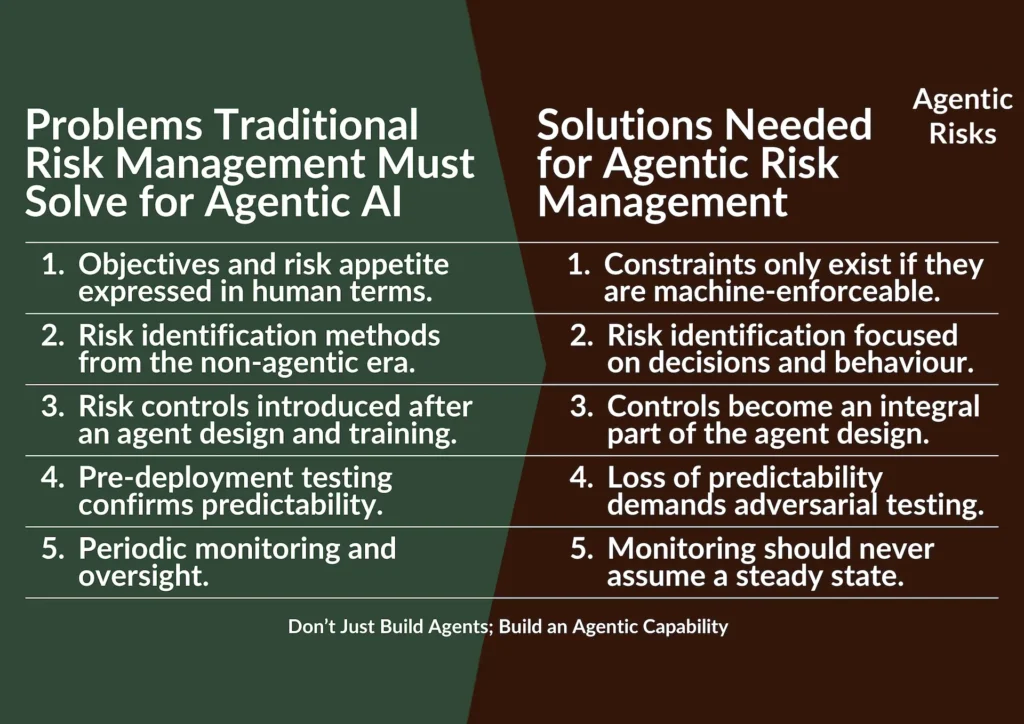

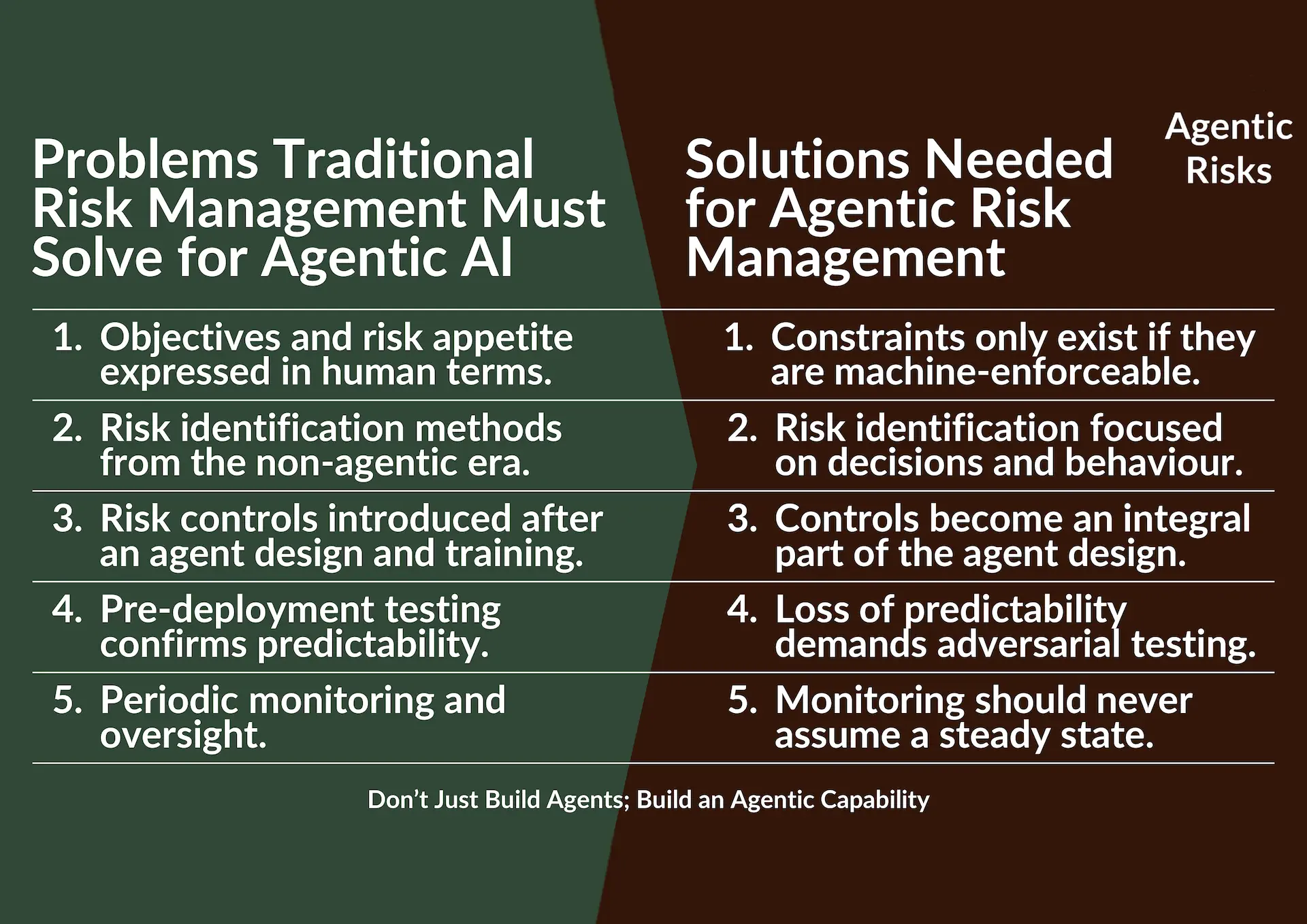

Five Problems Traditional Risk Management Must Solve for Agentic AI

An assessment of the impact these challenges have on traditional practices reveals five problems that risk management for agentic AI must solve to remain effective:

Problem 1: Objectives and risk appetite expressed in human terms may be meaningless to an autonomous agent unless translated into machine-enforceable constraints.

Problem 2: Risk identification methods from the non-agentic era, e.g. lists of common risks for a particular system, company-specific brainstorming, and last year’s risk register, will be either less effective or redundant.

Problem 3: Risk controls introduced after agent design and training may be costly and too late, challenging the practice of engaging risk managers in the second half of a project to give an opinion on what has been designed.

Problem 4: Pre-deployment testing confirms predictability – testing a traditional system involves confirming it meets requirements. But a positively tested autonomous system could still be co-opted by a malicious actor or could start succeeding in the wrong way, demonstrating how confirmatory testing alone will be insufficient.

Problem 5: Periodic monitoring and oversight – lastly, during an agent’s operational phase, emergent behaviour can change its risk profile, creating new and uncontrolled risks. However, the breaks inherent in a periodic monitoring system would delay their identification, during which time an autonomous agent could rapidly compound a problem.

Agentic Risk Management Must Extend into the Design Phase of Agentic AI Systems

To solve these problems, risk management must evolve in five ways that may initially resemble traditional practices done earlier or more rigorously.

But the difference is more important than that: agentic risk management must also be ready for systems that actively decide how to pursue their objectives, adapt their behaviour, and exploit gaps in design or oversight.

In response, risk management must shift from static assurance of a steady state to continuous oversight of autonomous decision-making at machine speed.

Solution 1: Constraints only exist if it they are machine-enforceable

Agent designers should translate business intent into ranked objectives and risk appetite into machine-enforceable constraints e.g. trade-off rules, explicit prohibitions, and thresholds.

Risk managers should evaluate the strength of objectives, incentives, and controls, and ensure they map to code-level constraints, treating ambiguity itself as a risk. Where ‘known unknowns’ dominate, they should ensure that abandoning an autonomy project remains an explicit and respectable outcome.

Delegating autonomy should therefore be treated as a formal risk appetite decision, not a technical implementation detail. The question is not whether an agent is well designed, but whether the organisation is prepared to accept the consequences of independent action within defined boundaries. Where this cannot be articulated clearly, the appropriate risk response may be to limit or decline autonomy.

This translation already exists in some enterprise platforms. For example, agent platforms increasingly allow administrators to enforce tool-level permissions, data-access constraints, spending limits, environment separation (development vs production), and mandatory approval gates. These are executable constraints that determine what an agent can and cannot do, regardless of its learned behaviour.

Solution 2: Risk identification should focus on decisions and behaviour

In the design phase, engineers and risk professionals should walk the agent’s potential decision pathway together to assess the risks it would raise. Take care to assess:

- The complexity and impact of the decision rights being delegated.

- The specificity, completeness, and consistency of the goal definition.

- The ability to attribute accountability for an issue.

- The potential for risk cascades.

- How an agent might ‘game’ the system if given those objectives.

Lastly, unmanaged agent replication can create latent risk even if the original agent appears well designed. Therefore, risk identification should also extend beyond a single agent instance to its lifecycle: how many versions may exist, who can modify or republish it, how it is shared, and how retired agents are decommissioned.

Solution 3: Controls become an integral part of the agent design, not additional safeguards

To avoid the inefficiency and potential impossibility of adding controls later, risk managers should ensure controls are embedded into an agent’s design and enforced at machine speed, forming the core of effective AI agent controls.

Examples include action limits, tool-use permissions, spend or time budgets, mandatory human checkpoints before irreversible actions, confidence thresholds that trigger halts, and rollback mechanisms.

As a result, beyond traditional likelihood and impact assessments, risk reviews should ask: how far could this go before a human notices and intervenes? This requires explicit consideration of escalation speed, detectability via early warning signals, reversibility, and potential blast radius if things go wrong.

Your situation might also warrant using a second, more restricted agent to watch the first and be ready to intervene or simply an item of ‘old-fashioned code’ that cannot be argued with.

Solution 4: Loss of predictability demands adversarial testing

In addition to confirmatory testing, agentic risk management should ask how an agent fails under pressure, behaves when incentives conflict, succeeds in the wrong way, or responds to malicious manipulation.

Agentic testing should therefore confirm not only that an agent works, but what happens when it is deliberately stressed. Disciplines such as red-teaming, adversarial prompting, autonomy boundary testing, incentive manipulation, and multi-agent testing become foundational.

From a regulatory and audit perspective, this also evidences due care: the ability to show that failure modes were actively explored will increasingly matter if you need to explain an incident after the fact.

Solution 5: Monitoring should never assume a steady state

Once an agent is operating autonomously, its risk profile can change faster than any human review cycle. Therefore, risk monitoring for AI agents should be continuous where autonomy, speed, or impact exceed human reaction times, and at least near-real-time in all other cases.

It should focus on the direction and speed of drift and link directly to ongoing risk identification and control tightening. As a result, continuous monitoring is not a replacement for staged governance, but the only way to make it effective under conditions of autonomy.

Specifically, oversight should ensure risk managers can address new risks arising from emergent behaviours. For example, it might include visuals like dynamic traceability graphs that show tool calls, decisions, and data sources used. But it should also cover an agent’s reasoning as well as leading behavioural indicators, such as:

- Boundary-testing behaviour.

- Rising tool use.

- Shrinking human intervention rates.

- Deviations from expected decision paths.

- Unusually confident actions.

Risk choice: does your situation merit continuous monitoring of an agent’s every move, or would a sampling strategy suffice?

Finally, agentic risk is not purely behavioural or technical. Consumption-based pricing, automated scaling, and delegated purchasing authority introduce incentive risks. Cost and usage analytics should therefore be treated as first-class risk signals, not merely financial controls.

Illustrative Example Using a Major Platform

In practice, end users, semi-technical makers, and professional developers can all create agents, each using different tools, permissions, and assumptions.

This fragmented accountability allows autonomy to accumulate without a single point of assessment.

However, accountability for whether an autonomous agent complies with the firm’s risk appetite rests with senior risk owners who should explicitly approve it in the same way they would any other material business model change.

Consequently, we encourage risk managers to familiarise themselves with their agentic platform’s controls so they can recognise when controls are missing and deliberately mitigate agentic risk.

The following table shows how each solution maps directly to controls already available in a common enterprise platform.

(horizontal scroll on some tablets)

(horizontal scroll on mobile)

| Solutions needed for agentic risk management | Illustrative example: how Microsoft already supports agentic risk management | Primary Microsoft control | Why this is important |

|---|---|---|---|

| 1. Constraints only exist if it they are machine-enforceable | Environment separation (dev / test / prod), user and role-based access controls, tool permissions, action / connector availability, approval gates. | Tool controls | Objectives and authorities only matter if they are machine enforceable. |

| 2. Risk identification should focus on decisions and behaviour | Agent inventory, versioning, sharing controls, lifecycle management, usage reporting. | Agent management | Decision risk cannot be managed if agents are invisible or proliferating. |

| 3. Controls become an integral part of the agent design | Tool permissions, knowledge source scoping, data loss prevention policies, sensitivity labels, connector classification (business / non-business / blocked). | Tool controls and Content controls | Post-hoc controls may fail to identify an issue early enough. |

| 4. Loss of predictability demands adversarial testing | Audit logs, prompt / response review, anomaly detection, security information and event management integration, e.g. Sentinel. | Agent management and Security monitoring | Observability and logging only become risk controls when used actively to probe failure modes. |

| 5. Monitoring should never assume a steady state | Real- or near real-time analytics and alerting of performance, behavioural drift, and cost and usage. | Agent management | Periodic review becomes insufficient once agents adapt faster than human governance cycles. |

Conclusion: an Agentic Evolution for Risk Managers

Taken together, these changes define agentic risk management as a distinct evolution for the broader risk management profession.

As a result, the agentic risk manager’s role extends beyond post-hoc review into design, testing, and continuous oversight of autonomous behaviour.

This does not require risk professionals to become platform engineers, but it does require them to understand how controls operate in production and to work closely with IT to ensure governance reflects real agent behaviour.

Webinars and Training Sessions

At Agentic Risks, we use our proprietary agentic AI controls framework, governance tool, and real-world experience within a regulated environment to help firms de-risk their agentic transformations.

In the first half of 2026, several industry bodies will sponsor webinars by Agentic Risks on the ‘Fundamentals of Agentic Risk Management’:

- If you are a member of the Institute of Risk Management, register here to join us between 12:30 and 13:30 (UK) on Thursday 22 January.

- If you are a member of the Investment Association and want to join their webinar in April (tbc), subscribe to our newsletter for updates about this upcoming event.

- If you would like us to provide a customised training session, book a meeting with us to discuss your needs.

FAQs

Agentic AI systems can change behaviour faster than human governance cycles due to learning and optimisation. Periodic reviews are therefore insufficient once autonomy or impact exceeds human reaction times. Continuous or near-real-time monitoring is required to detect behavioural drift, boundary testing, and emerging risks before they compound.

Accountability for agentic AI risk should sit with senior risk owners. While engineers design agents and IT operates platforms, responsibility for whether autonomous decision-making fits within the organisation’s risk appetite cannot be delegated. Therefore, approving autonomy should be a formal risk appetite decision, not just a technical implementation choice.

Machine-enforceable risk appetite means expressing risk limits as executable constraints that an AI agent cannot override. Examples include tool permissions, spending / action limits, and approval gates. These controls define behaviour at the code level, not just in policy statements.

No. Agentic risk management is not a replacement for existing risk frameworks. It is an evolution that adapts established practices to environments where execution is autonomous and adaptive. Most required controls already exist; what changes is how deliberately and continuously they are applied to agent behaviour.