In brief

AI agent ethics cannot rely on human-style moral tests: AI agents feel no shame, fear no consequences, and bear no responsibility, so ethical protection must come from external controls.

Society already delegates autonomy to non-humans such as working dogs, but only with strict training, clear boundaries, accountability, and controlled contexts – showing that autonomy should be earned, limited, and supervised.

To deploy AI agents ethically, organisations should grant autonomy gradually and with robust controls that define purpose, restrict risk, ensure supervision, and maintain clear accountability.

Why Traditional Ethics Tests Fail on AI Agents

We can now delegate decisions, actions, and tasks to AI agents that act on our behalf without asking for permission at every step. This raises an obvious question: how should we handle the ethics of autonomous AI agents?

Traditional ethical tests rely on a human’s desire to avoid embarrassment, punishment, or social shame. Indeed, many business schools teach ‘gut-check’ morality tests to help individuals make considered decisions before acting. For example, would you still go ahead if:

- You had to explain your next step to your family?

- You saw your action on the front page of a newspaper?

- Everyone else did the same thing too?

These work on people because we fear regret, judgment, and consequences if something goes wrong, e.g. losing a bonus, a job, or a reputation.

AI agents, however, are not human. They have no internal conscience and cannot be shamed, blamed, or sanctioned, so these tests have no hold on them.

This is the heart of the debate on AI agent ethics, and it explains why ethical protection for an agentic workflow must depend on verifiable external controls: the alternative, a conscience, does not exist.

How Society Already Delegates Autonomy to Non-Humans

To understand how to delegate autonomy to AI agents ethically, it helps to look at where society already trusts non-humans to act on our behalf.

Working dogs give us a useful real-world precedent. They carry out everyday tasks, often make decisions without immediate supervision, and rely on a system of controls rather than ‘morality’.

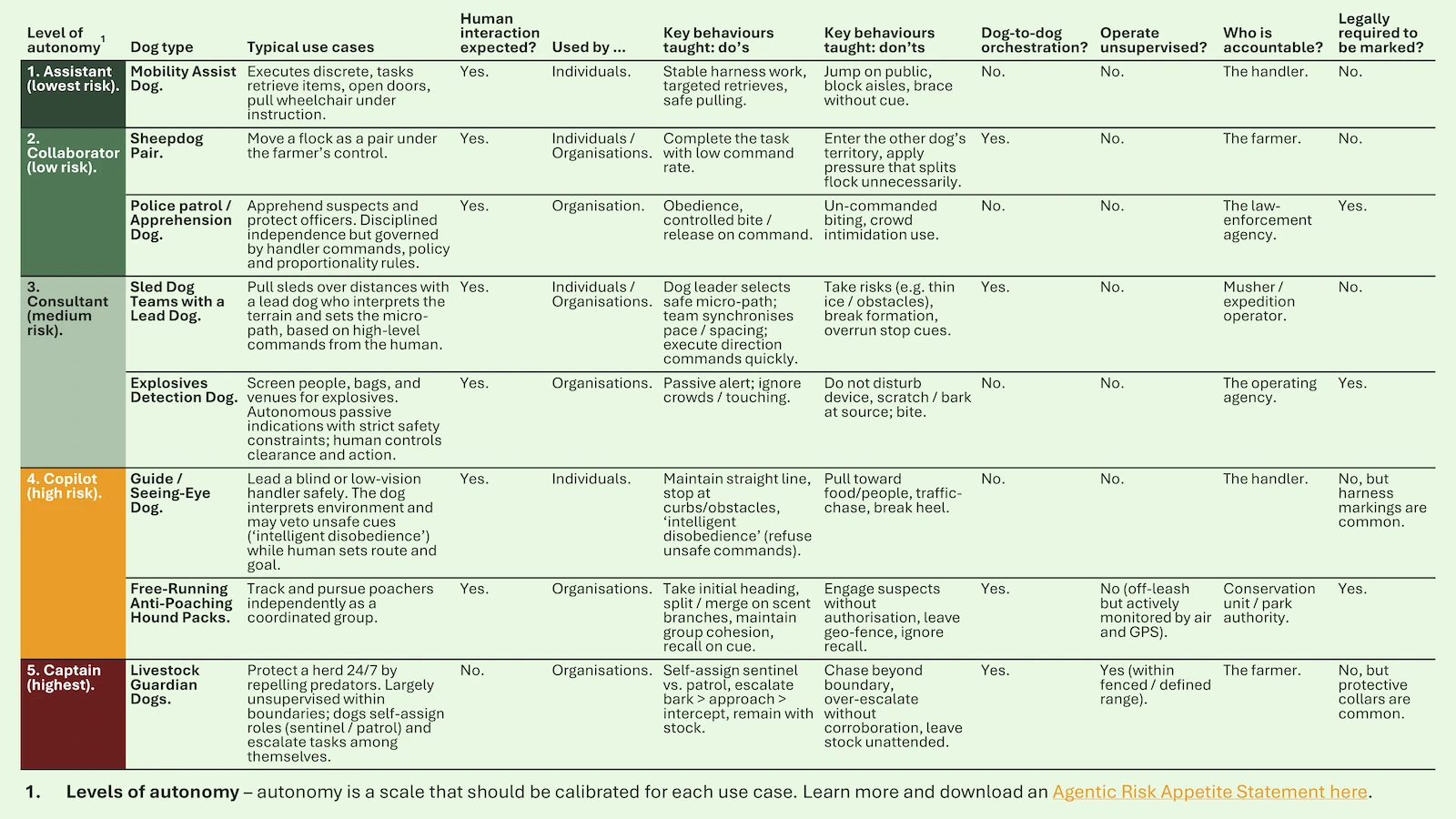

After reviewing many types of working dog, we want to highlight these eight examples that cover all five levels of autonomy (from on-leash to fully unsupervised). They even include ‘dog-to-dog’ coordination, which can act as a useful proxy for multi-agent orchestration.

Five Lessons We Can Learn from Working Dogs

- We already delegate autonomy, but we always control it – society delegates all five levels of autonomy to dogs but never grants full unsupervised autonomy around the public. Maximum freedom only occurs in remote settings where the risk to people is low.

- Training and accountability are non-negotiable – every working dog and its handler receives role-specific training on what to do and what not to do. If something goes wrong, accountability sits with the handler, not the dog.

- Clear identification signals responsibility – service dogs are often identifiable by a vest or harness, which makes their authority and accountability visible. The exceptions are private settings and remote environments with minimal human contact; in the latter, dogs may instead wear protective collars to safeguard them against external threats (see the final image).

- More autonomy in a higher-risk context requires greater control – the more responsibility a dog has, the more training and conditioning it must complete. The best examples of this are guide dogs for blind people, which take around two years to qualify.

- Coordinated autonomy is only allowed in low-risk spaces – dogs can ‘orchestrate’ their own work – from sheepdog pairs to sled teams and packs of livestock guardian dogs – but these activities happen away from populated areas, which limits harm from unexpected interactions with unrelated humans. This remote context mitigates the risk inherent in lower levels of supervision.

Relevance to AI Agent Ethics

Delegating action to a non-human is possible, but the burden shifts from “trust until proven harmful” to “don’t trust until governance is proven.” With dogs, society has spent centuries developing controls, including selective breeding, to make autonomy safe. Very few of these controls rely on the dog’s own judgement or on the good nature of third parties.

It is the same for AI agent ethics. We should not expect agents to behave ‘morally’. Ethical deployment will depend on controls the agent understands (clear tasks, training signals, boundaries, and recall commands), controls beyond its awareness (testing, certification, accountability, revocation, and termination), and training for handlers to ensure their competence.

If we learn one underlying lesson from this real-world comparison, it is that earning autonomy is slow, controlled, and context dependent. We do not grant it easily.
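For readers who build agents, the principle that autonomy is earned slowly and capped by context can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not part of any framework mentioned here: the five level names, the `CONTEXT_CAP` ceilings, and the threshold of 50 consecutive safe runs are all hypothetical choices, loosely mirroring the on-leash to fully unsupervised range described above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical five-level scale, from on-leash to fully unsupervised."""
    ON_LEASH = 1        # a human approves every action first
    SUPERVISED = 2      # a human watches live and can intervene
    SPOT_CHECKED = 3    # a human reviews a sample of actions afterwards
    REMOTE = 4          # acts alone and reports back periodically
    UNSUPERVISED = 5    # acts alone with no routine review


# Hypothetical ceilings: the riskier the context, the lower the cap.
CONTEXT_CAP = {
    "public_facing": Autonomy.SUPERVISED,
    "internal": Autonomy.SPOT_CHECKED,
    "sandbox": Autonomy.UNSUPERVISED,
}

SAFE_RUNS_TO_PROMOTE = 50  # assumed threshold, not a recommended value


@dataclass
class AutonomyLadder:
    context: str
    level: Autonomy = Autonomy.ON_LEASH
    safe_streak: int = 0

    def record_run(self, safe: bool) -> Autonomy:
        """Update the level after one monitored run and return the new level."""
        if not safe:
            # One unsafe run revokes all earned autonomy.
            self.level = Autonomy.ON_LEASH
            self.safe_streak = 0
            return self.level
        self.safe_streak += 1
        cap = CONTEXT_CAP[self.context]
        if self.safe_streak >= SAFE_RUNS_TO_PROMOTE and self.level < cap:
            # Promote one level only; the next level must be earned afresh.
            self.level = Autonomy(self.level + 1)
            self.safe_streak = 0
        return self.level


# Usage: 100 safe runs in an internal workflow earn two promotions.
ladder = AutonomyLadder(context="internal")
for _ in range(100):
    ladder.record_run(safe=True)
print(ladder.level.name)                   # SPOT_CHECKED
print(ladder.record_run(safe=False).name)  # ON_LEASH
```

The design choice worth noting is the asymmetry: promotion happens one level at a time and must be re-earned, while a single unsafe run drops the agent straight back to the lowest level, much as an unsafe dog loses its certification.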

The Controls Needed for Ethical AI Agent Deployment

The Enterprise-Wide Agentic AI Risk Control Framework classifies 30 agentic risks into five categories that span the agent lifecycle.

It is important because it lets you perform tasks that are vital to keeping your company safe and compliant:

- Comprehensive agentic AI risk identification.

- Construction of proportionate, multi-disciplinary risk treatment plans.

Many controls are needed for non-ethical reasons, such as cost management. However, our analysis of autonomy in working dogs indicates that the following eight controls are needed for ethical reasons:


| Control Theme | How Society Manages Autonomy in Working Dogs | How We Should Manage Autonomy in AI Agents | Why It Matters for AI Agent Ethics |
|---|---|---|---|
| 1. Purpose and Role | Dogs are assigned specific jobs (e.g. guide, detection, herding) with clear expectations. | Define the agent’s purpose, scope, allowed actions, and boundaries before deployment. | Prevents ‘mission creep’ and unsafe behavioural drift beyond the agent’s intended use case. |
| 2. Non-Negotiable Boundaries | Dogs are conditioned to respect hard limits (no biting, no chasing children, no leaving the task). | Hard-coded prohibited actions, red lines, and non-override safety rules. | AI agent ethics cannot rely on judgment, so some rules must be absolute. |
| 3. Training and Readiness | Dogs complete rigorous, role-specific training before gaining freedom. Higher autonomy + riskier context = longer training. | Test agents in staged environments; increase autonomy only after safe performance is proven. | Autonomy must be earned, not assumed. |
| 4. Certification and Licensing | Some dogs need formal qualification (e.g. police and assistance dogs). Unsafe dogs lose certification or risk termination. | Pre-deployment testing, audits, and “licensing”. Revoke autonomy or terminate the agent if behaviour becomes unsafe. | Ethical controls must include removal of privilege when standards slip. |
| 5. Identification and Accountability | Working dogs wear vests or harnesses to signal their role and owner accountability. | Agents should be identifiable (ID, owner, logs). A named human remains legally accountable. | Third parties should know they are dealing with an AI agent, and users must know who is responsible if things go wrong. |
| 6. Environment and Risk Settings | More autonomy is more easily managed in controlled or low-risk settings (remote areas, defined tasks). | Restrict higher autonomy to lower-risk workflows. Expand gradually, if necessary. | Minimises ethical and safety harm during adoption. |
| 7. Multi-Agent Coordination | Dogs work in self-coordinating pairs or teams only when the environment is safe and roles are well drilled. | Allow agent-to-agent collaboration only with strict guardrails, transparency, and audit logs. | Guards against harmful emergent behaviour and diffusion of responsibility. |
| 8. Supervision and Recall | Handlers are trained to supervise and can recall or override a dog at any time. | Users must be competent in using override, pause, and recall controls to regain immediate control. | Ethical safety requires humans to stay in charge. |
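Controls 1, 2, 5, and 8 map naturally onto a thin layer of code that every proposed agent action must pass through before it executes. The Python sketch below is a minimal, hypothetical illustration rather than a reference implementation: `ActionGuard`, its fields, the red-line list, and the example action names are our own assumptions. A production setup would likely enforce these checks outside the agent’s own process and store the log somewhere tamper-resistant.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical red lines: actions no configuration can ever permit (control 2).
DEFAULT_RED_LINES = frozenset({"transfer_funds", "delete_customer_data"})


@dataclass
class ActionGuard:
    agent_id: str                   # control 5: the agent is identifiable
    owner: str                      # control 5: a named, accountable human
    allowed_actions: set[str]       # control 1: purpose, scope, and allowed actions
    red_lines: frozenset[str] = DEFAULT_RED_LINES
    recalled: bool = False          # control 8: set by a handler to stop the agent
    audit_log: list[dict] = field(default_factory=list)

    def recall(self, by: str) -> None:
        """Control 8: a handler halts the agent; every later action is blocked."""
        self.recalled = True
        self._log("recall", "recalled", actor=by)

    def check(self, action: str) -> bool:
        """Return True only if the action may proceed. Every decision is logged."""
        if self.recalled:
            decision = "blocked: agent recalled"
        elif action in self.red_lines:
            # Checked before the allow-list, so a red line wins even if misconfigured.
            decision = "blocked: red line"
        elif action not in self.allowed_actions:
            decision = "blocked: outside purpose"
        else:
            decision = "allowed"
        self._log(action, decision, actor=self.agent_id)
        return decision == "allowed"

    def _log(self, action: str, decision: str, actor: str) -> None:
        # Control 5: each entry ties the decision to the agent and its owner.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": self.agent_id,
            "owner": self.owner,
            "actor": actor,
            "action": action,
            "decision": decision,
        })


# Usage with hypothetical names.
guard = ActionGuard(
    agent_id="invoice-agent-01",
    owner="finance-ops-lead@example.com",
    allowed_actions={"read_invoice", "draft_reminder", "transfer_funds"},
)
print(guard.check("draft_reminder"))   # True
print(guard.check("transfer_funds"))   # False: red line beats the allow-list
print(guard.check("send_marketing"))   # False: outside the agent's purpose
guard.recall(by="finance-ops-lead@example.com")
print(guard.check("read_invoice"))     # False: agent recalled
```

Checking red lines before the allow-list means a prohibited action stays blocked even if someone mistakenly adds it to the agent’s permitted actions, which reflects the point that some rules must be absolute.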

Conclusion: Ethics for AI Agents Must Be Engineered, Not Assumed

Delegating autonomy to non-human actors is not new – society has been doing it with working dogs for centuries. But we grant autonomy slowly, conditionally, and only when supported by proven controls. We trust neither the dog’s ‘morality’ nor the good nature of others to treat the dog well: we trust the system wrapped around it.

The same must apply to AI agent ethics. We cannot rely on values training, ‘responsible AI’ statements, or hopeful assumptions about good behaviour. Ethical safeguards for autonomous agents must be designed, tested, and enforced. If we want agents to act on our behalf safely and fairly, they should earn autonomy over time, not receive it by default.

The organisations that succeed in this next wave of AI will be those that treat agentic autonomy as a privilege, tightly governed by controls and applied at the right level and in the right context.

If your organisation is exploring AI agents and wants a structured way to grant autonomy without creating risks, we can help. Check out our services, leverage our free content as much as you like, or get in touch if you would like some more help.

FAQs

What are the ethical risks of autonomous AI agents?

The ethical risks of autonomous AI agents include acting outside their intended purpose, making harmful decisions without human awareness, and creating unfair or discriminatory outcomes at speed and scale. Further risks arise when agents collaborate, make assumptions, or take actions that run contrary to what humans assume is “common sense”. Without strong oversight, autonomy can lead to safety breaches, reputational harm, and loss of public trust.