An AI agent may behave unpredictably, inconsistently, or unfairly – drifting from its intended purpose, compounding errors, or operating outside policy, leading to failures, bias, or inconsistency.

To protect your firm against individual AI agent risks, you should register, test, approve, and track every agent and its artifacts (prompts and knowledge sources) end-to-end. Block anything unverified, maintain version and change records, monitor drift, and retire or roll back any elements that no longer meet policy or security standards.

You should confine each agent to clear goals, permissions, and limits. Test before launch; monitor confidence and boundary breaches in production; require human approval for critical actions; and keep kill-switches, rollback plans, and real-time stop controls ready.

You should make reasoning steps machine-readable and testable with gates and consistency checks, log and review every intermediate action, set KPIs that favour accuracy and coherence over speed, and enforce fairness by defining boundaries, rotating reviewers, testing for group-wide bias, and mitigating any biased outcomes.

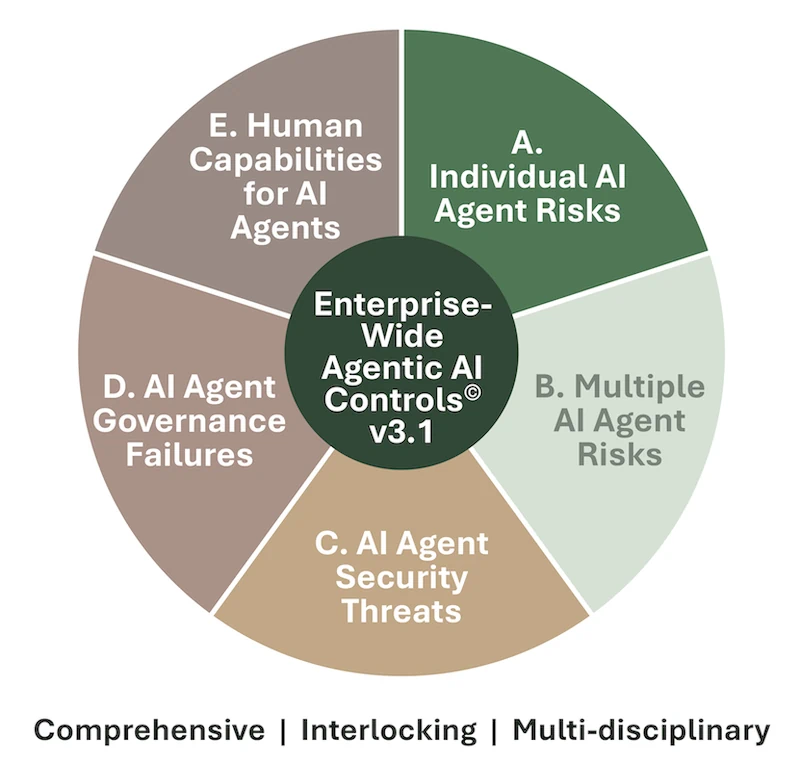

The Enterprise-Wide Agentic AI Risk Control Framework v3.1 breaks down the individual AI agent risk category into 5 distinct risks and 37 best-practice controls.

Download the framework for free to understand the risks, determine if your company is exposed to them, and select the controls that apply to your situation.

The Framework will let you perform tasks that are vital to keeping your company safe and compliant.

As agentic AI continues to evolve, the Governing Council will approve future versions of the framework, keeping you and your career at the leading edge of agentic AI risk management.

Download the current version to join our mailing list and receive future versions too.

Individual AI agent risks refer to failures or unsafe behaviours that occur at the level of a single agent – such as unpredictability, drift, bias, or reasoning errors. Managing these risks within an individual AI agent risk management framework helps organisations ensure every agent behaves predictably, fairly, and within defined goals and limits.

Without proper lifecycle governance, AI agents can be deployed, modified, or retired without oversight, leading to drift or insecure behaviour. Applying strong AI agent lifecycle governance controls involves registering, testing, approving, and tracking every agent version end-to-end, as well as blocking unverified or outdated agents from deployment.
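As an illustration of the register, test, approve, and block steps described above, here is a minimal Python sketch. It is not taken from the framework: the names `AgentRegistry`, `AgentVersion`, `Status`, and `can_deploy` are hypothetical, and the sketch assumes a prompt hash is an adequate fingerprint for detecting unapproved changes.

```python
import hashlib
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    REGISTERED = "registered"
    TESTED = "tested"
    APPROVED = "approved"
    RETIRED = "retired"


@dataclass
class AgentVersion:
    name: str
    version: str
    prompt: str
    status: Status = Status.REGISTERED

    @property
    def prompt_hash(self) -> str:
        # Fingerprint of the prompt so that later edits are detectable.
        return hashlib.sha256(self.prompt.encode("utf-8")).hexdigest()


class AgentRegistry:
    """Hypothetical in-memory registry; a real one would persist its records."""

    def __init__(self):
        self._versions = {}       # (name, version) -> AgentVersion
        self._approved_hash = {}  # (name, version) -> prompt hash at approval

    def register(self, agent: AgentVersion) -> None:
        self._versions[(agent.name, agent.version)] = agent

    def mark_tested(self, name: str, version: str) -> None:
        self._versions[(name, version)].status = Status.TESTED

    def approve(self, name: str, version: str) -> None:
        agent = self._versions[(name, version)]
        if agent.status is not Status.TESTED:
            raise ValueError("only tested versions can be approved")
        agent.status = Status.APPROVED
        self._approved_hash[(name, version)] = agent.prompt_hash

    def retire(self, name: str, version: str) -> None:
        self._versions[(name, version)].status = Status.RETIRED

    def can_deploy(self, name: str, version: str) -> bool:
        # Block unknown, unapproved, retired, or modified-after-approval agents.
        agent = self._versions.get((name, version))
        if agent is None or agent.status is not Status.APPROVED:
            return False
        return self._approved_hash[(name, version)] == agent.prompt_hash
```

In this sketch a deployment pipeline would call `can_deploy` before every release, so an agent whose prompt was edited after approval is blocked until it is tested and approved again.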

Unpredictability occurs when agents act outside of intent or make compounding mistakes across multi-step tasks. To manage unpredictability and control errors, firms should define each agent’s goals and permissions, monitor real-time behaviour, require human approval for critical actions, and keep kill-switches and rollback plans ready.
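The permission limits, human approval step, and kill-switch described above could be combined in a single gate that every agent action passes through. The following is a hedged sketch only: `ActionGate`, its fields, and the `approver` callback are hypothetical names, and the callback stands in for whatever human review process a firm actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ActionGate:
    allowed_actions: set           # actions the agent is permitted to take
    critical_actions: set          # subset that needs human sign-off
    approver: Callable[[str], bool]  # hypothetical human-approval callback
    halted: bool = False
    audit_log: list = field(default_factory=list)

    def kill(self) -> None:
        # Real-time stop control: every later action is blocked.
        self.halted = True

    def authorize(self, action: str) -> bool:
        if self.halted:
            decision = "blocked: kill-switch engaged"
        elif action not in self.allowed_actions:
            decision = "blocked: outside permissions"
        elif action in self.critical_actions and not self.approver(action):
            decision = "blocked: human approval denied"
        else:
            decision = "allowed"
        # Record every decision so boundary breaches can be reviewed later.
        self.audit_log.append((action, decision))
        return decision == "allowed"
```

Checks run from most to least restrictive, so an engaged kill-switch overrides both permissions and approvals.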

When reasoning steps lose coherence, the agent may contradict itself or reach invalid conclusions. Organisations should log each reasoning step, apply consistency gates, and test for accuracy. This helps detect inconsistent reasoning early and improves explainability and trust.
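One simple form of consistency gate is sketched below, under the assumption that each logged reasoning step records the facts it relies on as key-value pairs. The `Step` record and `find_contradictions` function are hypothetical names, and a real gate would need richer checks than exact value comparison.

```python
from dataclasses import dataclass


@dataclass
class Step:
    index: int
    description: str
    facts: dict  # facts asserted by this step, e.g. {"customer_tier": "gold"}


def find_contradictions(steps):
    """Return (fact, first_step, conflicting_step) for each contradiction."""
    seen = {}       # fact name -> (step index, value) from its first assertion
    conflicts = []
    for step in steps:
        for name, value in step.facts.items():
            if name in seen and seen[name][1] != value:
                conflicts.append((name, seen[name][0], step.index))
            else:
                seen.setdefault(name, (step.index, value))
    return conflicts


def passes_consistency_gate(steps) -> bool:
    # The gate passes only when no step contradicts an earlier one.
    return not find_contradictions(steps)
```

Because each conflict names both step indices, a reviewer can go straight to the two log entries that disagree.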

Bias and unfairness arise when AI agents amplify historical or data-driven discrimination. Implementing bias and fairness testing in AI agents involves defining fairness boundaries, rotating reviewers, testing group-wide outcomes, and documenting mitigation steps to maintain equitable treatment.
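To make "testing group-wide outcomes" concrete, the sketch below compares approval rates across groups and checks the gap against a fairness boundary. It assumes a demographic-parity style metric, which is only one of several possible fairness measures, and the function names and the 0.1 default boundary are illustrative rather than drawn from the framework.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(decisions) -> float:
    # Difference between the highest and lowest group approval rates.
    rates = approval_rates(decisions)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


def within_fairness_boundary(decisions, max_gap: float = 0.1) -> bool:
    # max_gap is an assumed, policy-defined boundary; 0.1 is only a placeholder.
    return parity_gap(decisions) <= max_gap
```

A failing check would be the trigger for the documented mitigation steps.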

AI behavioural drift happens when an agent’s performance changes over time due to altered prompts or data sources. To prevent AI behavioural drift, firms should version-control all prompts and knowledge bases, monitor drift metrics, and roll back agents that exceed drift thresholds.
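The version-control, drift-metric, and rollback steps above might fit together as in this sketch. It assumes drift is measured as the shift in mean quality score between a baseline window and a recent window; `VersionedPrompt`, `drift_score`, and the 0.05 threshold are hypothetical choices, not values from the framework.

```python
from statistics import mean


class VersionedPrompt:
    """Keeps every prompt version so the agent can be rolled back."""

    def __init__(self, initial: str):
        self._history = [initial]

    @property
    def current(self) -> str:
        return self._history[-1]

    def update(self, new_prompt: str) -> None:
        self._history.append(new_prompt)

    def rollback(self) -> str:
        # Never discard the original version.
        if len(self._history) > 1:
            self._history.pop()
        return self.current


def drift_score(baseline, recent) -> float:
    # Assumed drift metric: absolute shift in mean evaluation score.
    return abs(mean(recent) - mean(baseline))


def check_and_rollback(prompt, baseline, recent, threshold: float = 0.05) -> bool:
    """Roll back to the previous prompt if drift exceeds the threshold."""
    if drift_score(baseline, recent) > threshold:
        prompt.rollback()
        return True
    return False
```

The same pattern would apply to knowledge sources, with each knowledge-base snapshot versioned alongside the prompt.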

You can download the Enterprise-Wide Agentic AI Risk Control Framework v3.1 for free on www.agenticrisks.com to explore all five risk categories, including Agentic AI Risk Category A – Individual AI Agent Risks, which comprises 5 risks and 37 best-practice controls. The framework is designed to make your management of agentic risk comprehensive, interlocking, and multi-disciplinary.
