Home > Agentic AI Risk categories > Human Capabilities for AI Agents

People may resist, misuse, or over-trust AI systems, leading to stalled adoption, poor oversight, loss of skills, ethical breaches, and erosion of trust and legitimacy.

To build the human capabilities that AI agents require, introduce agents gradually under strong governance, train and engage staff, and scale only when teams demonstrate readiness and competence. Build trust through transparent communication, pilot reviews, and structured adoption.

Match human oversight to each agent’s purpose and risk, with clear authority, escalation, and override controls, while continuously reviewing oversight quality, documenting decisions, and training human operators to maintain critical judgment and prevent over-reliance.

You should keep humans accountable for every AI-assisted decision, assess impacts on privacy, dignity, and fairness before deployment, and ensure that all AI systems reinforce, not replace, human responsibility, ethical integrity, and organisational legitimacy.

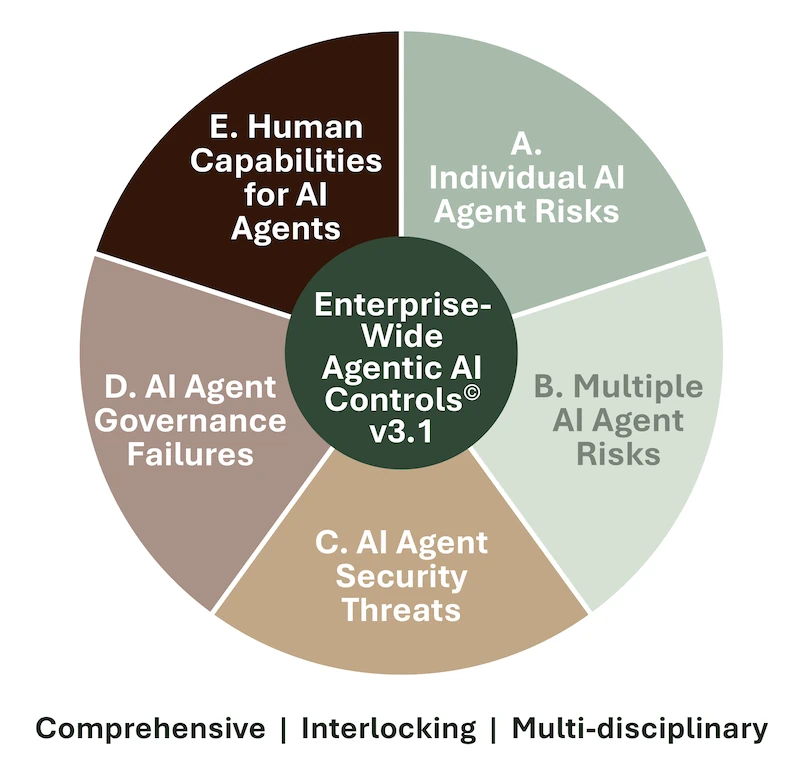

The Enterprise-Wide Agentic AI Risk Control Framework v3.1 breaks down the Human Capabilities for AI Agents category into 7 distinct risks and 50 best-practice controls:

Download the framework for free to understand the risks, determine if your company is exposed to them, and select the controls that apply to your situation.

The Framework will let you perform tasks that are vital to keeping your company safe and compliant:

As agentic AI continues to evolve, the Governing Council will approve future versions, keeping you and your career at the leading edge of agentic AI risk management.

Download the current version to join our mailing list and receive future versions too.

Human capabilities for AI Agents address how people adopt, oversee, and trust AI systems. Strong human factors for AI agents and a governance framework ensure safe adoption, sustained human accountability, and balanced interaction between human judgment and automated reasoning.

Introducing AI can disrupt roles and workflows. Effective AI change management and cultural adoption controls include phased rollouts, readiness checks, transparent communication, and pilot reviews that build trust and competence before scaling.

Oversight should match each agent’s purpose and risk. Designing effective human oversight for AI agents means defining clear authority, escalation paths, and override controls, supported by real-time visibility, documented boundaries, and ongoing operator training.
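As a rough illustration of matching oversight to risk, the sketch below maps a risk tier to an oversight mode, an escalation contact, and an override flag. The tier names, modes, and roles are hypothetical examples chosen for this sketch, not values taken from the framework.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Oversight(Enum):
    MONITOR = "human reviews agent logs after the fact"
    SAMPLE = "human reviews a sample of actions before release"
    APPROVE = "human approves every action before it runs"


@dataclass(frozen=True)
class OversightPolicy:
    mode: Oversight
    escalate_to: str    # role with authority to decide (hypothetical names)
    can_override: bool  # whether the operator may stop or reverse the agent


# Hypothetical mapping: stricter oversight as the risk tier rises.
POLICIES = {
    Risk.LOW: OversightPolicy(Oversight.MONITOR, "team lead", True),
    Risk.MEDIUM: OversightPolicy(Oversight.SAMPLE, "process owner", True),
    Risk.HIGH: OversightPolicy(Oversight.APPROVE, "risk committee", True),
}


def oversight_for(risk: Risk) -> OversightPolicy:
    """Look up the documented oversight policy for an agent's risk tier."""
    return POLICIES[risk]
```

Keeping the mapping in one documented table makes the boundaries reviewable, and every tier in this sketch retains a human override.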

Over-reliance can lead to skill erosion and weak critical thinking. To prevent staff over-trust and AI deskilling, train employees on AI’s limits, vary prompts to avoid repetition bias, rotate reviewer roles, and document decision rationales to reinforce human accountability.

When users defer blame to AI, trust erodes. Preserve AI moral legitimacy and human accountability by assigning a named human to each AI-involved decision, maintaining informed consent, avoiding anthropomorphic branding, and ensuring AI augments, not replaces, ethical judgment.
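One way to make the named-human requirement hard to skip is to refuse to record a decision without one. The following is a minimal sketch; the `DecisionRecord` class and its field names are assumptions made for illustration, not part of the framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical log entry for one AI-involved decision."""
    decision_id: str
    agent_name: str
    accountable_human: str  # a named person, not a team or the agent itself
    rationale: str          # the human's documented reasoning

    def __post_init__(self):
        # Reject records that leave accountability or reasoning blank.
        if not self.accountable_human.strip():
            raise ValueError("every AI-involved decision needs a named accountable human")
        if not self.rationale.strip():
            raise ValueError("the decision rationale must be documented")
```

A record such as `DecisionRecord("D-001", "invoice-agent", "Jane Doe", "Checked totals against the purchase order")` is accepted, while one with a blank `accountable_human` raises `ValueError`.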

AI can unintentionally infringe on privacy, dignity, or fairness. Embed compliance with legal protections of fundamental rights by conducting formal rights impact assessments before deployment, documenting decisions, enabling redress, and suspending systems that pose ethical or legal risks.

You can download the Enterprise-Wide Agentic AI Risk Control Framework v3.1 for free on www.agenticrisks.com to explore all five risk categories, including Agentic AI Risk Category E – Human Capabilities for AI Agents, which comprises 5 risks and 35 best-practice controls. The framework will ensure your management of agentic risk is comprehensive, interlocking, and multi-disciplinary.
