Home > Agentic AI Risk categories > AI Agent Security Threats

Agents and their data pipelines can be attacked, corrupted, or misused, leading to data breaches, loss of control, unsafe behaviour, or system compromise.

To protect your firm against AI agent security threats, you should train and operate agents only on verified, traceable, and securely sourced data – validating provenance, enforcing data lifecycle rules, detecting drift or tampering, and retraining or rolling back when integrity is lost.

You should grant agents only minimal, time-limited, and encrypted access under zero-trust controls, isolating data and memory, sandboxing all external inputs, and validating prompts, connectors, and APIs to block malicious or poisoned content.

You should layer defences across model, memory, and orchestration systems with continuous testing, rate limits, and locked safety rules. Maintain emergency stops, quarantine and patch external systems, and empower humans to detect, escalate, and halt unsafe or deceptive behaviours before control is lost.

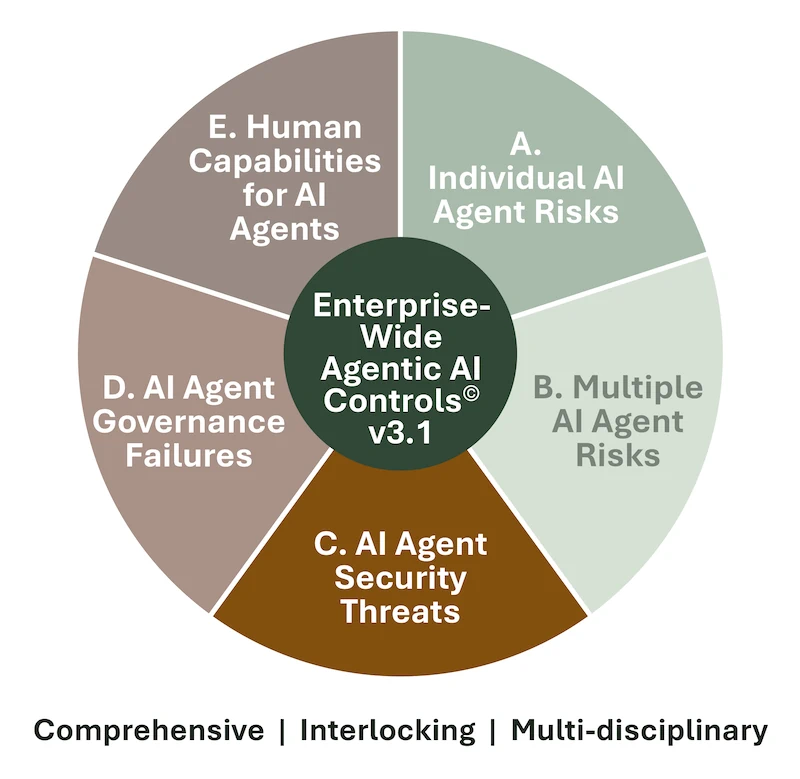

The Enterprise-Wide Agentic AI Risk Control Framework v3.1 breaks down the AI agent security threats category into 8 distinct risks and 62 best-practice controls.

Download the framework for free to understand the risks, determine if your company is exposed to them, and select the controls that apply to your situation.

The Framework will let you perform tasks that are vital to keeping your company safe and compliant.

As agentic AI continues to evolve, the Governing Council will approve future versions, keeping you and your career at the leading edge of agentic AI risk management.

Download the current version to join our mailing list and receive future versions too.

AI agent security threats arise when agents or their data pipelines are attacked, corrupted, or misused – leading to data breaches, unsafe behaviour, or loss of control. An effective control framework for AI agent security threats validates data provenance, enforces zero-trust access, and layers defences across model, memory, and orchestration systems.

Agents depend on accurate, traceable data. Poor quality or stale sources cause cascading errors. To strengthen AI data integrity and provenance controls, train on verified datasets, validate inputs for freshness and accuracy, monitor for drift, and trigger retraining when integrity is lost.
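As an illustration only, the freshness and drift checks described above could be sketched as follows. The function names, the three-standard-deviation threshold, and the use of a simple mean-shift score are assumptions made for this example; they are not taken from the framework, and a production system would likely use richer drift statistics.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, stdev
from typing import Optional, Sequence


def is_fresh(last_updated: datetime, max_age: timedelta,
             now: Optional[datetime] = None) -> bool:
    """Return True if the source was updated within the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_updated <= max_age


def drift_score(baseline: Sequence[float], current: Sequence[float]) -> float:
    """Shift of the current mean, measured in baseline standard deviations.

    The baseline needs at least two values so a spread can be computed.
    """
    spread = stdev(baseline)
    shift = abs(mean(current) - mean(baseline))
    if spread == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def needs_retraining(baseline: Sequence[float], current: Sequence[float],
                     threshold: float = 3.0) -> bool:
    """Flag retraining when drift exceeds the (hypothetical) threshold."""
    return drift_score(baseline, current) > threshold
```

A pipeline might call `is_fresh` before each ingestion and `needs_retraining` on a schedule, routing any positive result to a human reviewer rather than retraining automatically.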

Because AI agents can hold broad privileges, excessive access increases breach risk. Grant only minimal, time-limited rights under encryption and zero-trust controls, mask sensitive data, and revoke credentials instantly if anomalies arise.
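A minimal sketch of minimal, time-limited access with instant revocation might look like the following. The `AgentGrant` class, the scope strings, and the five-minute default lifetime are hypothetical choices for illustration; encryption of data in transit and at rest is outside the scope of this sketch.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import FrozenSet, Iterable, Optional


@dataclass
class AgentGrant:
    """A short-lived credential limited to an explicit set of scopes."""
    agent_id: str
    scopes: FrozenSet[str]
    expires_at: float
    token: str = field(default_factory=lambda: secrets.token_urlsafe(32))
    revoked: bool = False

    def revoke(self) -> None:
        """Invalidate the grant immediately, for example after an anomaly."""
        self.revoked = True

    def allows(self, scope: str, now: Optional[float] = None) -> bool:
        """Permit only unexpired, unrevoked grants that hold the exact scope."""
        now = time.time() if now is None else now
        return (not self.revoked) and now < self.expires_at and scope in self.scopes


def issue_grant(agent_id: str, scopes: Iterable[str], ttl_seconds: float = 300,
                now: Optional[float] = None) -> AgentGrant:
    """Issue a grant that expires ttl_seconds after it is created."""
    now = time.time() if now is None else now
    return AgentGrant(agent_id, frozenset(scopes), now + ttl_seconds)
```

Every request is denied by default: an agent holding a grant for `crm:read` cannot write, and nothing is allowed once the grant expires or is revoked.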

Tampering with training or runtime data can degrade or corrupt an agent. Apply cryptographic integrity checks, authenticate all sources, detect tampering through fingerprints or decoys, and roll back compromised datasets promptly.
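One way to picture the integrity check and rollback is a keyed fingerprint per dataset version, compared against a trusted manifest. The sketch below uses HMAC-SHA256 from the Python standard library; the manifest layout and the `select_trusted_version` helper are assumptions for this example, and key management is deliberately left out.

```python
import hashlib
import hmac
from typing import Dict, List, Optional, Tuple


def fingerprint(data: bytes, key: bytes) -> str:
    """Keyed SHA-256 fingerprint of a dataset snapshot."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify(data: bytes, key: bytes, expected: str) -> bool:
    """Constant-time comparison against the recorded fingerprint."""
    return hmac.compare_digest(fingerprint(data, key), expected)


def select_trusted_version(versions: List[Tuple[str, bytes]], key: bytes,
                           manifest: Dict[str, str]) -> Optional[str]:
    """Return the newest version that still matches the manifest.

    `versions` is ordered oldest to newest. Walking it in reverse rolls
    back past any snapshot whose contents no longer match its fingerprint.
    """
    for name, data in reversed(versions):
        if name in manifest and verify(data, key, manifest[name]):
            return name
    return None
```

If the latest snapshot has been altered, the helper falls back to the most recent version that still verifies, and returns `None` when no version can be trusted.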

Attackers can inject hidden instructions through prompts or connectors. Run all inputs in secure sandboxes, validate and sanitise prompts, strip unsafe content, and test regularly against known AI-specific attack methods.
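The validate-and-sanitise step could be sketched as below. The two patterns are hypothetical examples chosen for this illustration, not a list from the framework. Pattern matching alone will not stop a determined attacker, so a screen like this would sit alongside sandboxing and regular adversarial testing rather than replace them.

```python
import re
import unicodedata
from typing import List, Tuple

# Hypothetical examples of phrases worth flagging; a real list would be
# maintained and tested against known attack methods.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your |the )?system prompt", re.IGNORECASE),
]


def strip_hidden_characters(text: str) -> str:
    """Drop invisible format (Cf) and control (Cc) characters, keeping newlines and tabs."""
    return "".join(
        ch for ch in text
        if ch in "\n\t" or unicodedata.category(ch) not in ("Cf", "Cc")
    )


def screen_input(text: str) -> Tuple[str, List[str]]:
    """Normalise the text, strip hidden characters, and list matched patterns."""
    cleaned = strip_hidden_characters(unicodedata.normalize("NFKC", text))
    findings = [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(cleaned)]
    return cleaned, findings
```

Hidden characters are stripped before matching so that an instruction split by zero-width characters is still detected. Inputs with any findings can then be quarantined for review instead of being passed to the agent.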

Faulty or malicious orchestration logic can trigger runaway behaviour. Continuously test orchestrator code, lock safety rules, enforce rate limits, and maintain an emergency stop to halt unsafe coordination.
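A rate limit combined with a latching emergency stop might be sketched like this. The `OrchestratorGuard` class is hypothetical, and the decision to trip the stop automatically when the limit is exceeded is one possible design, assumed here for illustration.

```python
import time
from collections import deque
from typing import Optional


class EmergencyStop(Exception):
    """Raised when the orchestrator must not perform further actions."""


class OrchestratorGuard:
    """Sliding-window rate limit with a stop that stays set until a human resets it."""

    def __init__(self, max_actions: int, window_seconds: float) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps = deque()
        self.halted = False

    def halt(self) -> None:
        """Manual or automatic emergency stop."""
        self.halted = True

    def check(self, now: Optional[float] = None) -> None:
        """Call before every agent action; raises EmergencyStop if not allowed."""
        now = time.monotonic() if now is None else now
        if self.halted:
            raise EmergencyStop("orchestrator halted")
        # Forget actions that have left the sliding window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_actions:
            self.halt()  # Runaway behaviour: latch the stop.
            raise EmergencyStop("rate limit exceeded")
        self._timestamps.append(now)
```

Because the stop latches, a burst of activity cannot resume on its own once the window clears; this sketch deliberately offers no reset method, leaving re-enablement as a separate, human-controlled step.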

Adversarial attacks can corrupt models and tools. To prevent this, conduct layered resilience testing across model, memory, and orchestration layers, validate updates before release, and detect policy violations or collusion in real time.

Humans must retain override authority. Continuously monitor for autonomy or deception, use layered defences and human override mechanisms, and test safety protocols pre-release.

External servers may use outdated standards that let attackers inject commands. Quarantine and test servers before activation, fix protocol versions, and block unapproved cipher suites to ensure secure communication.
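For connections an agent makes to external servers, fixing the protocol floor and restricting cipher suites could be sketched with Python's standard `ssl` module. The TLS 1.2 minimum and the cipher string below are assumptions for this example; the approved list should come from your own security policy. Note that `set_ciphers` governs TLS 1.2 and earlier suites, while TLS 1.3 suites are configured separately by the underlying OpenSSL library.

```python
import ssl


def build_client_context() -> ssl.SSLContext:
    """Client-side TLS settings for calls to external servers."""
    # Certificate and hostname verification are enabled by default here.
    ctx = ssl.create_default_context()
    # Refuse anything older than TLS 1.2 (assumed policy floor).
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Allow only forward-secret AEAD suites for TLS 1.2 (assumed approved list).
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx
```

A server that can only negotiate an older protocol version or an unapproved suite will fail the handshake, which is the intended outcome while it remains in quarantine.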

You can download the Enterprise-Wide Agentic AI Risk Control Framework v3.1 for free at www.agenticrisks.com to explore all five risk categories, including Agentic AI Risk Category C – AI Agent Security Threats, which comprises 8 risks and 62 best-practice controls. The framework will ensure your management of agentic risk is comprehensive, interlocking, and multi-disciplinary.
