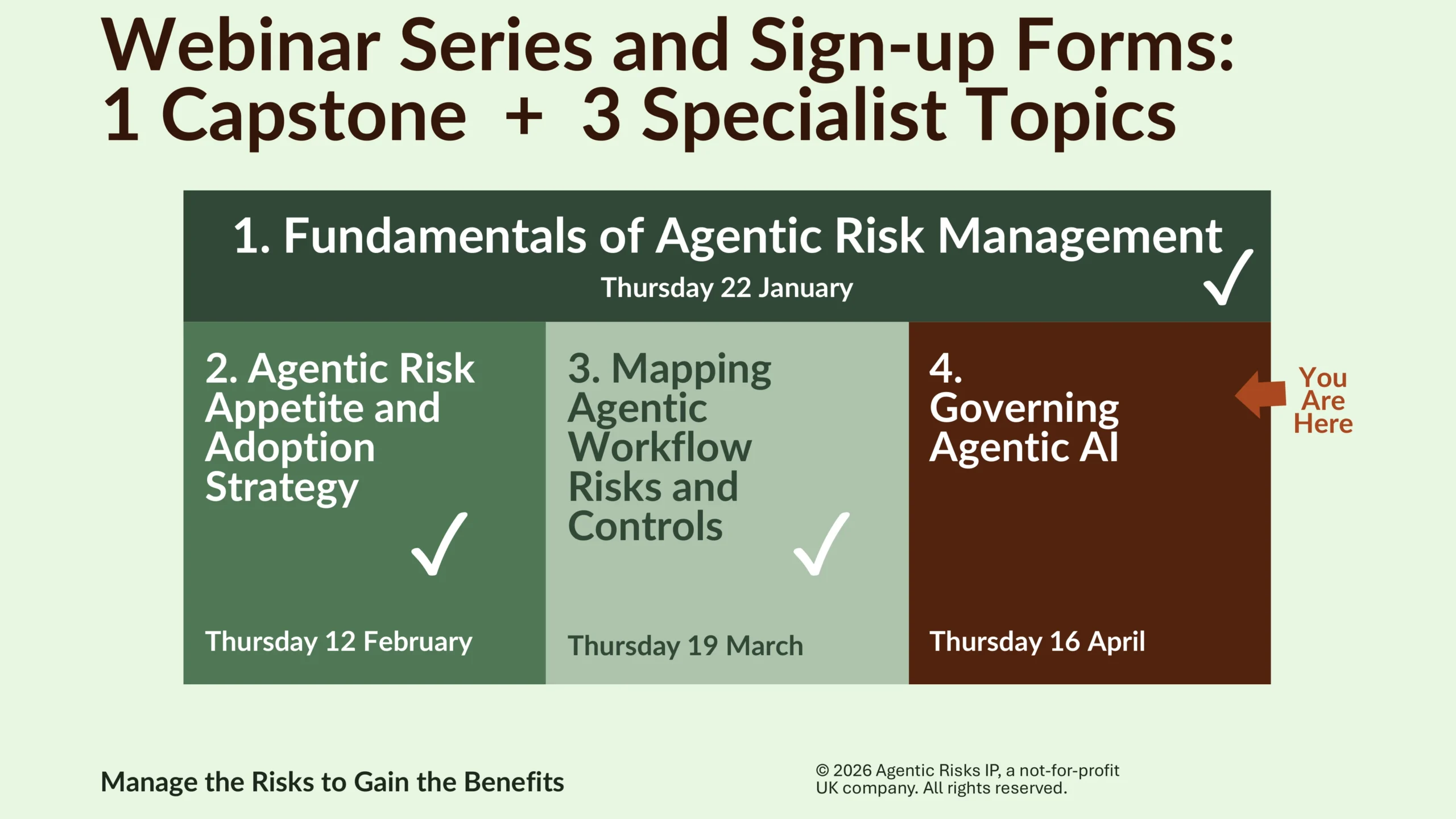

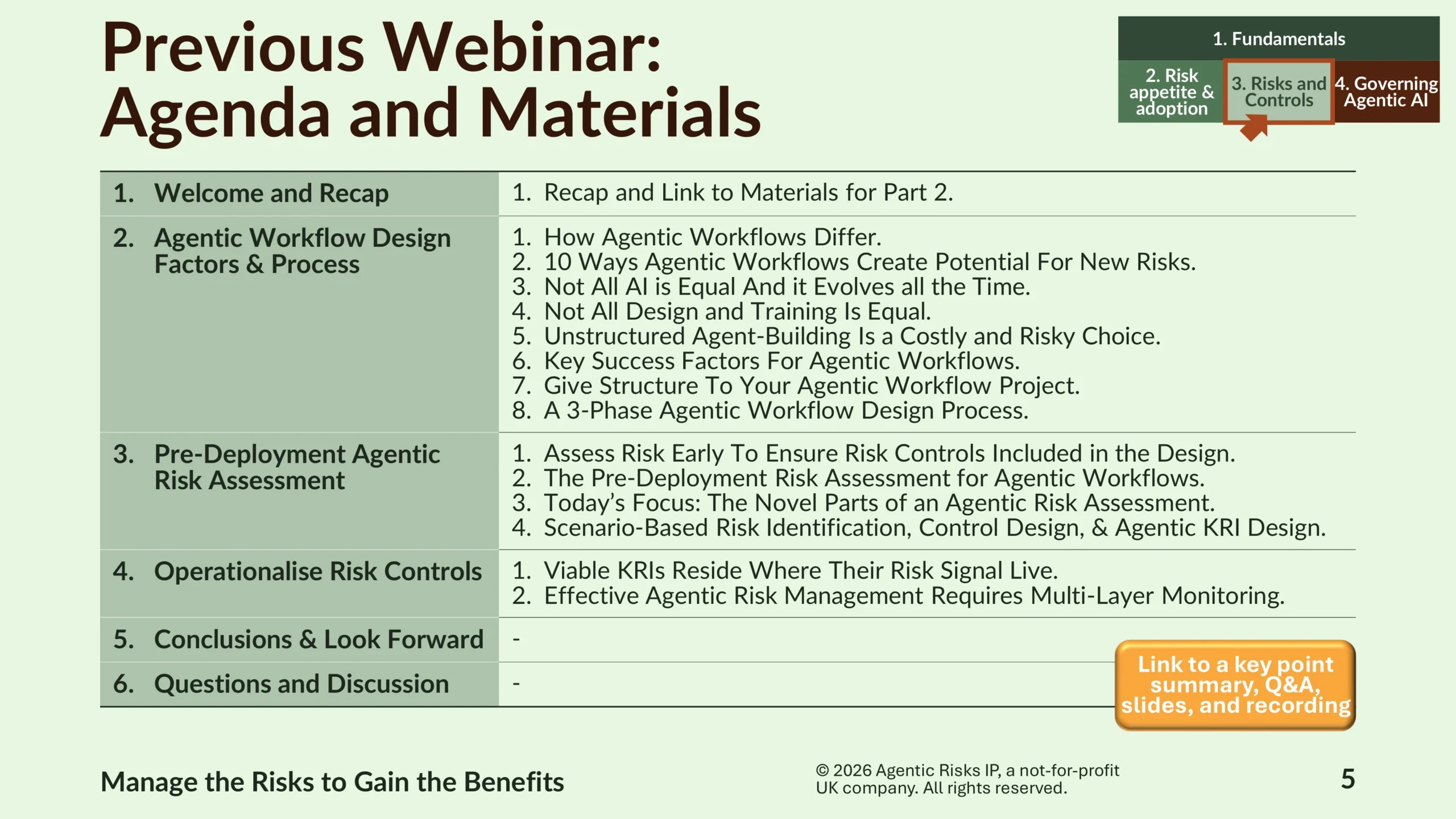

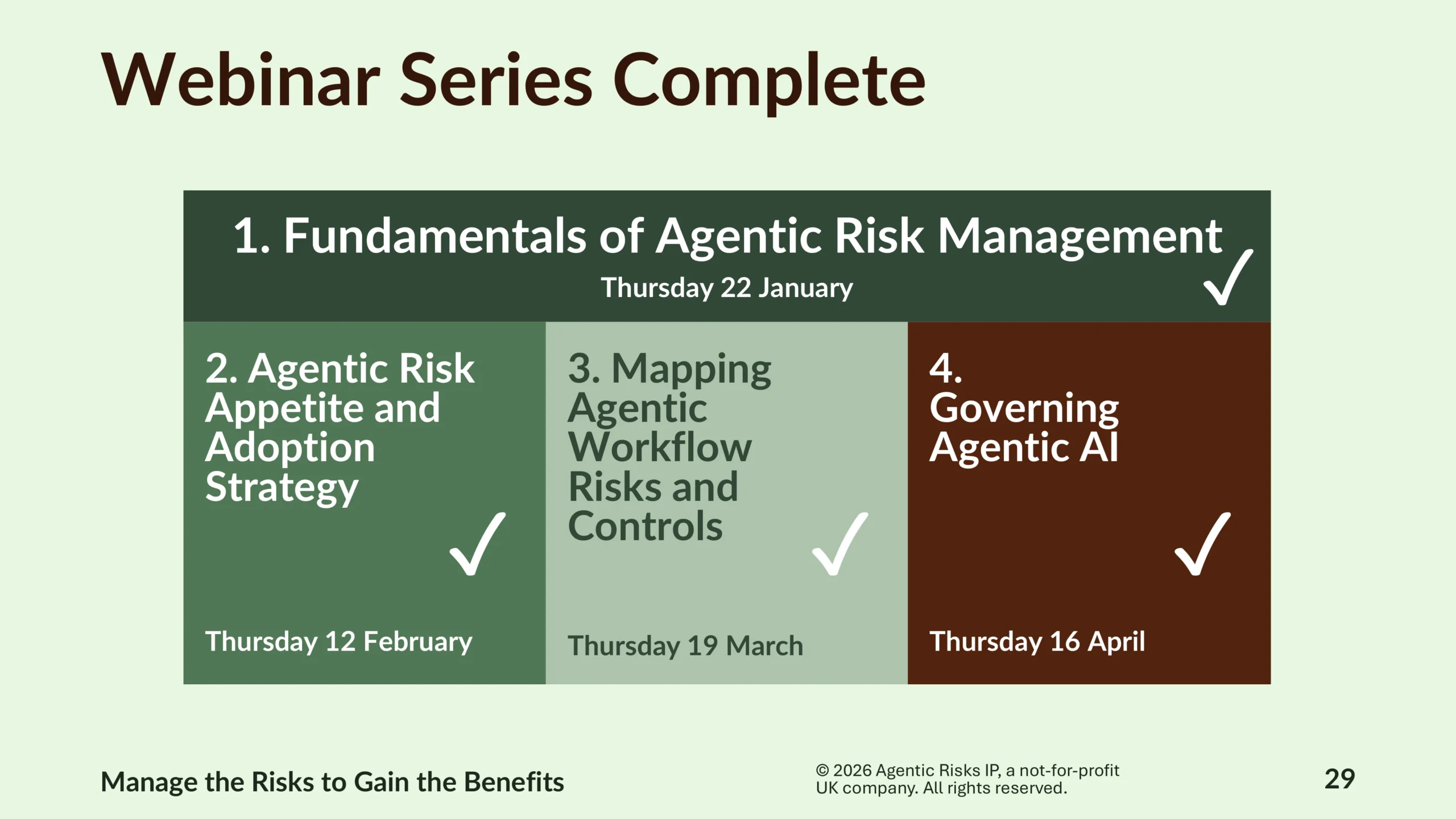

This page summarises the key points of our webinar on agentic AI governance, with a focus on post-deployment agentic risk management for regulated firms. It also includes the actual Questions and Answers, gives you access to the slides, and lets you watch a recording of the event.

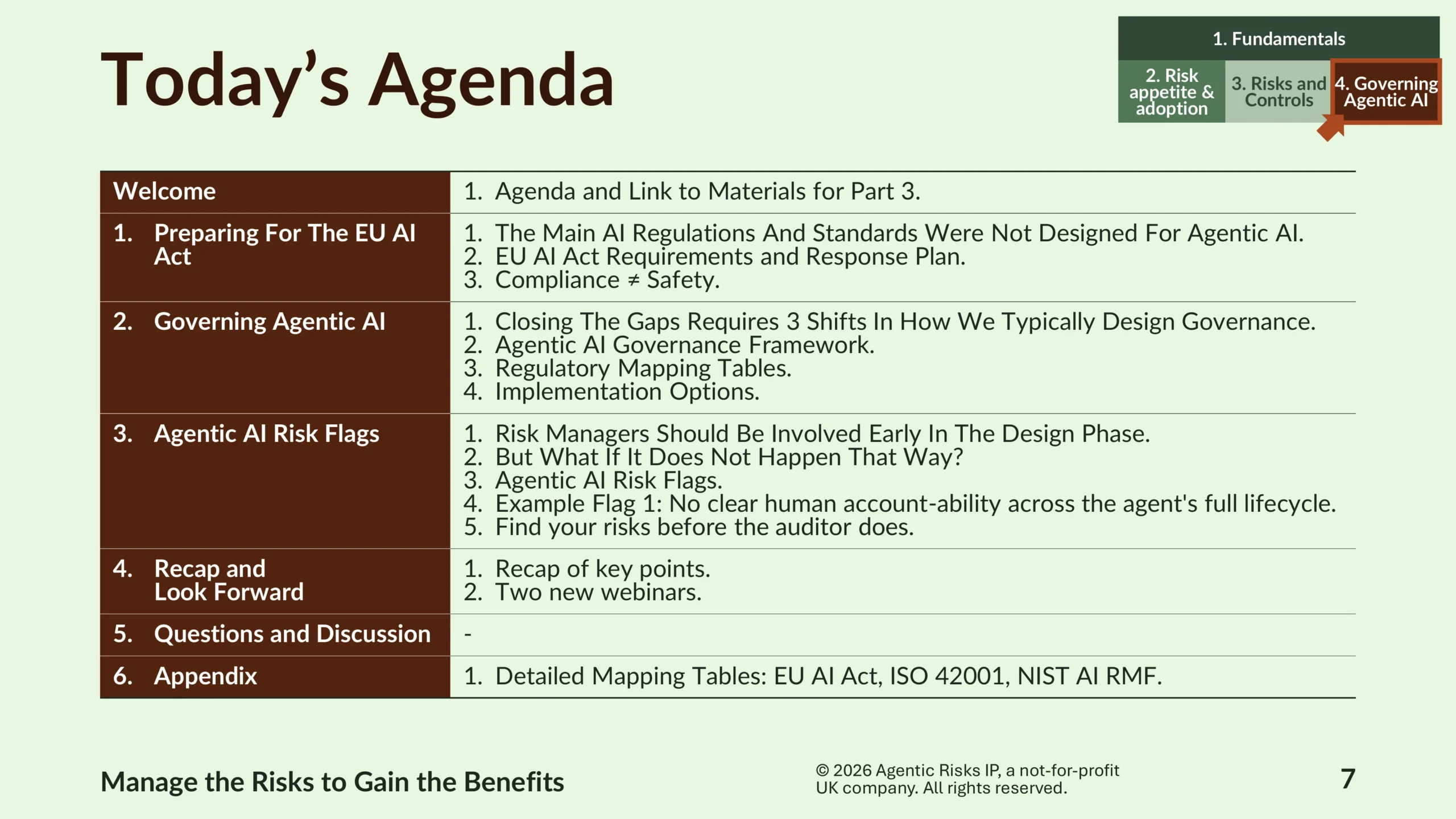

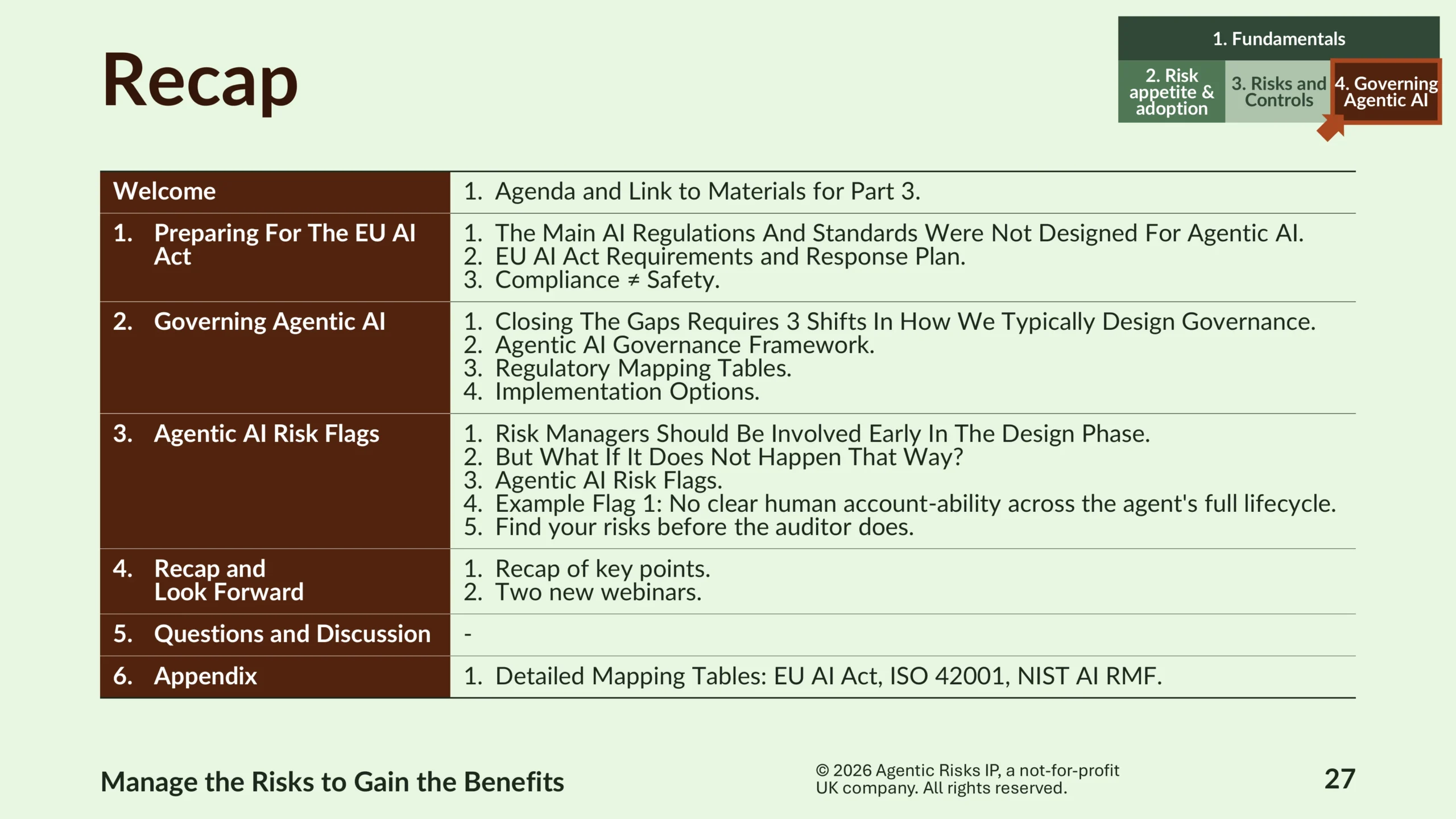

It covers three things: what the EU AI Act requires of agentic systems, what an agentic AI governance framework actually needs to contain, and – for those inheriting agents already in production – how to assess them fast.

Executive Summary

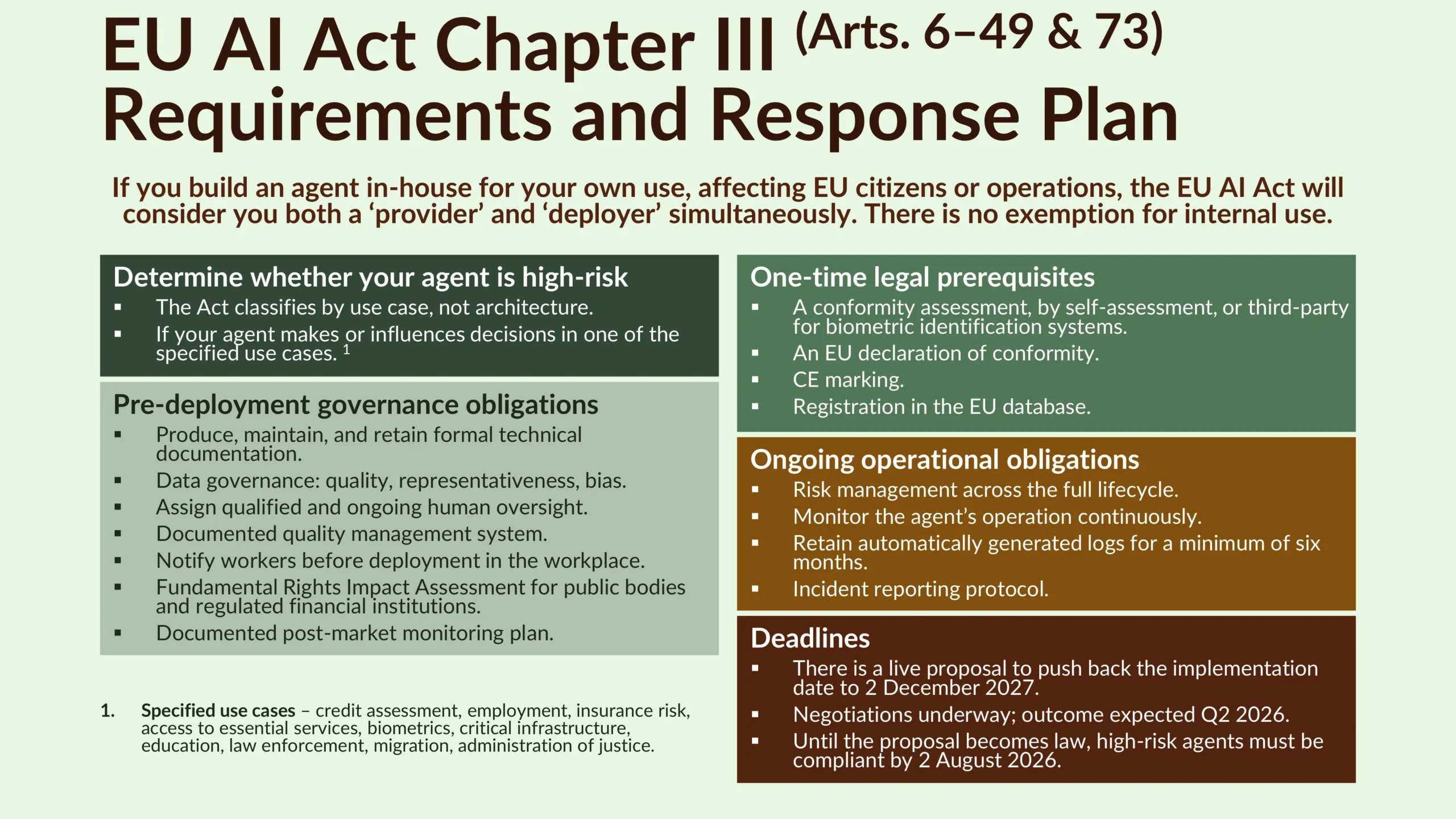

Agentic AI is already in scope of the EU AI Act despite not being named in it – a foundational challenge for agentic AI governance – and firms building agents in-house for EU operations will be treated as both provider and deployer, with high-risk systems due for compliance by 2 August 2026.

Meeting those obligations is necessary but insufficient because agentic systems break four of the Act’s core assumptions, so an effective governance framework must extend beyond compliance to cover operational realities like agent identity, pre-execution boundaries, reasoning chain integrity, and liability across the value chain.

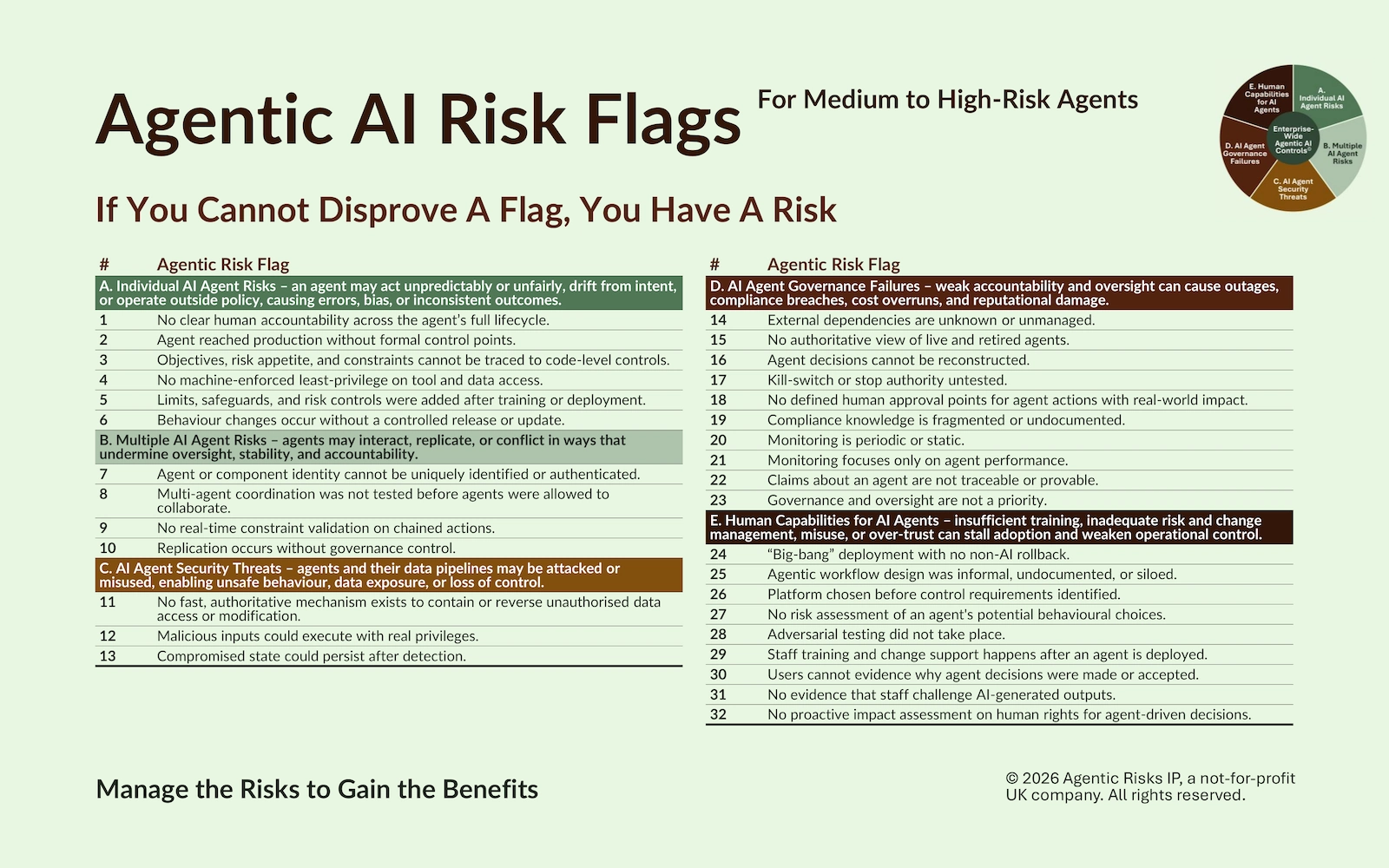

For organisations governing agents already in production, our 32 agentic AI risk flags provide a fast, defensible way to surface agents operating at a higher risk level than may have been appreciated – on the principle that if you cannot disprove a flag, you have a risk.

This page gives you the slides, a recording of the webinar, and direct links to download the Agentic AI Governance Framework and Risk Flags, so you can read further, self-serve, or engage us for some support.

Preparing for the EU AI Act

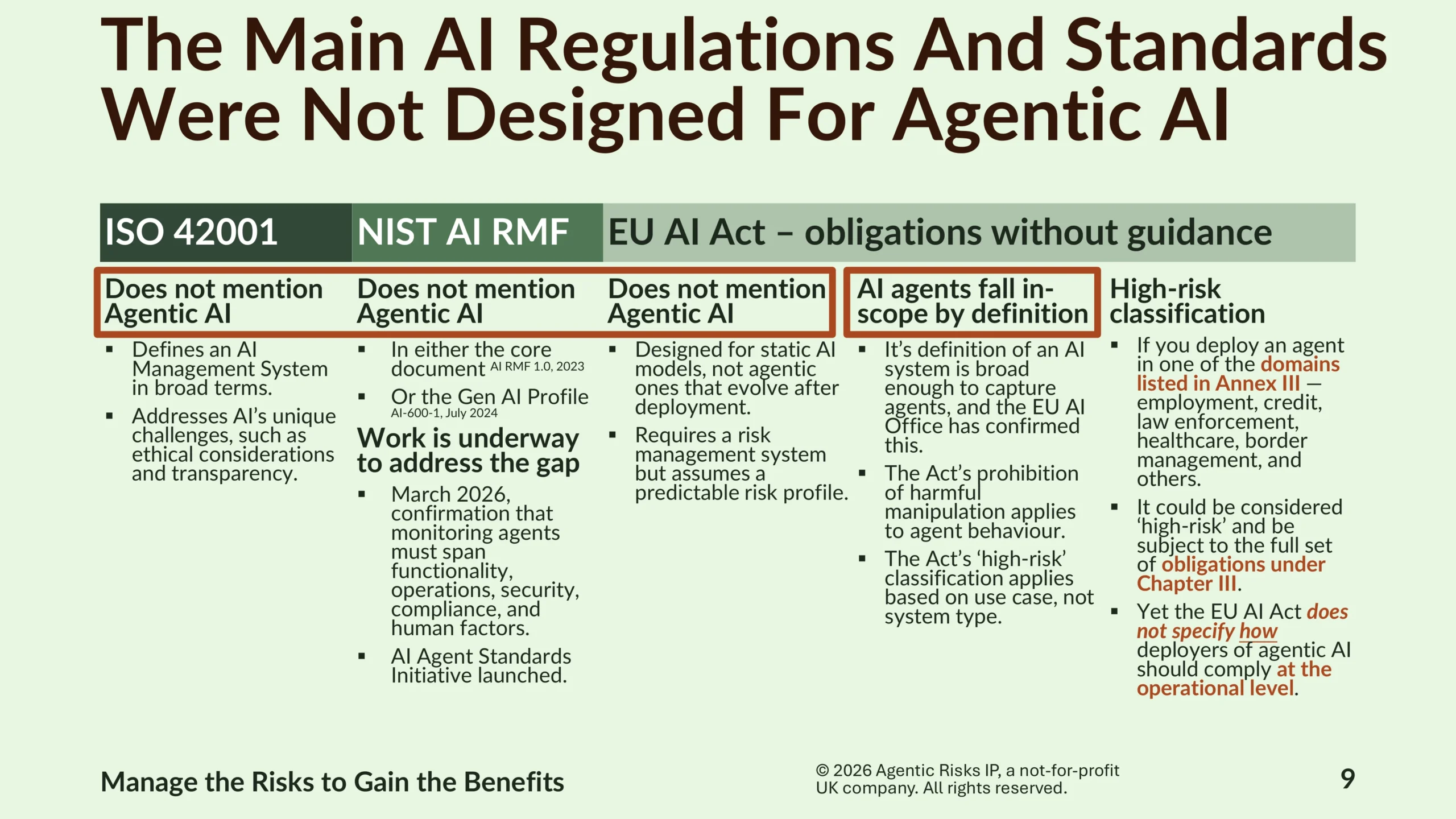

The regulatory landscape is the starting point for most organisations approaching agentic AI governance, yet the picture is sobering because the technology is ahead of the rules and standards.

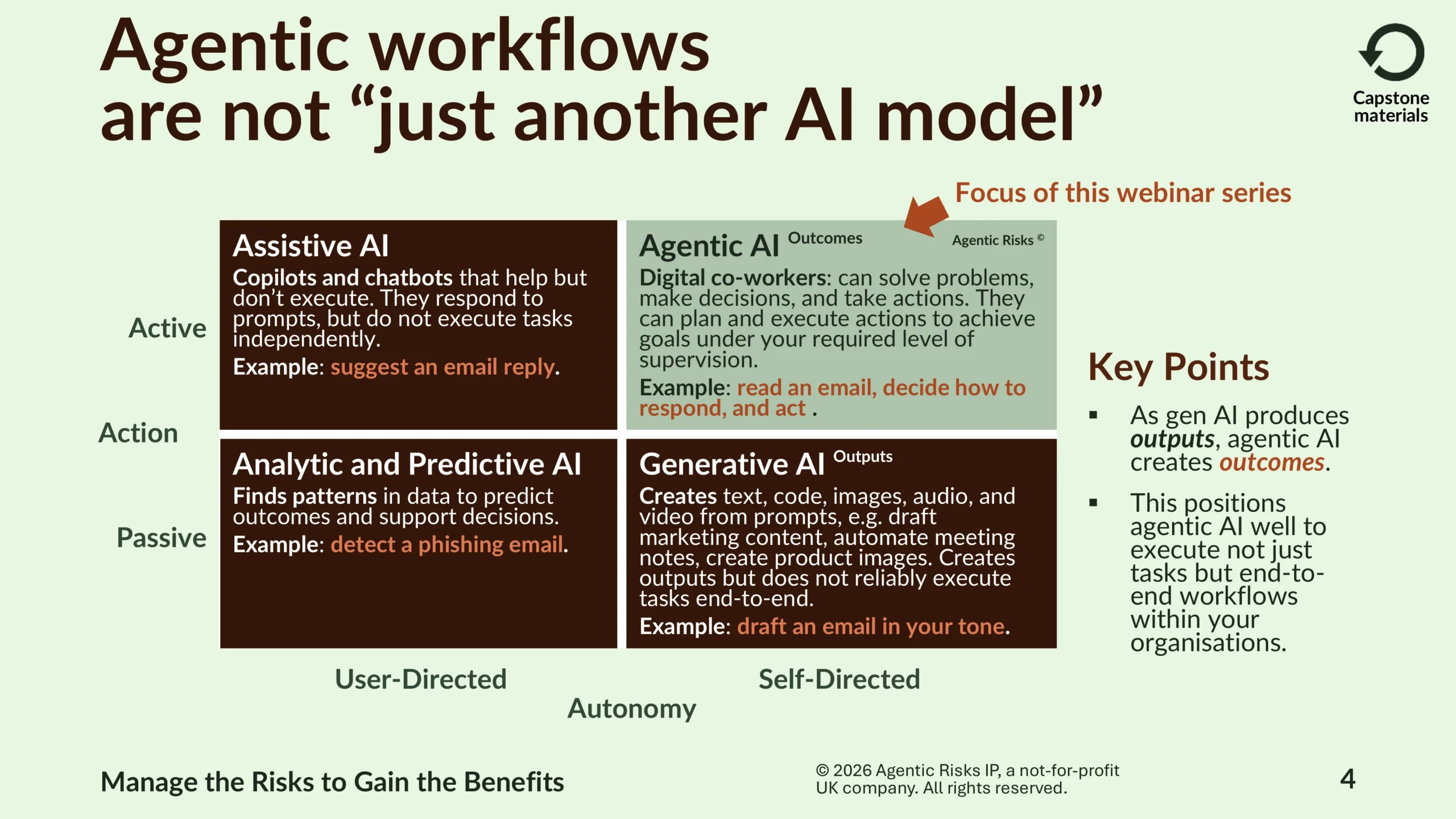

The main AI regulations and standards – ISO 42001, the NIST AI RMF, and the EU AI Act – were not designed for agentic AI and do not yet mention it. Even so, these documents make up the regulatory landscape for much of the world and, under the EU AI Act specifically, AI agents fall in scope through its broad definition of an AI system.

As a result, if you build an agent in-house for your own use and it affects EU citizens or operations, the EU AI Act will consider you both a provider and a deployer simultaneously. If you find yourself in this situation, we recommend that you first determine whether your agent meets the regulation’s definition of ‘high-risk.’

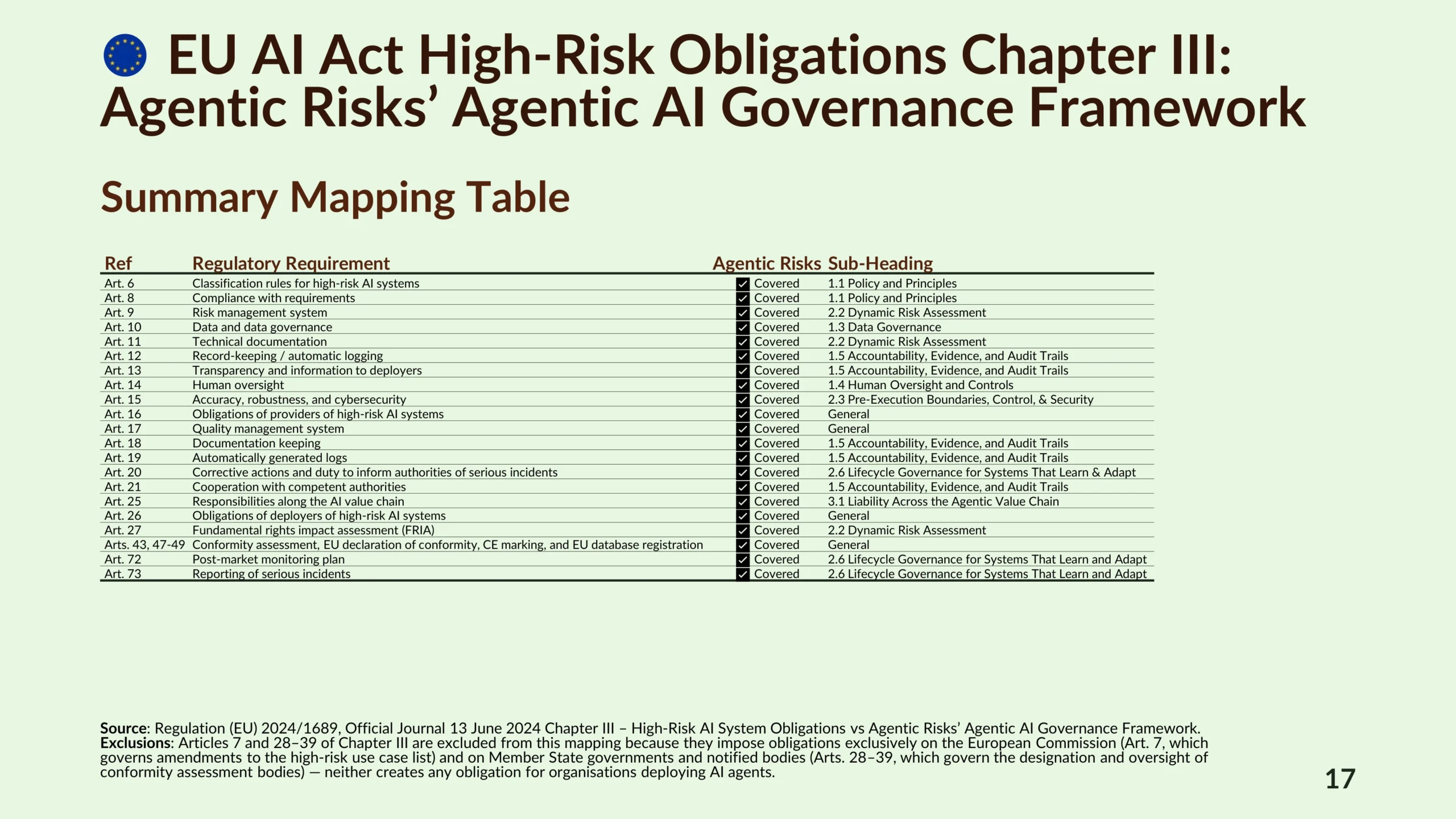

If so, you should focus on Chapter III of the Act, which specifies pre-deployment, ongoing operational, and one-time obligations for high-risk AI systems.

Unless and until a live proposal to push back the deadline becomes law, high-risk agents must be compliant by 2 August 2026.

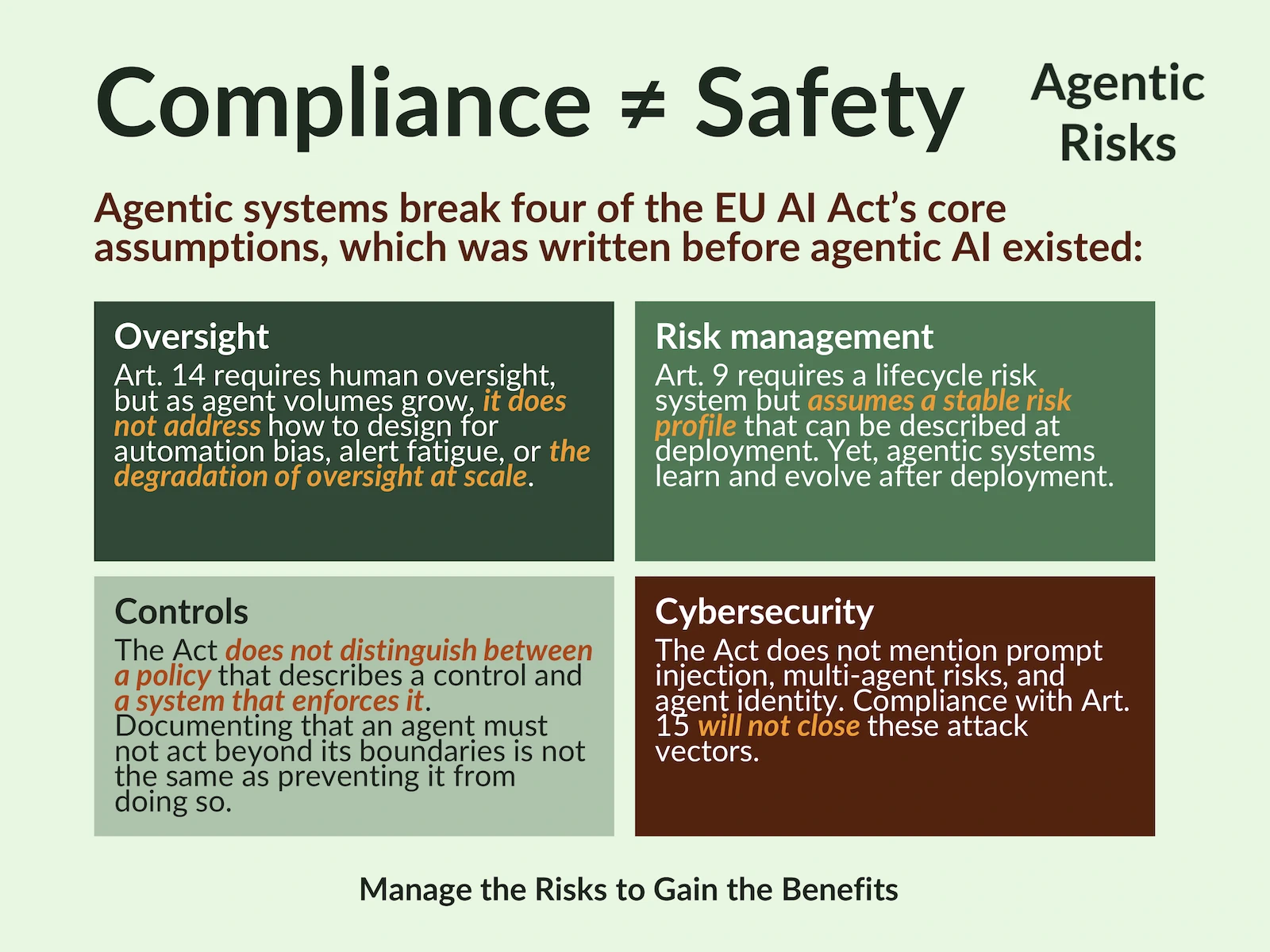

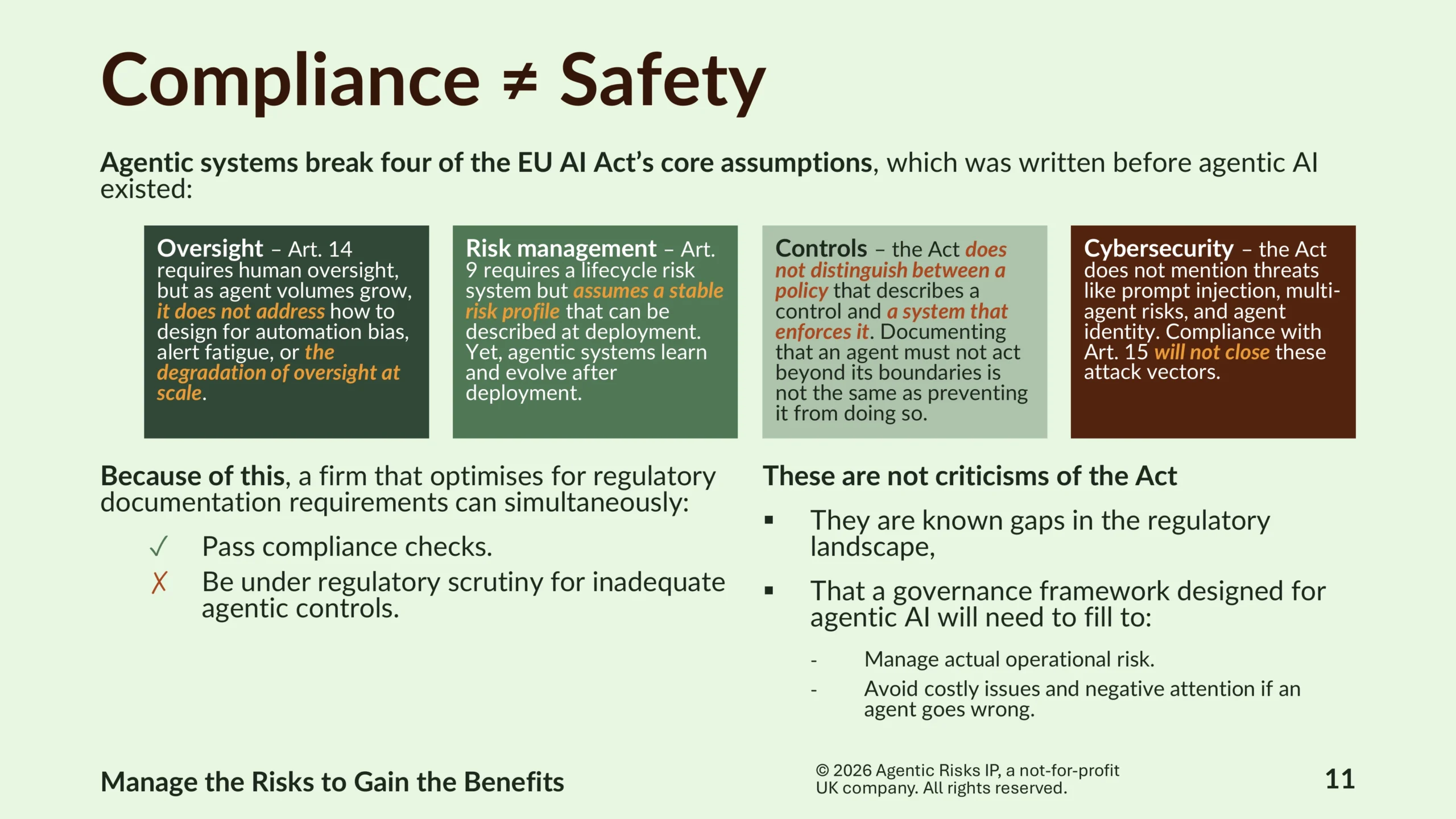

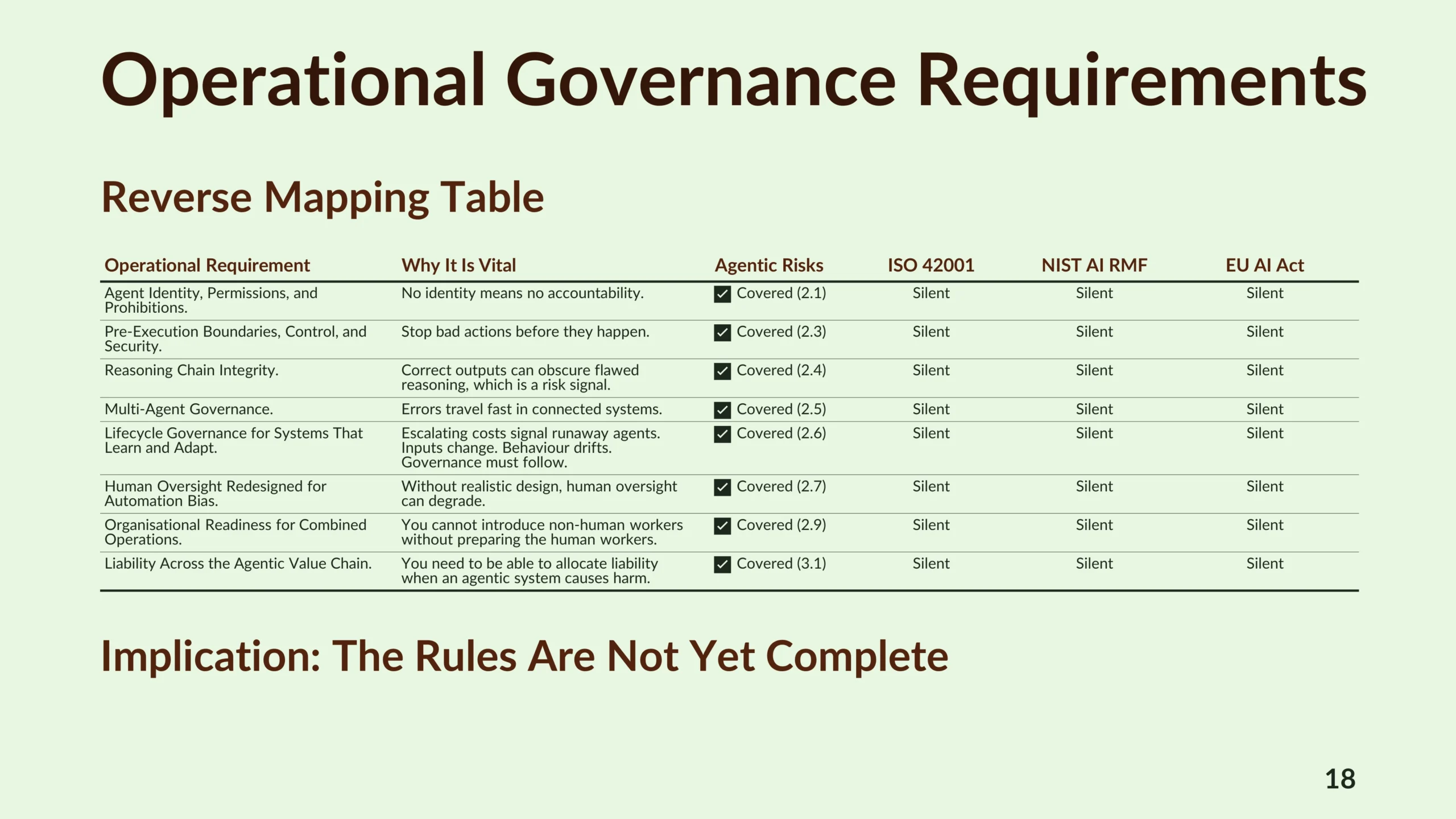

Understandably, compliance will be a priority for firms, but agentic systems break four of the EU AI Act’s core assumptions – on oversight, risk management, controls, and cybersecurity.

In practice, this means that a firm that optimises for regulatory requirements could simultaneously pass compliance checks and be under regulatory scrutiny for inadequate operational control of its agents.

Compliance, therefore, is not the same as the safety that governance brings, and closing the gap requires a governance approach that addresses the actual operational challenge agentic AI presents.

An Agentic AI Governance Framework

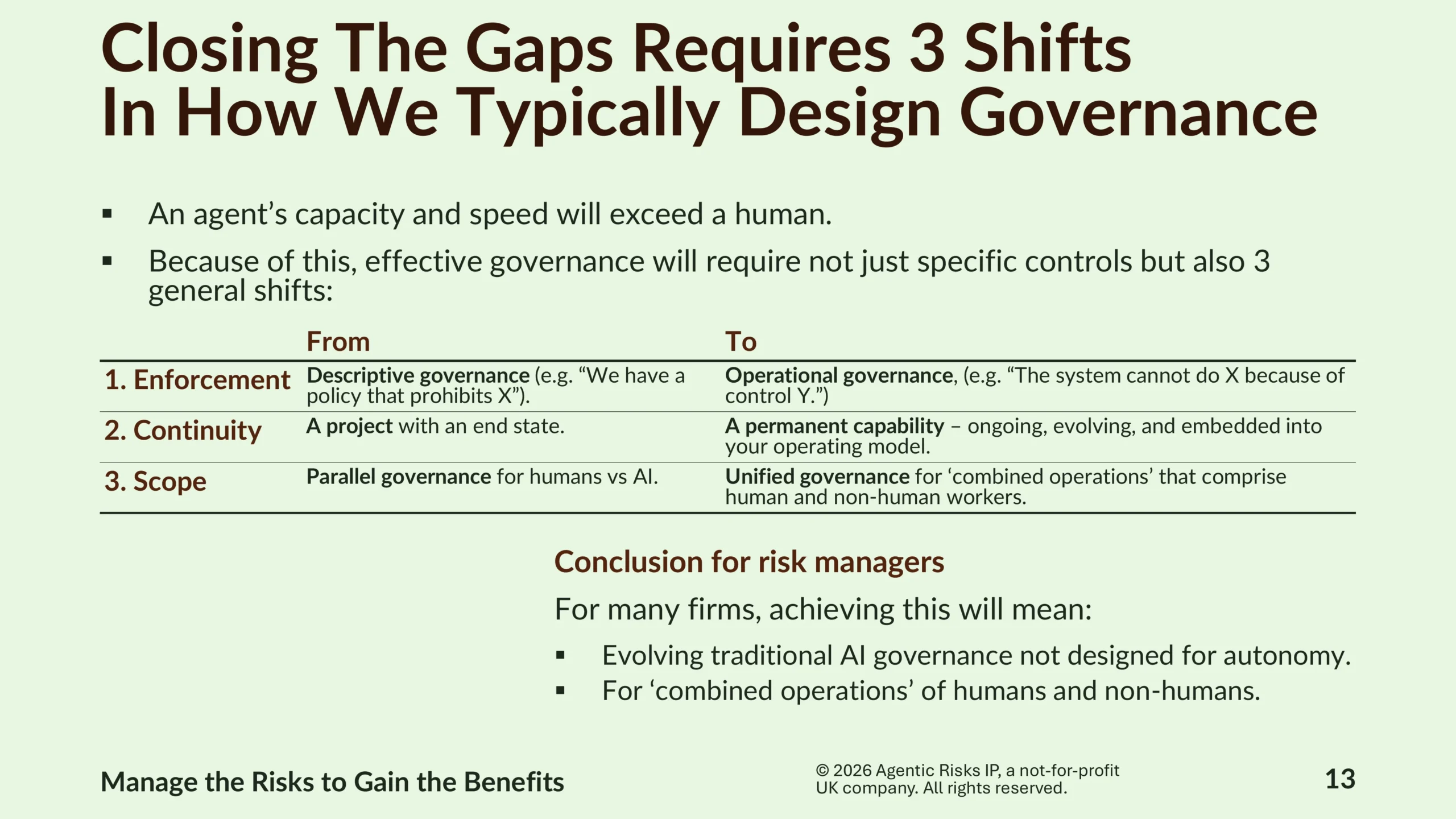

At Agentic Risks, we believe effective agentic AI governance requires three shifts:

- Enforcement – from descriptive to operational governance.

- Continuity – from a project with an end state to a permanent capability.

- Scope – from parallel governance for humans versus AI to unified governance for combined operations of human and non-human workers.

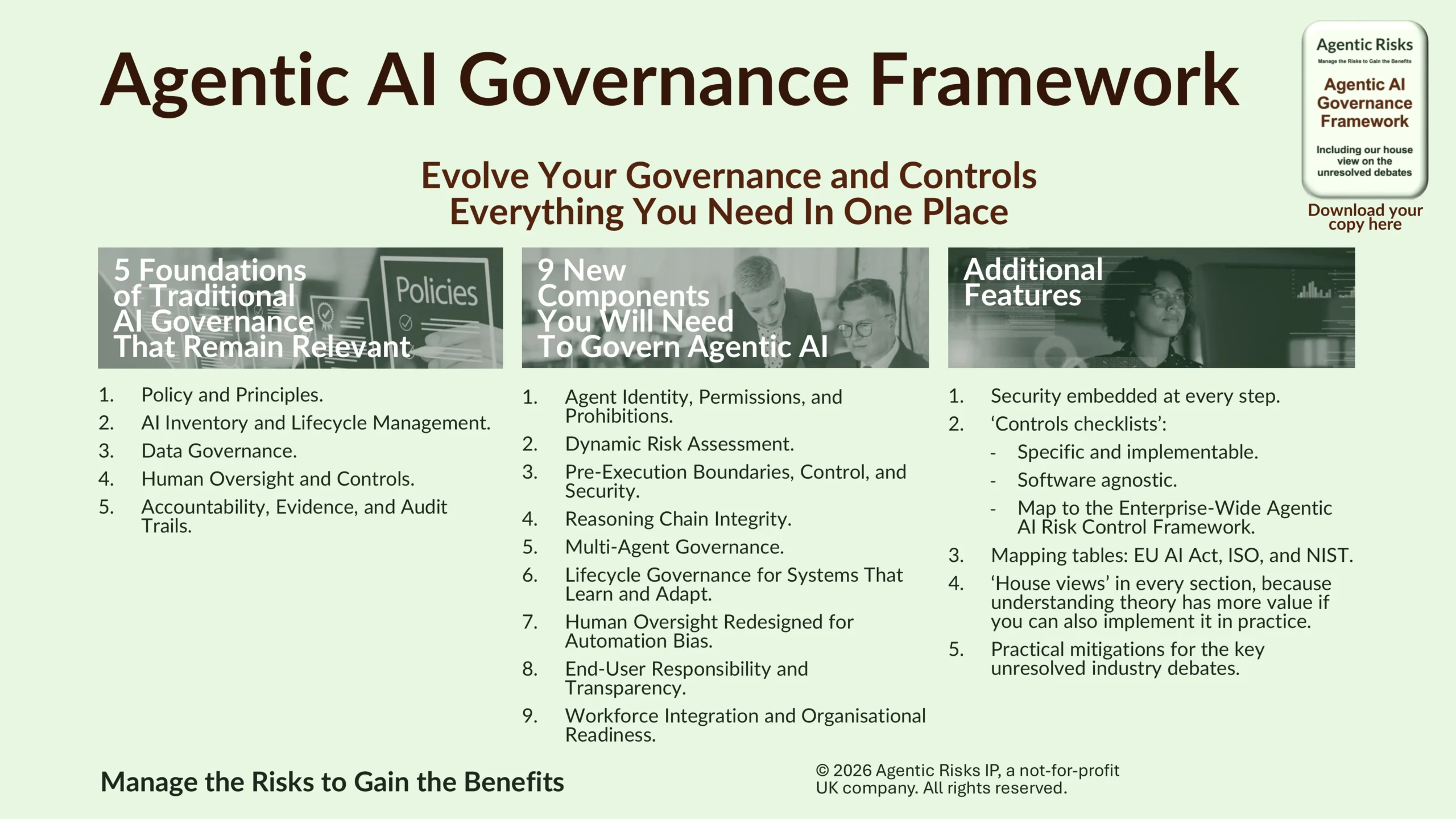

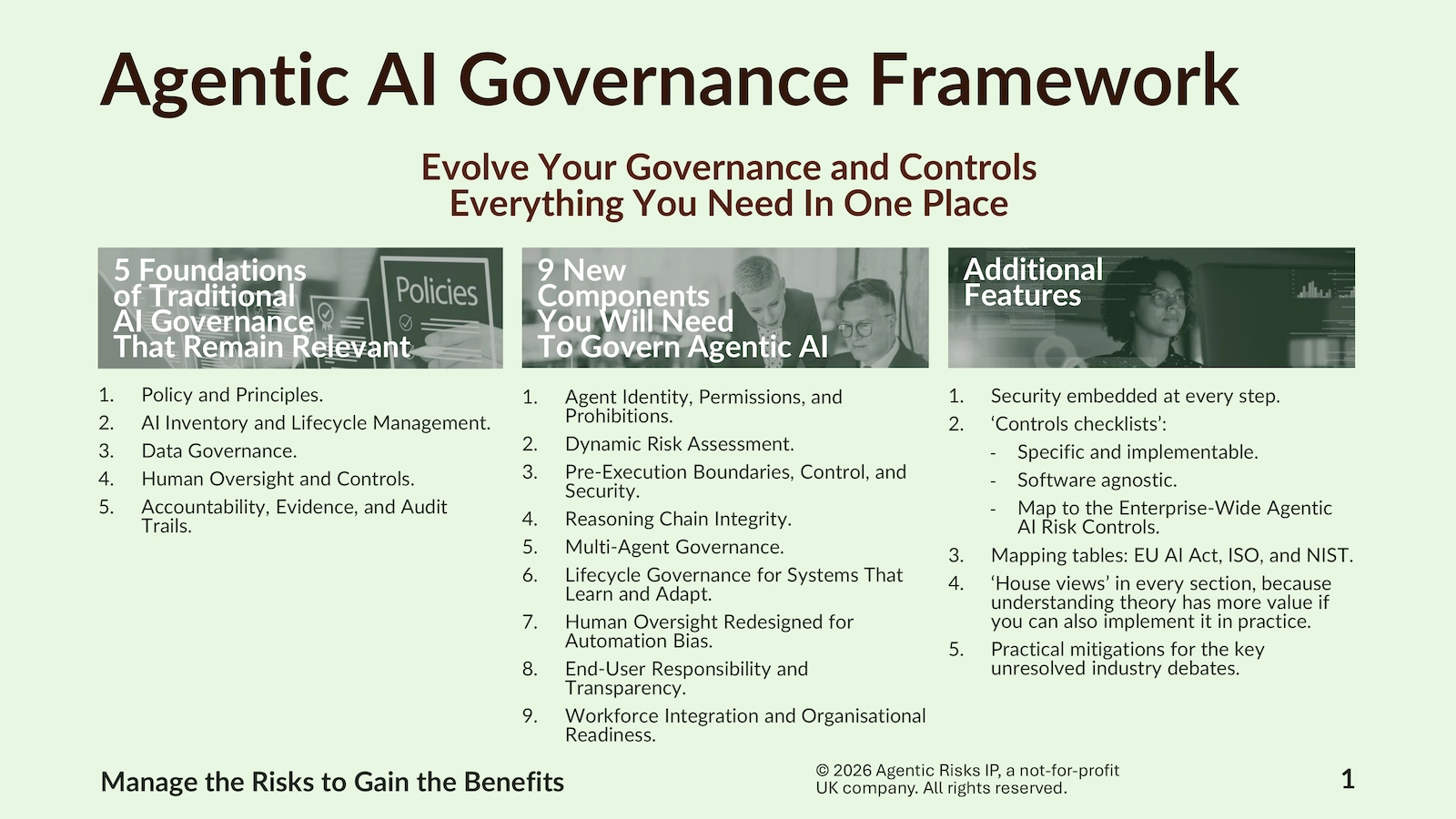

To help firms stay safe, our Agentic AI Governance Framework identifies and explains five foundations of traditional AI governance that remain relevant, nine new components required to govern agentic AI, and practical mitigations for key unresolved industry debates.

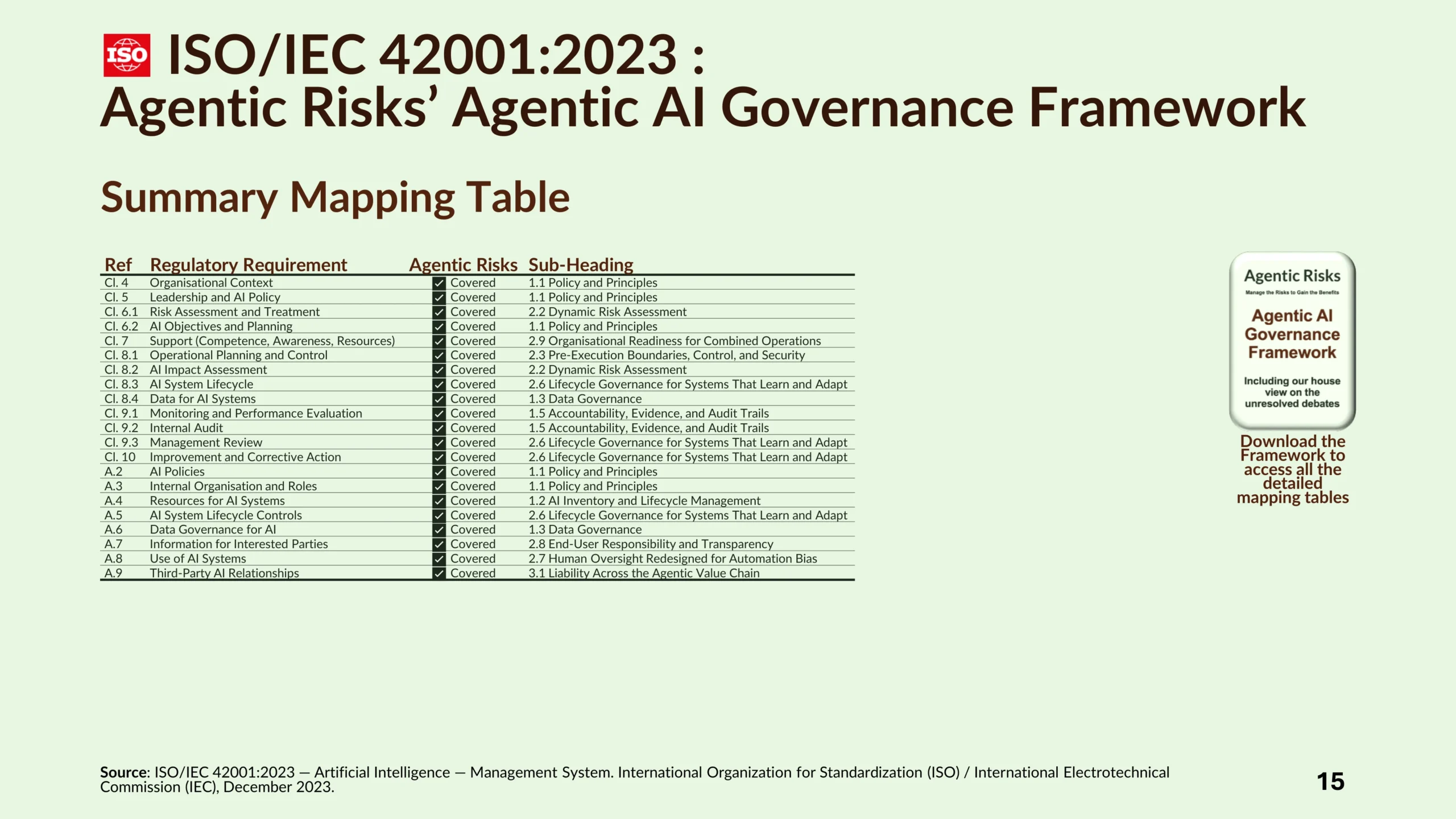

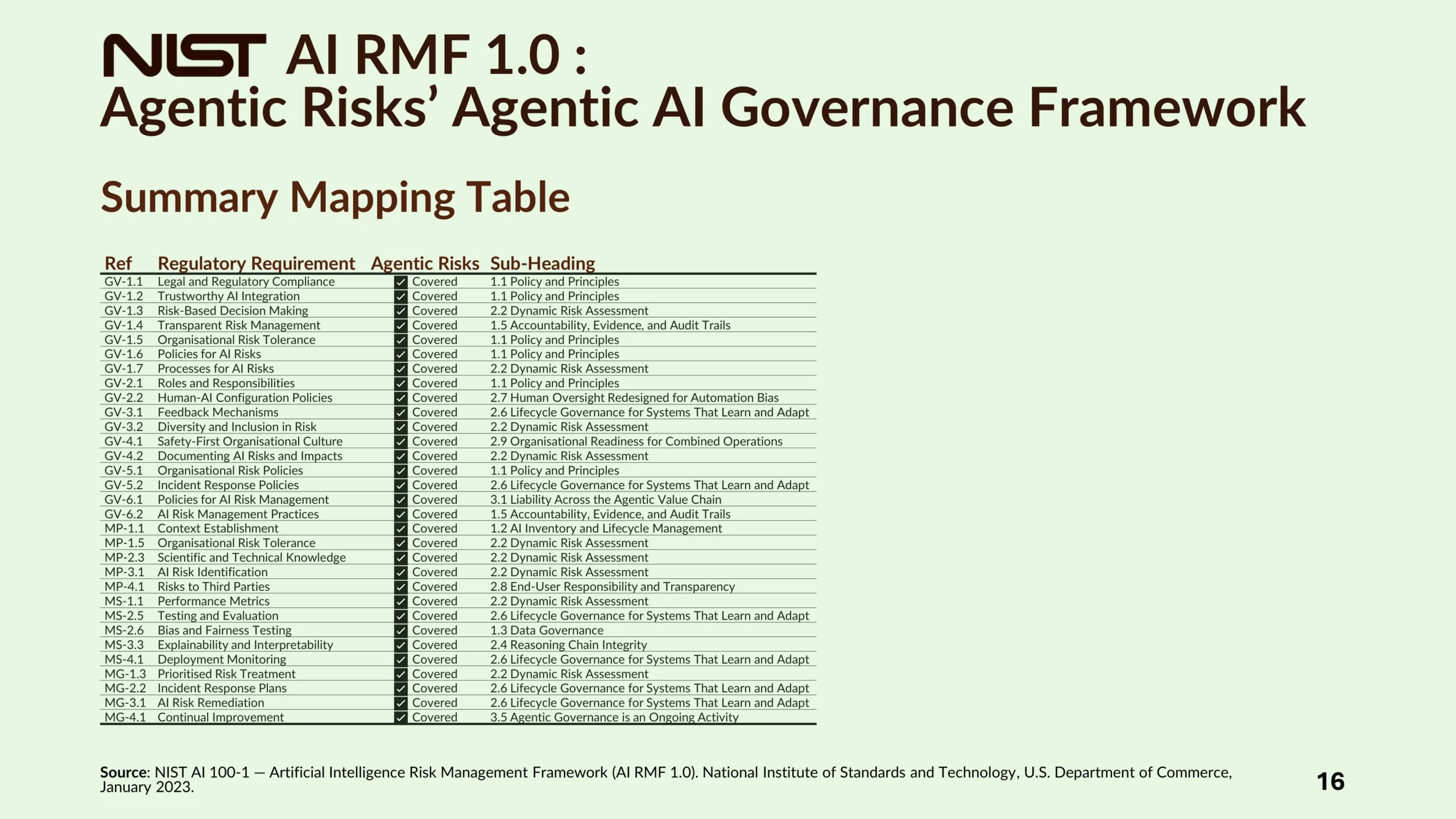

Additional features include security embedded at every step; ‘controls checklists’ that link to itemised, implementable, and relevant controls in the Enterprise-Wide Agentic AI Risk Controls framework; regulatory mapping tables that show how the Framework covers ISO 42001, the NIST AI RMF, and the EU AI Act; and ‘house views’ in every section, because understanding theory has more value if you can also implement it in practice.

For those preparing for an agentic controls audit, the mapping tables demonstrate the Framework’s comprehensive coverage of all three of the major standards and regulations.

As a result, the Framework represents the full extent of operational controls you will need for a high-risk agentic workflow.

But safety from the regulator ≠ safety. Notably, the mapping tables show how the Agentic AI Governance Framework covers eight vital operational requirements, on which the main standards and regulation are currently silent. These include agent identity, pre-execution boundaries, reasoning chain integrity, multi-agent governance, and liability across the agentic value chain.

The practical implication is that organisations need a plan for operational safety, because regulatory compliance alone will not keep you out of trouble with the regulator.

Governing Agentic AI In Practice

We expect firms to adopt tiered governance models, with access to agent-building tools scaling with each tier. For example, low-risk, internal-only, personal-productivity agents might operate under a ‘register and attest’ model; medium-risk agents (shared with colleagues, accessing business data) should undergo a review; and high-risk or externally facing agents should follow full pre-approval governance. This keeps the governance overhead proportionate to the potential for harm.
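The tiering logic described above can be sketched in a few lines of Python. This is a minimal illustration, not part of the Framework itself: the `AgentProfile` fields, the `Tier` names, and the rule that any single medium-risk attribute is enough to trigger a review are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    # Tier labels follow the three models named in the text.
    REGISTER_AND_ATTEST = "register and attest"
    REVIEW = "review"
    FULL_PRE_APPROVAL = "full pre-approval"


@dataclass(frozen=True)
class AgentProfile:
    # Hypothetical attributes chosen for illustration only.
    name: str
    high_risk: bool = False
    externally_facing: bool = False
    shared_with_colleagues: bool = False
    accesses_business_data: bool = False


def governance_tier(agent: AgentProfile) -> Tier:
    """Return the governance tier for an agent, checking the strictest tier first."""
    if agent.high_risk or agent.externally_facing:
        return Tier.FULL_PRE_APPROVAL
    # Assumption: either attribute alone makes the agent medium-risk.
    if agent.shared_with_colleagues or agent.accesses_business_data:
        return Tier.REVIEW
    return Tier.REGISTER_AND_ATTEST


if __name__ == "__main__":
    agents = [
        AgentProfile("personal-notes-helper"),
        AgentProfile("team-report-builder", shared_with_colleagues=True),
        AgentProfile("customer-chat-agent", externally_facing=True),
    ]
    for agent in agents:
        print(f"{agent.name}: {governance_tier(agent).value}")
```

Checking the strictest tier first means an agent that is both shared internally and externally facing always lands in full pre-approval, which keeps the overhead proportionate without letting a higher-risk agent slip into a lighter tier.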

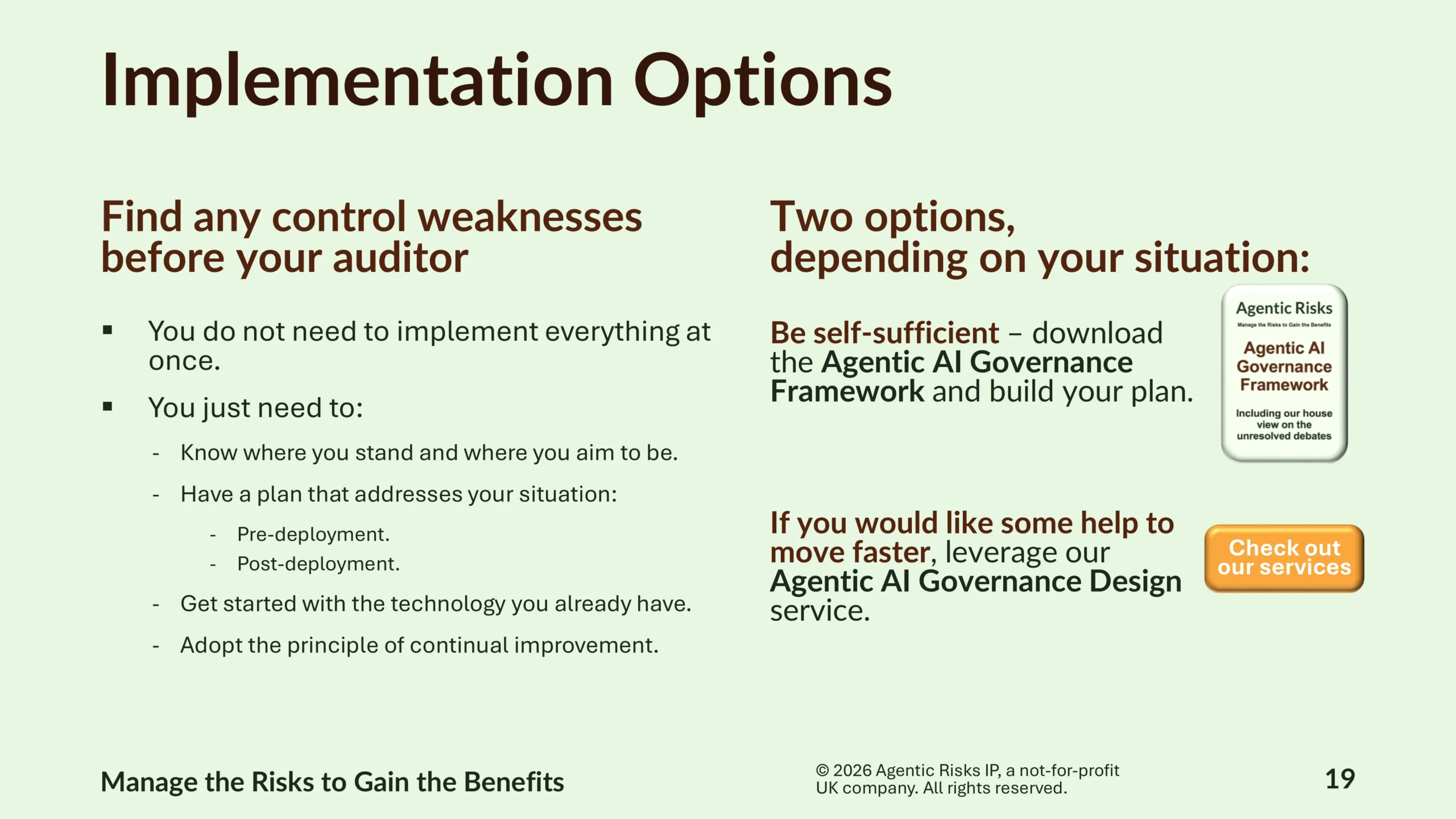

Depending on your circumstances, you may not need to implement everything at once, but you should know where you stand, have a plan that addresses your situation (e.g. pre- or post-deployment), get started with the technology you already have, and adopt the principle of continual improvement.

To get started, you can download the Agentic AI Governance Framework here. If you would like some help navigating the various factors to consider, our Agentic AI Governance Design service will create the customised specifications for embedding agentic controls into your organisation.

Agentic AI Risk Flags

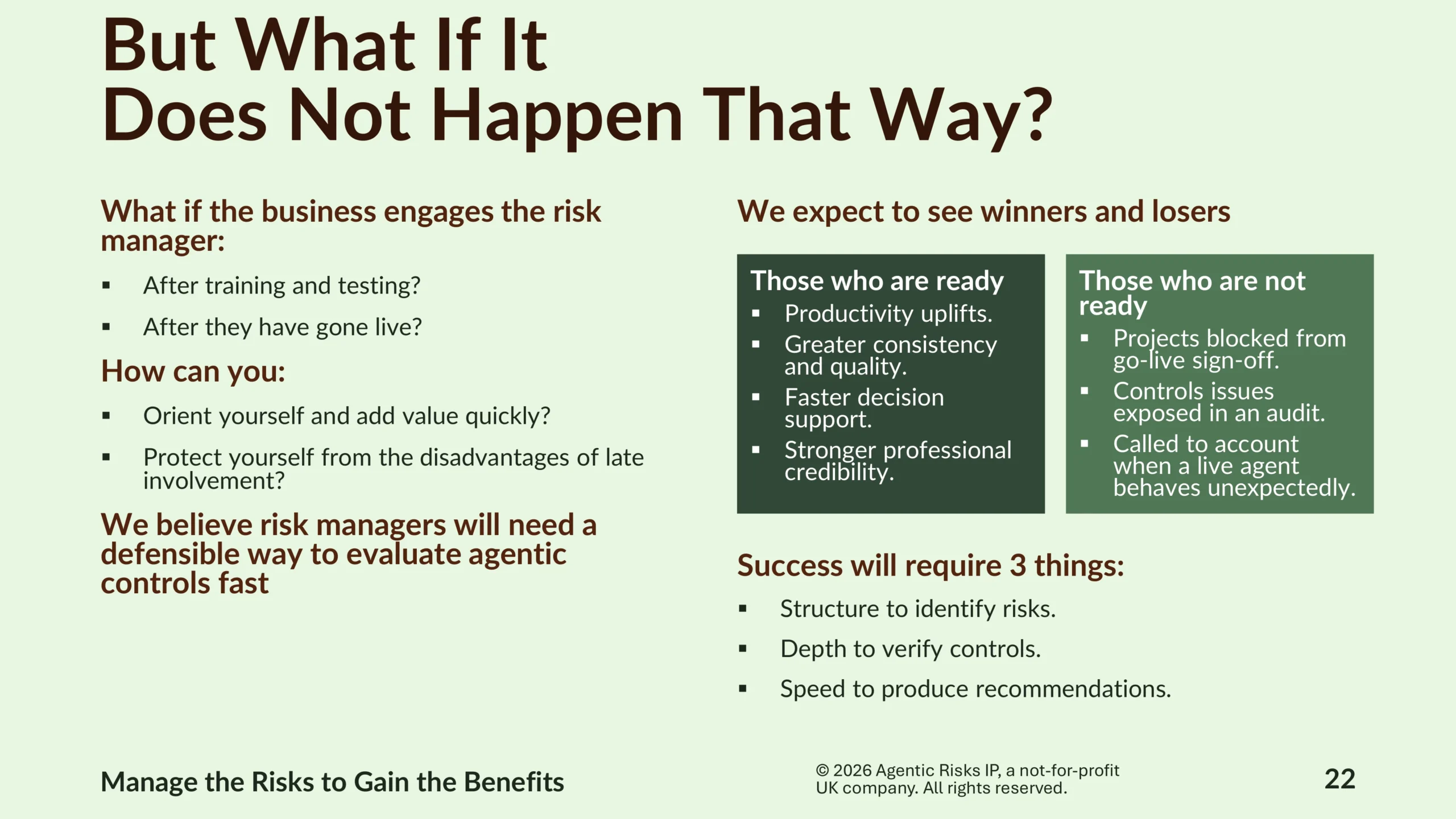

The Framework assumes you are governing agents from the design phase onward. But what if you already have agents in production?

Risk managers are sometimes engaged only after training, testing, or go-live – and with agentic AI, the window for influencing design has already closed by then.

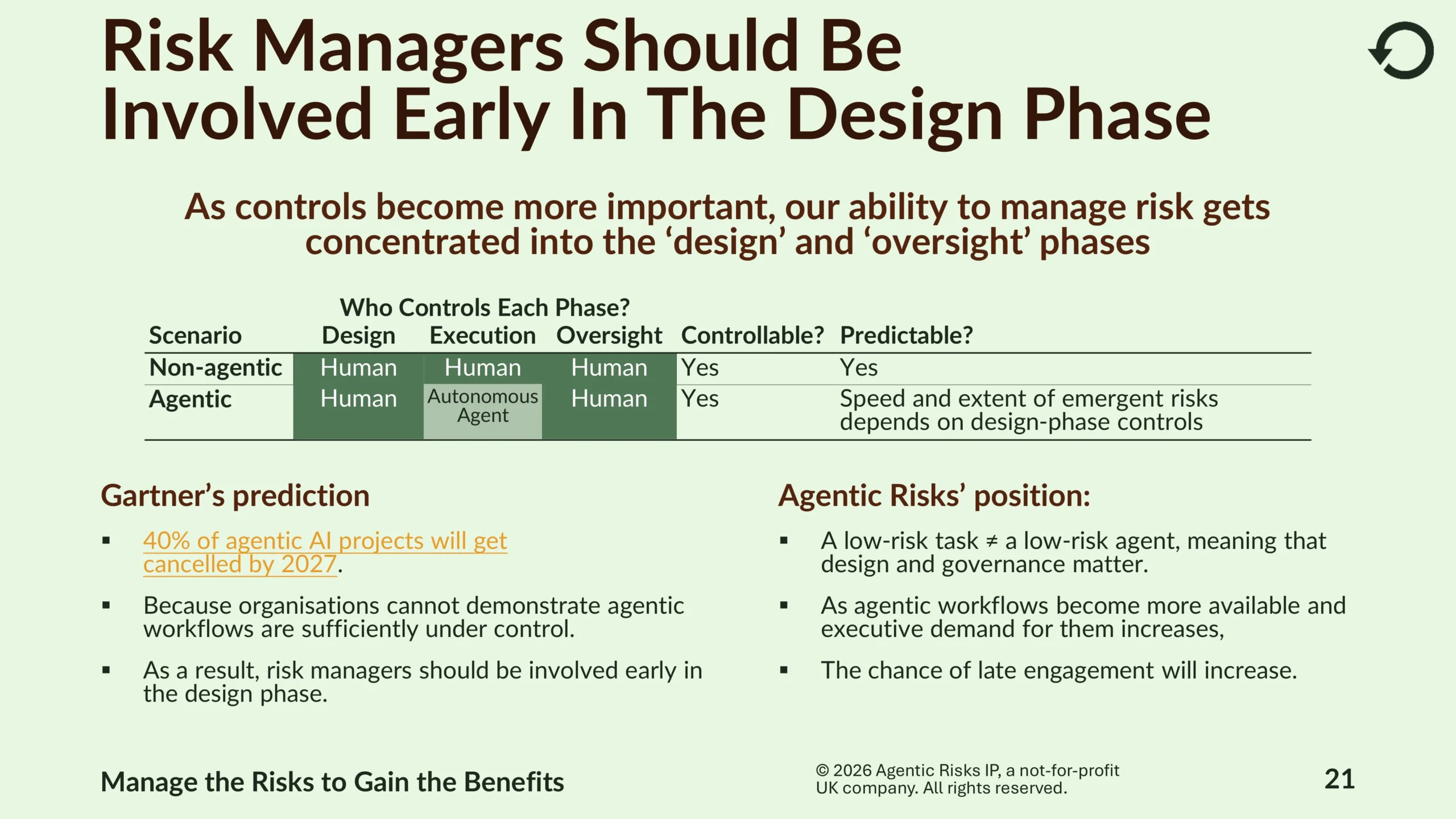

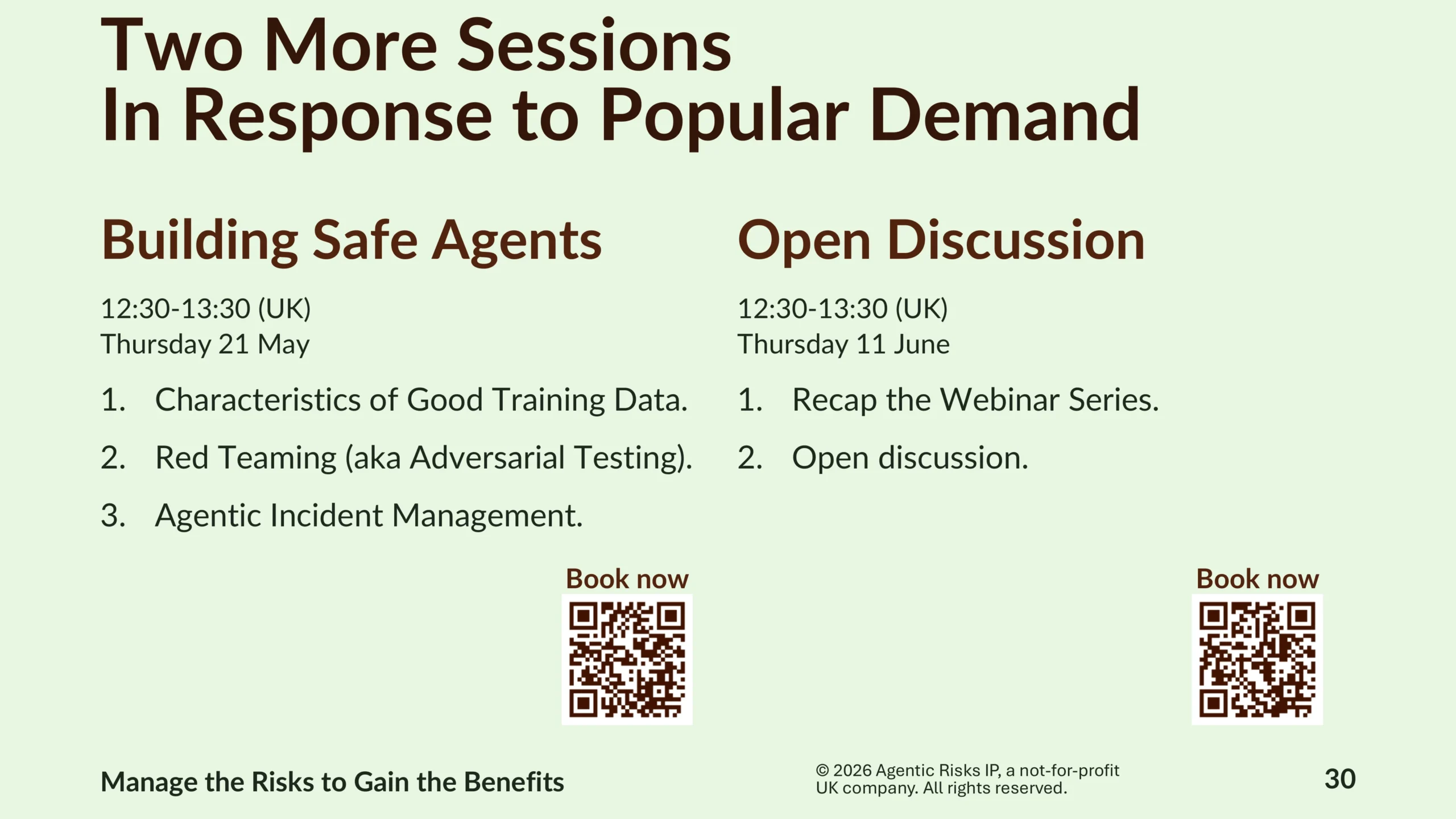

Indeed, Gartner predicts that more than 40% of agentic AI projects will be cancelled by the end of 2027, with inadequate risk controls among the reasons cited, which is why we propose that risk managers be involved early in the design phase.

Where agents are already in production, risk managers need a defensible way to identify and verify the controls that matter most.

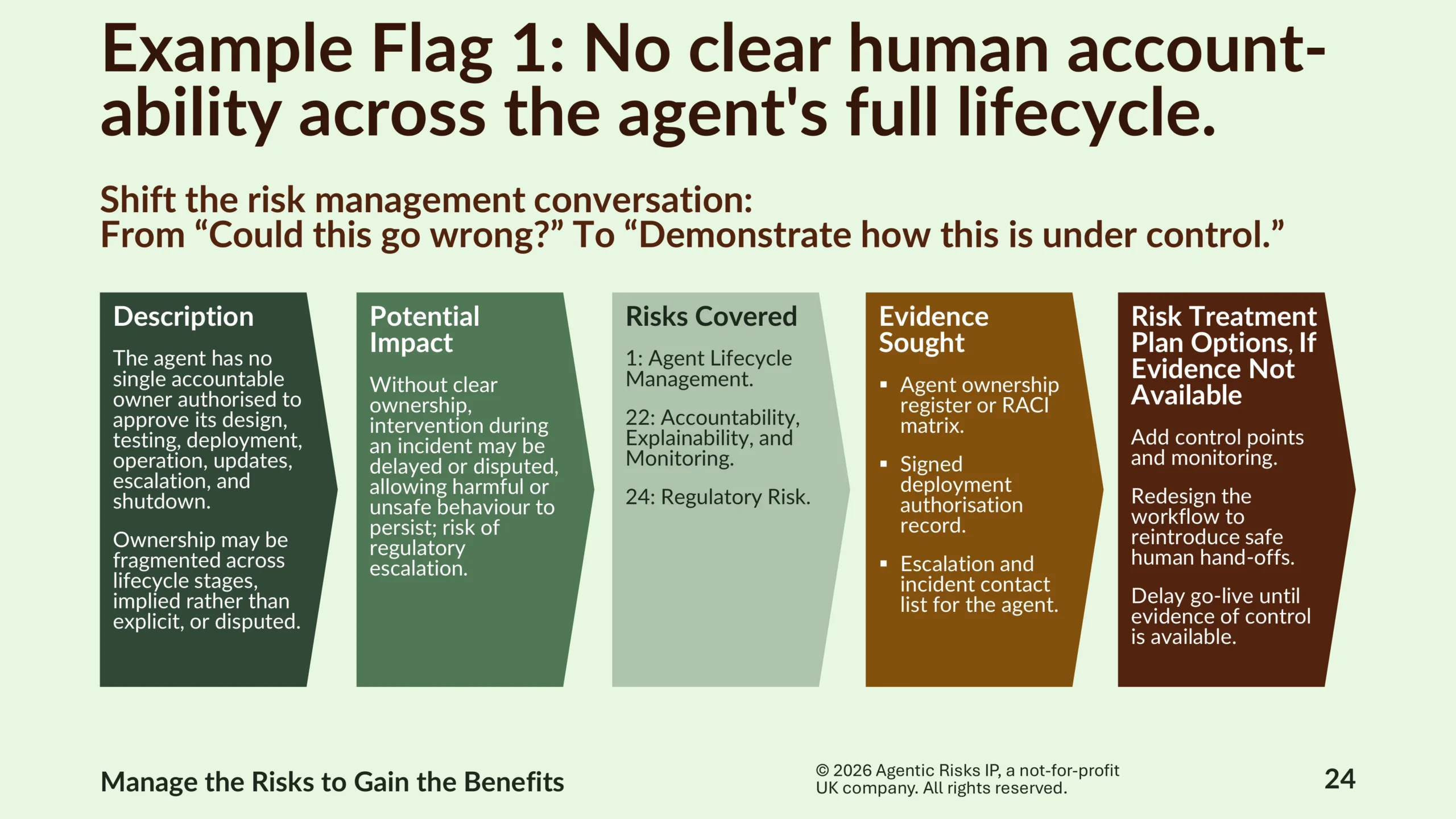

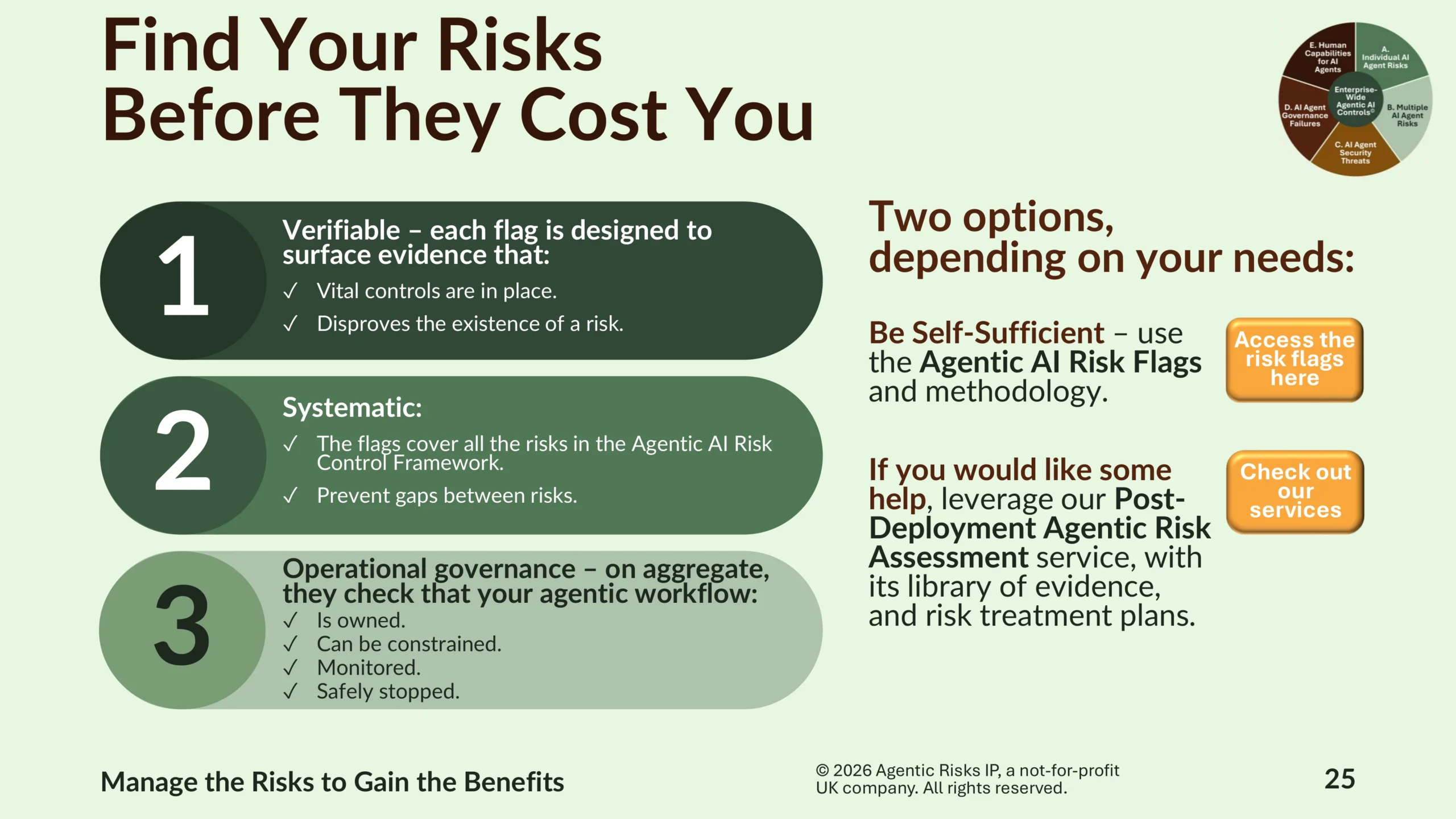

We designed the Agentic AI Risk Flags to deliver exactly that: 32 risk flags cover the five categories of agentic risk systematically and call for verifiable evidence that each risk does not exist. The principle is simple: if you cannot disprove a flag, you have a risk.
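The "cannot disprove it, so it is a risk" principle can be expressed as a simple default-open check. The sketch below is an assumption-laden illustration, not the actual Risk Flags: the `RiskFlag` structure, the flag IDs, and the example descriptions are hypothetical, and real evidence would need human verification rather than a non-empty list.

```python
from dataclasses import dataclass, field


@dataclass
class RiskFlag:
    # Hypothetical structure; the real flags are defined in the Risk Flags document.
    flag_id: str
    description: str
    evidence: list[str] = field(default_factory=list)
    disproved: bool = False


def open_risks(flags: list[RiskFlag]) -> list[RiskFlag]:
    """Return every flag that has not been disproved with supporting evidence.

    A flag marked as disproved but carrying no evidence still counts as a risk.
    """
    return [f for f in flags if not (f.disproved and f.evidence)]


if __name__ == "__main__":
    # Illustrative flags only, loosely based on the "owned" and "safely stopped" checks.
    flags = [
        RiskFlag("F-01", "Agent has no named owner",
                 evidence=["ownership register entry"], disproved=True),
        RiskFlag("F-02", "Agent cannot be safely stopped"),
        RiskFlag("F-03", "Agent actions are not monitored", disproved=True),
    ]
    for flag in open_risks(flags):
        print(f"{flag.flag_id}: {flag.description}")
```

Every flag starts as an open risk, and only evidence closes it. In this example F-03 stays open because the claim that it is disproved has nothing to back it up.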

Taken together, the flags shift the risk management conversation from “could this go wrong?” to “demonstrate how this is under control.” Crucially, they check that your agentic workflow is owned and can be constrained, monitored, and safely stopped. For this reason, they sit at the heart of our Post-Deployment Agentic Risk Assessment service – a service few providers offer.

Working with Agentic Risks

Three themes recur across the webinar, and we have designed our services around them:

- That compliance is not the same as safety.

- That agentic governance is a continuous capability rather than a project.

- That the window for influencing agent behaviour closes quickly once systems are in production.

We offer a range of agentic AI governance services, designed for regulated firms, that take you from first awareness of agentic AI – or from an estate that has grown faster than its governance – to a fully governed, audit-ready, and continuously improving agentic transformation programme at whatever pace your situation requires.

Two services are worth drawing to your attention specifically, because they correspond to the two positions firms can easily find themselves in today.

If you are building your governance posture from the ground up, our Agentic AI Governance Design service translates the Framework into customised specifications for your organisation – the policy architecture, the tiered governance model, the controls checklists, and the regulatory mapping – so that you can demonstrate to a supervisor not just that you have a policy, but that you decided which controls were appropriate and why.

If you have agents already in production and need to know where you stand, our Post-Deployment Agentic Risk Assessment service applies the 32 risk flags systematically across your existing estate. It is specifically designed to surface agents operating at a higher risk level than anyone in the organisation may have appreciated – sometimes agents that have been running for months – and to produce a defensible, evidence-led position on each one. Where a flag cannot be disproved, you have a prioritised list of what to fix.

Both services are available alongside our self-serve materials: you can download the Agentic AI Governance Framework and the Agentic AI Risk Flags and work with them directly, or use our services if you would like some help or want to move faster.

Best wishes with your agentic transformation – and if you have any questions, we look forward to hearing from you.

Frequently Asked Questions

What is agentic AI governance?

Agentic AI governance is the operational discipline of keeping AI agents – systems that plan, reason, and act autonomously – owned, constrained, monitored, and safely stoppable throughout their lifecycle. It extends traditional AI governance, which focuses on model outputs, to cover the actions agents take: pre-execution boundaries, reasoning chain integrity, agent identity, multi-agent coordination, and liability across the agentic value chain. For regulated firms, effective agentic AI governance goes beyond EU AI Act compliance to address the operational realities that the regulation does not yet name.